Within science and engineering, the word ‘quantum’ may spark associations with speed and capability, referencing a superior computer that can perform tasks a classical computer cannot. In cyber security, some may recognize ‘quantum’ in relation to cryptography or, more recently, as the name of a new ransomware group, which achieved network-wide encryption a mere four hours after an initial infection.

Although this group now has a reputation for carrying out fast and efficient attacks, speed is not their only tactic. In August 2022, Darktrace detected a Quantum Ransomware incident in which attackers remained in the victim’s network for almost a month after the initial signs of infection before detonating ransomware. This was in stark contrast to previously reported attacks, demonstrating that as motives change, so do threat actors’ strategies.

The Quantum Group

Quantum was first identified in August 2021 as the latest of several rebrands of MountLocker ransomware [1]. As part of this rebrand, the extension ‘.quantum’ is appended to filenames that are encrypted and the associated ransom notes are named ‘README_TO_DECRYPT.html’ [2].

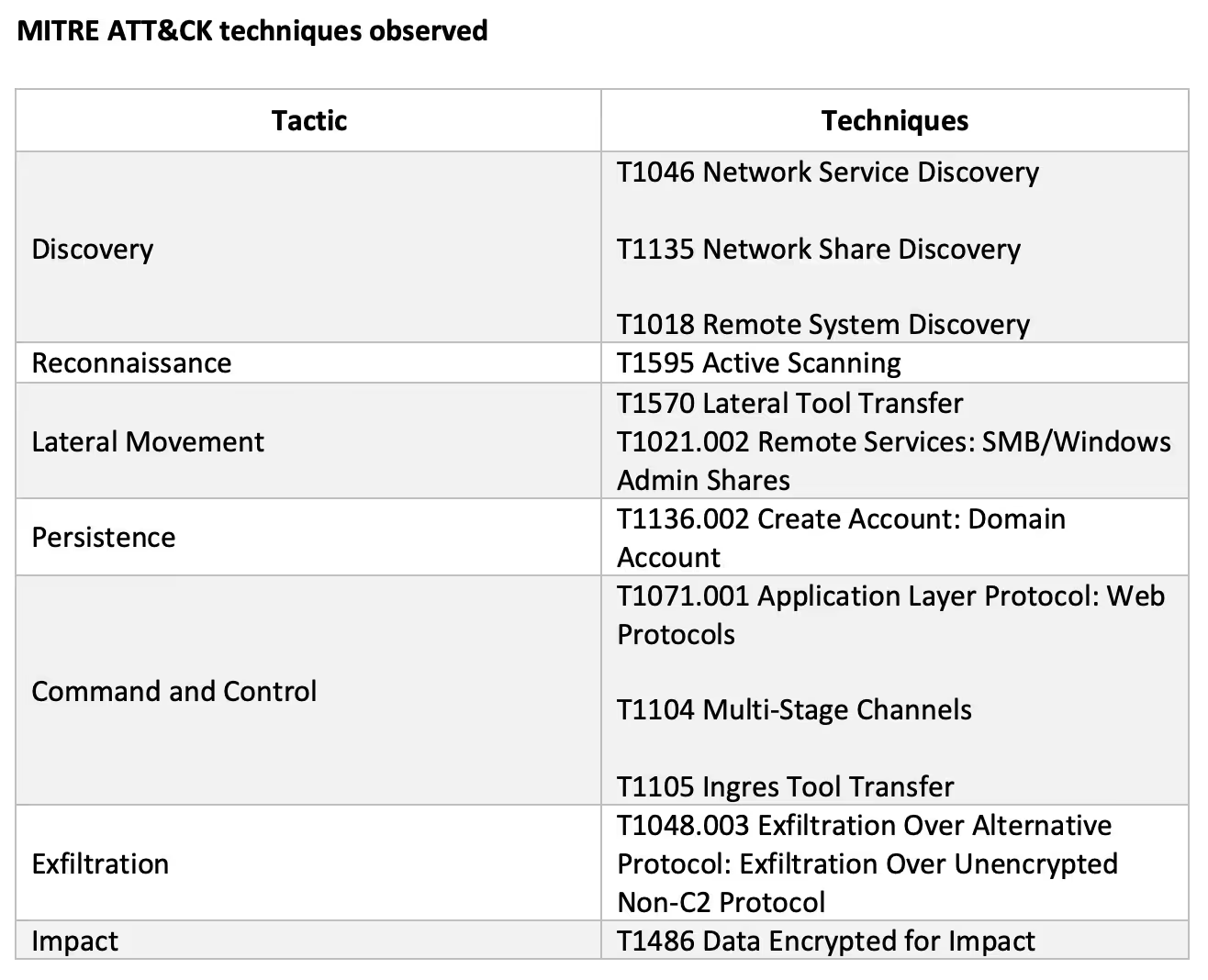

Since April 2022, media coverage of this group has increased following a DFIR report detailing an attack that progressed from initial access to domain-wide ransomware within four hours [3]. To put this into perspective, the global median dwell time for ransomware in 2020 and 2021 was 5 days [4]. In the case of Quantum, threat actors gained direct keyboard access to devices merely 2 hours after initial infection. The ransomware was staged on the domain controller around an hour and a half later, and executed 12 minutes after that.
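As a quick sanity check on these figures, the stage durations quoted above can be added up and compared with the 5-day median. This is only a worked sum of the approximate numbers in the text; the variable names are illustrative.

```python
from datetime import timedelta

# Approximate stage durations as quoted in the text.
hands_on_keyboard = timedelta(hours=2)          # initial infection -> keyboard access
staging_on_dc = timedelta(hours=1, minutes=30)  # "around an hour and a half later"
execution = timedelta(minutes=12)               # staged -> executed

time_to_ransomware = hands_on_keyboard + staging_on_dc + execution
median_dwell = timedelta(days=5)

print(time_to_ransomware)                          # 3:42:00
print(round(median_dwell / time_to_ransomware, 1)) # about 32 times shorter
```

The total of 3 hours 42 minutes is consistent with the "within four hours" figure reported for that attack.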

Quantum’s behaviour bears similarities to other groups, possibly due to their history and recruitment. Several members of the disbanded Conti ransomware group are reported to have joined the Quantum and BumbleBee operations. Security researchers have also identified similarities in the payloads and C2 infrastructure used by these groups [5 & 6]. Notably, these are the IcedID initial payload and Cobalt Strike C2 beacon used in this attack. Darktrace has also observed and prevented IcedID and Cobalt Strike activity from BumbleBee across several customer environments.

The Attack

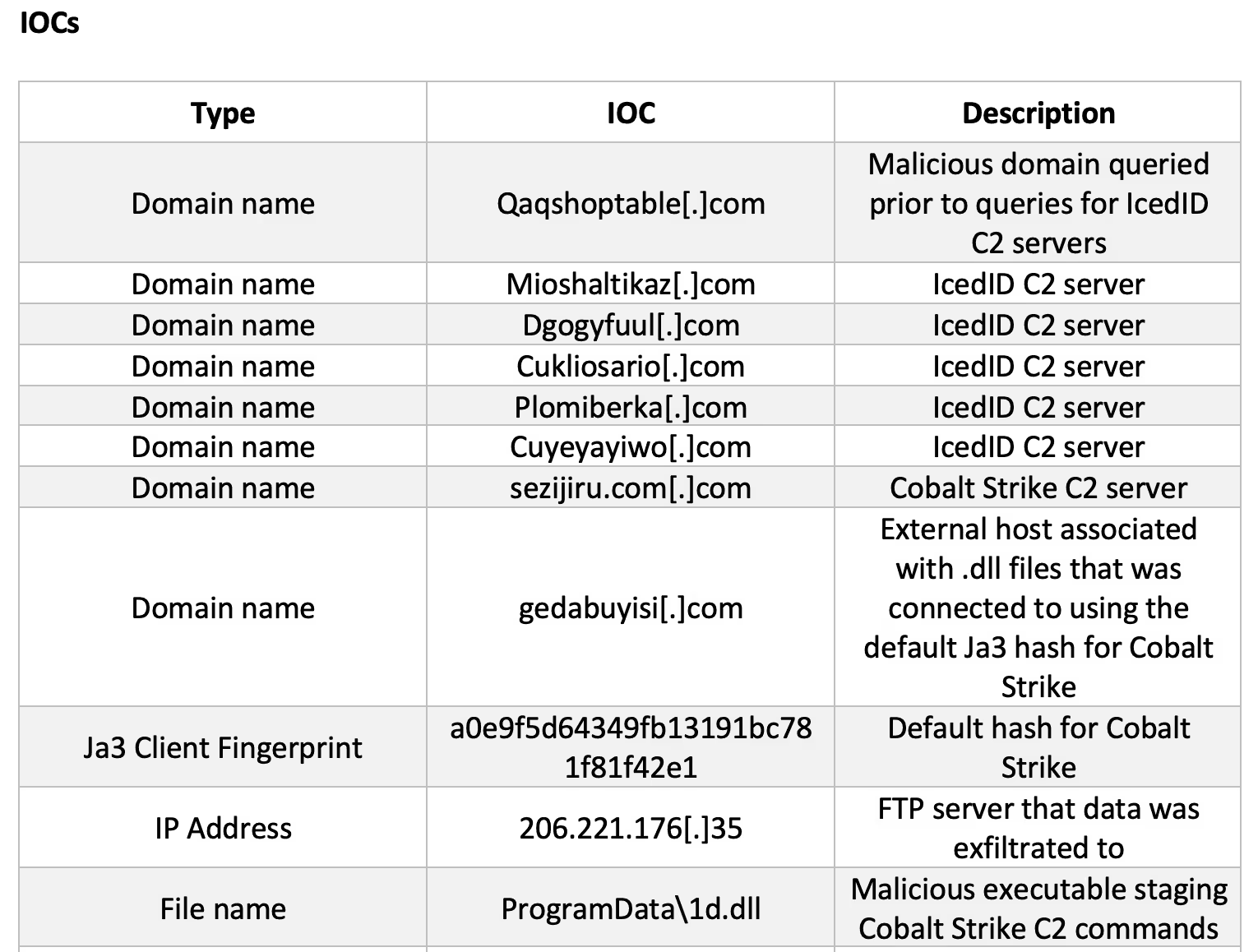

From 11th July 2022, a device suspected to be patient zero made repeated DNS queries for external hosts that appear to be associated with IcedID C2 traffic [7 & 8]. In several reported cases [9 & 10], this banking trojan is delivered through a phishing email containing a malicious attachment that loads an IcedID DLL. As Darktrace was not deployed in the prospect’s email environment, there was no visibility of the initial access vector; however, an example of a phishing campaign containing this payload is presented below. It is also possible that the device was already infected prior to joining the network.

It was not until 22nd July that activity was seen which indicated the attack had progressed to the next stage of the kill chain. This contrasts with previously reported attacks, in which the progression to Cobalt Strike C2 beaconing, reconnaissance and lateral movement occurred within 2 hours of the initial infection [12 & 13]. In this case, patient zero initiated numerous unusual connections to other internal devices using a compromised account. These connections were indicative of reconnaissance using built-in Windows utilities:

· DNS queries for hostnames in the network

· SMB writes to IPC$ shares of those hostnames queried, binding to the srvsvc named pipe to enumerate things such as SMB shares and services on a device, client access permissions on network shares and users logged in to a remote session

· DCE-RPC connections to the endpoint mapper service, which enables identification of the ports assigned to a particular RPC service

These connections were initiated using an existing credential on the device and, like the dwell time, differed from previously reported Quantum group attacks, where discovery actions were spawned and performed automatically by the IcedID process [14]. Figure 3 depicts how Darktrace detected that this activity deviated from the device’s normal behaviour.
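The reconnaissance pattern listed above (srvsvc binds over IPC$ and endpoint mapper lookups against many hosts) can be illustrated with a simple fan-out count. This is a minimal sketch, not Darktrace's detection method, which is based on deviation from learned behaviour. The event record fields (`src`, `dst`, `proto`, `share`, `pipe`, `service`), the IP addresses and the threshold are all hypothetical.

```python
from collections import defaultdict

def recon_fanout(events, min_hosts=3):
    """Return sources that probed at least `min_hosts` distinct internal hosts
    via srvsvc over IPC$ or via the DCE-RPC endpoint mapper."""
    probed = defaultdict(set)
    for e in events:
        is_srvsvc = (e.get("proto") == "smb" and e.get("share") == "IPC$"
                     and e.get("pipe") == "srvsvc")
        is_epmapper = e.get("proto") == "dcerpc" and e.get("service") == "epmapper"
        if is_srvsvc or is_epmapper:
            probed[e["src"]].add(e["dst"])
    return {src: sorted(dsts) for src, dsts in probed.items() if len(dsts) >= min_hosts}

# Hypothetical connection records for illustration only.
events = [
    {"src": "10.0.0.5", "dst": "10.0.0.10", "proto": "smb", "share": "IPC$", "pipe": "srvsvc"},
    {"src": "10.0.0.5", "dst": "10.0.0.11", "proto": "smb", "share": "IPC$", "pipe": "srvsvc"},
    {"src": "10.0.0.5", "dst": "10.0.0.12", "proto": "dcerpc", "service": "epmapper"},
    {"src": "10.0.0.7", "dst": "10.0.0.10", "proto": "smb", "share": "C$"},
]
print(recon_fanout(events))
```

A fixed threshold like this would be noisy on real networks, where administrative tools legitimately enumerate shares; comparing against each device's usual behaviour avoids that.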

Four days later, on the 26th of July, patient zero performed SMB writes of DLL and MSI executables to the C$ shares of internal devices, including domain controllers, using a privileged credential not previously seen on the patient zero device. The deviation from normal behaviour that this represents is also displayed in Figure 3. Throughout this activity, patient zero made DNS queries for the external Cobalt Strike C2 server shown in Figure 4. Cobalt Strike has often been seen as a secondary payload delivered via IcedID, due to IcedID’s ability to evade detection and deploy large-scale campaigns [15]. It is likely that reconnaissance and lateral movement were performed under instructions received from the Cobalt Strike C2 server.

The SMB writes to domain controllers and usage of a new account suggest that by this stage, the attacker had achieved domain dominance. The attacker also appeared to have had hands-on access to the network via a console; the repetition of the paths ‘programdata\v1.dll’ and ‘ProgramData\v1.dll’, in lower and title case respectively, suggests they were entered manually.
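The case-variation observation can be sketched as a small grouping step: paths that are identical when compared case-insensitively, but were written with different casing, are collected together. The two ‘v1.dll’ paths come from the text; the third path and the function name are hypothetical, added only to show a non-matching entry.

```python
from collections import defaultdict

def case_variants(paths):
    """Group paths that differ only by letter case and return groups with >1 spelling."""
    groups = defaultdict(set)
    for p in paths:
        groups[p.lower()].add(p)
    return {key: sorted(spellings) for key, spellings in groups.items() if len(spellings) > 1}

observed = [
    r"programdata\v1.dll",
    r"ProgramData\v1.dll",
    r"Windows\Temp\setup.msi",  # hypothetical path, not from the incident
]
print(case_variants(observed))
```

Automated tooling tends to emit one consistent spelling, so multiple spellings of the same path are a weak hint of manual entry rather than proof of it.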

These DLL files likely contained a copy of the malware that injects into legitimate processes such as winlogon, to perform commands that call out to C2 servers [16]. Shortly after the file transfers, the affected domain controllers were also seen beaconing to external endpoints (‘sezijiru[.]com’ and ‘gedabuyisi[.]com’) that OSINT tools have associated with these DLL files [17 & 18]. Moreover, these SSL connections were made using a default client fingerprint for Cobalt Strike [19], which is consistent with the initial delivery method. To illustrate the beaconing nature of these connections, Figure 5 displays the 4.3 million daily SSL connections to one of the C2 servers during the attack. The 100,000 most recent connections were initiated by 11 unique source IP addresses alone.
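One common way to illustrate the "beaconing nature" of such connections is to measure how regular the gaps between them are. The sketch below computes the coefficient of variation of inter-arrival times, where values near zero indicate machine-like regularity. It is an illustrative heuristic under assumed inputs (timestamps in seconds, invented for the example), not the method Darktrace uses, and real beacons often add jitter.

```python
from statistics import mean, pstdev

def interval_cv(timestamps):
    """Coefficient of variation of the gaps between connection times.
    Returns None when there are too few connections to judge."""
    ts = sorted(timestamps)
    gaps = [b - a for a, b in zip(ts, ts[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return None
    return pstdev(gaps) / mean(gaps)

# Hypothetical connection times in seconds.
beacon_like = [0, 60, 121, 180, 241, 300]  # roughly one connection per minute
human_like = [0, 5, 200, 210, 900, 905]    # bursty, irregular browsing

print(interval_cv(beacon_like))  # small value: regular intervals
print(interval_cv(human_like))   # large value: irregular intervals
```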

Shortly after the writes, the attack progressed to the penultimate stage. The next day, on the 27th of July, the attackers moved to achieve their first objective: data exfiltration. Data exfiltration is not always performed by the Quantum ransomware gang. Researchers have noted discrepancies between claims of data theft made in their ransom notes versus the lack of data seen leaving the network, although this may have been missed due to covert exfiltration via a Cobalt Strike beacon [20].

In contrast, this attack displayed several gigabytes of data leaving internal devices, including servers that had previously beaconed to Cobalt Strike C2 servers. This data was transferred overtly via FTP; however, the attacker still attempted to conceal the activity using ephemeral ports (FTP in extended passive, or EPSV, mode). FTP is an effective method for attackers to exfiltrate large files as it is easy to use, organizations often neglect to monitor outbound usage, and its traffic can pass through ports that will not be blocked by traditional firewalls [21].
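To show why extended passive mode moves the data transfer onto an ephemeral port, the sketch below parses the server's reply to an EPSV command, which has the form `229 <text> (|||port|)` as defined in RFC 2428. The client then opens the data connection to that server-chosen port. The function name and sample reply lines are illustrative and are not taken from the incident.

```python
import re

# RFC 2428 reply: "229 <text> (<d><d><d><port><d>)", where <d> is one delimiter character.
EPSV_REPLY = re.compile(r"^229 .*\((.)\1\1(\d{1,5})\1\)")

def epsv_data_port(reply_line):
    """Extract the data-channel port from an EPSV reply, or None if it does not match."""
    m = EPSV_REPLY.match(reply_line)
    if not m:
        return None
    port = int(m.group(2))
    return port if 0 < port <= 65535 else None

print(epsv_data_port("229 Entering Extended Passive Mode (|||6446|)"))
print(epsv_data_port("227 Entering Passive Mode (10,0,0,1,25,46)"))
```

Because the port changes per transfer, monitoring that keys only on the FTP control port (21) can miss the bulk data channel unless the two connections are correlated.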

Figure 6 displays an example of the FTP data transfer to attacker-controlled infrastructure. The destination share appears to be structured to identify the organization that the data was stolen from, which implies that data from other victim organizations may be stored there as well. It also indicates that data exfiltration was an intended outcome of this attack.

Data was continuously exfiltrated until a week later, when the final stage of the attack was reached and Quantum ransomware was detonated. Darktrace detected the following unusual SMB activity initiated from the attacker-created account, all of which are hallmarks of ransomware (see Figure 7 for an example log):

· Symmetric SMB read-to-write ratio, indicative of active encryption

· Sustained MIME type conversion of files, with the extension ‘.quantum’ appended to filenames

· SMB writes of a ransom note ‘README_TO_DECRYPT.html’ (see Figure 8 for an example note)
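The three indicators above can be summarized over a batch of SMB file events as follows. This is a minimal sketch under assumed inputs: the event format (`op`, `path`, `bytes`) and the sample file paths are hypothetical, while the ‘.quantum’ extension and the ransom note filename come from the text.

```python
def ransomware_indicators(events, ext=".quantum", note="README_TO_DECRYPT.html"):
    """Summarize read/write symmetry, appended extensions and ransom note writes."""
    read_bytes = sum(e["bytes"] for e in events if e["op"] == "read")
    write_bytes = sum(e["bytes"] for e in events if e["op"] == "write")
    written = [e["path"] for e in events if e["op"] == "write"]
    return {
        # A ratio close to 1 means roughly as much data was written back as was read.
        "read_write_ratio": read_bytes / write_bytes if write_bytes else None,
        "encrypted_files": sum(p.lower().endswith(ext) for p in written),
        "ransom_notes": sum(p.rsplit("\\", 1)[-1] == note for p in written),
    }

# Hypothetical SMB events for illustration only.
events = [
    {"op": "read",  "path": r"share\report.docx",            "bytes": 4096},
    {"op": "write", "path": r"share\report.docx.quantum",    "bytes": 4096},
    {"op": "read",  "path": r"share\budget.xlsx",            "bytes": 8192},
    {"op": "write", "path": r"share\budget.xlsx.quantum",    "bytes": 8192},
    {"op": "write", "path": r"share\README_TO_DECRYPT.html", "bytes": 1024},
]
result = ransomware_indicators(events)
print(result)
```

Any one of these signals can occur benignly, for example during a backup or bulk file conversion; it is the combination, from an unusual account, that makes the activity stand out.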

The example in Figure 8 mentions that the attacker also possessed large volumes of victim data. It is likely that the gigabytes of data exfiltrated over FTP were leveraged as blackmail to further extort the victim organization for payment.

Darktrace Coverage

Had Darktrace/Email been deployed in the prospect’s environment, the initial payload (if delivered through a phishing email) could have been detected and held from the recipient’s inbox. Although DETECT identified anomalous network behaviour at each stage of the attack, the incident occurred during a trial phase in which Darktrace could only detect and not respond, so the attack was able to progress through the kill chain. If RESPOND/Network had been configured in the targeted environment, the unusual connections observed during the initial access, C2, reconnaissance and lateral movement stages of the attack could have been blocked. This would have prevented the attackers from delivering the later stage payloads and eventual ransomware into the target network.

It is often thought that a properly implemented backup strategy is sufficient defense against ransomware [23]. However, as discussed in a previous Darktrace blog, the increasing frequency of double extortion attacks in a world where ‘data is the new oil’ demonstrates that backups alone do not mitigate the risk of a ransomware attack [24]. Equally, the lack of preventive defenses in the target’s environment enabled the attacker’s riskier decision to dwell in the network for longer and allowed them to optimize their potential reward.

Recent crackdowns from law enforcement on ransomware groups have shifted these groups’ approaches to aim for a balance between low risk and significant financial rewards [25]. However, given that the Quantum gang held only a 5% market share in Q2 2022, compared to the 13.2% held by LockBit and 16.9% held by BlackCat [26], a riskier strategy may be favourable, as a longer dwell time and double extortion outcome offer a ‘belt and braces’ approach to maximizing the rewards from carrying out this attack. Alternatively, the gaps between the attack stages may imply that more than one player was involved in this attack, although this group has not been reported to operate a franchise model before [27]. Whether assisted by others or pursuing a riskier approach, it is clear that Quantum (like other actors) is continuing to adapt to ensure its financial success. Such groups will continue to be successful until organizations dedicate themselves to ensuring that the proper data protection and network security measures are in place.

Conclusion

Ransomware has evolved over time and groups have merged and rebranded. However, this incident of Quantum ransomware demonstrates that regardless of the capability to execute a full attack within hours, prolonging an attack to optimize potential reward by leveraging double extortion tactics is sometimes still the preferred action. The pattern of network activity mirrors the techniques used in other Quantum attacks; however, this incident lacked the continuous progression of the group’s recently reported attacks and may represent a change of motives during the process. Knowing that attacker motives can change reinforces the need for organizations to invest in preventative controls: an organization may already be too far down the line if it is executing its backup contingency plans. Darktrace DETECT/Network had visibility over both the early network-based indicators of compromise and the escalation to the later stages of this attack. Had Darktrace also been allowed to respond, this case of Quantum ransomware would also have had a very short dwell time, but a far better outcome for the victim.

Thanks to Steve Robinson for his contributions to this blog.

Appendices

References

[3], [12], [14], [16], [20] https://thedfirreport.com/2022/04/25/quantum-ransomware/

[4] https://www.mandiant.com/sites/default/files/2022-04/M-Trends%202022%20Executive%20Summary.pdf

[8] https://github.com/stamparm/maltrail/blob/master/trails/static/malware/icedid.txt

[11] https://twitter.com/0xToxin/status/1564289244084011014

[13], [27] https://cybernews.com/security/quantum-ransomware-gang-fast-and-furious/

[17] https://www.virustotal.com/gui/domain/gedabuyisi.com/relations

[18] https://www.virustotal.com/gui/domain/sezijiru.com/relations

[19] https://github.com/ByteSecLabs/ja3-ja3s-combo/blob/master/master-list.txt

[21] https://www.darkreading.com/perimeter/ftp-hacking-on-the-rise

[22] https://www.pcrisk.com/removal-guides/23352-quantum-ransomware