What is the Cyber Assessment Framework?

The Cyber Assessment Framework (CAF) acts as a guide for UK organisations, particularly those in essential services, critical national infrastructure and regulated sectors, for assessing, managing and improving their cybersecurity, cyber resilience and cyber risk profile.

The guidance in the Cyber Assessment Framework aligns with regulations such as The Network and Information Systems Regulations (NIS), The Network and Information Security Directive (NIS2) and the Cyber Security and Resilience Bill.

What’s new with the Cyber Assessment Framework 4.0?

On 6 August 2025, the UK’s National Cyber Security Centre (NCSC) released Cyber Assessment Framework 4.0 (CAF v4.0), a pivotal update that reflects the increasingly complex threat landscape and the regulatory need for organisations to respond in smarter, more adaptive ways.

The Cyber Assessment Framework v4.0 introduces significant shifts in expectations, including, but not limited to:

- Understanding threats in terms of the capabilities, methods and techniques of threat actors and the importance of maintaining a proactive security posture (A2.b)

- The use of secure software development principles and practices (A4.b)

- Ensuring threat intelligence is understood and utilised - with a focus on anomaly-based detection (C1.f)

- Performance of proactive threat hunting with automation where appropriate (C2.a)

This blog post will focus on these components of the framework. However, we encourage readers to get the full scope by visiting the NCSC website, where the full framework is available.

In summary, the changes to the framework send a clear signal: the UK’s technical authority now expects organisations to move beyond static rule-based systems and embrace more dynamic, automated defences. For those responsible for securing critical national infrastructure and essential services, these updates are not simply technical preferences, but operational mandates.

At Darktrace, this evolution comes as no surprise. In fact, it reflects the approach we've championed since our inception.

Why Darktrace? Leading the way since 2013

Darktrace was built on the principle that detecting cyber threats in real time requires more than signatures, thresholds, or retrospective analysis. Instead, we pioneered a self-learning approach, powered by artificial intelligence, that understands the unique “normal” for every environment and uses this baseline to spot subtle deviations indicative of emerging threats.

From the beginning, Darktrace has understood that rules and lists will never keep pace with adversaries. That’s why we’ve spent over a decade developing AI that doesn't just alert, it learns, reasons, explains, and acts.

With Cyber Assessment Framework v4.0, the bar has been raised to meet this new reality. For technical practitioners tasked with evaluating their organisation’s readiness, there are six essential questions that should guide the selection or validation of anomaly detection capabilities.

6 Questions you should ask about your security posture to align with CAF v4

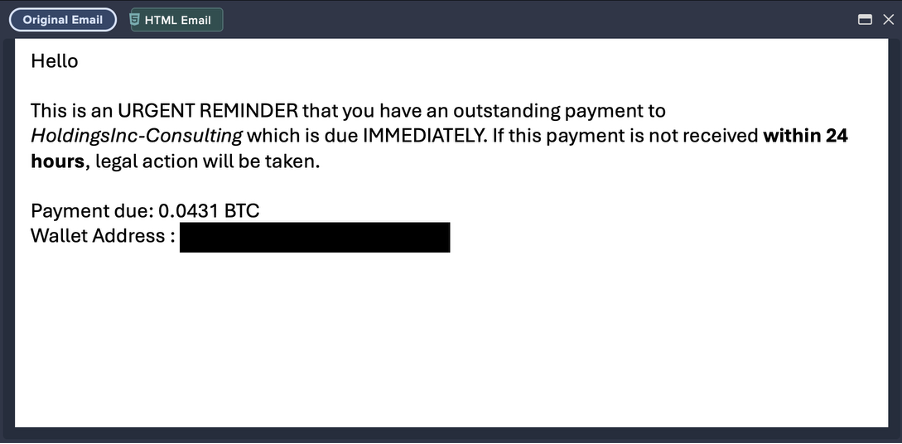

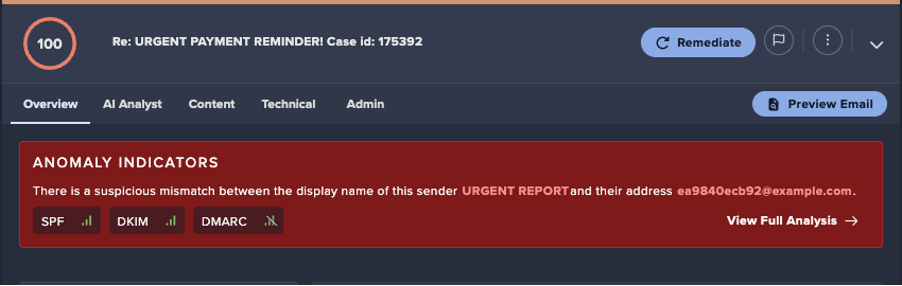

1. Can your tools detect threats by identifying anomalies?

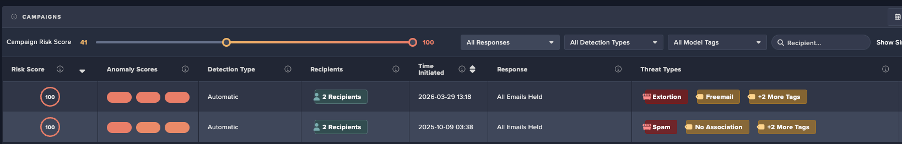

Cyber Assessment Framework v4.0 principle C1.f has been added in this version and requires that, “Threats to the operation of network and information systems, and corresponding user and system behaviour, are sufficiently understood. These are used to detect cyber security incidents.”

This marks a significant shift from traditional signature-based approaches, which rely on known indicators of compromise (IoCs) or predefined rules, to an expectation that normal user and system behaviour is understood well enough to detect abnormalities.

Why this shift?

An overemphasis on threat intelligence alone leaves defenders exposed to novel threats or new variations of existing threats. By including reference to “understanding user and system behaviour” the framework is broadening the methods of threat detection beyond the use of threat intelligence and historical attack data.

CAF v4.0 places emphasis on understanding normal user and system behaviour and on using that understanding to detect abnormalities and, as a result, adverse activity. There is a further expectation that threats are understood in terms of industry-specific issues and that monitoring is continually updated.

Darktrace uses an anomaly-based approach to threat detection which involves establishing a dynamic baseline of “normal” for your environment, then flagging deviations from that baseline, even when there are no known IoCs to match against. This allows security teams to surface previously unseen tactics, techniques, and procedures in real time, whether it’s:

- An unexpected outbound connection pattern (e.g., DNS tunnelling);

- A first-time API call between critical services;

- Unusual calls between services; or

- Sensitive data moving outside normal channels or timeframes.

The requirement that organisations must be equipped to monitor their environment, create an understanding of normal and detect anomalous behaviour aligns closely with Darktrace’s capabilities.
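To make the idea of "baseline, then deviation" concrete, here is a deliberately minimal sketch in Python. It is not Darktrace's model or anything prescribed by CAF v4.0; it simply assumes a single hypothetical metric (hourly outbound DNS queries from one device) and flags an observation that sits more than a chosen number of standard deviations from the historical mean. The function name, the threshold of 3.0 and the sample data are all illustrative assumptions.

```python
from statistics import mean, stdev

def is_anomalous(history, observed, threshold=3.0):
    """Return True if `observed` is more than `threshold` standard
    deviations away from the mean of `history` (a simple z-score test)."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold

# Hypothetical hourly counts of outbound DNS queries from one device.
baseline = [110, 95, 102, 98, 105, 101, 99, 104]

print(is_anomalous(baseline, 103))  # within the normal range
print(is_anomalous(baseline, 900))  # far outside the normal range
```

A real deployment would track many metrics per entity and update the baseline continuously; the point here is only that no signature or IoC is needed to notice that 900 queries in an hour is unusual for this device.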

2. Is threat hunting structured, repeatable, and improving over time?

CAF v4.0 introduces a new focus on structured threat hunting to detect adverse activity that may evade standard security controls or when such controls are not deployable.

Principle C2.a outlines the need for documented, repeatable threat hunting processes and stresses the importance of recording and reviewing hunts to improve future effectiveness. This inclusion acknowledges that reactive threat hunting is not sufficient. Instead, the framework calls for:

- Pre-determined and documented methods to ensure threat hunts can be deployed at the requisite frequency;

- Threat hunts to be converted into automated detection and alerting, where appropriate;

- Maintenance of threat hunt records and post-hunt analysis to drive improvements in the process and overall security posture;

- Regular review of the threat hunting process to align with updated risks;

- Leveraging automation for improvement, where appropriate;

- Focus on threat tactics, techniques and procedures, rather than one-off indicators of compromise.
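As a rough illustration of what "documented and repeatable" could look like in practice, the sketch below models a threat hunt record in Python and picks out hunts that have been run manually often enough to be candidates for automated detection. The field names, the `min_runs` cut-off and the example technique IDs are assumptions chosen for illustration; CAF v4.0 does not prescribe a record format.

```python
from dataclasses import dataclass, field
from datetime import date

@dataclass
class HuntRecord:
    hypothesis: str                 # what the hunt set out to find
    technique: str                  # e.g. a MITRE ATT&CK technique ID
    run_on: date
    findings: list[str] = field(default_factory=list)
    lessons: list[str] = field(default_factory=list)  # post-hunt analysis
    automated: bool = False         # already converted to a detection?

def automation_candidates(records, min_runs=3):
    """Return techniques hunted manually at least `min_runs` times."""
    counts = {}
    for record in records:
        if not record.automated:
            counts[record.technique] = counts.get(record.technique, 0) + 1
    return sorted(t for t, n in counts.items() if n >= min_runs)

# Hypothetical hunt log.
records = [
    HuntRecord("DNS used for C2", "T1071.004", date(2025, 9, 1)),
    HuntRecord("DNS used for C2", "T1071.004", date(2025, 10, 1)),
    HuntRecord("DNS used for C2", "T1071.004", date(2025, 11, 1)),
    HuntRecord("Exfiltration over alternative protocol", "T1048", date(2025, 11, 5)),
]

print(automation_candidates(records))
```

Keeping records keyed by technique rather than by individual indicator reflects the framework's emphasis on tactics, techniques and procedures over one-off IoCs.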

Traditionally, playbook creation has been a manual process — static, slow to amend, and limited by human foresight. Even automated SOAR playbooks tend to be stock templates that can’t cover the full spectrum of threats or reflect the specific context of your organisation.

CAF v4.0 sets the expectation that organisations should maintain documented, structured approaches to incident response. But Darktrace / Incident Readiness & Recovery goes further. Its AI-generated playbooks are bespoke to your environment and updated dynamically in real time as incidents unfold. This continuous refresh of “New Events” means responders always have the latest view of what’s happening, along with an updated understanding of the AI's interpretation based on real-time contextual awareness, and recommended next steps tailored to the current stage of the attack.

The result is far beyond checkbox compliance: a living, adaptive response capability that reduces investigation time, speeds containment, and ensures actions are always proportionate to the evolving threat.

3. Do you have a proactive security posture?

Cyber Assessment Framework v4.0 does not just want organisations to detect threats; it expects them to anticipate and reduce cyber risk before an incident ever occurs. That is why principle A2.b calls for a security posture that moves from reactive detection to predictive, preventative action.

A proactive security posture focuses on reducing the ease of the most likely attack paths in advance and reducing the number of opportunities an adversary has to succeed in an attack.

To meet this requirement, organisations could benefit from looking for solutions that can:

- Continuously map the assets and users most critical to operations;

- Identify vulnerabilities and misconfigurations in real time;

- Model likely adversary behaviours and attack paths using frameworks like MITRE ATT&CK; and

- Prioritise remediation actions that will have the highest impact on reducing overall risk.

When done well, this approach creates a real-time picture of your security posture, one that reflects the ongoing evolution of both your internal environment and the external threat landscape. This enables security teams to focus their time on other areas, such as validating resilience through exercises such as red teaming or forecasting.
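One simple way to think about prioritising remediation by impact is to score each action by the risk it removes relative to the effort it takes. The Python sketch below does exactly that, under stated assumptions: the `likelihood`, `impact` and `effort_days` fields, the scoring formula and the example actions are all hypothetical, and a real attack path model would be considerably richer.

```python
from dataclasses import dataclass

@dataclass
class Remediation:
    name: str
    likelihood: float   # estimated chance the attack path is used (0 to 1)
    impact: float       # criticality of the asset it reaches (1 to 10)
    effort_days: float  # estimated effort to remediate

    @property
    def risk_reduction_per_day(self):
        # Risk removed (likelihood x impact) per day of effort.
        return self.likelihood * self.impact / self.effort_days

def prioritise(actions):
    """Order remediation actions by risk reduced per day, highest first."""
    return sorted(actions, key=lambda a: a.risk_reduction_per_day, reverse=True)

# Hypothetical backlog.
backlog = [
    Remediation("Segment legacy file server", 0.8, 3, 4),
    Remediation("Patch internet-facing VPN", 0.6, 9, 2),
    Remediation("Remove stale domain admin account", 0.2, 10, 1),
]

for action in prioritise(backlog):
    print(action.name)
```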

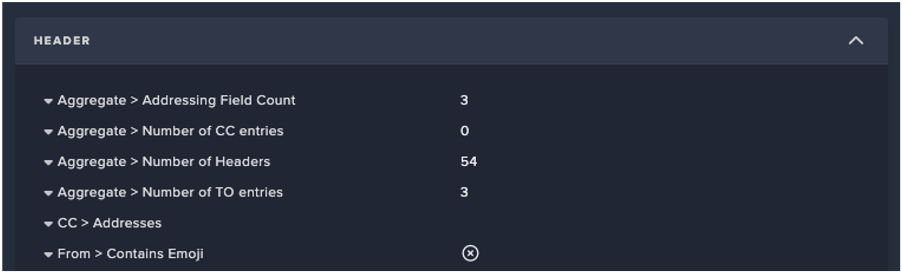

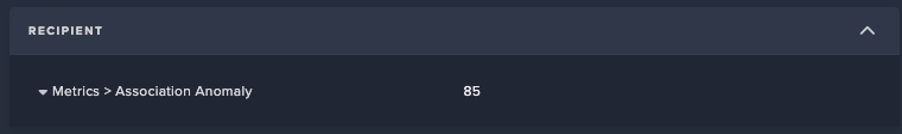

4. Can your team/tools customize detection rules and enable autonomous responses?

CAF v4.0 places greater emphasis on reducing false positives and acting decisively when genuine threats are detected.

The framework highlights the need for customisable detection rules and, where appropriate, autonomous response actions that can contain threats before they escalate.

The following new requirements are included:

- C1.c: Alerts and detection rules should be adjustable to reduce false positives and optimise responses. Custom tooling and rules are used in conjunction with off-the-shelf tooling and rules;

- C1.d: You investigate and triage alerts from all security tools and take action – allowing for improvement and prioritization of activities;

- C1.e: Monitoring and detection personnel have sufficient understanding of operational context and deal with workload effectively as well as identifying areas for improvement (alert or triage fatigue is not present);

- C2.a: Threat hunts should be turned into automated detections and alerting where appropriate and automation should be leveraged to improve threat hunting.

Tailored detection rules improve accuracy, while automation accelerates response, both of which help satisfy regulatory expectations. Cyber AI Analyst allows for AI investigation of alerts and can dramatically reduce the time a security team spends on alerts, reducing alert fatigue, allowing more time for strategic initiatives and identifying improvements.
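To illustrate what adjusting a detection rule to reduce false positives might involve, the Python sketch below takes previously triaged alerts, each with a score and an analyst verdict, and finds the lowest alerting threshold that still meets a target precision. The data, the function names and the 0.8 target are assumptions for illustration only, not a description of how any particular product tunes its rules.

```python
def precision_at(alerts, threshold):
    """Share of alerts at or above `threshold` that were genuine threats.
    `alerts` is a list of (score, is_threat) pairs. Returns None if
    nothing would fire at this threshold."""
    fired = [is_threat for score, is_threat in alerts if score >= threshold]
    if not fired:
        return None
    return sum(fired) / len(fired)

def tune_threshold(alerts, target_precision=0.8):
    """Lowest score threshold whose precision on triaged alerts meets
    the target, or None if no threshold does."""
    for threshold in sorted({score for score, _ in alerts}):
        p = precision_at(alerts, threshold)
        if p is not None and p >= target_precision:
            return threshold
    return None

# Hypothetical triage history: (rule score, analyst confirmed a threat?)
triaged = [
    (0.2, False), (0.4, False), (0.5, True),
    (0.7, False), (0.8, True), (0.9, True),
]

print(tune_threshold(triaged))
```

Choosing the lowest qualifying threshold keeps as many true detections as possible while holding false positives to the agreed level; raising the target trades coverage for less analyst workload.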

5. Is your software secure and supported?

CAF v4.0 introduced a new principle which requires software suppliers to leverage an established secure software development framework. Software suppliers must be able to demonstrate:

- A thorough understanding of the composition and provenance of software provided;

- That the software development lifecycle is informed by a detailed and up to date understanding of threat; and

- They can attest to the authenticity and integrity of the software, including updates and patches.
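On the consuming side, one basic check an organisation can make on a software update is to compare its SHA-256 digest with the value the supplier publishes. The Python sketch below shows that check using only the standard library. It is an assumption-laden illustration: the function names are hypothetical, and a digest match demonstrates integrity only. Authenticity additionally requires verifying the supplier's digital signature, which is not shown here.

```python
import hashlib
import hmac

def sha256_of(path, chunk_size=65536):
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def matches_published_digest(path, expected_hex):
    """Return True if the file's digest equals the published value."""
    actual = sha256_of(path)
    return hmac.compare_digest(actual, expected_hex.strip().lower())
```

For example, `matches_published_digest("update.bin", published_value)` would return `False` if even a single byte of the downloaded file differed from what the supplier released.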

Darktrace is committed to secure software development and all Darktrace products and internally developed systems are developed with secure engineering principles and security by design methodologies in place. Darktrace commits to the inclusion of security requirements at all stages of the software development lifecycle. Darktrace is ISO 27001, ISO 27018 and ISO 42001 Certified – demonstrating an ongoing commitment to information security, data privacy and artificial intelligence management and compliance, throughout the organisation.

6. Is your incident response plan built on a true understanding of your environment and does it adapt to changes over time?

CAF v4.0 raises the bar for incident response by making it clear that a plan is only as strong as the context behind it. Your response plan must be shaped by a detailed, up-to-date understanding of your organisation’s specific network, systems, and operational priorities.

The framework’s updates emphasise that:

- Plans must explicitly cover the network and information systems that underpin your essential functions because every environment has different dependencies, choke points, and critical assets.

- They must be readily accessible even when IT systems are disrupted, ensuring critical steps and contact paths aren’t lost during an incident.

- They should be reviewed regularly to keep pace with evolving risks, infrastructure changes, and lessons learned from testing.

From government expectation to strategic advantage

Cyber Assessment Framework v4.0 signals a powerful shift in cybersecurity best practice. The newest version sets a higher standard for detection performance, risk management, threat hunting, software development and proactive security posture.

For Darktrace, this is validation of the approach we have taken since the beginning: to go beyond rules and signatures to deliver proactive cyber resilience in real time.

-----

Disclaimer:

This document has been prepared on behalf of Darktrace Holdings Limited. It is provided for information purposes only to provide prospective readers with general information about the Cyber Assessment Framework (CAF) in a cyber security context. It does not constitute legal, regulatory, financial or any other kind of professional advice and it has not been prepared with the reader and/or its specific organisation’s requirements in mind. Darktrace offers no warranties, guarantees, undertakings or other assurances (whether express or implied) that: (i) this document or its content are accurate or complete; (ii) the steps outlined herein will guarantee compliance with CAF; (iii) any purchase of Darktrace’s products or services will guarantee compliance with CAF; (iv) the steps outlined herein are appropriate for all customers. Neither the reader nor any third party is entitled to rely on the contents of this document when making/taking any decisions or actions to achieve compliance with CAF. To the fullest extent permitted by applicable law or regulation, Darktrace has no liability for any actions or decisions taken or not taken by the reader to implement any suggestions contained herein, or for any third party products, links or materials referenced. Nothing in this document negates the responsibility of the reader to seek independent legal or other advice should it wish to rely on any of the statements, suggestions, or content set out herein.

The cybersecurity landscape evolves rapidly, and blog content may become outdated or superseded. We reserve the right to update, modify, or remove any content without notice.
