17%: The figure that changes your risk math

Most organizations deploy a Secure Email Gateway (SEG) assuming it will catch whatever their native email security provider misses. But the data tells a different story. Nearly one in six of the riskiest inbound emails still evade the native + SEG layers on the first pass – 17% is the average SEG miss rate after Microsoft filtering.

How did we calculate the miss rate? The figure comes from a volume-weighted analysis of real-world enterprise deployments where Darktrace operated alongside a SEG, compared to deployments without a SEG. It’s based on how each security layer treated malicious emails at first pass: if the SEG missed an email at initial filtering but caught it minutes or hours later, we counted it as a miss, because the user had already been exposed to the threat. We computed the mean per-category miss count across the top three widely deployed SEGs and divided that by the total number of threats that had already bypassed native filters. The resulting rate is 17.8%, conservatively communicated as “about 17%.”
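To make the arithmetic concrete, here is a minimal Python sketch of one way the calculation described above could be reproduced. It is an interpretation of the stated method, not Darktrace's actual pipeline: the function names, category labels, SEG names, and all counts are hypothetical and chosen only for illustration. It also assumes that every SEG is measured against the same pool of threats that bypassed native filtering.

```python
from statistics import mean


def first_pass_miss(blocked_at_delivery: bool, remediated_later: bool) -> bool:
    """Return True if a threat counts as a miss under the first-pass rule.

    remediated_later is deliberately ignored: a delayed catch still counts
    as a miss because the email had already reached the user.
    """
    return not blocked_at_delivery


def miss_rate(miss_counts_by_seg: dict[str, dict[str, int]],
              bypassed_native_total: int):
    """Average per-category miss counts across SEGs, then divide the summed
    means by the number of threats that had already bypassed native filters.
    """
    categories = sorted(
        {cat for counts in miss_counts_by_seg.values() for cat in counts}
    )
    mean_misses = {
        cat: mean(counts.get(cat, 0) for counts in miss_counts_by_seg.values())
        for cat in categories
    }
    return sum(mean_misses.values()) / bypassed_native_total, mean_misses


# Hypothetical counts for illustration only (NOT figures from the analysis).
HYPOTHETICAL_MISSES = {
    "seg_a": {"solicitation": 12, "phishing_link": 10, "impersonation": 8},
    "seg_b": {"solicitation": 9, "phishing_link": 13, "impersonation": 8},
    "seg_c": {"solicitation": 15, "phishing_link": 7, "impersonation": 8},
}
HYPOTHETICAL_BYPASSED_NATIVE = 200  # threats that got past native filtering

rate, per_category = miss_rate(HYPOTHETICAL_MISSES, HYPOTHETICAL_BYPASSED_NATIVE)
print(f"Overall first-pass miss rate: {rate:.1%}")
for category, misses in per_category.items():
    print(f"  {category}: mean misses per SEG = {misses}")
```

Working from pooled counts rather than averaging per-deployment percentages is what keeps a small deployment from swaying the result as much as a large one, which is one plausible reading of "volume-weighted" here.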

This result is a powerful directional signal – not a guarantee for every environment – but significant enough to merit a closer look.

What SEGs miss most (and why it matters)

Our analysis shows that SEGs most frequently miss context-driven, low-signal attacks.

These are the kinds of emails that look convincing to recipients and rely on business context, without overtly malicious indicators, including:

Solicitation and fraudulent requests (~21% miss rate)

Deceptive invoices, vendor “updates,” payment term changes, or urgent favors. These messages often lack obvious payloads and exploit business process mimicry, making them nearly indistinguishable from genuine correspondence in the eyes of static, rule-based filters dependent on payload analysis. 22% of breaches stemming from external actors were a result of social engineering in 2025 (Verizon 2025 Data Breach Investigations Report).

Phishing links (~20% miss rate)

Links to credential harvesters or later-weaponized sites using new or compromised domains, redirects, or shorteners. URL rotation and staging evade list-based controls; the linguistic and workflow context looks routine. This also includes threats that leverage legitimate cloud platforms to disguise their intent and avoid reputation analysis. Phishing remains one of the most expensive causes of breaches, with an average cost of $4.8 million (IBM Cost of a Data Breach Report 2025).

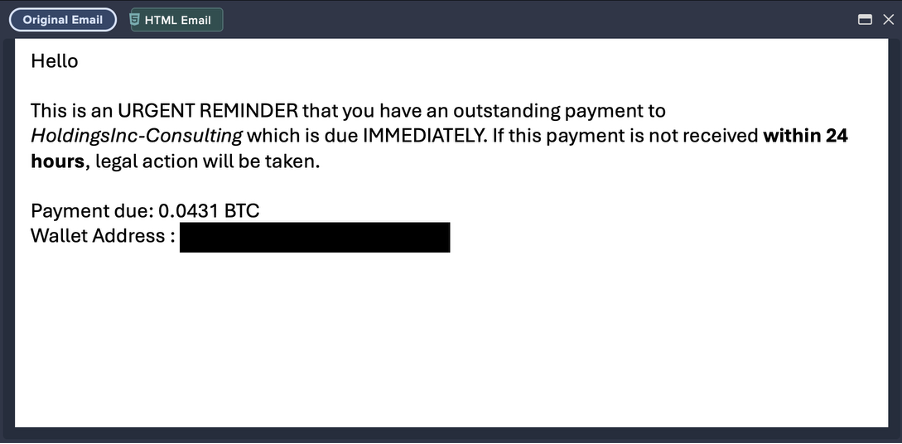

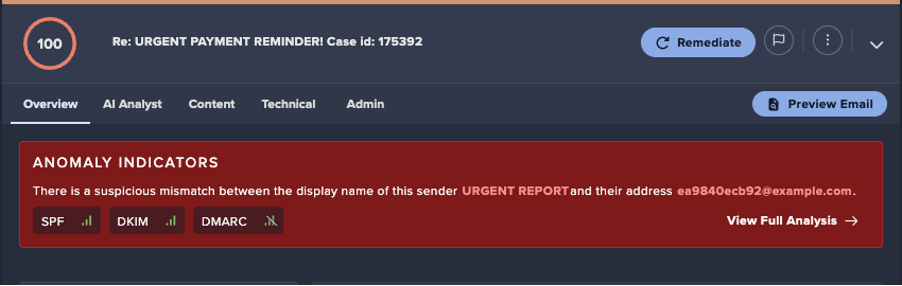

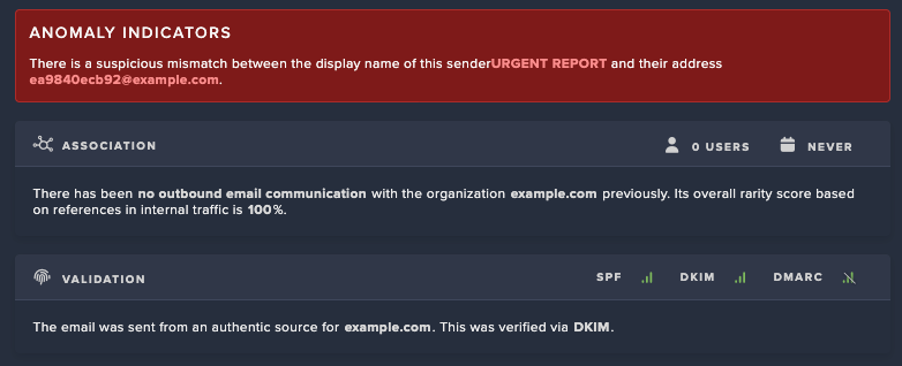

User impersonation (~19% miss rate)

Convincing messages that mimic executives, colleagues, or partners, often with subtle display-name or address manipulation. These attacks rely on social engineering and context, bypassing static detection and reputation checks.

Other notable misses: Credential harvesting lures and forged/abused sender addresses, both typically light on static indicators but heavy on contextual clues.

Why SEGs miss these emails

Let’s look at some of the reasons SEGs fail to catch more advanced, context-driven attacks.

- Attack-centric bias. SEGs excel at recognizing known-bad indicators (spam, commodity malware). But today’s high-impact threats are supercharged by AI and can be hyper-customized with polymorphic malware or personalized social engineering. They mirror normal business communications and weaponize trust, not binary patterns.

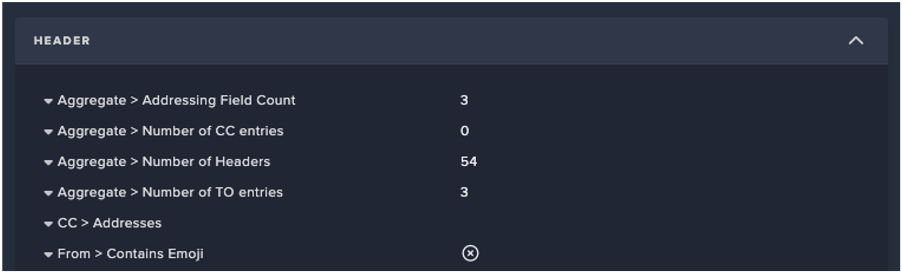

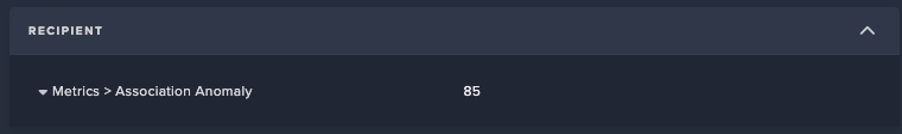

- Limited behavioral understanding. Without modeling each user’s “normal” pattern of life, subtle anomalies (timing, tone, counterpart, transaction patterns) can look benign, even if they should be flagged. Some modern solutions have begun to incorporate behavioral analysis into their products, but it remains a supplementary source of information rather than part of the core threat detection engine.

- Assumed trust. Account compromise and attacks that abuse legitimate services exploit trust. SEGs weren’t designed to handle these kinds of threats. In fact, they assume trust in order to minimize false positives, leaving them wide open to attackers.

- Siloed detection. Email rarely tells the whole story. Attacks pivot across email, identity, and SaaS; single-channel tools can’t connect those dots in real time. This issue is exacerbated when email security vendors are only focused on email activity, ignoring activity beyond the inbox like network or cloud account activity.

- Adaptive evasion. Fast domain churn, benign-looking links, and clean hosting on trusted platforms routinely outpace static rules and blocklists. No matter how strong your threat intelligence or threat research teams are, these approaches rely on a first victim, which leaves defenders one step behind attackers.

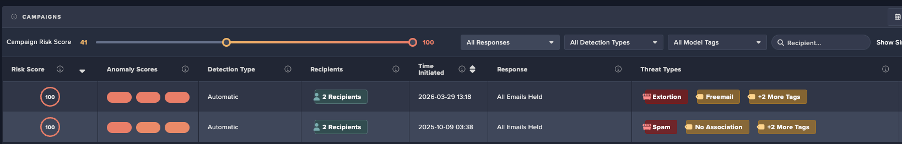

How Darktrace / EMAIL catches the threats SEGs miss

Everywhere a SEG falters, Darktrace excels. Let’s take a look at why.

- Self-Learning AI: Darktrace learns the unique communication patterns of every user, department, and supplier, flagging the subtle deviations that typify social engineering and impersonation.

- A zero trust approach: According to Gartner, many organizations fail to extend their zero-trust strategy to email, leaving a critical gap. Darktrace assumes no trust, applying the zero trust principle across all aspects of email communication.

- Cross-domain context: Correlates behavior across email, identity, and SaaS, exposing multi-stage campaigns that a siloed SEG can’t piece together.

- Better together with native providers: Operates alongside your native email security – not against it – so protection is additive. Darktrace ingests native signals and orchestrates unified quarantine without duplicating policy stacks or forcing you to disable built-in protections.

For example, one of our customers, a global enterprise, saw a surge of “document-share” notifications from a trusted collaboration platform. The domain and authentication looked fine, so their SEG allowed the messages through. Darktrace / EMAIL flagged them because the supplier’s sharing behavior and permission scope deviated from normal (volume, recipients, and access level). Follow-up confirmed the supplier account was compromised. Behavioral context – not rules or signatures – made the difference.

Three steps to building a modern email security stack

Let’s end with three strategic takeaways for ensuring your email security is fit-for-purpose.

- Defense-in-depth = diversity, not duplication

Why it matters: Two security layers with the same detection philosophy (e.g. SEG + native email security) create overlapping blind spots. Both native email security providers and SEGs are attack-centric solutions that rely on past threats and threat intelligence. True defense-in-depth ensures you are asking different questions of every email that comes through.

How to apply: Pair your native email security with behavioral AI that learns how your business communicates. Eliminate redundant layers that only add cost and latency.

- Coordinate the layers you keep

Why it matters: Layers that don’t talk create delays and hand-offs; SEGs often become sole decision-makers by forcing native protections off.

How to apply: Favor an ICES approach that ingests native signals and can orchestrate unified quarantine, so detections become actions in one motion.

- Quantify your security gap with a POV

Why it matters: Every environment is different. You need evidence before making changes to your stack.

How to apply: Run Darktrace / EMAIL in observe mode next to your current stack to surface exactly what’s still getting through. Use those results to plan your transition and measure improvement.

Ready to claim 17% more protection? Request a demo with Darktrace / EMAIL to quantify what your SEG is missing, then decide how much of that residual risk you’re willing to accept. We’ll help you plan a clean, staged transition that preserves native protections and streamlines operations. In the meantime, calculate your potential ROI using Darktrace / EMAIL with our handy calculator.

See why Darktrace is an email security Leader

Read the Gartner® Magic Quadrant™ report & discover what it means to be recognized as an email security Leader.
