The State of AI in Cybersecurity

In a recent survey outlined in Darktrace’s State of AI Cyber Security whitepaper, 95% of cyber security professionals agree that AI-powered security solutions will improve their organization’s detection of cyber-threats [1]. Crucially, a combination of multiple AI methods is the most effective to improve cybersecurity; improving threat detection, accelerating threat investigation and response, and providing visibility across an organization’s digital environment.

In March 2024, Darktrace’s AI-led security platform was able to detect suspicious activity affecting a customer’s email, Software-as-a-Service (SaaS), and network environments, whilst its applied supervised learning capability, Cyber AI Analyst, autonomously correlated and connected all of these events together in one single incident, explained concisely using natural language processing.

Attack Overview

Following an initial email attack vector, an attacker logged into a compromised SaaS user account from the Netherlands, changed inbox rules, and leveraged the account to send thousands of phishing emails to internal and external users. Internal users fell victim to the emails by clicking on contained suspicious links that redirected them to newly registered suspicious domains hosted on same IP address as the hijacked SaaS account login. This activity triggered multiple alerts in Darktrace DETECT™ on both the network and SaaS side, all of which were correlated into one Cyber AI Analyst incident.

In this instance, Darktrace RESPOND™ was not active on any of the customer’s environments, meaning the compromise was able to escalate until their security team acted on the alerts raised by DETECT. Had RESPOND been enabled at the time of the attack, it would have been able to apply swift actions to contain the attack by blocking connections to suspicious endpoints on the network side and disabling users deviating from their normal behavior on the customer’s SaaS environment.

Nevertheless, thanks to DETECT and Cyber AI Analyst, Darktrace was able to provide comprehensive visibility across the customer’s three digital estate environments, decreasing both investigation and response time which enabled them to quickly enact remediation during the attack. This highlights the crucial role that Darktrace’s combined AI approach can play in anomaly detection cyber defense

Attack Details & Darktrace Coverage

1. Email: the initial attack vector

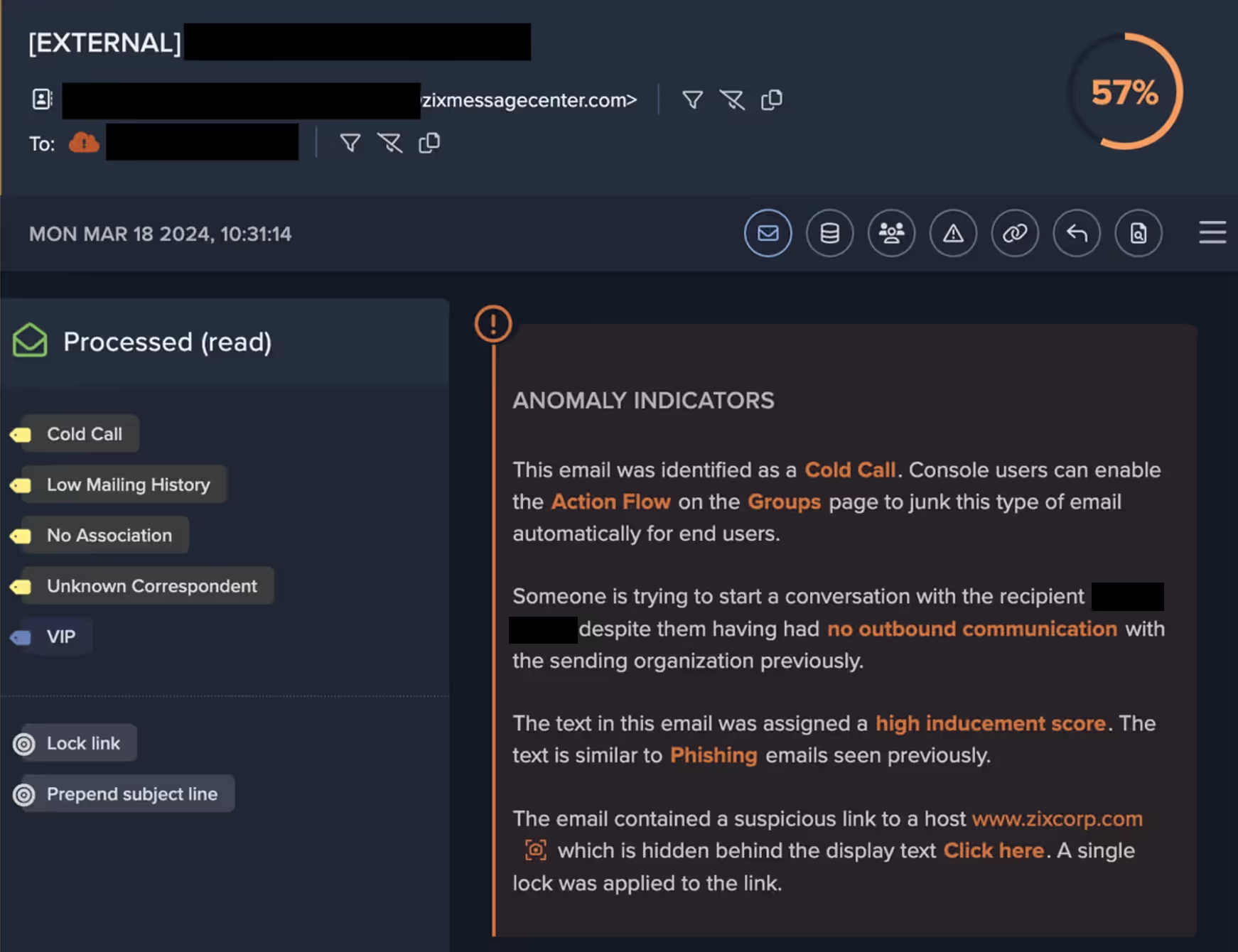

The initial attack vector was likely email, as on March 18, 2024, Darktrace observed a user device making several connections to the email provider “zixmail[.]net”, shortly before it connected to the first suspicious domain. Darktrace/Email identified multiple unusual inbound emails from an unknown sender that contained a suspicious link. Darktrace recognized these emails as potentially malicious and locked the link, ensuring that recipients could not directly click it.

2. Escalation to Network

Later that day, despite Darktrace/Email having locked the link in the suspicious email, the user proceeded to click on it and was directed to a suspicious external location, namely “rz8js7sjbef[.]latovafineart[.]life”, which triggered the Darktrace/Network DETECT model “Suspicious Domain”. Darktrace/Email was able to identify that this domain had only been registered 4 days before this activity and was hosted on an IP address based in the Netherlands, 193.222.96[.]9.

3. SaaS Account Hijack

Just one minute later, Darktrace/Apps observed the user’s Microsoft 365 account logging into the network from the same IP address. Darktrace understood that this represented unusual SaaS activity for this user, who had only previously logged into the customer’s SaaS environment from the US, triggering the “Unusual External Source for SaaS Credential Use” model.

4. SaaS Account Updates

A day later, Darktrace identified an unusual administrative change on the user’s Microsoft 365 account. After logging into the account, the threat actor was observed setting up a new multi-factor authentication (MFA) method on Microsoft Authenticator, namely requiring a 6-digit code to authenticate. Darktrace understood that this authentication method was different to the methods previously used on this account; this, coupled with the unusual login location, triggered the “Unusual Login and Account Update” DETECT model.

5. Obfuscation Email Rule

On March 20, Darktrace detected the threat actor creating a new email rule, named “…”, on the affected account. Attackers are typically known to use ambiguous or obscure names when creating new email rules in order to evade the detection of security teams and endpoints users.

The parameters for the email rule were:

“AlwaysDeleteOutlookRulesBlob: False, Force: False, MoveToFolder: RSS Feeds, Name: ..., MarkAsRead: True, StopProcessingRules: True.”

This rule was seemingly created with the intention of obfuscating the sending of malicious emails, as the rule would move sent emails to the "RSS Feeds” folder, a commonly used tactic by attackers as the folder is often left unchecked by endpoint users. Interestingly, Darktrace identified that, despite the initial unusual login coming from the Netherlands, the email rule was created from a different destination IP, indicating that the attacker was using a Virtual Private Network (VPN) after gaining a foothold in the network.

6. Outbound Phishing Emails Sent

Later that day, the attacker was observed using the compromised customer account to send out numerous phishing emails to both internal and external recipients. Darktrace/Email detected a significant spike in inbound emails on the compromised account, with the account receiving bounce back emails or replies in response to the phishing emails. Darktrace further identified that the phishing emails contained a malicious DocSend link hidden behind the text “Click Here”, falsely claiming to be a link to the presentation platform Prezi.

7. Suspicious Domains and Redirects

After the phishing emails were sent, multiple other internal users accessed the DocSend link, which directed them to another suspicious domain, “thecalebgroup[.]top”, which had been registered on the same day and was hosted on the aforementioned Netherlands-based IP, 193.222.96[.]91. At the time of the attack, this domain had not been reported by any open-source intelligence (OSINT), but it has since been flagged as malicious by multiple vendors [2].

8. Cyber AI Analyst’s Investigation

As this attack was unfolding, Darktrace’s Cyber AI Analyst was able to autonomously investigate the events, correlating them into one wider incident and continually adding a total of 14 new events to the incident as more users fell victim to the phishing links.

Cyber AI Analyst successfully weaved together the initial suspicious domain accessed in the initial email attack vector (Figure 5), the hijack of the SaaS account from the Netherlands IP (Figure 6), and the connection to the suspicious redirect link (Figure 7). Cyber AI Analyst was also able to uncover other related activity that took place at the time, including a potential attempt to exfiltrate data out of the customer’s network.

By autonomously analyzing the thousands of connections taking place on a network at any given time, Darktrace’s Cyber AI Analyst is able to detect seemingly separate anomalous events and link them together in one incident. This not only provides organizations with full visibility over potential compromises on their networks, but also saves their security teams precious time ensuring they can quickly scope out the ongoing incident and begin remediation.

Conclusion

In this scenario, Darktrace demonstrated its ability to detect and correlate suspicious activities across three critical areas of a customer’s digital environment: email, SaaS, and network.

It is essential that cyber defenders not only adopt AI but use a combination of AI technology capable of learning and understanding the context of an organization’s entire digital infrastructure. Darktrace’s anomaly-based approach to threat detection allows it to identify subtle deviations from the expected behavior in network devices and SaaS users, indicating potential compromise. Meanwhile, Cyber AI Analyst dynamically correlates related events during an ongoing attack, providing organizations and their security teams with the information needed to respond and remediate effectively.

Credit to Zoe Tilsiter, Analyst Consulting Lead (EMEA), Brianna Leddy, Director of Analysis

Appendices

References

[1] https://darktrace.com/state-of-ai-cyber-security

[2] https://www.virustotal.com/gui/domain/thecalebgroup.top

Darktrace DETECT Model Coverage

SaaS Models

- SaaS / Access / Unusual External Source for SaaS Credential Use

- SaaS / Compromise / Unusual Login and Account Update

- SaaS / Compliance / Anomalous New Email Rule

- SaaS / Compromise / Unusual Login and New Email Rule

Network Models

- Device / Suspicious Domain

- Multiple Device Correlations / Multiple Devices Breaching Same Model

Cyber AI Analyst Incidents

- Possible Hijack of Office365 Account

- Possible SSL Command and Control

Indicators of Compromise (IoCs)

IoC – Type – Description

193.222.96[.]91 – IP – Unusual Login Source

thecalebgroup[.]top – Domain – Possible C2 Endpoint

rz8js7sjbef[.]latovafineart[.]life – Domain – Possible C2 Endpoint

https://docsend[.]com/view/vcdmsmjcskw69jh9 - Domain - Phishing Link

%201.png)

%201.png)