What is vishing?

Vishing, or voice phishing, is a type of cyber-attack that uses telephone calls to deceive targets. Threat actors typically use social engineering tactics to convince targets that they can be trusted, for example, by masquerading as a family member, their bank, or a trusted government entity. One method frequently used by vishing actors is intimidation: convincing targets that they may face monetary fines or jail time if they do not provide sensitive information.

What makes vishing attacks dangerous to organizations?

Vishing attacks use social engineering tactics that exploit human psychology and emotion. Threat actors often impersonate trusted entities and can make it appear as though a call is coming from a reputable or known source. These actors often target organizations, specifically their employees, and pressure them into handing over sensitive corporate data, such as privileged credentials, by creating a sense of urgency, intimidation, or fear. Corporate credentials can then be used to gain unauthorized access to an organization’s network, often bypassing traditional security measures and human security teams.

Darktrace’s coverage of a vishing attack

On August 12, 2024, Darktrace / NETWORK identified malicious activity on the network of a customer in the hospitality sector. The customer later confirmed that a threat actor had gained unauthorized access through a vishing attack. The attacker successfully spoofed the IT support phone number and called a remote employee, eventually leading to the compromise.

Establishing a Foothold

During the call, the remote employee was asked to authenticate via multi-factor authentication (MFA). Because the call appeared to come from the legitimate IT support number, the employee believed the caller to be a member of internal IT support, followed the instructions, and confirmed the MFA prompt, giving the threat actor access to the customer’s network.

This authentication allowed the threat actor to log in to the customer’s environment by proxying through their Virtual Private Network (VPN) and gain a foothold in the network. As remote users are assigned the same static IP address when connecting to the corporate environment, the malicious actor appeared on the network using the correct username and IP address. While this stealthy activity might have evaded traditional security tools and human security teams, Darktrace’s anomaly-based threat detection analyzed NTLM requests from the static IP address and identified a login from a hostname that differed from the one normally associated with the user, which it determined to be anomalous.

Observed Activity

- On 2024-08-12 the static IP was observed using a credential belonging to the remote user to initiate an SMB session with an internal domain controller, where the authentication method NTLM was used

- A different hostname from the usual hostname associated with this remote user was identified in the NTLM authentication request sent from a device with the static IP address to the domain controller

- This device does not appear to have been seen on the network prior to this event.

Darktrace, therefore, recognized that this login was likely made by a malicious actor.
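The reasoning above can be illustrated with a minimal Python sketch. It is not Darktrace’s implementation: the `NtlmAuthEvent` record, the `HostnameBaseline` class, and the example IP address, username, and hostnames are all hypothetical. It only shows the general idea of flagging an NTLM request whose hostname has never been seen for a given static IP and username pair.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class NtlmAuthEvent:
    """Hypothetical, simplified record of one NTLM authentication request."""
    source_ip: str
    username: str
    hostname: str


class HostnameBaseline:
    """Remembers which hostnames were seen for each (source IP, username) pair."""

    def __init__(self):
        self._seen = defaultdict(set)

    def learn(self, event):
        # Hostnames are compared case-insensitively.
        self._seen[(event.source_ip, event.username)].add(event.hostname.lower())

    def is_anomalous(self, event):
        known = self._seen.get((event.source_ip, event.username))
        if not known:
            # No history for this pair, so there is nothing to compare against.
            return False
        return event.hostname.lower() not in known


# Illustrative values only (assumptions, not taken from the incident).
baseline = HostnameBaseline()
baseline.learn(NtlmAuthEvent("10.0.0.50", "remote.user", "LAPTOP-01"))

usual_login = NtlmAuthEvent("10.0.0.50", "remote.user", "laptop-01")
new_hostname_login = NtlmAuthEvent("10.0.0.50", "remote.user", "UNKNOWN-HOST")

print(baseline.is_anomalous(usual_login))
print(baseline.is_anomalous(new_hostname_login))
```

A check like this only works because the IP address and username stay constant for the remote user; the hostname is the one attribute the attacker did not reproduce.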

Internal Reconnaissance

Darktrace subsequently observed the malicious actor performing a series of reconnaissance activities, including LDAP reconnaissance, device hostname reconnaissance, and port scanning:

- The affected device made a 53-second-long LDAP connection to another internal domain controller. During this connection, the device obtained data about internal Active Directory (AD) accounts, including the AD account of the remote user

- The device made HTTP GET requests indicative of Nmap usage (e.g., requests with the Target URI ‘/nice ports,/Trinity.txt.bak’)

- The device started making reverse DNS lookups for internal IP addresses.
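Two of these reconnaissance signals are simple enough to sketch in Python. The example below is an illustration under stated assumptions, not Darktrace’s detection logic: the function names are hypothetical, the sweep threshold of 20 distinct addresses is arbitrary, and the input is assumed to be a plain list of requested URIs or PTR query names taken from network logs.

```python
import ipaddress
from urllib.parse import unquote

# URI listed in this article's indicators of compromise.
NMAP_PROBE_URI = "/nice ports,/Trinity.txt.bak"


def is_nmap_probe(uri):
    """Return True if the URI matches the Nmap probe path after percent-decoding."""
    return unquote(uri) == NMAP_PROBE_URI


def ptr_name_to_ip(qname):
    """Convert an IPv4 PTR query name (x.x.x.x.in-addr.arpa) back to an address."""
    name = qname.rstrip(".").lower()
    suffix = ".in-addr.arpa"
    if not name.endswith(suffix):
        return None
    octets = name[: -len(suffix)].split(".")
    if len(octets) != 4:
        return None
    try:
        return ipaddress.ip_address(".".join(reversed(octets)))
    except ValueError:
        return None


def looks_like_reverse_dns_sweep(ptr_queries, threshold=20):
    """Flag a device that looked up many distinct internal (private) addresses."""
    internal = set()
    for qname in ptr_queries:
        ip = ptr_name_to_ip(qname)
        if ip is not None and ip.is_private:
            internal.add(ip)
    return len(internal) >= threshold


# Illustrative input: 30 PTR lookups for 10.0.0.1 through 10.0.0.30.
sweep_queries = [f"{i}.0.0.10.in-addr.arpa" for i in range(1, 31)]
print(is_nmap_probe("/nice%20ports%2C/Trinity.txt.bak"))
print(looks_like_reverse_dns_sweep(sweep_queries))
```

Fixed signatures such as the probe URI are easy for an attacker to change, so in practice they complement, rather than replace, behavioral signals like the lookup volume.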

Lateral Movement

The threat actor was also seen making numerous failed NTLM authentication requests using a generic default Windows credential, indicating an attempt to brute force and laterally move through the network. During this activity, Darktrace identified that the device was using a different hostname than the one typically used by the remote employee.

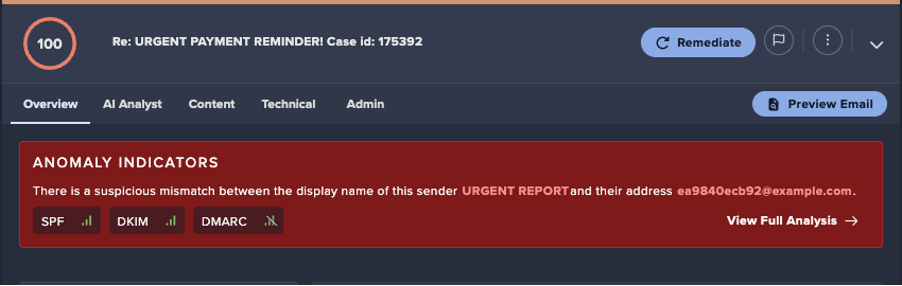
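A burst of failed authentications like the one described above can be detected with a sliding time window. The following Python sketch is a generic illustration, not the logic behind Darktrace’s models: the `detect_bruteforce` function, the event tuple layout, and the thresholds (10 failures within 60 seconds) are assumptions chosen for the example.

```python
from collections import deque


def detect_bruteforce(events, max_failures=10, window_seconds=60):
    """Return the source IPs with at least max_failures failed logins in one window.

    events: iterable of (timestamp_seconds, source_ip, success) tuples,
    assumed to be sorted by timestamp.
    """
    recent_failures = {}
    flagged = set()
    for timestamp, source_ip, success in events:
        if success:
            continue
        window = recent_failures.setdefault(source_ip, deque())
        window.append(timestamp)
        # Drop failures that are older than the window.
        while window and timestamp - window[0] > window_seconds:
            window.popleft()
        if len(window) >= max_failures:
            flagged.add(source_ip)
    return flagged


# Illustrative data: one device fails 12 times in 12 seconds,
# another fails 3 times spread over several minutes.
noisy = [(t, "10.0.0.50", False) for t in range(12)]
quiet = [(t, "10.0.0.60", False) for t in (0, 100, 200)]
events = sorted(noisy + quiet)

print(detect_bruteforce(events))
```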

Cyber AI Analyst

In addition to the detection by Darktrace / NETWORK, Darktrace’s Cyber AI Analyst launched an autonomous investigation into the ongoing activity. The investigation was able to correlate the seemingly separate events together into a broader incident, continuously adding new suspicious linked activities as they occurred.

Upon completing the investigation, Cyber AI Analyst provided the customer with a comprehensive summary of the various attack phases detected by Darktrace and the associated incidents. This clear presentation enabled the customer to gain full visibility into the compromise and understand the activities that constituted the attack.

Darktrace Autonomous Response

Despite the sophisticated techniques and social engineering tactics used by the attacker to bypass the customer’s human security team and existing security stack, Darktrace’s AI-driven approach prevented the malicious actor from continuing their activities and causing more harm.

Darktrace’s Autonomous Response technology is able to enforce a pattern of life based on what it has learned to be ‘normal’ for the environment. If activity is detected that deviates from this expected behavior, a model alert is triggered. When Darktrace’s Autonomous Response functionality is configured in autonomous response mode, as was the case with the customer, it swiftly applies response actions to devices and users without the need for a system administrator or security analyst to perform any actions.

In this instance, Darktrace applied a number of mitigative actions on the remote user, containing most of the activity as soon as it was detected:

- Block all outgoing traffic

- Enforce pattern of life

- Block all connections to port 445 (SMB)

- Block all connections to port 9401

The growing threat of vishing in a remote workforce

This vishing attack underscores the significant risks remote employees face and the critical need for companies to address vishing threats to prevent network compromises. The remote employee in this instance was deceived by a malicious actor who spoofed the phone number of internal IT support and convinced the employee to approve an MFA request. This social engineering tactic allowed the attacker to proxy through the customer’s VPN, making the malicious activity appear legitimate due to the use of static IP addresses.

Despite the stealthy attempts to perform malicious activities on the network, Darktrace’s focus on anomaly detection enabled it to swiftly identify and analyze the suspicious behavior. This led to the prompt determination of the activity as malicious and the subsequent blocking of the malicious actor to prevent further escalation.

While the exact motivation of the threat actor in this case remains unclear, the 2023 cyber-attack on MGM Resorts serves as a stark illustration of the potential consequences of such threats. MGM Resorts experienced significant disruptions and data breaches following a similar vishing attack, resulting in financial and reputational damage [1]. If the attack on the customer had not been detected, they too could have faced sensitive data loss and major business disruptions. This incident underscores the critical importance of robust security measures and vigilant monitoring to protect against sophisticated cyber threats.

Insights from Darktrace’s First 6: Half-year threat report for 2024

Darktrace’s First 6: Half-Year Threat Report 2024 highlights the latest attack trends and key threats observed by the Darktrace Threat Research team in the first six months of 2024. The report:

- Focuses on anomaly detection and behavioral analysis to identify threats

- Maps mitigated cases to known, publicly attributed threats for deeper context

- Offers guidance on improving security posture to defend against persistent threats

Appendices

Credit to Rajendra Rushanth (Cyber Security Analyst) and Ryan Traill (Threat Content Lead)

Darktrace Model Detections

- Device / Unusual LDAP Bind and Search Activity

- Device / Attack and Recon Tools

- Device / Network Range Scan

- Device / Suspicious SMB Scanning Activity

- Device / RDP Scan

- Device / UDP Enumeration

- Device / Large Number of Model Breaches

- Device / Network Scan

- Device / Multiple Lateral Movement Model Breaches (Enhanced Monitoring)

- Device / Reverse DNS Sweep

- Device / SMB Session Brute Force (Non-Admin)

List of Indicators of Compromise (IoCs)

IoC – Type – Description

/nice ports,/Trinity.txt.bak – URI – Unusual Nmap Usage

MITRE ATT&CK Mapping

Tactic – ID – Technique

INITIAL ACCESS – T1200 – Hardware Additions

DISCOVERY – T1046 – Network Service Scanning

DISCOVERY – T1482 – Domain Trust Discovery

RECONNAISSANCE – T1590 – Gather Victim Network Information

T1590.002 – DNS

T1590.005 – IP Addresses

RECONNAISSANCE – T1592 – Gather Victim Host Information

T1592.004 – Client Configurations

RECONNAISSANCE – T1595 – Active Scanning

T1595.001 – Scanning IP Blocks

T1595.002 – Vulnerability Scanning
