Black Friday deals are rolling in, and so are the phishing scams

As the world gears up for Black Friday and the festive shopping season, inboxes flood with deals and delivery notifications, creating a perfect storm for phishing attackers to strike.

Contributing to the confusion, legitimate brands often rely on the same urgency cues, limited-time offers, and high-volume email campaigns that scammers use, blurring the line between real deals and malicious lookalikes. While security teams remain extra vigilant during this period, the risk of phishing emails slipping through unnoticed remains high, as does the risk of individuals clicking on them to take advantage of holiday shopping offers.

Analysis conducted by Darktrace’s global analyst team revealed that phishing attacks taking advantage of Black Friday jumped by 620% in the weeks leading up to the holiday weekend, with the volume of phishing attacks expected to jump a further 20-30% during Black Friday week itself.

First observation: Brand impersonation

Brand impersonation was one of the techniques that stood out, with threat actors creating convincing emails – likely assisted by generative AI – that purport to come from household brands and advertise special offers and promotions.

The week before Thanksgiving (15-21 November) saw 201% more phishing attempts mimicking US retailers than the same week in October, as attackers sought to profit off the back of the busy holiday shopping season. It’s not just about volume, either – attackers are spoofing brands people love to shop with during the holidays. Fake emails that look like they’re from well-known retailers like Macy’s, Walmart, and Target were up by 54% over the last week alone [1]. Even so, Amazon is the most impersonated brand, making up 80% of phishing attempts in Darktrace’s analysis of global consumer brands like Apple, Alibaba and Netflix.

While major brands invest heavily in protecting their organizations and customers from cyber-attacks, impersonation is a complicated area as it falls outside of a brand’s legitimate infrastructure and security remit. Retail brands have a huge attack surface, creating plenty of vectors for impersonation, while fake domains, social profiles, and promotional messages can be created quickly and at scale.

Second observation: Fake marketing domains

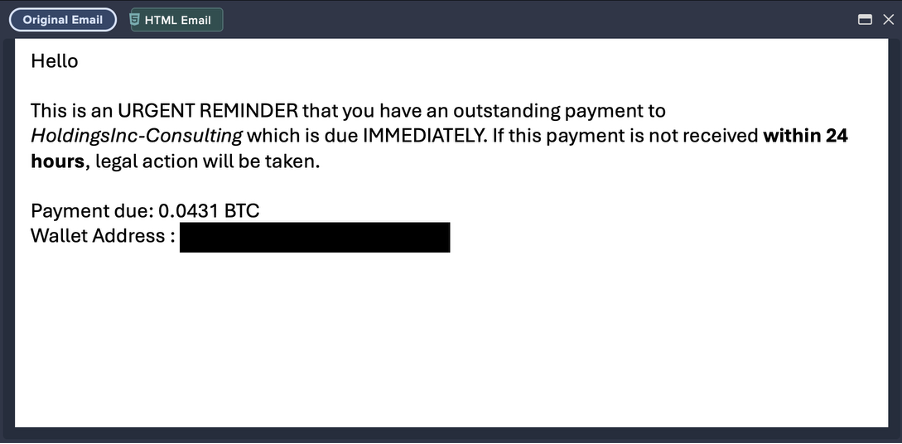

One prominent Black Friday phishing campaign observed landing in many inboxes uses fake domains purporting to be from marketing sites, like “Pal.PetPlatz.com” and “Epicbrandmarketing.com”.

These emails tend to operate in one of two ways. Some contain “deals” for luxury items such as Rolex watches or Louis Vuitton handbags, designed to tempt readers into clicking. However, the majority are tied to a made-up brand called Deal Watchdogs, which promotes “can’t-miss” Amazon Black Friday offers designed to lure readers into acting fast to secure what look like legitimate, time-sensitive deals. Any user who clicks a link is taken to a fake Amazon website, where they are tricked into entering sensitive data and payment details.

Third observation: The impact of generative AI

The biggest shift seen in phishing in recent years is how much more convincing scam emails have become thanks to generative AI. 27% of phishing emails observed by Darktrace in 2024 contained over 1,000 characters [2], suggesting LLM use in their creation. Tools like ChatGPT and Gemini lower the barrier to entry for cyber-criminals, allowing them to create phishing campaigns that humans find difficult to spot.

Let’s take a look at a dummy email created by a member of our team without a technical background to illustrate how easy it is to spin up an email that looks and feels like a genuine Black Friday offer. With two prompts, generative AI created a convincing “sale” email that could easily pass as the real thing without requiring any technical skill.

[Image: dummy Black Friday “sale” email created with generative AI]

Anyone can now create convincing brand spoofs, and they can do it at scale. That makes it even more important for email users to pause, check the sender, and think before they click.

Why phishing scams hurt consumers and brands

These spoofs don’t just drain shoppers’ bank accounts and grab their personal data. They erode trust, drive people away from real sites, and ultimately hurt brands’ sales. And the fakes keep getting sharper, more convincing, and harder to spot.

Though brands should implement email controls like DMARC to help reduce spoofing, they can’t stop attackers from registering new look-alike domains or using other channels. At the end of the day, human users remain vulnerable to well-crafted scams, particularly when the element of trust from a well-known brand is involved. And while brands can’t prevent all impersonation scams, the fallout can still erode consumer trust and damage their reputation.

In order to limit the impact of these scams, two things need to work together: better education so consumers know when to slow down and look twice, and email security (plus a DMARC solution and an attack surface management tool) that can adapt faster than the attackers – protecting both shoppers and the brands they love.
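To make the DMARC control mentioned above a little more concrete, here is a minimal Python sketch that reads a DMARC policy string and reports how strictly it asks receivers to treat mail that fails authentication. The record shown and the `example.com` reporting address are illustrative assumptions, not taken from any real brand, and the helper names are hypothetical. In practice the record would be published as a DNS TXT entry at `_dmarc.<domain>`; the lookup itself is left out here.

```python
def parse_dmarc(record: str) -> dict[str, str]:
    """Split a DMARC record such as 'v=DMARC1; p=reject' into tag/value pairs."""
    tags = {}
    for part in record.split(";"):
        if "=" not in part:
            continue  # skip empty segments, e.g. after a trailing semicolon
        key, _, value = part.partition("=")
        tags[key.strip().lower()] = value.strip()
    return tags


def enforcement_level(record: str) -> str:
    """Return the requested handling for failing mail, or 'invalid'."""
    tags = parse_dmarc(record)
    if tags.get("v") != "DMARC1":
        return "invalid"  # not a DMARC record at all
    policy = tags.get("p", "").lower()
    levels = {
        "none": "monitor only",   # failures are reported, mail is still delivered
        "quarantine": "quarantine",
        "reject": "reject",
    }
    return levels.get(policy, "invalid")


# Illustrative record only; example.com is a placeholder domain.
sample = "v=DMARC1; p=reject; rua=mailto:dmarc-reports@example.com"
print(enforcement_level(sample))
```

Even a `reject` policy only covers mail that claims to come from the brand's own domain. It does nothing about a newly registered look-alike domain, which is why the other controls remain necessary.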

Tips to stay safe while Black Friday shopping online

On top of retailers implementing robust email security, there are some simple steps shoppers can take to stay safer while shopping this holiday season.

- Check every website (twice). Scammers make tiny changes you can barely see. They’ll switch Walmart.com for Waimart.com and most people won’t notice. If something looks even slightly off, check the URL carefully and, if you’re unsure, search for reviews of that exact address.

- Santa keeps the real gifts in the workshop. Don’t just click through from sales emails. Use them as a prompt to log in directly to the official app or site, where any genuine notifications will appear.

- Look at the payment options. Real retailers usually offer a handful of recognizable ways to pay; if a site pushes only odd methods or upfront transfers, don’t use it.

- Be skeptical of Christmas miracles. If a deal on a big-ticket item looks too good to be true, it usually is.

- Leave the rushing to the elves. Countdown timers and “last chance” banners are designed to make you click before you think. Take a breath, double-check the sender and the site, and then decide whether to buy.
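For readers who want to automate the first tip, the sketch below shows one way to flag near-miss domains such as Waimart.com. It compares a link's hostname against a short allow-list using edit distance (the number of single-character changes needed to turn one string into another). The allow-list, the threshold of two edits, and the function names are all assumptions chosen for illustration; this is not how Darktrace / EMAIL works, and it will not catch every trick (for example, unrelated domains like the fake marketing sites described earlier).

```python
from urllib.parse import urlparse

# Hypothetical allow-list: the sites this shopper actually uses.
TRUSTED_DOMAINS = {"walmart.com", "target.com", "amazon.com"}


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance, computed one row at a time."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,         # delete a character from a
                current[j - 1] + 1,      # insert a character into a
                previous[j - 1] + cost,  # substitute (free if equal)
            ))
        previous = current
    return previous[-1]


def extract_host(url: str) -> str:
    """Lower-cased hostname without a leading 'www.'."""
    if "://" not in url:
        url = "//" + url  # lets urlparse treat a bare domain as a host
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def classify(url: str, max_distance: int = 2) -> str:
    """Label a link as trusted, a likely lookalike, or unknown."""
    host = extract_host(url)
    if host in TRUSTED_DOMAINS:
        return "trusted"
    for trusted in sorted(TRUSTED_DOMAINS):
        if edit_distance(host, trusted) <= max_distance:
            return f"lookalike of {trusted}"
    return "unknown"


print(classify("https://www.walmart.com/cart"))
print(classify("http://Waimart.com/deals"))
```

An "unknown" result is not a clean bill of health. It only means the domain is not a close misspelling of anything on the list, so the remaining tips still apply.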

Email security you can trust this holiday season

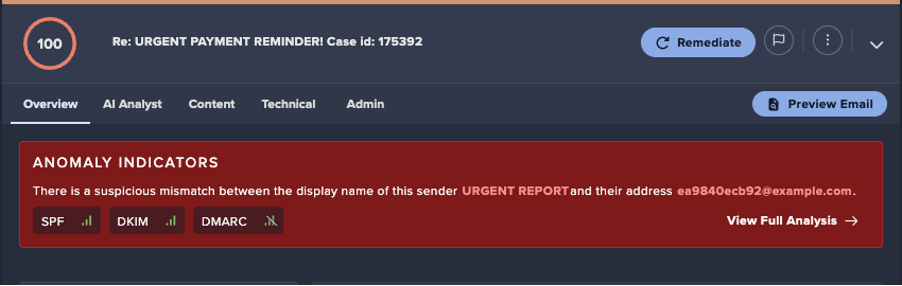

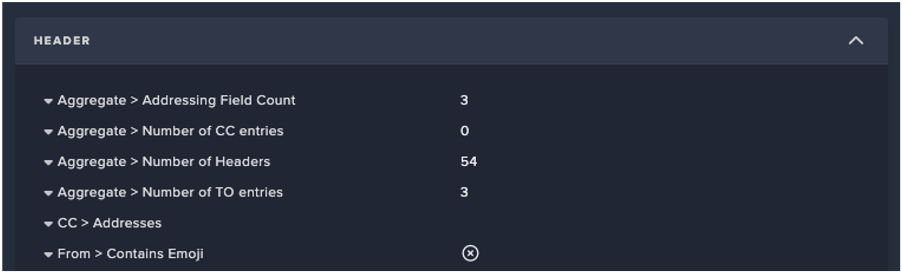

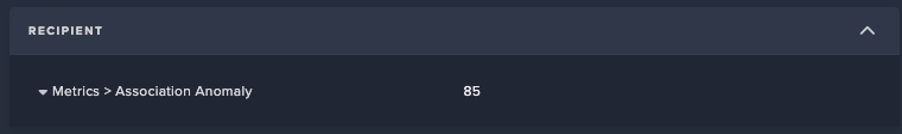

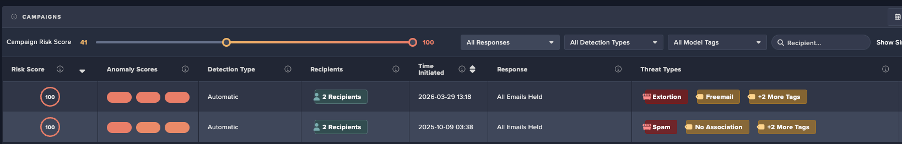

The heightened holiday shopping season shines a spotlight on an uncomfortable reality: now that phishing emails are harder than ever to distinguish from legitimate brand communication, traditional spam filters and Secure Email Gateways struggle to keep up. In order to protect against communication-based attacks, organizations require email security that can evaluate the full context of an email – not just surface-level indicators – and stop malicious messages before they reach inboxes.

Darktrace / EMAIL uses Self-Learning AI to understand the behavior and patterns of every user, so it can detect the subtle inconsistencies that reveal a message isn’t genuine, from shifts in tone and writing style to unexpected links, unfamiliar senders, or off-brand visual cues. By identifying these anomalies automatically – and either holding them entirely, or neutralizing malicious elements – it removes the burden from employees to catch near-imperceptible errors and reinforces protection for the entire organization, from staff to customers to brand reputation.

Join our live broadcast on 9 December, where Darktrace will reveal new, industry-first innovations in email security keeping organizations safe this Christmas – from DMARC to DLP. Sign up to the live launch event now.

For a deeper dive into some specific Black Friday phishing campaigns surfaced by the Darktrace threat analysis team, read the follow-up blog here.

A note on methodology

Insights derive from anonymous live data across 6,500 customers protected by Darktrace / EMAIL. Darktrace created models tracking verified phishing emails that:

- Explicitly mentioned Black Friday

- Impersonated US retailers popular during the holiday season (Walmart, Target, Best Buy, Macy's, Old Navy, 1800-Flowers)

- Impersonated major global brands (Apple, eBay, Netflix, Alibaba and PayPal)

Tracking ran from October 1 to November 21.

References

[1] Based on live tracking of phishing emails spoofing Walmart, Target, Best Buy, Macy's, Old Navy, 1800-Flowers across email inboxes protected by Darktrace. November 15 – November 21, 2025

[2] Based on analysis of 30.4 million phishing emails between December 21, 2023, and December 18, 2024. Darktrace Annual Threat Report 2024.
