What is Vendor Email Compromise?

Vendor Email Compromise (VEC) refers to an attack where actors breach a third-party provider to exploit their access, relationships, or systems for malicious purposes. The initially compromised entities are often the target’s existing partners, though this can extend to any organization or individual the target is likely to trust.

It sits at the intersection of supply chain attacks and business email compromise (BEC), blending technical exploitation with trust-based deception. Attackers often infiltrate existing conversations, leveraging AI to mimic tone and avoid common spelling and grammar pitfalls. Malicious content is typically hosted on otherwise reputable file sharing platforms, meaning any shared links initially seem harmless.

While techniques to achieve initial access may have evolved, the goals remain familiar. Threat actors harvest credentials, launch subsequent phishing campaigns, attempt to redirect invoice payments for financial gain, and exfiltrate sensitive corporate data.

Why traditional defenses fall short

These subtle and sophisticated email attacks pose unique challenges for defenders. Few busy people would treat an ongoing conversation with a trusted contact with the same level of suspicion as an email from the CEO requesting ‘URGENT ASSISTANCE!’ Unfortunately, many traditional secure email gateways (SEGs) struggle with this too. Detecting an out-of-character email, when it does not obviously appear out of character, is a complex challenge. It’s hardly surprising, then, that 83% of organizations have experienced a security incident involving third-party vendors [1].

This article explores how Darktrace detected four different vendor compromise campaigns for a single customer, within a two-week period in 2025. Darktrace / EMAIL successfully identified the subtle indicators that these seemingly benign emails from trusted senders were, in fact, malicious. Due to the configuration of Darktrace / EMAIL in this customer’s environment, it was unable to take action against the malicious emails. However, if fully enabled to take Autonomous Response, it would have held all offending emails identified.

How does Darktrace detect vendor compromise?

The answer lies at the core of how Darktrace operates: anomaly detection. Rather than relying on known malicious rules or signatures, Darktrace learns what ‘normal’ looks like for an environment, then looks for anomalies across a wide range of metrics. Despite the resourcefulness of the threat actors involved in this case, Darktrace identified many anomalies across these campaigns.

Different campaigns, common traits

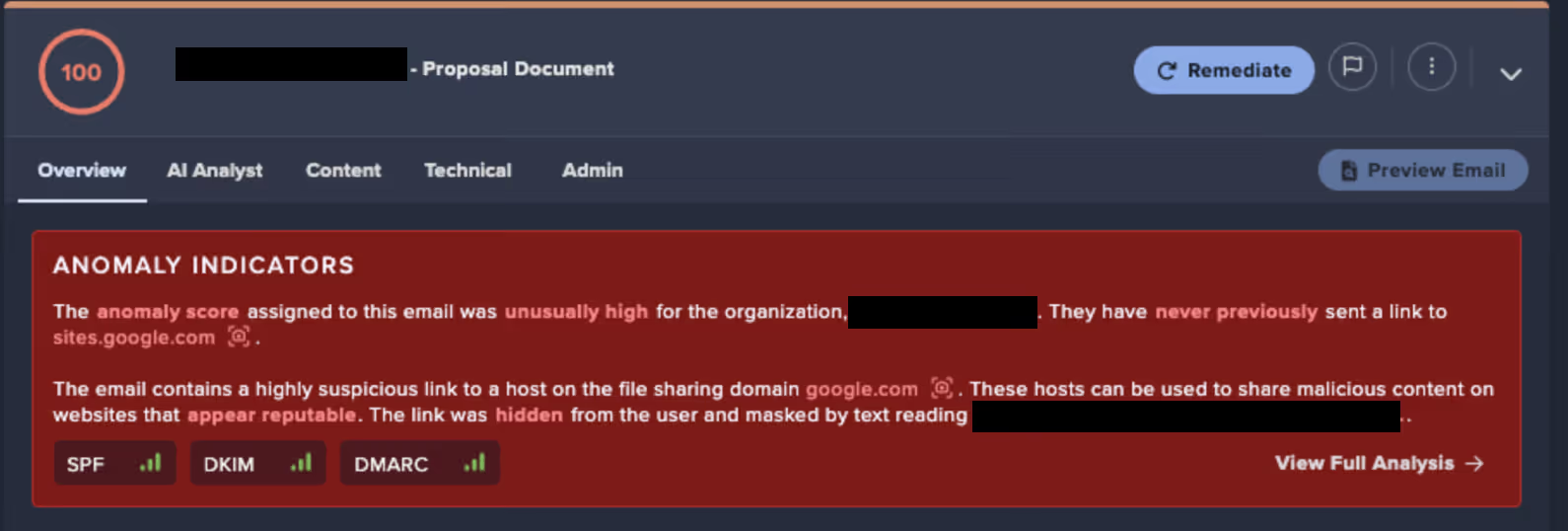

A wide variety of approaches was observed. Individuals, shared mailboxes and external contractors were all targeted. Two emails originated from compromised current vendors, while two came from unknown compromised organizations, one of them in an associated industry. The sender organizations were either familiar or, at the very least, professional in appearance, with no unusual alphanumeric strings or suspicious top-level domains (TLDs). Subject lines, such as “New Approved Statement From [REDACTED]” and “[REDACTED] - Proposal Document”, appeared unremarkable and were not designed to provoke heightened emotions like typical social engineering or BEC attempts.

All emails had been given a Microsoft Spam Confidence Level of 1, indicating Microsoft did not consider them to be spam or malicious [2]. They also passed authentication checks (including SPF, and in some cases DKIM and DMARC), meaning they appeared to originate from an authentic source for the sender domain and had not been tampered with in transit.
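To make these header-level signals concrete, the following is a minimal sketch of how an analyst might read them from a raw message using Python's standard library. The sample message, addresses, and the `summarize_auth` helper are hypothetical and are not taken from the incident; only the header names (`X-MS-Exchange-Organization-SCL` and `Authentication-Results`) are standard.

```python
import re
from email import message_from_string

# Hypothetical sample message; all addresses and domains are placeholders.
RAW = """\
From: accounts@vendor.example
To: user@customer.example
Subject: New Approved Statement
X-MS-Exchange-Organization-SCL: 1
Authentication-Results: mx.customer.example; spf=pass smtp.mailfrom=vendor.example; dkim=pass header.d=vendor.example; dmarc=pass header.from=vendor.example

Please review the attached statement.
"""

def summarize_auth(raw: str) -> dict:
    """Extract the SCL value and the SPF/DKIM/DMARC verdicts from raw headers."""
    msg = message_from_string(raw)
    scl = msg.get("X-MS-Exchange-Organization-SCL")
    auth = msg.get("Authentication-Results", "")
    # Collect verdicts such as "spf=pass" into {"spf": "pass", ...}.
    verdicts = dict(re.findall(r"\b(spf|dkim|dmarc)=(\w+)", auth))
    return {"scl": int(scl) if scl is not None else None, **verdicts}

summary = summarize_auth(RAW)
print(summary)
```

A message sent from a genuinely compromised mailbox can produce exactly this kind of clean summary, which is why these checks alone cannot separate a hijacked vendor account from a legitimate one.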

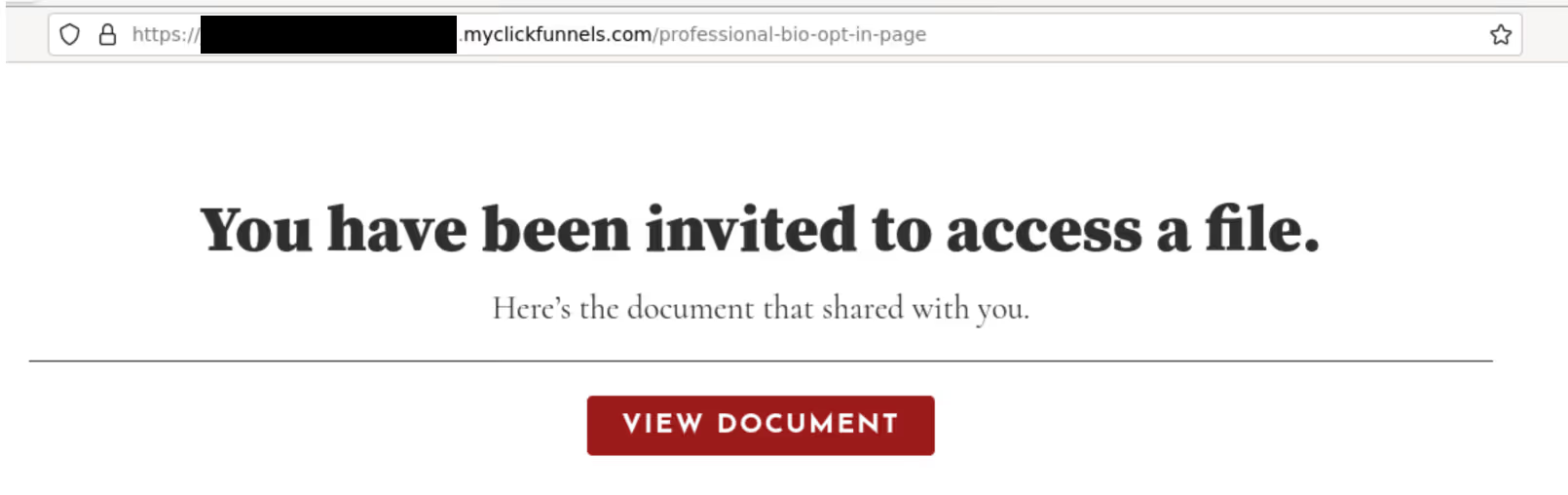

All observed phishing emails contained a link hosted on a legitimate and commonly used file-sharing site. These sites were often convincingly themed, frequently featuring the name of a trusted vendor either on the page or within the URL, to appear authentic and avoid raising suspicion. However, these links served only as the initial step in a more complex, multi-stage phishing process.


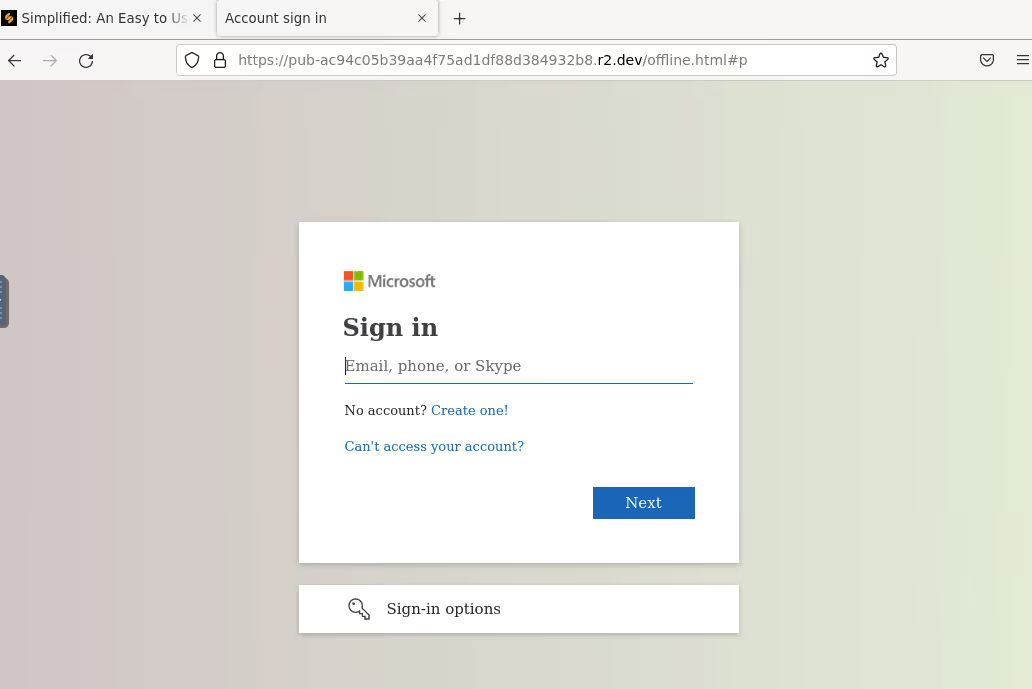

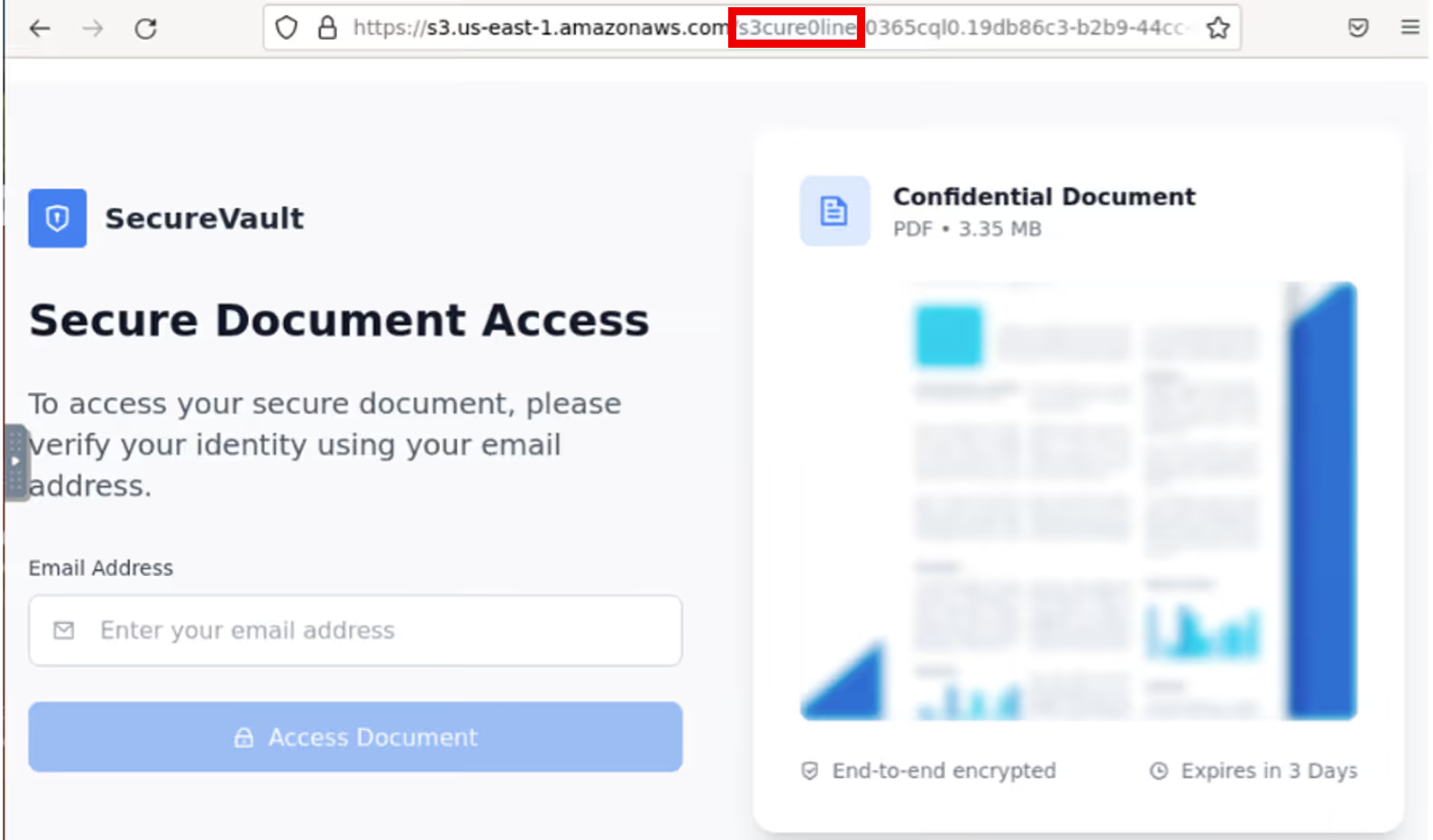

If followed, the recipient would be redirected, sometimes via CAPTCHA, to fake Microsoft login pages designed to capture credentials, namely http://pub-ac94c05b39aa4f75ad1df88d384932b8.r2[.]dev/offline[.]html and https://s3.us-east-1.amazonaws[.]com/s3cure0line-0365cql0.19db86c3-b2b9-44cc-b339-36da233a3be2ml0qin/s3cccql0.19db86c3-b2b9-44cc-b339-36da233a3be2%26l0qn[.]html#.

The latter made use of homoglyphs to deceive the user, with a link referencing ‘s3cure0line’, rather than ‘secureonline’. Post-incident investigation using open-source intelligence (OSINT) confirmed that the domains were linked to malicious phishing endpoints [3] [4].
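The substitution described above can be illustrated with a short sketch. It assumes a hypothetical lookalike table and a hypothetical watch list of lure words, and it uses fuzzy matching from Python's standard library because the substitution is lossy (‘s3cure0line’ normalizes to ‘secureoline’, not ‘secureonline’). It is an illustration of the idea only, not a description of how Darktrace evaluates links.

```python
import re
from difflib import SequenceMatcher

# Hypothetical digit-for-letter substitutions; extend as needed.
LOOKALIKES = str.maketrans({"0": "o", "1": "l", "3": "e", "4": "a", "5": "s", "7": "t"})

# Hypothetical watch list of words an attacker might imitate.
WATCH_WORDS = ("secureonline", "microsoft", "sharepoint")

def normalize(token: str) -> str:
    """Lower-case a token and swap lookalike digits for letters."""
    return token.lower().translate(LOOKALIKES)

def suspicious_tokens(text: str, threshold: float = 0.85) -> list:
    """Return (token, watch_word) pairs for mixed letter/digit tokens that
    closely resemble a watch word once lookalike digits are normalized."""
    hits = []
    for token in re.split(r"[^A-Za-z0-9]+", text):
        # Only tokens mixing letters and digits are candidates.
        if not (re.search(r"\d", token) and re.search(r"[A-Za-z]", token)):
            continue
        plain = normalize(token)
        for word in WATCH_WORDS:
            if SequenceMatcher(None, plain, word).ratio() >= threshold:
                hits.append((token, word))
    return hits

print(suspicious_tokens("s3cure0line-0365cql0"))
```

The threshold of 0.85 is an arbitrary choice for this example; a real deployment would need tuning to balance missed lookalikes against false positives.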

Darktrace Anomaly Detection

Some senders were unknown to the network, with no previous outbound or inbound emails. Some had sent the email to multiple undisclosed recipients using BCC, an unusual behavior for a new sender.
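As a rough illustration of the BCC signal, the sketch below checks whether the mailbox that received a message appears in its visible To or Cc headers. The sample message and the `likely_bcc` helper are hypothetical, and this simple check is an assumption for illustration rather than the product's logic; mailing lists and aliases would also trigger it.

```python
from email import message_from_string
from email.utils import getaddresses

# Hypothetical message: the visible To header names only the sender's own address.
RAW = """\
From: accounts@vendor.example
To: accounts@vendor.example
Subject: Proposal Document

See the shared document.
"""

def likely_bcc(raw: str, recipient: str) -> bool:
    """Return True if the receiving mailbox is absent from the To and Cc headers."""
    msg = message_from_string(raw)
    visible = getaddresses(msg.get_all("To", []) + msg.get_all("Cc", []))
    return recipient.lower() not in {addr.lower() for _, addr in visible}

print(likely_bcc(RAW, "user@customer.example"))
```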

Where the sender organization was an existing vendor, Darktrace recognized out-of-character behavior: in this case, it was the first time a link to a particular file-sharing site had been shared. Often the links themselves exhibited anomalies, either being unusually prominent or hidden altogether, masked by text or a clickable image.
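The idea of a "first time this sender has shared a link to this site" signal can be sketched with a toy per-sender baseline. The `LinkHistory` class, domains, and URLs below are hypothetical, and the article does not describe Darktrace's actual models, which weigh many more metrics; this only shows the shape of the check.

```python
from urllib.parse import urlparse

class LinkHistory:
    """Toy baseline of the link hosts each sender has shared before."""

    def __init__(self):
        self._seen = {}

    def observe(self, sender: str, url: str) -> bool:
        """Record the link and return True when a sender with existing
        history shares a host it has never shared before."""
        host = (urlparse(url).hostname or "").lower()
        seen = self._seen.setdefault(sender, set())
        # No baseline yet means there is nothing to be out of character against.
        first_time = bool(seen) and host not in seen
        seen.add(host)
        return first_time

history = LinkHistory()
history.observe("vendor.example", "https://portal.vendor.example/invoice/1")
print(history.observe("vendor.example", "https://files.example.net/s/abc"))
```

Keying the baseline on the sender rather than on the link's reputation is what lets this kind of check fire even when the link points to a legitimate, widely used file-sharing service.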

Crucially, Darktrace / EMAIL is able to identify malicious links at the time of processing the emails, without needing to visit the URLs or analyze the destination endpoints, meaning even the most convincing phishing pages cannot evade detection. This sets it apart from many competitors who rely on crawling the endpoints present in emails, an approach that, among other things, risks disrupting the user experience, for instance by inadvertently unsubscribing users from mailing lists.

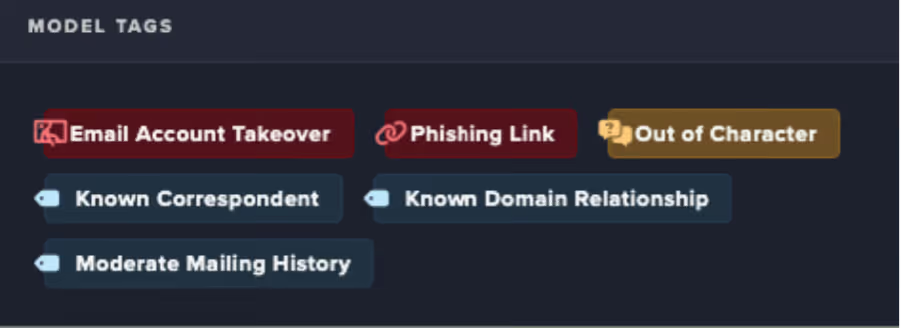

Darktrace was also able to determine that the malicious emails originated from a compromised mailbox, using a series of behavioral and contextual metrics to make the identification. Upon analysis of the emails, Darktrace autonomously assigned several contextual tags to highlight their concerning elements, indicating that the messages contained phishing links, were likely sent from a compromised account, and originated from a known correspondent exhibiting out-of-character behavior.

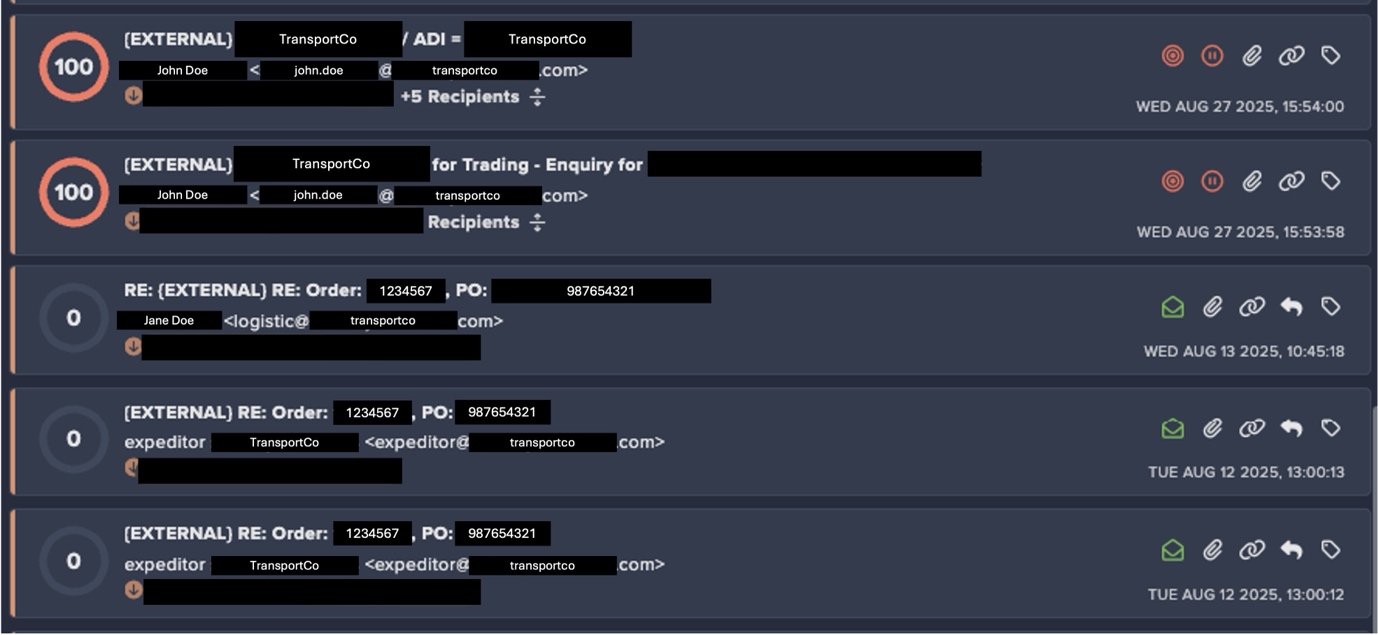

Out-of-character behavior caught in real-time

In another customer environment around the same time, Darktrace / EMAIL detected multiple emails with carefully crafted, contextually appropriate subject lines, sent from an established correspondent to 30 different recipients. In many cases, the attacker hijacked existing threads and inserted their malicious emails into an ongoing conversation in an effort to blend in and avoid detection. As in the previous case, the attacker leveraged a well-known service, this time ClickFunnels, to host a document containing another malicious link. Once again, the emails were assigned a Microsoft Spam Confidence Level of 1, indicating that they were not considered malicious.

This time, however, the customer had Darktrace / EMAIL fully enabled to take Autonomous Response against suspicious emails. As a result, when Darktrace detected the out-of-character behavior, specifically, the sharing of a link to a previously unused file-sharing domain, and identified the likely malicious intent of the message, it held the email, preventing it from reaching recipients’ inboxes and effectively shutting down the attack.

*To preserve anonymity, all real customer names, email addresses, and other identifying details have been redacted and replaced with fictitious placeholders.

Legitimate messages in the conversation were assigned an Anomaly Score of 0, while the newly inserted malicious emails were identified and flagged with the maximum score of 100.

Key takeaways for defenders

Phishing remains big business, and as the landscape evolves, today’s campaigns often look very different from earlier versions. As with network-based attacks, threat actors are increasingly leveraging legitimate tools and exploiting trusted relationships to carry out their malicious goals, often staying under the radar of security teams and traditional email defenses.

As attackers continue to exploit trusted relationships between organizations and their third-party associates, security teams must remain vigilant to unexpected or suspicious email activity. Protecting the digital estate requires an email solution capable of identifying malicious characteristics, even when they originate from otherwise trusted senders.

Credit to Jennifer Beckett (Cyber Analyst), Patrick Anjos (Senior Cyber Analyst), Ryan Traill (Analyst Content Lead), Kiri Addison (Director of Product)

Appendices

IoC - Type - Description + Confidence

- http://pub-ac94c05b39aa4f75ad1df88d384932b8.r2[.]dev/offline[.]html#p – fake Microsoft login page

- https://s3.us-east-1.amazonaws[.]com/s3cure0line-0365cql0.19db86c3-b2b9-44cc-b339-36da233a3be2ml0qin/s3cccql0.19db86c3-b2b9-44cc-b339-36da233a3be2%26l0qn[.]html# - link to domain used in homoglyph attack
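The indicators above are written in defanged form (`[.]` in place of `.`) so they cannot be clicked by accident. Teams loading them into a blocklist or search tool typically need to convert between the two forms; a minimal sketch follows, with hypothetical helper names and a placeholder URL.

```python
def refang(indicator: str) -> str:
    """Convert a defanged indicator back to its literal form for matching."""
    return indicator.replace("[.]", ".").replace("hxxp", "http")

def defang(url: str) -> str:
    """Make a URL safe to share in reports by bracketing every dot."""
    return url.replace(".", "[.]")

# Placeholder indicator, not one of the IoCs listed above.
print(refang("hxxps://files[.]example[.]net/a[.]html"))
```

Refanged values should only be handled inside tooling, never pasted into a browser or an email.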

MITRE ATT&CK Mapping

Tactic – Technique – Sub-Technique

Initial Access – Phishing – T1566

References

1. https://gitnux.org/third-party-risk-statistics/

2. https://learn.microsoft.com/en-us/defender-office-365/anti-spam-spam-confidence-level-scl-about

The content provided in this blog is published by Darktrace for general informational purposes only and reflects our understanding of cybersecurity topics, trends, incidents, and developments at the time of publication. While we strive to ensure accuracy and relevance, the information is provided “as is” without any representations or warranties, express or implied. Darktrace makes no guarantees regarding the completeness, accuracy, reliability, or timeliness of any information presented and expressly disclaims all warranties.

Nothing in this blog constitutes legal, technical, or professional advice, and readers should consult qualified professionals before acting on any information contained herein. Any references to third-party organizations, technologies, threat actors, or incidents are for informational purposes only and do not imply affiliation, endorsement, or recommendation.

Darktrace, its affiliates, employees, or agents shall not be held liable for any loss, damage, or harm arising from the use of or reliance on the information in this blog.

The cybersecurity landscape evolves rapidly, and blog content may become outdated or superseded. We reserve the right to update, modify, or remove any content

