Understanding supply chain risk in manufacturing

For industries running Industrial Control Systems (ICS) such as manufacturing and fast-moving consumer goods (FMCG), complex supply chains mean that disruption to one weak node can have serious impacts to the entire ecosystem. However, supply chain risk does not always originate from outside an organization’s ICS network.

The implicit trust placed on software or shared services for maintenance within an ICS can be considered a type of insider threat [1], where defenders also need to look ‘from within’ to protect against supply chain risk. Attackers have frequently mobilised this form of insider threat:

- Many ICS and SCADA systems were compromised during the 2014 Havex Watering Hole attack, where via operators’ implicit trust in the trojanized versions of legitimate applications, on legitimate but compromised websites [2].

- In 2018, the world’s largest manufacturer of semiconductors and processers shut down production for three days after a supplier installed tainted software that spread to over 10,000 machines in the manufacturer’s network [3].

- During the 2020 SolarWinds supply chain attack, attackers compromised a version of Orion software that was deployed from SolarWinds’ own servers during a software update to thousands of customers, including tech manufacturing companies such as Intel and Nvidia [4].

Traditional approaches to ICS security have focused on defending against everything from outside the castle walls, or outside of the ICS network. As ICS attacks become more sophisticated, defenders must not solely rely on static perimeter defenses and prevention.

A critical part of active defense is understanding the ICS environment and how it operates, including all possible attack paths to the ICS including network connections, remote access points, the movement of data across zones and conduits and access from mobile devices. For instance, original equipment manufacturers (OEMs) and vendors often install remote access software or third-party equipment in ICS networks to facilitate legitimate maintenance and support activities, which can unintentionally expand the ICS’ attack surface.

This blog describes an example of the convergence between supply chain risk and insider risk, when a vendor left a Raspberry Pi device in a manufacturing customer’s ICS network without the customer’s knowledge.

Case study: Using unsupervised machine learning to detect pre-existing security issues

Raspberry Pi devices are commonly used in SCADA environments as low-cost, remotely accessible data collectors [5][6][7]. They are often paired with Industrial Internet of Things (IIoT) for monitoring and tracking [8]. However, these devices also represent a security risk because their small physical size and time-consuming nature of physical inspection makes them easy to overlook. This poses a security risk, as these devices have previously been used to carry out USB-based attacks or to emulate Ethernet-over-USB connections to exfiltrate sensitive data [8][9].

In this incident, a Darktrace customer was unaware that their supplier had installed a Raspberry Pi device on their ICS network. Crucially, the installation occurred prior to Darktrace’s deployment on the customer’s network.

For other anomaly detection tools, this order of events meant that this third-party device would likely have been treated as part of the customer’s existing infrastructure. However, after Darktrace was deployed, it analyzed the metadata from the encrypted HTTPS and DNS connections that the Raspberry Pi made to ‘call home’ to the supplier and determined that these connections were unusual compared to the rest of the devices in the network, even in the absence of any malicious indicators of compromise (IoCs).

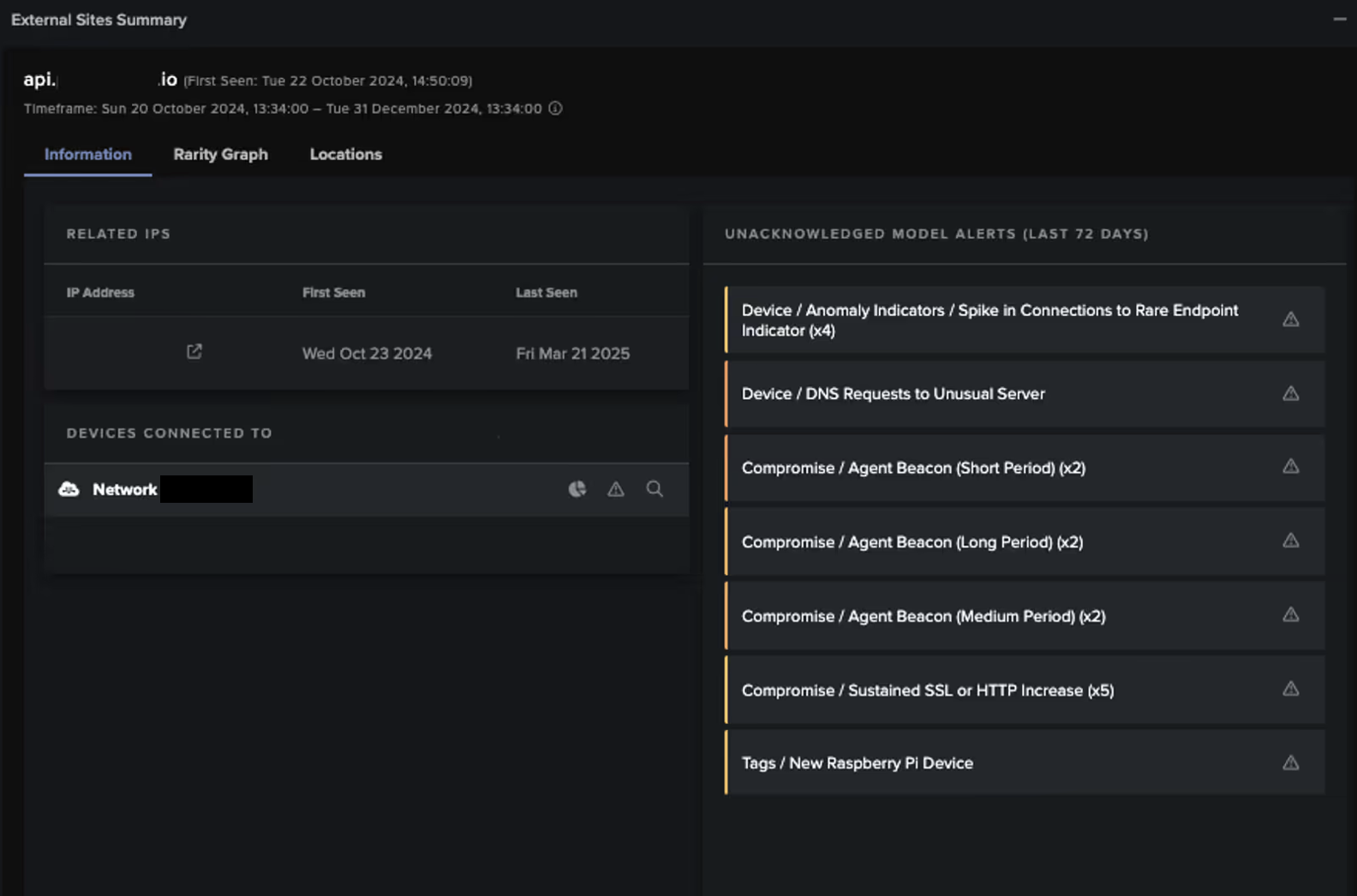

Darktrace triggered the following alerts for this unusual activity that consequently notified the customer to the pre-existing threat of an unmanaged device already present in their network:

- Compromise / Sustained SSL or HTTP Increase

- Compromise / Agent Beacon (Short Period)

- Compromise / Agent Beacon (Medium Period)

- Compromise / Agent Beacon (Long Period)

- Tags / New Raspberry Pi Device

- Device / DNS Requests to Unusual Server

- Device / Anomaly Indicators / Spike in Connections to Rare Endpoint Indicator

Darktrace’s Cyber AI Analyst launched an autonomous investigation into the activity, correlating related events into a broader incident and generating a report outlining the potential threat along with supporting technical details.

Darktrace’s anomaly-based detection meant that the Raspberry Pi device did not need to be observed performing clearly malicious behavior to alert the customer to the security risk, and neither can defenders afford to wait for such escalation.

Why is this significant?

In 2021 a similar attack took place. Aiming to poison a Florida water treatment facility, attackers leveraged a TeamViewer instance that had been dormant on the system for six months, effectively allowing the attacker to ‘live off the land’ [10].

The Raspberry Pi device in this incident also remained outside the purview of the customer’s security team at first. It could have been leveraged by a persistent attacker to pivot within the internal network and communicate externally.

A proactive approach to active defense that seeks to minimize and continuously monitor the attack surface and network is crucial.

The growing interest in manufacturing from attackers and policymakers

Significant motivations for targeting the manufacturing sector and increasing regulatory demands make the convergence of supply chain risk, insider risk, and the prevalence of stealthy living-off-the-land techniques particularly relevant to this sector.

Manufacturing is consistently targeted by cybercriminals [11], and the sector’s ‘just-in-time’ model grants attackers the opportunity for high levels of disruption. Furthermore, under NIS 2, manufacturing and some food and beverage processing entities are now designated as ‘important’ entities. This means stricter incident reporting requirements within 24 hours of detection, and enhanced security requirements such as the implementation of zero trust and network segmentation policies, as well as measures to improve supply chain resilience [12][13][14].

How can Darktrace help?

Ultimately, Darktrace successfully assisted a manufacturing organization in detecting a potentially disruptive 'near-miss' within their OT environment, even in the absence of traditional IoCs. Through passive asset identification techniques and continuous network monitoring, the customer improved their understanding of their network and supply chain risk.

While the swift detection of the rogue device allowed the threat to be identified before it could escalate, the customer could have reduced their time to respond by using Darktrace’s built-in response capabilities, had Darktrace’s Autonomous Response capability been enabled. Darktrace’s Autonomous Response can be configured to target specific connections on a rogue device either automatically upon detection or following manual approval from the security team, to stop it communicating with other devices in the network while allowing other approved devices to continue operating. Furthermore, the exportable report generated by Cyber AI Analyst helps security teams to meet NIS 2’s enhanced reporting requirements.

Sophisticated ICS attacks often leverage insider access to perform in-depth reconnaissance for the development of tailored malware capabilities. This case study and high-profile ICS attacks highlight the importance of mitigating supply chain risk in a similar way to insider risk. As ICS networks adapt to the introduction of IIoT, remote working and the increased convergence between IT and OT, it is important to ensure the approach to secure against these threats is compatible with the dynamic nature of the network.

Credit to Nicole Wong (Principal Cyber Analyst), Matthew Redrup (Senior Analyst and ANZ Team Lead)

[related-resource]

Appendices

MITRE ATT&CK Mapping

- Infrastructure / New Raspberry Pi Device - INITIAL ACCESS - T1200 Hardware Additions

- Device / DNS Requests to Unusual Server - CREDENTIAL ACCESS, COLLECTION - T1557 Man-in-the-Middle

- Compromise / Agent Beacon - COMMAND AND CONTROL - T1071.001 Web Protocols

References

[1] https://www.cisa.gov/topics/physical-security/insider-threat-mitigation/defining-insider-threats

[3]https://thehackernews.com/2018/08/tsmc-wannacry-ransomware-attack.html

[4] https://www.theverge.com/2020/12/21/22194183/intel-nvidia-cisco-government-infected-solarwinds-hack

[5] https://www.centreon.com/monitoring-ot-with-raspberry-pi-and-centreon/

[6] https://ieeexplore.ieee.org/document/9107689

[7] https://www.linkedin.com/pulse/webicc-scada-integration-industrial-raspberry-pi-devices-mryff

[8] https://www.rowse.co.uk/blog/post/how-is-the-raspberry-pi-used-in-the-iiot

[10] https://edition.cnn.com/2021/02/10/us/florida-water-poison-cyber/index.html

[11] https://www.mxdusa.org/2025/02/13/top-cyber-threats-in-manufacturing/

Darktrace & Manufacturing

Cyber-attacks are evolving, and manufacturing organizations remain vulnerable to disruptions, learn how Darktrace can help.

.avif)

.avif)

%201.png)