Read the first part: Part one — A perimeter in ruins

Earlier this month, I discussed some of the most critical challenges that today’s institutions face in their efforts to reinforce the network perimeter. Eliminating common attack vectors, from unauthorized uploads in the cloud to outdated protocol usage on-premises, is an essential step toward a more secure digital future.

Ultimately, however, I concluded that even flawless cyber hygiene at the perimeter will never be a panacea for all possible cyber-threats, since defenders cannot possibly address vulnerabilities about which they aren’t yet aware. Building strong borders is vital, clearly, but as attackers continue to launch novel attacks, even 50-foot walls are imperiled by 50-foot ladders.

Of course, such concerns become merely academic when your walls aren’t placed correctly, or watched attentively, or expanded when the digital estate grows. For countless employees and organizations alike, the allure of convenience has weakened the perimeter in all of these ways and more, rendering the work of cyber-criminals exponentially easier. Yet given the complexity of the modern enterprise, discovering exactly where users have cut corners is often difficult for human security teams alone. Spotting cyber hygiene issues caused by a lack of due diligence — like the five detailed below — therefore requires AI tools that alert on critical changes to network activity in real time.

Issue #6: Not keeping an inventory of hardware on the network

As all manner of non-traditional IT makes its way into workplaces around the world, keeping an inventory of these seamlessly integrated devices often proves an arduous undertaking, one that many organizations shirk altogether. Between app-controlled thermostats and smart refrigerators, connected cameras and Bluetooth sensors, few security teams possess a rigorous list of the hardware under their care.

Yet attaining 100% network visibility is a prerequisite to any viable security posture. Attackers are increasingly targeting poorly secured IoT devices to bypass the perimeter at its weakest points, before moving laterally to compromise more sensitive databases and machines. By analyzing all traffic from the entire enterprise, Darktrace detects when new devices come online and alerts on any unusual activity from them with its AI models, some of which are:

- Device / New Device with Attack Tools

- Unusual Activity / Anomalous SMB Read & Write from New Device

- Unusual Activity / Sustained Unusual Activity from New Device

- Unusual Activity / Unusual Activity from New Device
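As a rough illustration of the inventory idea (not a description of how Darktrace's models work), the Python sketch below compares MAC addresses observed on the network against a maintained inventory and reports the ones that are missing. The `Sighting` record, the `find_unknown_devices` helper, and the sample addresses are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sighting:
    mac: str  # hardware address observed on the network
    ip: str   # IP address the device was using when observed


def normalize_mac(mac: str) -> str:
    """Lower-case a MAC and use ':' separators so comparisons are consistent."""
    return mac.strip().lower().replace("-", ":")


def find_unknown_devices(inventory, sightings):
    """Return the first sighting of every MAC that is absent from the inventory."""
    known = {normalize_mac(m) for m in inventory}
    already_reported = set()
    unknown = []
    for sighting in sightings:
        mac = normalize_mac(sighting.mac)
        if mac not in known and mac not in already_reported:
            already_reported.add(mac)
            unknown.append(sighting)
    return unknown


# Hypothetical data: one inventoried device and one device nobody has recorded.
inventory = {"AA-BB-CC-00-00-01"}
sightings = [
    Sighting("aa:bb:cc:00:00:01", "10.0.0.5"),
    Sighting("aa:bb:cc:00:00:02", "10.0.0.9"),
]
for device in find_unknown_devices(inventory, sightings):
    print(f"Not in inventory: {device.mac} ({device.ip})")
```

A static comparison like this only says that a device is new. Deciding whether its behavior is unusual requires the kind of ongoing traffic analysis described above.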

Issue #7: Using corporate devices for private use

While the divide between corporate and private networks is a primary facet of cyber hygiene, few employees are immune to the temptation and convenience of using company devices for personal use. Whether it’s torrenting movies, visiting social media websites, or checking personal email accounts during the workday, these activities all expose carefully guarded corporate environments to ones that are far less secure. At the same time, many organizations lack visibility over their own online traffic, preventing their security teams from catching such risky behavior until it’s already too late.

Employees have also been known to violate internal compliance policies by downloading unauthorized software for private purposes, which introduces serious security risks and opens the door for supply chain attacks. Darktrace has detected a plethora of threats related to such downloads across our customer base, including outdated software, network scanners, BitTorrent clients, and crypto-mining programs. Such compliance issues trigger a number of Darktrace’s behavioral models, for example:

- Anomalous File / EXE from Rare External Location

- Anomalous File / Incoming RAR File

- Compliance / BitTorrent

- Compliance / Crypto Currency Mining Activity

To bypass compliance policies and access resources blocked by network administrators, employees often turn to VPNs as well as onion routing services like Tor, which facilitate anonymous communication. Using these services is tantamount to disabling security controls on the offending device; consequently, companies must have the ability to detect and terminate them whenever they are used on the network. Because Darktrace provides 100% visibility across the digital infrastructure, it can flag private VPN and Tor sessions with the following example models:

- Anomalous Connection / New Outbound VPN

- Compliance / Privacy VPN

- Compliance / Tor Usage

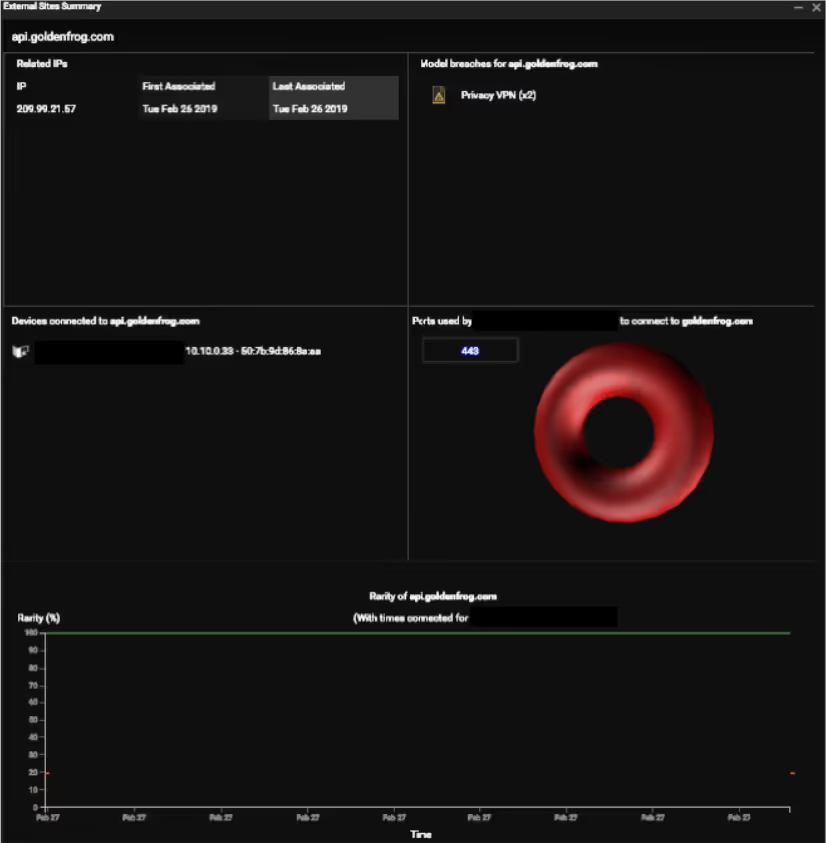

Darktrace detected one such case earlier this year wherein a corporate device connected to a third-party VPN. Although this activity is not inherently risky or threatening in all situations, Darktrace’s understanding of the company’s network revealed that the device was the only one using the VPN — strongly suggesting a compliance violation. Moreover, when the device was not using the VPN service, it was seen making a large amount of HTTP post requests to another rare destination and displaying other signs of infection. It turned out that the device was infected with the elusive Ursnif trojan.

Figure 1: Darktrace’s external site summary showing that only one device in the network connected to the VPN.
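The "only one device uses this destination" signal from the case above can be approximated with a simple count. The sketch below is a minimal illustration under assumed inputs, not Darktrace's method: it takes hypothetical `(device, destination)` pairs drawn from connection logs and returns the external destinations contacted by exactly one internal device. The host and device names are invented.

```python
from collections import defaultdict


def destinations_used_by_one_device(connections):
    """Map each destination contacted by exactly one device to that device."""
    devices_by_destination = defaultdict(set)
    for device, destination in connections:
        devices_by_destination[destination].add(device)
    return {
        destination: next(iter(devices))
        for destination, devices in devices_by_destination.items()
        if len(devices) == 1
    }


# Hypothetical connection log: (internal device, external destination)
connections = [
    ("laptop-17", "vpn.example.net"),
    ("laptop-17", "mail.example.com"),
    ("laptop-22", "mail.example.com"),
    ("laptop-17", "vpn.example.net"),  # repeat visits do not add devices
]
for destination, device in destinations_used_by_one_device(connections).items():
    print(f"{destination} is only contacted by {device}")
```

Rarity alone does not prove a violation. In the case described above, it was the combination of a rare VPN endpoint and other anomalous behavior that pointed to an infection.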

Issue #8: Lack of strong access management

Ensuring that only rightful users have access to private company resources is a foundational component of cyber security. Yet as these users and their privileges continuously evolve, maintaining strong access management can be time-consuming and difficult.

Of all the users on the network, accounts with administrator or root privileges deserve the most attention. While keeping tight control over high-privilege accounts is common practice, some organizations still struggle to manage access well, leaving their devices more vulnerable to both malware and insider threats. In fact, even well-intentioned insiders can jeopardize the organization in the absence of strong access management, such as employees who download unauthorized software without understanding its associated risks.

Darktrace has several models that detect unusual credential usage, including:

- User / New Admin Credentials on Client

- User / Overactive User Credential

- SaaS / Unusual SaaS Administration
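To make the idea of "new admin credentials on a client" concrete, here is a deliberately simple Python sketch. It assumes a hypothetical feed of `(account, device)` login events and a known set of admin account names, and it flags the first time an admin account appears on a device where it has not been seen before. It illustrates the concept only and is not the logic behind the models listed above.

```python
def new_admin_logins(history, events, admin_accounts):
    """Return (account, device) pairs where an admin account is seen on a device for the first time."""
    seen = set(history)
    alerts = []
    for account, device in events:
        pair = (account, device)
        if account in admin_accounts and pair not in seen:
            alerts.append(pair)
        seen.add(pair)  # remember the pair so repeats are not re-flagged
    return alerts


# Hypothetical data: "admin" normally logs in on srv01 only.
history = [("admin", "srv01")]
events = [
    ("admin", "srv01"),    # expected
    ("admin", "laptop7"),  # first use of admin credentials on this client
    ("bob", "laptop7"),    # not an admin account
    ("admin", "laptop7"),  # already flagged once
]
print(new_admin_logins(history, events, admin_accounts={"admin"}))
```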

Issue #9: TFTP Usage

Trivial File Transfer Protocol (TFTP) is an application layer protocol commonly employed to transfer files between devices. Thanks to its simple design and easy implementation, TFTP was very popular in the past. In the context of today’s sophisticated cyber-threats, however, TFTP is highly insecure. Among the protocol’s numerous weaknesses from a cyber hygiene perspective is its lack of authentication mechanisms, a flaw which allows essentially anyone to read and write resources on the exposed device.

Darktrace’s Compliance / External TFTP model enables network administrators to detect any incoming TFTP connections from external IP addresses that don’t normally connect to the network. Crucially, Darktrace AI’s understanding of what constitutes “normal” versus “abnormal” for each particular network serves to differentiate the most serious threats, as TFTP connections from a rare IP address are much more likely to be malicious than similar connections between known IP addresses on the network.

TFTP is just one example of insecure protocol usage: Darktrace also monitors for the abnormal usage of various other attack-prone protocols, such as Telnet.
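A static approximation of the "external TFTP from an unfamiliar address" check might look like the Python sketch below. It assumes hypothetical flow records of the form `(source IP, destination IP, protocol, destination port)`, an explicit list of internal networks, and a set of external sources already considered familiar. All of these names are illustrative, and a fixed allow-list is a much cruder notion of "normal" than the learned baseline described above.

```python
import ipaddress

TFTP_PORT = 69  # well-known UDP port on which TFTP servers receive initial requests


def is_internal(ip, networks):
    """True if the address falls inside any of the given networks."""
    address = ipaddress.ip_address(ip)
    return any(address in network for network in networks)


def flag_external_tftp(flows, internal_networks, familiar_sources):
    """Return (source, destination) pairs for TFTP requests from unfamiliar external IPs."""
    networks = [ipaddress.ip_network(n) for n in internal_networks]
    alerts = []
    for src, dst, proto, dport in flows:
        if proto != "udp" or dport != TFTP_PORT:
            continue  # not an initial TFTP request
        if is_internal(src, networks) or src in familiar_sources:
            continue  # internal or already-familiar source
        alerts.append((src, dst))
    return alerts


# Hypothetical flows using documentation address ranges for the external hosts.
flows = [
    ("203.0.113.7", "10.0.0.5", "udp", 69),   # unfamiliar external source
    ("10.0.0.8", "10.0.0.5", "udp", 69),      # internal source
    ("198.51.100.2", "10.0.0.5", "udp", 69),  # external but familiar
]
print(flag_external_tftp(flows, ["10.0.0.0/8"], {"198.51.100.2"}))
```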

Issue #10: Unencrypted data transferred between internal and external devices

While encrypting communication can be a hassle, cleartext messages are liable to be intercepted or even altered by malicious actors — with potentially devastating ramifications. Indeed, Darktrace’s Compliance / FTP / Unusual Outbound FTP model has frequently flagged credentials being sent via unencrypted channels, which attackers could have used to access privileged resources within the company’s network.
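To show why cleartext credentials are so easy to intercept, the short Python sketch below scans a raw payload for FTP `USER` and `PASS` commands, which the protocol sends unencrypted. The function name and sample payload are hypothetical, and the sketch reports only which commands were visible rather than the values. It is an illustration of the exposure, not a reconstruction of the Darktrace model.

```python
import re

# FTP login commands appear at the start of a line, followed by their argument.
FTP_CREDENTIAL = re.compile(rb"^(USER|PASS)\s+\S+", re.IGNORECASE | re.MULTILINE)


def visible_ftp_login_commands(payload: bytes) -> list:
    """Return the FTP login commands readable in a payload, with values omitted."""
    return [
        match.group(1).decode("ascii").upper()
        for match in FTP_CREDENTIAL.finditer(payload)
    ]


# Hypothetical capture of an unencrypted FTP control channel.
payload = b"USER alice\r\nPASS hunter2\r\n"
print(visible_ftp_login_commands(payload))
```

Anyone positioned on the network path can run an equally trivial search, which is why credentials should only travel over encrypted channels.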

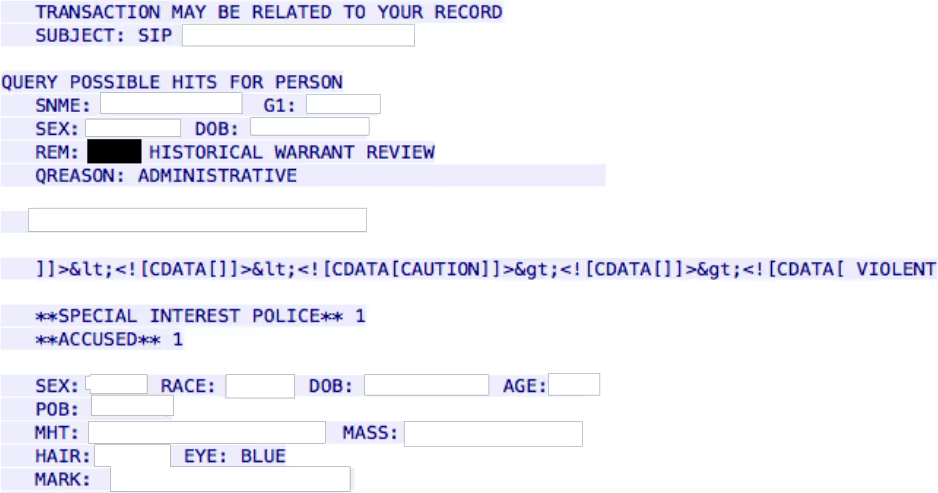

In the first few months of 2019, Darktrace detected an unusual connection made to an external device on port 1414 using the IBM WebSphere MQ Protocol. When potentially sensitive information was transmitted in cleartext, Darktrace AI alerted the customer in real time.

Figure 2: Packet capture showing that potential sensitive information was captured

Sacrificing convenience for security in these most egregious cases remains the foundation of robust cyber hygiene, whether that means not torrenting Shrek 2 on a work laptop or taking inventory of the smart juicer in the office kitchen. Of course, just as no perimeter defenses are formidable enough to keep motivated attackers at bay, so too is there no level of due diligence sufficient to close off all possible attack vectors or ensure that all employees are compliant with internal policies. With cyber AI defenses like Darktrace, security teams have an extra set of eyes watching out for poor cyber hygiene practices across the entire digital infrastructure, empowering them to grow those infrastructures with confidence.
