DEMIST-2 is Darktrace’s latest embedding model, built to interpret and classify security data with precision. It performs highly specialized tasks and can be deployed in any environment. Unlike generative language models, DEMIST-2 focuses on providing reliable, high-accuracy detections for critical security use cases.

DEMIST-2 Core Capabilities:

- Enhances Cyber AI Analyst’s ability to triage and reason about security incidents by providing expert representation and classification of security data as part of our broader multi-layered AI system

- Classifies and interprets security data, in contrast to language models that generate unpredictable open-ended text responses

- Incorporates new innovations in language model development and architecture, optimized specifically for cybersecurity applications

- Deploys across cloud, on-prem, and edge environments, delivering low-latency, high-accuracy inference wherever it runs

Cybersecurity is constantly evolving, but the need to build precise and reliable detections remains constant in the face of new and emerging threats. Darktrace’s Embedding Model for Investigation of Security Threats (DEMIST-2) addresses these critical needs and is designed to create stable, high-fidelity representations of security data while also serving as a powerful classifier. For security teams, this means faster, more accurate threat detection with reduced manual investigation. DEMIST-2's efficiency also reduces the need to invest in massive computational resources, enabling effective protection at scale without added complexity.

As an embedding language model, DEMIST-2 classifies and creates meaning out of complex security data. This equips our Self-Learning AI with the insights to compare, correlate, and reason with consistency and precision. Classifications and embeddings power core capabilities across our products where accuracy is not optional, as a part of our multi-layered approach to AI architecture.

Perhaps most importantly, DEMIST-2 features a compact architecture that delivers analyst-level insights while meeting diverse deployment needs across cloud, on-prem, and edge environments. Trained on a mixture of general and domain-specific data and designed to support task specialization, DEMIST-2 provides privacy-preserving inference anywhere, while outperforming larger general-purpose models in key cybersecurity tasks.

This proprietary language model reflects Darktrace's ongoing commitment to continually innovate our AI solutions to meet the unique challenges of the security industry. We approach AI differently, integrating diverse insights to solve complex cybersecurity problems. DEMIST-2 shows that a refined, optimized, domain-specific language model can deliver outsized results in an efficient package. We are redefining possibilities for cybersecurity, but our methods transfer readily to other domains. We are eager to share our findings to accelerate innovation in the field.

The evolution of DEMIST-2

Key concepts:

- Tokens: The smallest units processed by language models. Text is split into fragments based on frequency patterns, allowing models to handle unfamiliar words efficiently.

- Low-Rank Adaptation (LoRA): A technique that adds small, trainable components (adapters) to a model so that it can specialize in new tasks without retraining the full system. These components learn task-specific behavior while the original foundation model remains unchanged. This approach enables multiple specializations to coexist and work simultaneously without drastically increasing processing and memory requirements.

Darktrace began using large language models in our products in 2022. DEMIST-2 reflects significant advancements in our continuous experimentation and adoption of innovations in the field to address the unique needs of the security industry.

It is important to note that Darktrace uses a range of language models throughout its products, but each one is chosen for the task at hand. Many others in the artificial intelligence (AI) industry are focused on broad application of large language models (LLMs) for open-ended text generation tasks. Our research shows that using LLMs for classification and embedding offers better, more reliable results for core security use cases. We’ve found that using LLMs for open-ended outputs can introduce uncertainty through inaccurate and unreliable responses, which is detrimental in environments where precision matters. Generative AI should not be applied to use cases such as investigation and threat detection, where the results matter deeply. Thoughtful applications of generative AI, such as drafting decoy phishing emails or crafting non-consequential summaries, are helpful but still require careful oversight.

Data is perhaps the most important factor for building language models. The data used to train DEMIST-2 balanced the need for general language understanding with security expertise. We used both publicly available and proprietary datasets. Our proprietary dataset included privacy-preserving data such as URIs observed in customer alerts, anonymized at source to remove PII and gathered via the Call Home and aianalyst.darktrace.com services. For additional details, read our Technical Paper.

DEMIST-2 is our way of addressing the unique challenges posed by security data. It recognizes that security data follows its own patterns that are distinct from natural language. For example, hostnames, HTTP headers, and certificate fields often appear in predictable ways, but not necessarily in a way that mirrors natural language. General-purpose LLMs tend to break down when used in these types of highly specialized domains. They struggle to interpret structure and context, fragmenting important patterns during tokenization in ways that can have a negative impact on performance.

DEMIST-2 was built to understand the language and structure of security data using a custom tokenizer built around a security-specific vocabulary of over 16,000 words. This tokenizer allows the model to more accurately process inputs such as encoded payloads, file paths, subdomain chains, and command-line arguments, which general-purpose models often misinterpret.

When the tokenizer encounters unfamiliar or irregular input, it breaks the data into smaller pieces so it can still be processed. The ability to fall back to individual bytes is critical in cybersecurity contexts where novel or obfuscated content is common. This approach combines precision with flexibility, supporting specialized understanding with resilience in the face of unpredictable data.
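To make the fallback behavior concrete, here is a minimal sketch of a tokenizer that prefers known vocabulary entries and drops to individual bytes for anything it does not recognize. It is an illustration only, not the DEMIST-2 tokenizer: the vocabulary `TOY_VOCAB`, the function `tokenize`, and the `<0xNN>` byte-token format are all assumptions chosen for this example, and greedy longest-match is used here as a simplification of frequency-based splitting.

```python
# Hypothetical toy vocabulary for illustration; the real DEMIST-2
# vocabulary (over 16,000 entries) is not reproduced here.
TOY_VOCAB = ["powershell", ".exe", "-enc", "cmd", "http", "://", "www.", ".com", "/", " "]


def tokenize(text, vocab=TOY_VOCAB):
    """Greedy longest-match tokenization with a byte-level fallback.

    Known fragments become single tokens. Any character that no
    vocabulary entry covers is emitted as one token per UTF-8 byte,
    so every input can still be represented.
    """
    by_length = sorted(vocab, key=len, reverse=True)  # try longest entries first
    tokens = []
    i = 0
    while i < len(text):
        match = next((v for v in by_length if text.startswith(v, i)), None)
        if match is not None:
            tokens.append(match)
            i += len(match)
        else:
            # Fallback: encode the unknown character as raw bytes.
            tokens.extend(f"<0x{b:02X}>" for b in text[i].encode("utf-8"))
            i += 1
    return tokens
```

With this toy vocabulary, `tokenize("powershell.exe -enc")` yields `["powershell", ".exe", " ", "-enc"]`, while an unrecognized string such as `"zz"` yields the byte tokens `["<0x7A>", "<0x7A>"]`. Nothing is dropped or mapped to a generic "unknown" token, which is the property that matters for novel or obfuscated input.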

Along with our custom tokenizer, we made changes to support task specialization without increasing model size. To do this, DEMIST-2 uses LoRA, a technique that integrates lightweight components with the base model to allow it to perform specific tasks while keeping memory requirements low. By using LoRA, our proprietary representation of security knowledge can be shared and reused as a starting point for more highly specialized models. For example, understanding hostnames takes a different type of specialization than understanding sensitive filenames. DEMIST-2 adapts dynamically to each of these needs.

The result is that DEMIST-2 is like having a room of specialists working on difficult problems together, while sharing a basic core set of knowledge that does not need to be repeated or reintroduced to every situation. Sharing a consistent base model also improves its maintainability and allows efficient deployment across diverse environments without compromising speed or accuracy.
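The "room of specialists" idea can be sketched in a few lines. The example below is a simplified illustration of the general LoRA technique on a single linear layer, not Darktrace's implementation: the class `LoRALinear`, the task names, and the dimensions are assumptions for this example. It follows the common LoRA convention of starting the second adapter matrix at zero, so a new adapter initially leaves the base model's output unchanged.

```python
import random


def matvec(m, v):
    """Multiply matrix m (a list of rows) by vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def random_matrix(rows, cols, rng, scale=0.1):
    """Small random matrix, standing in for trained weights."""
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]


class LoRALinear:
    """One frozen base weight W shared by several low-rank task adapters.

    For a task with adapter (A, B), the output is W x + B (A x), where
    A is rank x d_in and B is d_out x rank. W is never modified.
    """

    def __init__(self, d_in, d_out, rank, seed=0):
        self.d_in, self.d_out, self.rank = d_in, d_out, rank
        self.rng = random.Random(seed)
        self.W = random_matrix(d_out, d_in, self.rng)  # shared base knowledge
        self.adapters = {}

    def add_adapter(self, task):
        """Register a new specialization (hypothetical task name)."""
        A = random_matrix(self.rank, self.d_in, self.rng)
        B = [[0.0] * self.rank for _ in range(self.d_out)]  # zero init: no-op at start
        self.adapters[task] = (A, B)

    def forward(self, x, task=None):
        """Apply the base layer, plus the chosen task's low-rank update."""
        y = matvec(self.W, x)
        if task is not None:
            A, B = self.adapters[task]
            delta = matvec(B, matvec(A, x))
            y = [yi + di for yi, di in zip(y, delta)]
        return y

    def parameter_counts(self):
        """Return (base parameters, parameters per adapter)."""
        return self.d_in * self.d_out, self.rank * (self.d_in + self.d_out)
```

In this sketch, a 64 x 64 layer with rank 4 has 4,096 base parameters but only 512 per adapter, so adding a "hostname" specialist and a "filename" specialist costs a fraction of duplicating the layer. Each call selects one adapter by name, and the shared weights stay identical across tasks.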

Tokenization and task specialization represent only a portion of the updates we have made to our embedding model. In conjunction with the changes described above, DEMIST-2 integrates several updated modeling techniques that reduce latency and improve detections. To learn more about these details, our training data and methods, and a full write-up of our results, please read our scientific whitepaper.

DEMIST-2 in action

In this section, we highlight DEMIST-2's embeddings and performance. First, we show a visualization of how DEMIST-2 classifies and interprets hostnames, and second, we present its performance in a hostname classification task in comparison to other language models.

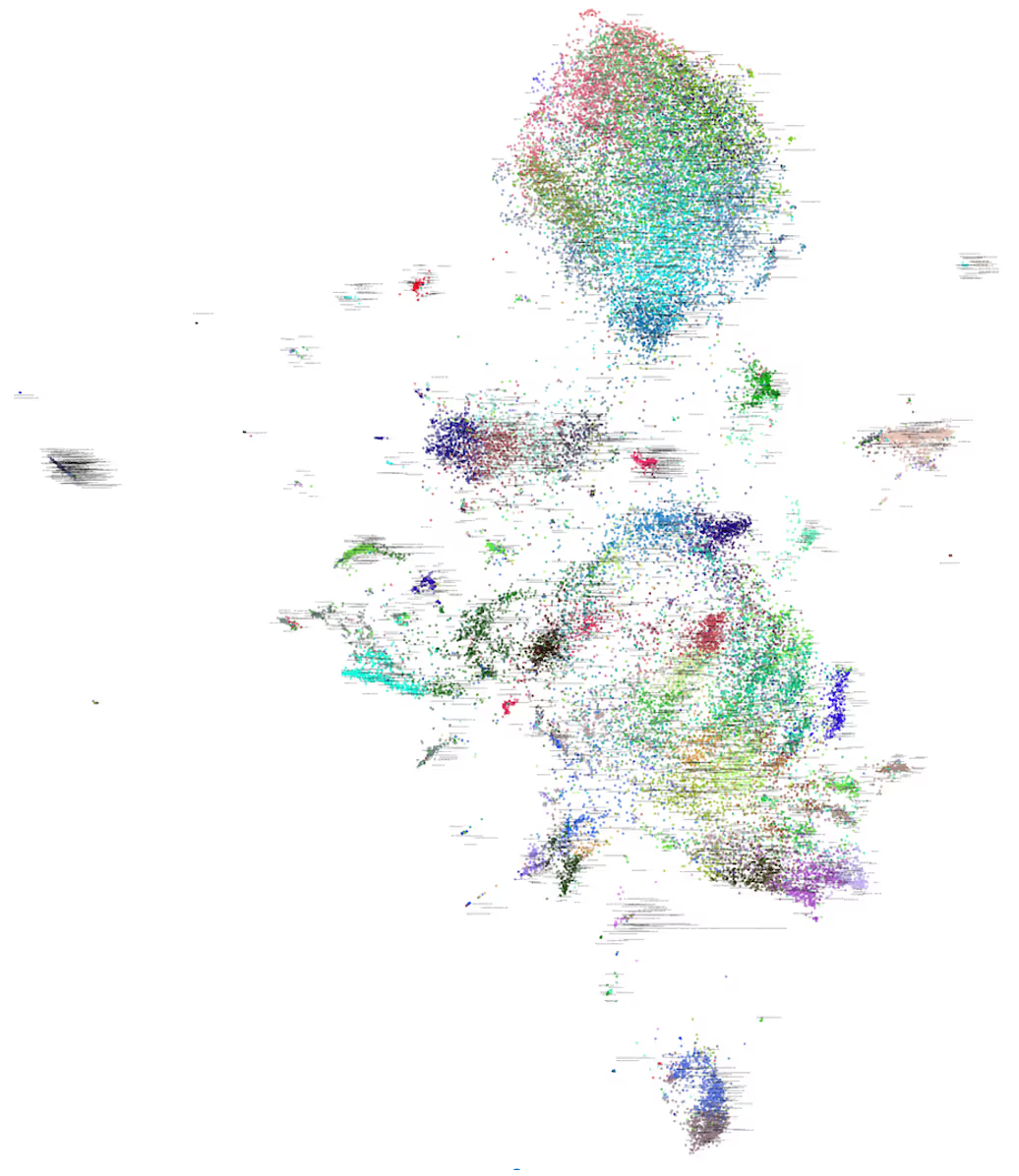

Embeddings can often feel abstract, so let’s make them real. Figure 1 is a 2D visualization of how DEMIST-2 classifies and understands hostnames. In reality, these hostnames exist across many more dimensions, capturing details like their relationships with other hostnames, usage patterns, and contextual data. The colors and positions in the diagram represent a simplified view of how DEMIST-2 organizes and interprets these hostnames, providing insights into their meaning and connections. Just as an experienced human analyst can quickly identify and group hostnames based on patterns and context, DEMIST-2 does the same at scale.

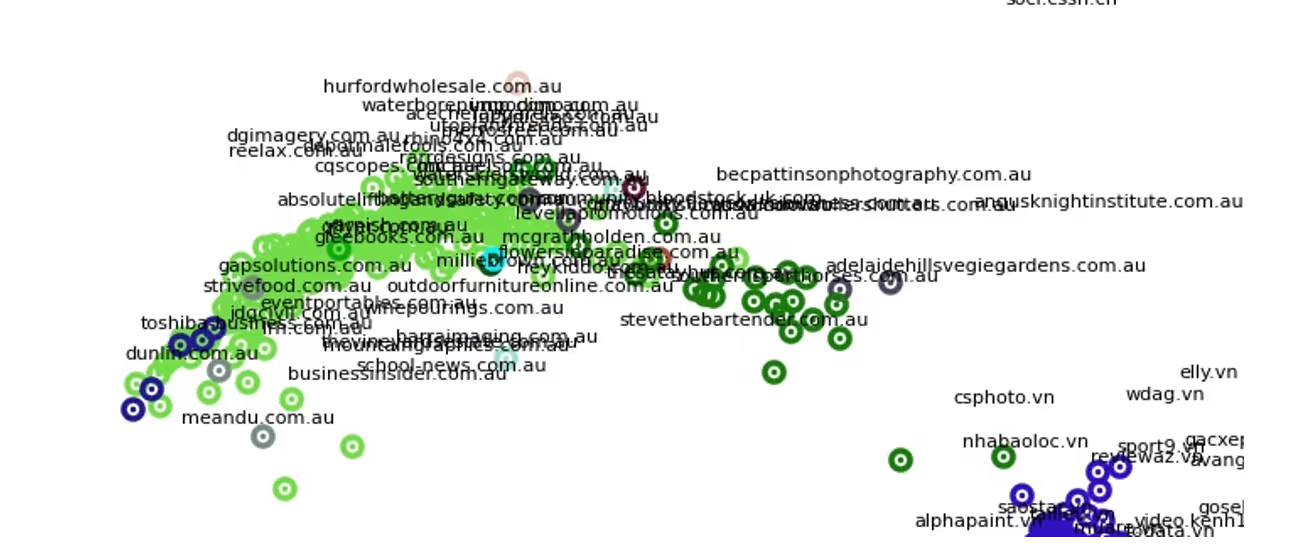

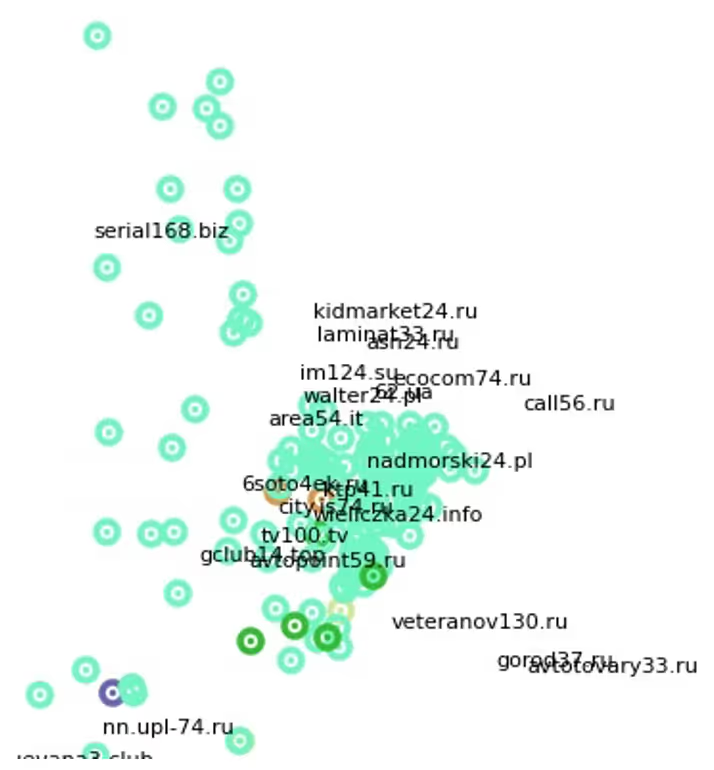

Next, let’s zoom in on two distinct clusters that DEMIST-2 recognizes. One cluster represents small businesses (Figure 2) and the other, Russian and Polish sites with similar numerical formats (Figure 3). These clusters demonstrate how DEMIST-2 can identify specific groupings based on real-world attributes, such as regional patterns in website structures and common formats used by small businesses, as well as its understanding of how websites relate to each other on the internet.
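For readers new to embeddings, the mechanics of "similar items sit close together" can be shown with a deliberately crude stand-in. The sketch below does not use DEMIST-2: `toy_embed` is a hypothetical function that represents a hostname as counts of its character trigrams, and the hostnames use reserved example domains. A learned embedding captures far richer context, but the comparison step, cosine similarity between vectors, works the same way.

```python
import math
from collections import Counter


def toy_embed(hostname, n=3):
    """Stand-in embedding: sparse counts of character n-grams.

    This is NOT DEMIST-2. It only gives us vectors to compare.
    """
    padded = f"^{hostname.lower()}$"  # mark the start and end of the name
    return Counter(padded[i:i + n] for i in range(len(padded) - n + 1))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def nearest(query, candidates):
    """Return the candidate hostname whose vector is closest to the query."""
    q = toy_embed(query)
    return max(candidates, key=lambda h: cosine(q, toy_embed(h)))
```

Here `nearest("mail.example.com", ["smtp.example.com", "x9q7z.invalid"])` returns `"smtp.example.com"`, because the two `example.com` names share most of their trigrams while the third shares none. Clustering and classification build on exactly this kind of distance comparison, applied to much more informative vectors.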

The previous figures provided a view of how DEMIST-2 works. Figure 4 highlights DEMIST-2’s performance in a security-related classification task. The chart shows how DEMIST-2, with just 95 million parameters, achieves nearly 94% accuracy, making it the highest-performing model in the chart despite being the smallest. In comparison, a larger model with 278 million parameters achieves only about 89% accuracy, showing that a bigger model does not always perform better. For many security-related tasks, DEMIST-2 outperforms much larger models.

With these examples of DEMIST-2 in action, we’ve shown how it excels in embedding and classifying security data while delivering high performance on specialized security tasks.

The DEMIST-2 advantage

DEMIST-2 was built for precision and reliability. Our primary goal was to create a high-performance model capable of tackling complex cybersecurity tasks. Optimizing for efficiency and scalability came second, but it is a natural outcome of our commitment to building a strong, effective solution that is available to security teams working across diverse environments. It is an enormous benefit that DEMIST-2 is orders of magnitude smaller than many general-purpose models. Much more importantly, however, it significantly outperforms those models in capability and accuracy on security tasks.

Finding a product that fits into an environment’s unique constraints used to mean that some teams had to settle for less powerful or less performant products. With DEMIST-2, data can remain local to the environment and entirely separate from the data of other customers, and the model can even operate in environments without network connectivity. The size of our model allows for flexible deployment options while at the same time providing measurable performance advantages for security-related tasks.

As security threats continue to evolve, we believe that purpose-built AI systems like DEMIST-2 will be essential tools for defenders, combining the power of modern language modeling with the specificity and reliability that build trust and partnership between security practitioners and AI systems.

Conclusion

DEMIST-2 has additional architectural and deployment updates that improve performance and stability. These innovations contribute to our ability to minimize model size and memory constraints and reflect our dedication to meeting the data handling and privacy needs of security environments. In addition, these choices reflect our dedication to responsible AI practices.

DEMIST-2 is available in Darktrace 6.3, along with a new DIGEST model that uses GNNs and RNNs to score and prioritize threats with expert-level precision.

Want more details?

Read the full research paper to explore how DEMIST-2 was built, trained, and optimized to meet the unique challenges of cybersecurity.
