DIGEST advances how Cyber AI Analyst scores and prioritizes incidents. Trained on over a million anonymized incident graphs, our model brings deeper context to severity scoring by analyzing how threats are structured and how they evolve. DIGEST assesses threats as an expert, before damage is done. For more details beyond this overview, please read our Technical Research Paper.

Darktrace combines machine learning (ML) and artificial intelligence (AI) techniques in a multi-layered, multi-method approach. The result is an AI system that continuously ingests data from across an organization’s environment, learns from it, and adapts in real time. DIGEST adds a new layer to this system, specifically to our Cyber AI Analyst, the first and most experienced AI Analyst in cybersecurity. This layer is dedicated to refining how incidents are scored and prioritized, so your team can focus first on what matters most.

To build DIGEST, we combined Graph Neural Networks (GNNs) to interpret incident structure with Recurrent Neural Networks (RNNs) to analyze how incidents evolve over time. This pairing allows DIGEST to reliably determine the potential severity of an incident even at an early stage, giving the Cyber AI Analyst a critical edge in identifying high-risk threats early and in recognizing when activity is unlikely to escalate.

DIGEST works locally in real time, regardless of whether your Darktrace deployment is on-premises or in the cloud, without requiring data to be sent externally for decisions to be made. It was built to support teams in all environments, including those with strict data controls and limited connectivity.

Our approach to AI is unique, drawing inspiration from multiple disciplines to tackle the toughest cybersecurity challenges. DIGEST demonstrates how a novel application of GNNs and RNNs improves the prioritization and triage of security incidents. By blending interdisciplinary expertise with innovative AI techniques, we are able to push the boundaries of what’s possible and deliver it where it is needed most. We are eager to share our findings to accelerate progress throughout the broader field of AI development.

DIGEST: Pattern, progression, and prioritization

Most security incidents start quietly. A device contacts an unusual domain. Credentials are used at unexpected hours. File access patterns shift. The fundamental challenge is not always detecting these anomalies but knowing what to address first. DIGEST gives us this capability.

To understand DIGEST, it helps to start with Cyber AI Analyst, a critical component of our Self-Learning AI system and a front-line triage partner in security investigations. It combines supervised and unsupervised machine learning (ML) techniques, natural language processing (NLP), and graph-based reasoning to investigate and summarize security incidents.

DIGEST was built as an additional layer of analysis within Cyber AI Analyst. It enhances its capabilities by refining how incidents are scored and prioritized, helping teams focus on what matters most more quickly. For a general view of the ML and AI methods that power Darktrace products, read our AI Arsenal whitepaper. This paper provides insights regarding the various approaches we use to detect, investigate, and prioritize threats.

Cyber AI Analyst is constantly investigating alerts and produces millions of critical incidents every year. The dynamic graphs produced by Cyber AI Analyst investigations represent an abstract understanding of security incidents that is fully anonymized and privacy preserving. This allowed us to use the Call Home and aianalyst.darktrace.com services to produce a dataset comprising the broad structure of millions of incidents that Cyber AI Analyst detected on customer deployments, without containing any sensitive data. (Read our technical research paper for more details about our dataset.)

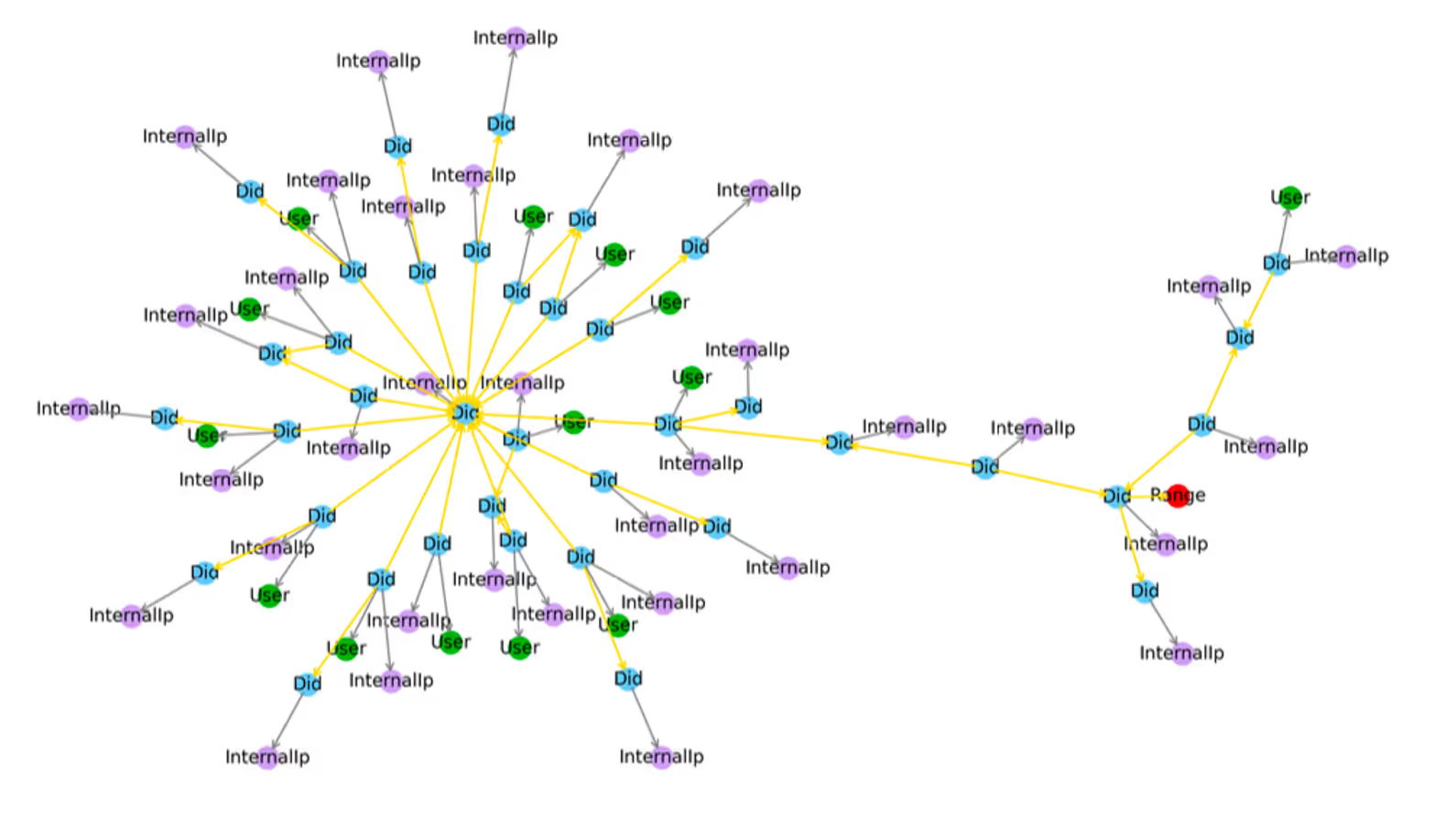

The dynamic graphs from Cyber AI Analyst capture the structure of security incidents where nodes represent entities like users, devices or resources, and edges represent the multitude of relationships between them. As new activity is observed, the graph expands, capturing the progression of incidents over time. Our dataset contained everything from benign administrative behavior to full-scale ransomware attacks.
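To make the idea of a dynamic incident graph concrete, here is a minimal sketch in Python. It is an illustration only and not Darktrace's data model: the `IncidentGraph` class, the relation names, and the entity identifiers are all hypothetical, chosen to mirror the description above (entities as nodes, relationships as edges, and a graph that grows as activity is observed).

```python
from dataclasses import dataclass, field


@dataclass
class IncidentGraph:
    """Toy dynamic graph: nodes are entities, edges are typed relationships."""
    nodes: dict = field(default_factory=dict)   # node id -> entity kind
    edges: list = field(default_factory=list)   # (hour, source, relation, target)

    def observe(self, hour, source, relation, target, kinds):
        """Record one observed relationship, adding any entities not yet seen."""
        for node_id in (source, target):
            self.nodes.setdefault(node_id, kinds[node_id])
        self.edges.append((hour, source, relation, target))

    def snapshot(self, hour):
        """Return the edges observed up to and including `hour`."""
        return [e for e in self.edges if e[0] <= hour]


# Hypothetical entities and relations, for illustration only.
kinds = {
    "laptop-1": "device",
    "alice": "user",
    "fileshare": "resource",
    "rare-domain.example": "resource",
}
graph = IncidentGraph()
graph.observe(0, "laptop-1", "connected_to", "rare-domain.example", kinds)
graph.observe(1, "alice", "logged_in_on", "laptop-1", kinds)
graph.observe(2, "laptop-1", "read_from", "fileshare", kinds)

for hour in range(3):
    print(hour, len(graph.snapshot(hour)), "edges")
```

Each call to `snapshot` returns the incident as it looked at a given hour, so a sequence of snapshots captures how the incident progressed.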

Unique data, unmatched insights

Key terms

Graph Neural Networks (GNNs): A type of neural network designed to analyze and interpret data structured as graphs, capturing relationships between nodes.

Recurrent Neural Networks (RNNs): A type of neural network designed to model sequences where the order of events matters, like how activity unfolds in a security incident.

The Cyber AI Analyst dataset used to train DIGEST reflects over a decade of work in AI paired with unmatched expertise in cybersecurity. Prior to training DIGEST on our incident graph dataset, we performed rigorous data preprocessing to remove issues such as duplicate or ill-formed incidents. Additionally, to validate DIGEST’s outputs, expert security analysts assessed and verified the model’s scoring.
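As a rough illustration of this kind of preprocessing, the Python sketch below filters a list of toy incident graphs. The rules are assumptions made for the example, not the criteria used for DIGEST: an incident is treated as ill-formed if it has no edges or if an edge points at an unknown node, and as a duplicate if its node and edge sets exactly match an incident already kept. The function names and dictionary layout are hypothetical.

```python
def is_well_formed(incident):
    """Assumed rule: at least one edge, and every edge endpoint is a known node."""
    nodes = set(incident["nodes"])
    edges = incident["edges"]
    return bool(edges) and all(s in nodes and t in nodes for s, _, t in edges)


def canonical_key(incident):
    """Order-independent fingerprint used to spot exact structural duplicates."""
    return (frozenset(incident["nodes"]), frozenset(incident["edges"]))


def clean(incidents):
    """Keep the first copy of each well-formed incident, in input order."""
    seen, kept = set(), []
    for incident in incidents:
        if not is_well_formed(incident):
            continue
        key = canonical_key(incident)
        if key in seen:
            continue
        seen.add(key)
        kept.append(incident)
    return kept


raw = [
    {"nodes": ["a", "b"], "edges": [("a", "connects", "b")]},
    {"nodes": ["b", "a"], "edges": [("a", "connects", "b")]},  # duplicate of the first
    {"nodes": ["a"], "edges": [("a", "connects", "z")]},       # edge to unknown node
    {"nodes": ["a"], "edges": []},                              # no edges at all
]
cleaned = clean(raw)
print(len(cleaned), "incident(s) kept out of", len(raw))
```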

Transforming data into insights requires using the right strategies and techniques. Given the graphical nature of Cyber AI Analyst incident data, we used GNNs and RNNs to train DIGEST to understand incidents and how they are likely to change over time. Change does not always mean escalation. DIGEST’s enhanced scoring also keeps potentially legitimate or low-severity activity from being prioritized over threats that are more likely to get worse. At the beginning, all incidents might look the same to a person. To DIGEST, each one looks like the beginning of a pattern.

As a result, DIGEST enhances our understanding of security incidents by evaluating the structure of the incident, probable next steps in an incident’s trajectory, and how likely it is to grow into a larger event.
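The general pattern of pairing a graph step with a recurrent step can be sketched in a few lines of plain Python. This is a toy under stated assumptions and not DIGEST's architecture: the two node features (an "anomalous" flag and a "privileged" flag), the single round of neighbor averaging, the elementwise recurrent update, and the hand-picked, untrained weights are all hypothetical. It shows only how a structural summary of each snapshot can feed a state that carries forward through time.

```python
import math


def message_pass(features, edges):
    """One GNN-style round: each node averages itself with its neighbours."""
    neighbours = {n: [n] for n in features}
    for a, b in edges:
        neighbours[a].append(b)
        neighbours[b].append(a)
    return {
        n: [sum(features[m][i] for m in ns) / len(ns) for i in range(len(features[n]))]
        for n, ns in neighbours.items()
    }


def readout(features):
    """Mean-pool node vectors into a single graph embedding."""
    dim = len(next(iter(features.values())))
    return [sum(v[i] for v in features.values()) / len(features) for i in range(dim)]


def rnn_step(hidden, x, w_in, w_rec):
    """Simple recurrent update, elementwise: h' = tanh(w_in * x + w_rec * h)."""
    return [math.tanh(w_in * xi + w_rec * hi) for xi, hi in zip(x, hidden)]


def score_sequence(snapshots, w_in=1.0, w_rec=0.5, w_out=2.0):
    """Score each snapshot in order; the weights are hand-picked, not trained."""
    hidden, scores = None, []
    for features, edges in snapshots:
        emb = readout(message_pass(features, edges))
        hidden = rnn_step(hidden or [0.0] * len(emb), emb, w_in, w_rec)
        scores.append(1 / (1 + math.exp(-w_out * sum(hidden))))
    return scores


# Hypothetical features per node: [anomalous flag, privileged flag].
hour_0 = ({"laptop": [1.0, 0.0], "domain": [0.0, 0.0]},
          [("laptop", "domain")])
hour_1 = ({"laptop": [1.0, 0.0], "domain": [0.0, 0.0],
           "admin": [1.0, 1.0], "server": [1.0, 1.0]},
          [("laptop", "domain"), ("admin", "laptop"), ("admin", "server")])

scores = score_sequence([hour_0, hour_1])
print([round(s, 3) for s in scores])
```

In this toy run the score rises at the second snapshot because the graph has grown to include privileged, anomalous entities and the recurrent state remembers the earlier activity. A production model would learn its weights from data rather than use fixed values.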

To illustrate these capabilities in action, we are sharing two examples of DIGEST’s scoring adjustments from use cases within our customers’ environments.

First, Figure 1 shows the graphical representation of a ransomware attack, and Figure 2 shows how DIGEST scored the progression of that attack. At hour two, DIGEST’s score escalated to 95%, well before any data encryption was observed. This means that prior to seeing malicious encryption behaviors, DIGEST understood the structure of the incident and flagged these early activities as high-likelihood precursors to a severe event. Early detection, especially when flagged prior to malicious encryption behaviors, gives security teams a valuable head start and can minimize the overall impact of the threat. Darktrace Autonomous Response can also be enabled by Cyber AI Analyst to initiate an immediate action to stop the progression, allowing the human security team time to investigate and implement next steps.

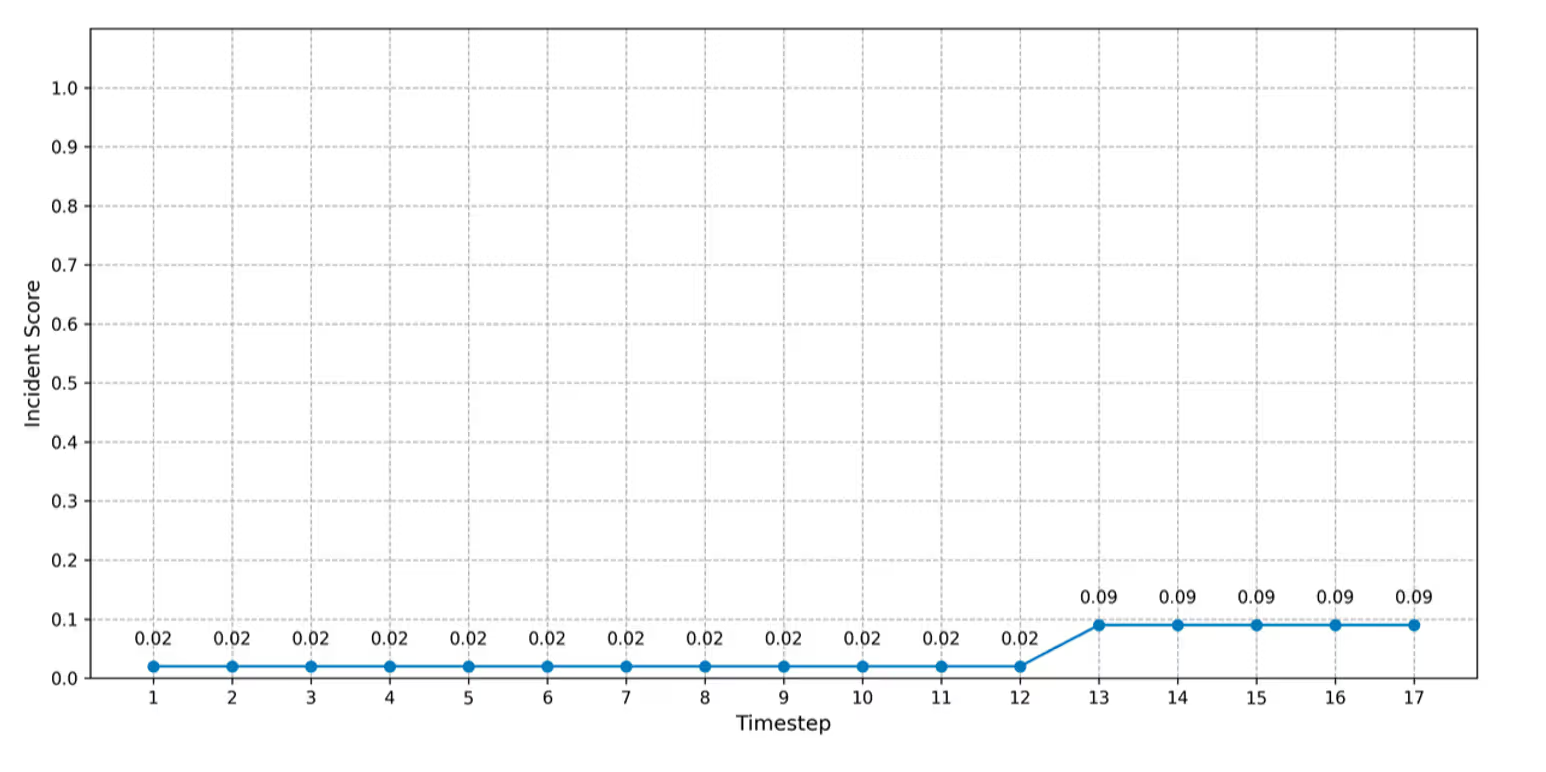

In contrast, our second example, shown in Figure 3 and Figure 4, illustrates how DIGEST’s analysis of an incident can help teams avoid wasting time on lower-risk scenarios. In this instance, Figure 3 illustrates a graph of unusual administrative activity, where we observed connections to a large group of devices. However, the incident score remained low because DIGEST determined that high-risk malicious activity was unlikely. This determination was based on what DIGEST observed in the incident's structure, what it assessed as the probable next steps in the incident lifecycle, and how likely it was to grow into a larger adverse event.

These examples show the value of enhanced scoring. DIGEST helps teams act sooner on the threats that count and spend less time chasing the ones that do not.

The next phase of advanced detection is here

Darktrace understands what incidents look like. We have seen, investigated, and learned from them at scale, including over 90 million investigations in 2024. With DIGEST, we can share our deep understanding of incidents and their behaviors with you and triage these incidents using Cyber AI Analyst.

Our ability to innovate in this space is grounded in the maturity of our team and in more than a decade of experience building AI solutions for cybersecurity. This experience, along with our deep understanding of our data, techniques, and strategic layering of AI/ML components, has shaped every one of our steps forward.

With DIGEST, we are entering a new phase, with another line of defense that helps teams prioritize and reason over incidents and threats far earlier in an incident’s lifecycle. DIGEST understands your incidents when they start, making it easier for your team to act quickly and confidently.

DIGEST is available in Darktrace 6.3, along with a new embedding model – DEMIST-2 – designed to provide reliable, high-accuracy detections for critical security use cases.

Want to learn more?

If you are curious about the details of DIGEST’s dataset, model design, training, experiments, and model deployment, read our technical brief.
