Introduction

Last month, Darktrace identified an advanced malware infection on a customer’s device. The malware used a sophisticated Command & Control (C2) channel to communicate with the attacker, who had put considerable effort into engineering the channel to stay covert for months.

The malware used rotating domains generated by Domain Generation Algorithms (DGAs). It also sent HTTP POST requests to malicious IP addresses while placing reputable domain names in the Host header of those requests, in order to blend in with normal web browsing. In effect, the attacker tried to make the C2 communication look like a user browsing the well-known car rental website sixt.com and the luxury watch manufacturer breitling.com. Without using blacklists or signatures, Darktrace instantly identified this anomalous behavior, and as a result, the security team immediately isolated the infected device.

Beaconing to DGA websites

A laptop appeared on the network and made anomalous HTTP requests. The initial HTTP requests were made to the DGA domain tequbvchrjar[.]com on IP address 66.220.23[.]114. Over the next two days, the laptop made several hundred HTTP POST requests to this domain, jckdxdvvm[.]com or cqyegwug[.]com, all hosted on 66.220.23[.]114. Darktrace identified this behavior as beaconing – repeated connections often used in C2 communication – to DGA domains.
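Beaconing is often recognizable from connection timing alone. As a minimal sketch, and explicitly a simple fixed-threshold heuristic rather than Darktrace’s unsupervised approach, the coefficient of variation of the gaps between connections to one destination can separate timer-driven traffic from human browsing. The function names, the 10-event minimum, the 0.2 threshold and the sample timestamps are all illustrative assumptions.

```python
from statistics import mean, pstdev


def inter_arrival_times(timestamps):
    """Gaps in seconds between consecutive timestamps, after sorting."""
    ts = sorted(timestamps)
    return [later - earlier for earlier, later in zip(ts, ts[1:])]


def looks_like_beaconing(timestamps, min_events=10, max_cv=0.2):
    """Heuristic: True if connections to one destination are near-periodic.

    Uses the coefficient of variation (population stdev / mean) of the gaps,
    which stays close to 0 for timer-driven traffic.
    """
    if len(timestamps) < min_events:
        return False
    gaps = inter_arrival_times(timestamps)
    avg = mean(gaps)
    if avg == 0:
        return False
    return pstdev(gaps) / avg <= max_cv


# Hypothetical series: a connection roughly every 300 s vs. bursty browsing.
timer_driven = [i * 300 + (i % 3) for i in range(20)]
bursty = [0, 5, 7, 200, 210, 1000, 1003, 1500, 3000, 3001, 3600, 5000]
print(looks_like_beaconing(timer_driven), looks_like_beaconing(bursty))  # True False
```

Real implants often add random jitter to their sleep interval, so a practical check would tolerate more variation or look for a dominant period instead of near-identical gaps.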

What made this even more suspicious was that the POST requests used five different Internet Explorer User Agents. This was unusual for the laptop, on which Darktrace had previously observed only Google Chrome User Agents. Darktrace’s unsupervised machine learning flagged the User Agents as new for the device and, in conjunction with the DGA domains, as unusual activity.
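Spotting a User Agent that is new for a particular device needs only a per-device record of what has been seen before. The sketch below assumes HTTP logs already reduced to (device, User-Agent) pairs; the class, its methods and the example User-Agent strings are hypothetical, and a real baseline would also need a learning period and some way of aging out old entries.

```python
from collections import defaultdict


class UserAgentBaseline:
    """Per-device record of previously observed User-Agent strings."""

    def __init__(self):
        # device identifier -> set of User-Agent strings seen from it
        self._seen = defaultdict(set)

    def learn(self, device, user_agent):
        """Add an observation to the device's baseline."""
        self._seen[device].add(user_agent)

    def new_user_agents(self, device, user_agents):
        """Return the User-Agents never seen before from this device."""
        return set(user_agents) - self._seen[device]


# Illustrative strings only, not the ones observed in this incident.
CHROME_UA = ("Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
             "(KHTML, like Gecko) Chrome/70.0.3538.77 Safari/537.36")
IE11_UA = "Mozilla/5.0 (Windows NT 10.0; WOW64; Trident/7.0; rv:11.0) like Gecko"

baseline = UserAgentBaseline()
baseline.learn("laptop-1", CHROME_UA)
print(baseline.new_user_agents("laptop-1", [CHROME_UA, IE11_UA]))
```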

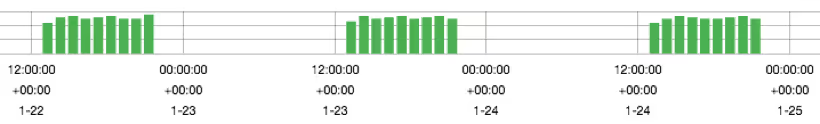

The beaconing followed a steady pattern during afternoon to evening hours when the laptop was being used. This is visualized in the following graph over several days:

Malicious beaconing to reputable domains

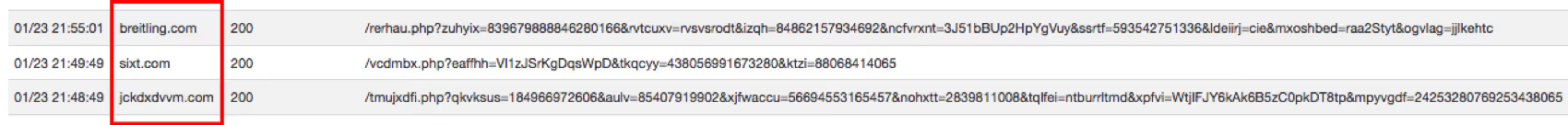

In addition to beaconing to the DGA domains, the device made several hundred HTTP POST requests using the hostnames sixt.com and breitling.com. Both domains are well known, and there is no public record of either having been compromised. The POST requests were made without any prior GET requests and continued for several days – highly unusual behavior that does not resemble a user browsing those websites.
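The absence of prior GET requests can be checked per device by walking its requests in time order. This is a minimal sketch assuming (timestamp, method, host) tuples for a single device; the function name and sample data are hypothetical. Because many legitimate API clients also POST without a preceding GET, the check is only meaningful alongside other signals.

```python
def posts_without_prior_get(requests):
    """Return hosts that received a POST before any GET from this device.

    requests: iterable of (timestamp, method, host) tuples for one device,
    in any order; they are sorted by timestamp here.
    """
    seen_get = set()
    flagged = set()
    for _, method, host in sorted(requests, key=lambda r: r[0]):
        method = method.upper()
        if method == "GET":
            seen_get.add(host)
        elif method == "POST" and host not in seen_get:
            flagged.add(host)
    return flagged


sample = [
    (1, "GET", "example.com"),
    (2, "POST", "example.com"),
    (3, "POST", "sixt.com"),
    (4, "POST", "breitling.com"),
    (5, "GET", "sixt.com"),
]
print(sorted(posts_without_prior_get(sample)))  # ['breitling.com', 'sixt.com']
```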

On closer inspection, it became clear that the malware did indeed use the hostnames sixt.com and breitling.com in its HTTP requests, but it sent those requests to IP addresses owned by the attacker rather than to the IP addresses that sixt.com and breitling.com resolve to on non-infected devices.
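This kind of mismatch can be surfaced by comparing each request’s Host value with the addresses DNS has returned for that name on the network. The sketch assumes two hypothetical inputs: HTTP records as (host, destination IP) pairs, and a map from hostname to previously resolved IPs. The addresses are placeholders from the RFC 5737 documentation ranges, not the real addresses of sixt.com, breitling.com or the attacker.

```python
def host_ip_mismatches(http_requests, dns_answers):
    """Return (host, dst_ip) pairs sent to an IP that DNS never returned
    for that Host value. Hosts absent from dns_answers are skipped."""
    mismatches = []
    for host, dst_ip in http_requests:
        expected = dns_answers.get(host.lower())
        if expected is not None and dst_ip not in expected:
            mismatches.append((host, dst_ip))
    return mismatches


# Placeholder data from documentation address ranges.
resolved = {"sixt.com": {"192.0.2.10"}, "breitling.com": {"192.0.2.20"}}
observed_http = [
    ("sixt.com", "192.0.2.10"),       # matches what DNS returned
    ("sixt.com", "203.0.113.5"),      # same Host, unexpected destination
    ("breitling.com", "203.0.113.6"),
]
print(host_ip_mismatches(observed_http, resolved))
```

Large sites served through CDNs resolve to many, frequently changing addresses, so the resolution history would need to be broad to keep false positives down.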

The requests for sixt.com were sent to 184.105.76[.]250, while the requests for breitling.com were sent to 64.71.188[.]178. Both IP addresses, as well as the one hosting the DGA domains, are in the same ASN, AS6939 (Hurricane Electric), which made this behavior even more suspicious: it is unlikely that all of these hosts would end up in the same ASN by chance.
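Grouping destinations by ASN makes this kind of clustering visible. The sketch below does a longest-prefix match against a small, hypothetical prefix-to-ASN table; in practice that table would come from BGP or IP-to-ASN data. The prefixes are RFC 5737 documentation ranges and the ASNs come from the RFC 5398 documentation range, standing in for real routing data.

```python
import ipaddress
from collections import defaultdict


def asn_for(ip, prefix_table):
    """Return the ASN of the most specific prefix containing ip, or None."""
    addr = ipaddress.ip_address(ip)
    best_net, best_asn = None, None
    for net, asn in prefix_table.items():
        if addr in net and (best_net is None or net.prefixlen > best_net.prefixlen):
            best_net, best_asn = net, asn
    return best_asn


def hosts_by_asn(connections, prefix_table):
    """Group the hostnames in (host, ip) pairs by the ASN of the IP."""
    groups = defaultdict(set)
    for host, ip in connections:
        groups[asn_for(ip, prefix_table)].add(host)
    return dict(groups)


# Hypothetical routing data: documentation prefixes and ASNs.
PREFIXES = {
    ipaddress.ip_network("198.51.100.0/24"): 64500,
    ipaddress.ip_network("203.0.113.0/24"): 64500,
    ipaddress.ip_network("192.0.2.0/24"): 64501,
}

observed = [
    ("dga-domain.example", "203.0.113.5"),
    ("sixt.com", "198.51.100.7"),
    ("breitling.com", "203.0.113.9"),
    ("unrelated.example", "192.0.2.1"),
]
print(hosts_by_asn(observed, PREFIXES))
```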

The malware authors beaconed to well-known hostnames to circumvent reputation-based security controls and domain-based filters such as domain blacklists, and to divert the attention of security analysts investigating the beaconing. After all, on the surface the behavior looked like a user browsing rental cars and luxury watches.

Further rapid investigation

Darktrace quickly revealed more details about the C2 communication. All requests were made to suspicious-looking PHP endpoints and returned HTTP status code 200 (‘OK’) in every case. The following shows an example of requests to three domains.

Darktrace instantly alerted on this as anomalous behavior:

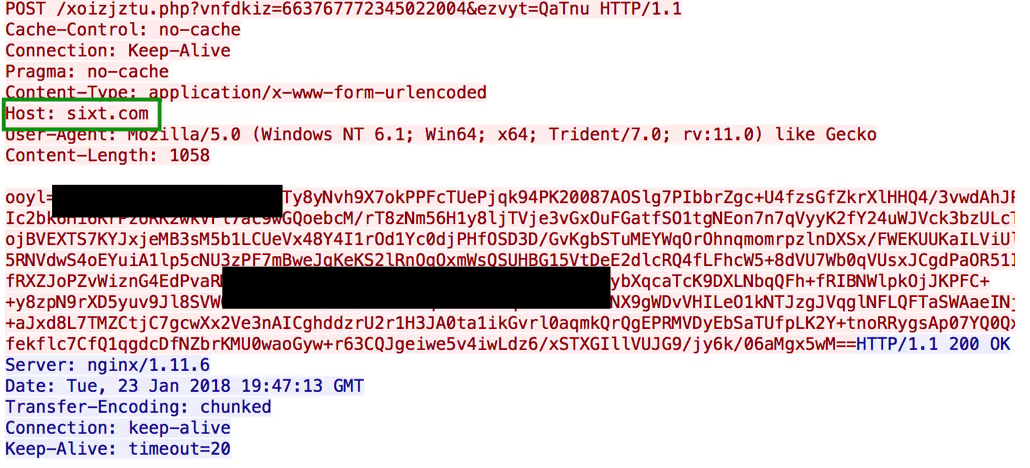

A PCAP was downloaded directly from the Darktrace interface to inspect the suspicious C2 traffic.

The actual POST data appears to be encoded. An encoded POST body sent with a Content-Type of ‘application/x-www-form-urlencoded’ is commonly seen in malware communication.
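One rough way to put a number on “appears to be encoded” is the character entropy of each form field. The sketch parses an application/x-www-form-urlencoded body with Python’s `urllib.parse.parse_qsl` and reports bits per character for each field; the function names and sample bodies are hypothetical. A string of n characters can score at most log2(n) bits, so short values are hard to judge this way.

```python
import math
from collections import Counter
from urllib.parse import parse_qsl


def shannon_entropy(text):
    """Shannon entropy of text in bits per character (0.0 if empty)."""
    if not text:
        return 0.0
    n = len(text)
    # Sum of p * log2(1/p) over the character frequencies.
    return sum((c / n) * math.log2(n / c) for c in Counter(text).values())


def form_field_entropy(body):
    """Map each field in a form-urlencoded body to its value's entropy.

    parse_qsl undoes percent-encoding and '+'-as-space; if a field name
    repeats, the last value wins.
    """
    return {name: shannon_entropy(value)
            for name, value in parse_qsl(body, keep_blank_values=True)}


print(form_field_entropy("user=alice&lang=en&blank="))
```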

Actively developed malware strain

It appears that this malware strain is under active development.

Open source research suggests that malware with similar behavior has been in circulation since at least the end of 2016. Some sources have attributed the observed executables to the Razy and Nymaim malware families. However, little research on these strains exists, and both are generic in nature. Below are two samples from 2016:

Sample 1: [reverse.it]

Sample 2: [hybrid-analysis.com]

These samples likely represent a prior version of the malware identified by Darktrace. The 2016 version also communicated with sixt.com and breitling.com, and additionally made HTTP requests to carvezine.com and sievecnda.com. No DGA domains were observed in the 2016 version.

The PHP endpoints in the URI have also changed. In the 2016 version, the endpoints always ended in ‘/[DGA-string]/index.php’. Because C2 traffic is often sent to ‘index.php’ endpoints, defenders started monitoring for ‘index.php’ as a static URI Indicator of Compromise (IoC). The malware authors know this too and have adapted their C2 communication accordingly: as shown in the screenshots above, the PHP endpoint now takes the form ‘[DGA-string].php’. This further shows that legacy controls – such as static monitoring for quickly outdated Indicators of Compromise – do not scale in today’s threat landscape.
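The difference between the two URI formats shows why a static ‘index.php’ check stops firing. The sketch below contrasts that check with shape-based patterns for both formats. It assumes the new-style endpoint sits at the root of the path and that the random string is alphanumeric; the pattern names and example paths are invented for illustration, not taken from the capture.

```python
import re

# 2016 format: /<random string>/index.php
OLD_STYLE = re.compile(r"^/[a-z0-9]+/index\.php$", re.IGNORECASE)
# Newer format: /<random string>.php (assumed to sit at the path root)
NEW_STYLE = re.compile(r"^/[a-z0-9]+\.php$", re.IGNORECASE)


def static_ioc_hit(path):
    """The static IoC check: does the URI path end in /index.php?"""
    return path.lower().endswith("/index.php")


for path in ("/qzkwhvbt/index.php", "/qzkwhvbt.php"):
    print(path, static_ioc_hit(path),
          bool(OLD_STYLE.match(path)), bool(NEW_STYLE.match(path)))
# /qzkwhvbt/index.php True True False
# /qzkwhvbt.php False False True
```

Shape patterns like these also match plenty of legitimate paths (‘/index.php’ itself matches `NEW_STYLE`), so on their own they are no more durable than the static IoC they replace.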

Conclusion

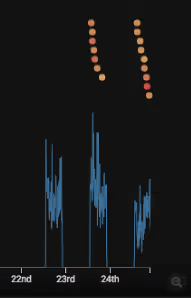

Although the malware authors intended for their implant to stay covert and defeat common security controls, Darktrace instantly alerted on the anomalous behavior. Darktrace’s detections could not have been clearer. The following graphic shows a part of the communication exhibited by the infected device around the time of the infection. Blue lines represent outgoing connections from the device. Every colored dot represents a high-level Darktrace alert:

Using no blacklists or signatures, Darktrace detected this highly anomalous malware behavior instantly. A piece of malware that was meant to stay covert for months was quickly identified using anomaly detection on network data.

Indicators of Compromise:

tequbvchrjar[.]com

jckdxdvvm[.]com

cqyegwug[.]com

66.220.23[.]114

64.71.188[.]178

184.105.76[.]250