Familiarity with AI has risen sharply since 2024, when 26% of participants were either only vaguely familiar with generative AI or hadn’t heard of it. Now most are using it in their cybersecurity stacks.

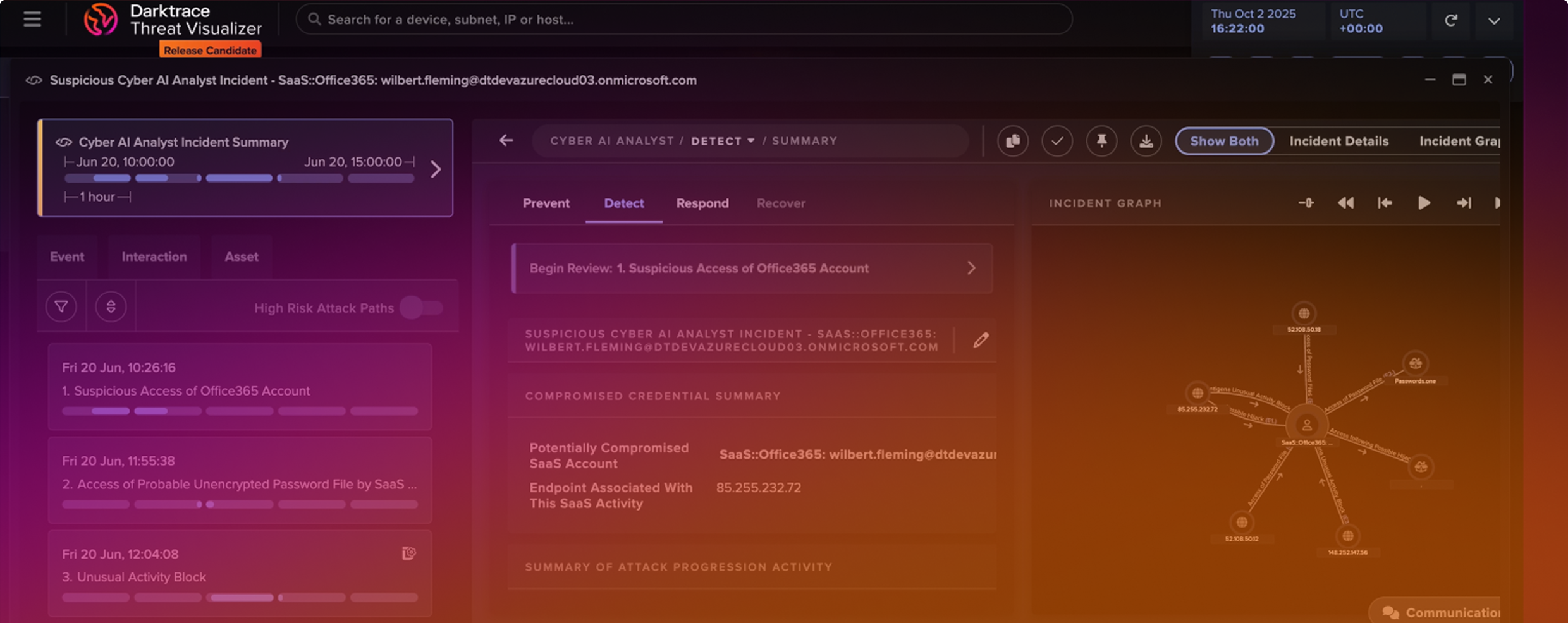

But the AI spectrum extends well beyond LLMs, from NLP and GANs to unsupervised machine learning. Each has its own specific use cases, strengths and limitations. Darktrace has broken these down for defenders in our whitepaper, The AI Arsenal: Understanding the Tools Shaping Cybersecurity.