Cloud platforms transform the way we build digital infrastructure, allowing us to create incredibly innovative environments for business – but often, it’s at the cost of visibility and control.

With complex hybrid and multi-cloud infrastructures becoming an essential part of increasingly diverse digital estates, the journey to the cloud has fundamentally reshaped the traditional paradigm of the network perimeter, while expanding the attack surface at an alarming rate. Meanwhile, traditional security controls still only offer point solutions that rely on retrospective rules and threat signatures and fail to stop novel and advanced attacks.

To shoulder the weight of shared responsibility for cloud security, organizations require the approach offered by Darktrace DETECT & RESPOND. With Self-Learning AI, DETECT continuously learns what normal ‘patterns of life’ look like for every user, device, virtual machine, and container across an organization. By actively developing a bespoke understanding of ‘self,’ DETECT can identify the subtle anomalies that point to an advanced attack, without any pre-defined assumptions of ‘good’ or ‘bad.’ RESPOND can then autonomously intervene to stop emerging threats without disrupting business operations.

As more and more businesses turn to AWS to leverage the benefits of cloud infrastructure, gaining visibility and security for AWS-hosted data and applications is absolutely crucial. The advent of AWS VPC traffic mirroring has allowed Darktrace to shine a light on blind spots in our customers’ AWS environments, ensuring that our Cyber AI security platform can stop any type of threat that emerges. With AI-powered security protecting your AWS environment, you can embrace all the benefits of the cloud with confidence.

Self-learning Cyber AI with granular, real-time visibility

VPC traffic mirroring gives our Self-Learning AI access to granular packet data, allowing DETECT to extract hundreds of features from the raw data and build rich behavioral models for our customers’ AWS cloud environments. This real-time visibility into the underlying fabric of AWS environments helps Darktrace Cyber AI learn ‘on the job,’ continuously adapting as your business evolves. Darktrace provides the only security solution that learns in real time, a critical feature given the speed and scale of development in the cloud.

Unified control: Correlating patterns across infrastructure

Taking a fundamentally unique approach, DETECT actively correlates activity across AWS and beyond – whether your digital ecosystem includes other cloud environments, SaaS applications, or any range of on- and off-premise infrastructure. From a threat detection perspective, this is crucial, as security events detected in one part of an organization are often part of a broader security incident. This ensures that threats in the cloud are not siloed from monitoring of the rest of the infrastructure, nor are the implications for cloud security ignored when intrusions occur elsewhere in the network.

Neutralizing sophisticated and novel attacks

Legacy security controls miss novel and advanced attacks targeting cloud infrastructure. With VPC traffic mirroring supporting Darktrace Cyber AI’s understanding of an organization’s AWS environment, any slight deviation from normal behavior that may indicate a potential threat can be detected immediately. This allows DETECT to catch the full range of cloud-based attacks, from zero-day malware to stealthy insider threats.
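
To make the idea of a deviation from normal behavior concrete, here is a minimal sketch that learns a running baseline for one metric per entity and scores new observations against it. It is purely illustrative and is not Darktrace's model, which is not described in this article; the class name, the `score_then_learn` helper, and the choice of a simple standard-deviation score are all assumptions.

```python
import math
from collections import defaultdict


class RunningBaseline:
    """Online mean and variance (Welford's algorithm) for one metric of one entity."""

    def __init__(self) -> None:
        self.count = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, value: float) -> None:
        """Fold a new observation into the learned baseline."""
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self._m2 += delta * (value - self.mean)

    def deviation(self, value: float) -> float:
        """Return how many sample standard deviations `value` sits from the mean."""
        if self.count < 2:
            return 0.0  # not enough history to judge
        std = math.sqrt(self._m2 / (self.count - 1))
        if std == 0.0:
            return 0.0 if value == self.mean else math.inf
        return abs(value - self.mean) / std


# One baseline per entity (hypothetical keys such as "vm-1").
baselines = defaultdict(RunningBaseline)


def score_then_learn(entity: str, value: float) -> float:
    """Score the observation against the current baseline, then learn from it."""
    baseline = baselines[entity]
    score = baseline.deviation(value)
    baseline.update(value)
    return score
```

Scoring before learning means a new observation is always judged against what was normal up to that point, with no fixed threshold of ‘good’ or ‘bad’ defined in advance.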

“Darktrace represents a new frontier in AI-based cyber defense. Our team now has complete real-time coverage across our SaaS applications and cloud containers.”

— CIO, City of Las Vegas

How it works: Using VPC traffic mirroring to analyze AWS traffic

For customers leveraging AWS within an IaaS model, Darktrace uses VPC traffic mirroring to collect metadata from mirrored VPC packets in a Darktrace probe known as a ‘vSensor’. The vSensor captures real-time traffic and selectively forwards relevant metadata to a Darktrace cloud instance or on-premise probe. From here, DETECT correlates VPC traffic with cloud, email, network, and SaaS traffic across a customer’s hybrid and multi-cloud infrastructure for analysis.
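
As a rough illustration of the AWS side of this setup, the sketch below builds the parameters for the EC2 `CreateTrafficMirrorSession` API, one session per source network interface, all pointing at a single mirror target that fronts a sensor. How Darktrace itself provisions mirroring is not described here, so treat this as an assumption-laden example: the helper names and all resource IDs are hypothetical, and `ec2_client` is assumed to be a boto3 EC2 client created elsewhere.

```python
from typing import Any, Dict, Iterable, List


def build_mirror_sessions(
    source_eni_ids: Iterable[str],
    target_id: str,
    filter_id: str,
    session_number: int = 1,
) -> List[Dict[str, Any]]:
    """Build one CreateTrafficMirrorSession request per source network interface."""
    return [
        {
            "NetworkInterfaceId": eni_id,        # interface whose traffic is copied
            "TrafficMirrorTargetId": target_id,  # where mirrored packets are sent
            "TrafficMirrorFilterId": filter_id,  # which traffic is mirrored
            "SessionNumber": session_number,     # priority among sessions on one source
            "Description": f"Mirror {eni_id} to sensor target",
        }
        for eni_id in source_eni_ids
    ]


def apply_mirror_sessions(ec2_client: Any, requests: List[Dict[str, Any]]) -> List[str]:
    """Send each request through the client and return the created session IDs."""
    session_ids = []
    for request in requests:
        response = ec2_client.create_traffic_mirror_session(**request)
        session_ids.append(response["TrafficMirrorSession"]["TrafficMirrorSessionId"])
    return session_ids
```

Mirrored packets are delivered VXLAN-encapsulated over UDP port 4789, so whatever receives them needs to accept that traffic.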

By utilizing VPC traffic mirroring in this way, the Immune System can perform deep packet inspection on traffic in the customer’s AWS cloud environment, up to and including the application layer. Hundreds of features are extracted from the raw data, ranging from high-level metrics of data flow quantities to peer relationship metadata and specific application layer events. These features allow Darktrace Cyber AI to build rich behavioral models that let it understand normal patterns of life for the organization and detect malicious activity. It is important that Darktrace is able to construct these metrics from the raw data rather than relying on flow logs alone, as flow logs don't provide the required level of granularity or real-time events within connections.
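
The three kinds of features named above (data flow quantities, peer relationships, and application layer events) can be sketched as a simple per-host aggregation. This is a toy example and not the actual feature set, which is not published here; the `PacketMeta` record, its fields, and the event labels are hypothetical.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass, field
from typing import Dict, Iterable, Optional, Set


@dataclass(frozen=True)
class PacketMeta:
    """Hypothetical metadata record derived from one mirrored packet."""
    src: str
    dst: str
    dst_port: int
    size_bytes: int
    app_event: Optional[str] = None  # e.g. "dns_query"; None if no event was parsed


@dataclass
class HostFeatures:
    bytes_sent: int = 0                                   # data flow quantity
    packets_sent: int = 0
    peers: Set[str] = field(default_factory=set)          # peer relationships
    dst_ports: Set[int] = field(default_factory=set)
    app_events: Counter = field(default_factory=Counter)  # application layer events


def extract_features(packets: Iterable[PacketMeta]) -> Dict[str, HostFeatures]:
    """Aggregate packet metadata into one feature record per source host."""
    features: Dict[str, HostFeatures] = defaultdict(HostFeatures)
    for pkt in packets:
        host = features[pkt.src]
        host.bytes_sent += pkt.size_bytes
        host.packets_sent += 1
        host.peers.add(pkt.dst)
        host.dst_ports.add(pkt.dst_port)
        if pkt.app_event is not None:
            host.app_events[pkt.app_event] += 1
    return dict(features)
```

Flow logs alone could supply the byte counts and peers in this sketch, but not the per-packet application events, which is the granularity argument made above.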

For non-Nitro AWS instances, we deploy lightweight agents known as ‘OS-Sensors’ that feed relevant traffic to a local vSensor and, in turn, to a Darktrace cloud instance or on-premise probe. Once configured, OS-Sensors can easily be scaled as new instances are spun up. Darktrace also offers a specialized OS-Sensor that provides coverage in containerized systems like Docker and Kubernetes.

Richer context with AWS CloudTrail logs

In addition to analyzing data with VPC traffic mirroring, DETECT also monitors management and data events within AWS. It does so via HTTP requests for log files generated by AWS CloudTrail, which monitors events from all AWS services, including:

- EC2

- IAM

- S3

- VPC

- Lambda

Different event types produced via CloudTrail are organized by Darktrace into categories based on the action type and the AWS services that generate it. These different categories show up as metrics in the DETECT user interface, the Threat Visualizer. This information is used to provide even richer context in connection with mirrored traffic in VPCs, as well as all cloud, network, email, and SaaS traffic across a customer’s entire digital environment.
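
As a hedged illustration of grouping events by service and action type, the sketch below reads a CloudTrail log file (a JSON document with a top-level `Records` array) and counts records per category. The `eventSource` and `eventName` fields are standard CloudTrail record fields; the prefix-to-action mapping and the category names are assumptions made for this example and are not Darktrace's actual categories.

```python
import json
from collections import Counter
from typing import Any, Dict, Tuple

# Hypothetical mapping from eventName prefixes to coarse action types.
ACTION_PREFIXES = (
    ("Create", "create"),
    ("Run", "create"),
    ("Put", "modify"),
    ("Update", "modify"),
    ("Modify", "modify"),
    ("Delete", "delete"),
    ("Terminate", "delete"),
    ("Get", "read"),
    ("List", "read"),
    ("Describe", "read"),
)


def categorize(record: Dict[str, Any]) -> Tuple[str, str]:
    """Map one CloudTrail record to a (service, action type) category."""
    # eventSource looks like "ec2.amazonaws.com"; keep only the service part.
    service = record.get("eventSource", "unknown").split(".")[0]
    name = record.get("eventName", "")
    for prefix, action in ACTION_PREFIXES:
        if name.startswith(prefix):
            return service, action
    return service, "other"


def count_categories(log_text: str) -> Counter:
    """Count records per category in one CloudTrail log file's JSON text."""
    records = json.loads(log_text).get("Records", [])
    return Counter(categorize(record) for record in records)
```

Counts like these, tracked over time, are the kind of per-category metric that could sit alongside mirrored VPC traffic when building context around an event.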

Darktrace deployment scenarios for AWS customers

For IaaS environments, Darktrace deploys a vSensor in each cloud environment. Within AWS environments, the vSensor captures real-time traffic with AWS VPC traffic mirroring. The receiving vSensor processes the data and feeds it back to the cloud-based Darktrace instance. AWS customers additionally have the option of deploying a ‘Darktrace Security Module’ to monitor IaaS management and data events at the API level, such as logins, editing virtual servers, or creating new access credentials.

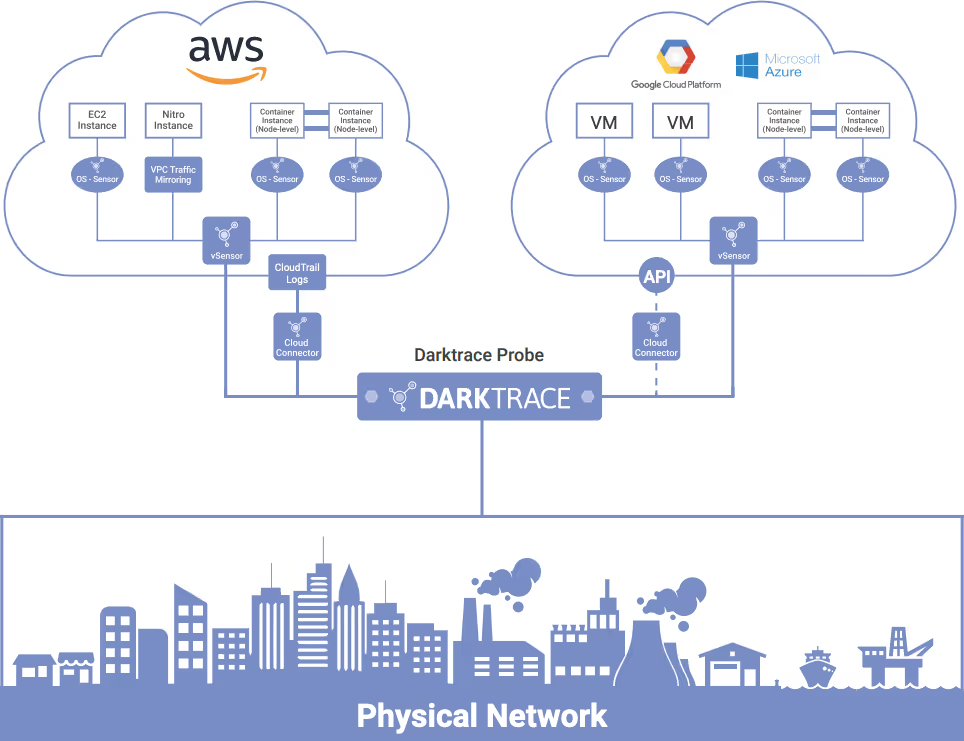

Figure 1: A cloud-only deployment scenario — Darktrace manages a master cloud probe which receives traffic from sensors and connectors in IaaS and/or SaaS environments.

For hybrid IaaS deployments, Darktrace will similarly deploy vSensors, and OS-Sensors as appropriate. Cloud traffic and event data from AWS and any other cloud environments is then fed to a Darktrace probe in the cloud or on-premise network. For the latter scenario, Darktrace will deploy a physical appliance that ingests real-time network traffic via a SPAN port or network tap, allowing it to correlate patterns across the entire digital ecosystem.

Figure 2: A hybrid cloud deployment scenario, with multi-cloud infrastructure across AWS, Azure and GCP

For hybrid SaaS deployments, Darktrace will deploy provider-specific Darktrace Security Modules on either a physical or cloud-based Darktrace probe, in addition to any other relevant vSensors and OS-Sensors in place. SaaS data is then analyzed and correlated with traffic and user behaviors across AWS, other cloud environments, and any on- and off-premise cyber-physical infrastructure.

Defense against the full range of threats in the cloud

With the deep insight and powerful reaction capabilities of Cyber AI, Darktrace DETECT & RESPOND are the only proven technologies to stop the full range of cyber-threats in the cloud, including:

- Critical misconfigurations

- Insider threat

- Compromised credentials

- Novel and advanced malware

- Password brute-force attacks

- Data exfiltration

- Lateral movement

- Man-in-the-middle attacks

- Crypto-jacking

- Violations of policy

Case Studies

Crypto mining malware inadvertently installed

Darktrace detected a mistake made by a junior DevOps engineer at a multinational organization that ran workloads across AWS and Azure and used containerized systems like Docker and Kubernetes. The engineer accidentally downloaded an update that included a crypto miner, which led to an infection across multiple cloud production systems.

After the initial infection, the malware started beaconing out to an external command and control server, which was immediately picked up by Darktrace. With the external connection established and the attack mission instructions delivered, the crypto malware infection was then able to rapidly spread across the organization’s expansive cloud infrastructure at machine speed, infecting 20 cloud servers in under 15 seconds.
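
Beaconing of this kind tends to show up as connections to the same destination at suspiciously regular intervals. The sketch below scores that regularity for one source and destination pair. It is a generic illustration and not the detection logic used in this incident; the function name, the `min_events` cutoff, and the scoring formula are assumptions.

```python
import statistics
from typing import Sequence


def beacon_score(timestamps: Sequence[float], min_events: int = 5) -> float:
    """Return a 0..1 regularity score for connection times (in seconds).

    Values near 1 mean the gaps between connections are almost constant,
    which is typical of automated beaconing rather than human activity.
    """
    if len(timestamps) < min_events:
        return 0.0  # too few connections to say anything
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    mean_gap = statistics.fmean(gaps)
    if mean_gap <= 0:
        return 0.0
    # Coefficient of variation: spread of the gaps relative to their mean.
    variation = statistics.pstdev(gaps) / mean_gap
    return max(0.0, 1.0 - variation)
```

A connection every 60 seconds scores 1.0 here, while bursty, irregular traffic scores close to 0. A real system would need to tolerate jitter that malware adds deliberately.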

Extensive visibility into the organization’s AWS environment via VPC traffic mirroring was a key factor allowing Darktrace Cyber AI to identify the scale of the attack. With the dynamic and unified view across the company’s sprawling hybrid and multi-cloud infrastructure provided by Darktrace, the company’s security team was able to contain the attack within minutes, rather than hours or days. Even though the attack moved at machine speed, by leveraging solutions like VPC traffic mirroring to continuously analyze behavior in the cloud, Darktrace caught the threat at an early enough stage – well before the costs could start to mount.

Developer misuse of AWS cloud infrastructure

At an insurance group, a DevOps Engineer was attempting to build a parallel back-up infrastructure within AWS to replicate the organization’s data center production systems. The technical implementation was perfect, and the back-up systems were created – however, the cost of running the system would have been several million dollars per year.

The DevOps Engineer was unaware of the costs associated with the project and kept management in the dark. The cloud infrastructure was launched, and the costs started rising. Yet with real-time access to the company’s AWS environment provided by VPC traffic mirroring, Darktrace’s Cyber AI immediately flagged this unusual behavior, allowing the security team to take prompt preventative action.

With Darktrace Cyber AI, embrace the benefits of AWS

As organizations increasingly turn to the cloud and the threat surface continues to expand, security teams need self-learning AI on their side to gain the strongest insights, illuminate every blind spot, and stop all attacks.

By providing an enterprise-wide Cyber AI platform, Darktrace helps teams overcome the traditional security challenge of manually piecing together incidents across disparate corners of an organization. The unified visibility and control offered by Darktrace PREVENT, DETECT, RESPOND, & HEAL reduces the complexity and dashboard fatigue that many teams continue to struggle with, while the system’s multi-dimensional insight enhances its decision-making and threat confidence. Darktrace further augments this process with the Immune System’s AI Analyst capability, which takes the additional step of automatically investigating threats detected by Darktrace and producing concise, AI-generated reports that communicate the full scope of an incident.

With the granular, real-time visibility that VPC traffic mirroring gives Darktrace, you can be certain your AWS cloud environments are always protected.
