What is SEO poisoning?

Search Engine Optimization (SEO) is the legitimate marketing technique of improving the visibility of websites in organic search engine results. Businesses, publishers, and organizations use SEO to ensure their content is easily discoverable by users. Techniques may include optimizing keywords, creating backlinks, or even ensuring mobile compatibility.

SEO poisoning occurs when attackers use these same techniques for malicious purposes. Instead of improving the visibility of legitimate content, threat actors use SEO to push harmful or deceptive websites to the top of search results. This method exploits the common assumption that top-ranking results are trustworthy, leading users to click on URLs without carefully inspecting them.

As part of SEO poisoning, the attacker first registers typosquatted domains: slightly misspelled or otherwise deceptive versions of real software sites, such as putty[.]run or puttyy[.]org. These sites are optimized to rank highly when users search for download links. To achieve that, threat actors may embed pages with strategically chosen, high-value keywords or replicate content from reputable sources to elevate the domain’s perceived authority in search engine algorithms [4]. In more advanced operations, these tactics are reinforced with paid promotion, such as Google ads, enabling malicious domains to appear above organic search results as sponsored links. This placement not only accelerates visibility but also imparts an unwarranted sense of legitimacy to unsuspecting users.
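The lookalike naming described above can be screened for with a simple edit-distance check. The following is a minimal sketch rather than a production detector: the function names, the `allowlist` parameter, and the distance threshold of 1 are all illustrative assumptions, and a real deployment would need an allowlist of the genuine project sites to avoid flagging them.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance, computed one row at a time."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


def looks_like_typosquat(domain: str, brand: str = "putty",
                         allowlist=frozenset(), max_distance: int = 1) -> bool:
    """Flag domains with a label (or hyphen-separated token) close to `brand`.

    `allowlist` is a hypothetical set of known-good domains supplied by the caller.
    """
    host = domain.lower().replace("[.]", ".")  # accept defanged input
    if host in allowlist:
        return False
    labels = host.split(".")
    for label in labels[:-1]:  # skip the top-level domain
        for token in label.split("-"):
            if edit_distance(token, brand) <= max_distance:
                return True
    return False


if __name__ == "__main__":
    for candidate in ("putty[.]run", "puttyy[.]org", "example[.]com"):
        print(candidate, looks_like_typosquat(candidate))
```

A check like this only narrows the candidate set; it says nothing about whether a flagged domain is actually malicious.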

Once a user lands on one of these fake pages, they are presented with what looks like a legitimate software download option. Upon clicking the download link, the user is redirected to a separate domain that actually hosts the payload. This hosting domain is usually unrelated to the software it purports to deliver. These third-party sites can be recently registered domains but may also include legitimate websites that have been recently compromised. By hosting malware on a variety of infrastructure, attackers can keep their distribution channels available for longer before the malicious files are taken down.

What is the Oyster backdoor?

Oyster, also known as Broomstick or CleanUpLoader, is a C++ based backdoor malware first identified in July 2023. It enables remote access to infected systems, offering features such as command-line interaction and file transfers.

Oyster has been widely adopted by various threat actors, often as an entry point for ransomware attacks. Notable examples include Vanilla Tempest and Rhysida ransomware groups, both of which have been observed leveraging the Oyster backdoor to enhance their attack capabilities. Vanilla Tempest is known for using Oyster’s stealth persistence to maintain long-term access within targeted networks, often aligning their operations with ransomware deployment [5]. Rhysida has taken this further by deploying Oyster as an initial access tool in ransomware campaigns, using it to conduct reconnaissance and move laterally before executing encryption activities [6].

Once installed, the backdoor gathers basic system information before communicating with a command-and-control (C2) server. The malware largely relies on a ‘cmd.exe’ instance to execute commands and launch other files [1].

In previous SEO poisoning cases, the file downloaded from the fake pages was not just PuTTY, but a trojanized version that included the stealthy Oyster backdoor. PuTTY is a free and open-source terminal emulator for Windows that allows users to connect to remote servers and devices using protocols like SSH and Telnet. In the recent campaign, once a user visits the fake software download site, ranked highly through SEO poisoning, the malicious payload is downloaded through direct user interaction and subsequently installed on the local device, initiating the compromise. The malware then performs two actions simultaneously: it installs a fully functional version of PuTTY to avoid user suspicion, while silently deploying the Oyster backdoor. Because PuTTY is used predominantly by IT administrators with highly privileged accounts rather than by standard business users, the lure possibly narrows the scope of targets.

Oyster’s persistence mechanism involves creating a Windows Scheduled Task that runs every few minutes. Notably, the infection uses Dynamic Link Library (DLL) side loading, where a malicious DLL, often named ‘twain_96.dll’, is executed via the legitimate Windows utility ‘rundll32.exe’, which is commonly used to run DLLs [2]. This technique is frequently used by malicious actors to blend their activity with normal system operations.
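As a rough illustration of how this persistence pattern might be hunted for, the sketch below inspects a command line (for example, the action of a scheduled task) and flags `rundll32` invocations that load a DLL with a known-bad name. The function name and the sample command lines are hypothetical; only the DLL name ‘twain_96.dll’ and the use of ‘rundll32.exe’ come from the description above.

```python
import re

# DLL name reported above; extend with other names as they are observed.
KNOWN_DLL_NAMES = {"twain_96.dll"}

# Captures a bare DLL file name, ignoring any directory path, quotes, or export suffix.
_DLL_NAME = re.compile(r'([^\\/\s",]+\.dll)', re.IGNORECASE)


def flags_rundll32_dll(command_line: str, dll_names=KNOWN_DLL_NAMES) -> bool:
    """Return True if the command runs rundll32 against a DLL name in `dll_names`."""
    if "rundll32" not in command_line.lower():
        return False
    found = {name.lower() for name in _DLL_NAME.findall(command_line)}
    return bool(found & {name.lower() for name in dll_names})


if __name__ == "__main__":
    # Hypothetical task actions, for illustration only.
    samples = [
        r'rundll32.exe "C:\Users\Public\twain_96.dll",Test',
        r"rundll32.exe shell32.dll,Control_RunDLL",
    ]
    for sample in samples:
        print(flags_rundll32_dll(sample), sample)
```

Matching on file name alone is easy for an attacker to evade, so a check like this is best treated as one signal among several.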

Darktrace’s Coverage of the Oyster Backdoor

In June 2025, security analysts at Darktrace identified a campaign leveraging search engine manipulation to deliver malware masquerading as the popular SSH client, PuTTY. Darktrace / NETWORK’s anomaly-based detection identified signs of malicious activity, and when properly configured, its Autonomous Response capability swiftly shut down the threat before it could escalate into a more disruptive attack. Subsequent analysis by Darktrace’s Threat Research team revealed that the payload was a variant of the Oyster backdoor.

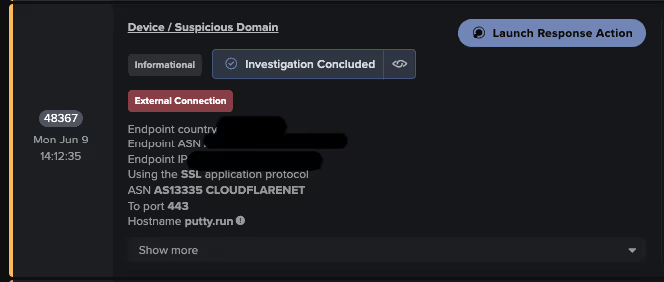

The first indicators of an emerging Oyster SEO campaign typically appeared when user devices navigated to a typosquatted domain, such as putty[.]run or putty-app[.]naymin[.]com, via a TLS/SSL connection.

The device would then initiate a connection to a secondary domain that hosts the malicious installer, likely triggered by user interaction with redirect elements on the landing page. This secondary site may not have any immediate connection to PuTTY itself but is instead a hijacked blog, a file-sharing service, or a legitimate-looking content delivery subdomain.

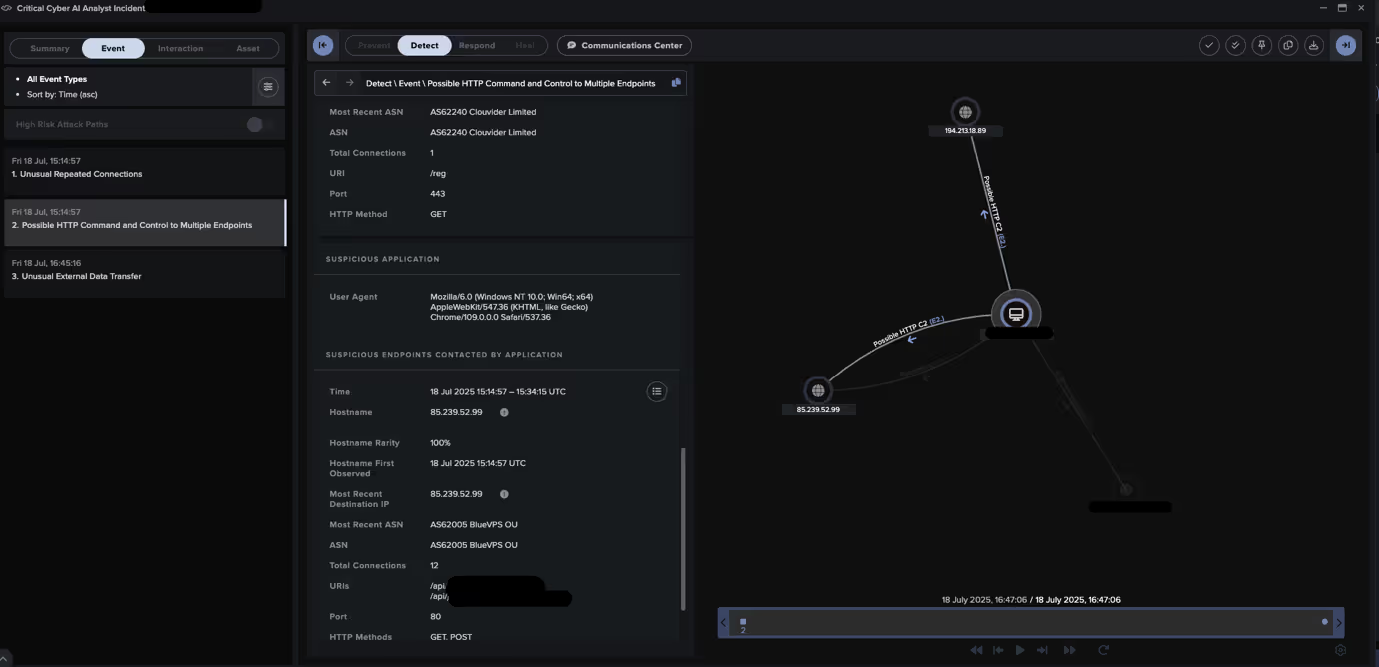

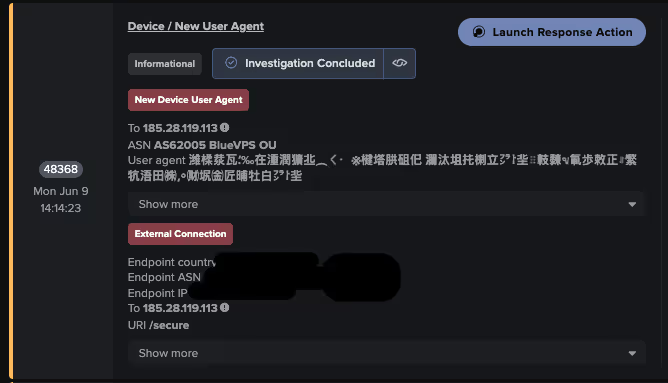

Following installation, multiple affected devices were observed attempting outbound connections to rare external IP addresses, specifically requesting the ‘/secure’ URI. After the initial callback, the malware continued communicating with additional infrastructure, maintaining its foothold and likely waiting for tasking instructions. Communication patterns included:

· Endpoints with URIs /api/kcehc and /api/jgfnsfnuefcnegfnehjbfncejfh

· Endpoints with URI /reg and user agent “WordPressAgent”, “FingerPrint” or “FingerPrintpersistent”

This tactic has been consistently linked to the Oyster backdoor, which has shown similar URI patterns across multiple campaigns [3].
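The URI and user agent patterns listed above lend themselves to a simple log-matching check. The sketch below is illustrative only: the function name and return format are assumptions, and the indicator values are taken directly from this article. Generic paths such as /secure and /reg will also appear in benign traffic, so a hit is more meaningful when the destination is a rare external IP address or when a URI and a user agent match together.

```python
from typing import List
from urllib.parse import urlsplit

# Indicators taken from the communication patterns described above.
OYSTER_URI_PATHS = {
    "/secure",
    "/reg",
    "/api/kcehc",
    "/api/jgfnsfnuefcnegfnehjbfncejfh",
}
OYSTER_USER_AGENTS = {"WordPressAgent", "FingerPrint", "FingerPrintpersistent"}


def match_indicators(uri: str, user_agent: str) -> List[str]:
    """Return a list of indicator hits for one HTTP request record."""
    path = urlsplit(uri).path  # ignores any scheme, host, and query string
    hits = []
    if path in OYSTER_URI_PATHS:
        hits.append("uri:" + path)
    if user_agent in OYSTER_USER_AGENTS:
        hits.append("user_agent:" + user_agent)
    return hits


if __name__ == "__main__":
    print(match_indicators("/reg", "WordPressAgent"))
    print(match_indicators("/index.html", "Mozilla/5.0"))
```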

Darktrace analysts also noted the sophisticated use of spoofed user agent strings across multiple investigated customer networks. These headers, which are typically used to identify the application making an HTTP request, are carefully crafted to appear benign or mimic legitimate software. One common example seen in the campaign is the user agent string “WordPressAgent”. While this string alludes to WordPress, a legitimate web application, it does not appear to correspond to any known WordPress service or API. Its inclusion is most likely designed to mimic background web traffic commonly associated with WordPress-based content management systems.

Case-Specific Observations

While the previous section focused on tactics and techniques common across observed Oyster infections, a closer examination reveals notable variations and unique elements in specific cases. These distinct features, from unusual user agent strings to atypical network behavior, offer valuable insights into the diverse operational approaches employed by the threat actors. Crucially, the divergence in post-exploitation activity reflects a broader trend in the use of widely available malware families like Oyster as flexible entry points, rather than fixed tools with a single purpose. This modular use of the backdoor reflects the growing Malware-as-a-Service (MaaS) ecosystem, where a single initial infection can be repurposed depending on the operator’s goals.

From Infection to Data Egress

In one incident, Darktrace observed an infected device downloading a ZIP file named ‘host[.]zip’ via curl from the URI path /333/host[.]zip, following the standard payload delivery chain. This file likely contained additional tools or payloads intended to expand the attacker’s capabilities within the compromised environment. Shortly afterwards, the device exhibited indicators of probable data exfiltration, with outbound HTTP POST requests featuring the URI pattern: /upload?dir=NAME_FOLDER/KEY_KEY_KEY/redacted/c/users/public.

This format suggests the malware was actively engaged in local host data staging and attempting to transmit files from the target machine. The affected device, identified as a laptop, aligns with the expected target profile in SEO poisoning scenarios, where unsuspecting end users download and execute trojanized software.
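To show how the staged directory can be read out of such a request, the sketch below parses the `dir` query parameter from an /upload URI using Python's standard library. The function name is hypothetical, and the sample URI is the redacted pattern quoted above rather than a real request.

```python
from typing import Optional
from urllib.parse import parse_qs, urlsplit


def staged_directory(uri: str) -> Optional[str]:
    """Return the value of the `dir` parameter for /upload requests, else None."""
    parts = urlsplit(uri)
    if parts.path != "/upload":
        return None
    values = parse_qs(parts.query).get("dir")
    return values[0] if values else None


if __name__ == "__main__":
    sample = "/upload?dir=NAME_FOLDER/KEY_KEY_KEY/redacted/c/users/public"
    directory = staged_directory(sample)
    print(directory)
    # The trailing components resemble a local Windows path (c/users/public).
    print(directory.split("/")[-3:])
```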

Irregular RDP Activity and Scanning Behavior

Several instances within the campaign revealed anomalous or unexpected Remote Desktop Protocol (RDP) sessions occurring shortly after DNS requests to fake PuTTY domains. Unusual RDP connections frequently followed communication with Oyster backdoor C2 servers. Additionally, Darktrace detected patterns of RDP scanning, suggesting the attackers were actively probing for accessible systems within the network. This behavior indicates a move beyond initial compromise toward lateral movement and privilege escalation, common objectives once persistence is established.
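One simple way to approximate the RDP scanning pattern described above is to count how many distinct destinations a single source contacts on TCP port 3389 within a short time window. This is a minimal sketch under stated assumptions: the record format, the 60-second window, and the threshold of 10 targets are all illustrative choices and do not reflect how Darktrace's models work.

```python
from collections import defaultdict

RDP_PORT = 3389  # default TCP port for Remote Desktop Protocol


def find_rdp_scanners(connections, window_seconds=60, min_targets=10):
    """Return source IPs that contact many distinct hosts on the RDP port.

    `connections` is an iterable of (timestamp_seconds, src_ip, dst_ip, dst_port).
    """
    by_source = defaultdict(list)
    for ts, src, dst, port in connections:
        if port == RDP_PORT:
            by_source[src].append((ts, dst))

    scanners = set()
    for src, events in by_source.items():
        events.sort()
        start = 0
        for end in range(len(events)):
            # Shrink the window until it spans at most `window_seconds`.
            while events[end][0] - events[start][0] > window_seconds:
                start += 1
            targets = {dst for _, dst in events[start:end + 1]}
            if len(targets) >= min_targets:
                scanners.add(src)
                break
    return scanners


if __name__ == "__main__":
    # Hypothetical traffic: one host sweeping a subnet, one making a single connection.
    sweep = [(i, "10.0.0.5", "10.0.1.%d" % i, 3389) for i in range(1, 13)]
    normal = [(5, "10.0.0.9", "10.0.1.20", 3389)]
    print(find_rdp_scanners(sweep + normal))
```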

The presence of unauthorized and administrative RDP sessions following Oyster infections aligns with the malware’s historical role as a gateway for broader impact. In previous campaigns, Oyster has often been leveraged to enable credential theft, lateral movement, and ultimately ransomware deployment. The observed RDP activity in this case suggests a similar progression, where the backdoor is not the final objective but rather a means to expand access and establish control over the target environment.

Cryptic User Agent Strings?

In multiple investigated cases, the user agent string identified in these connections featured formatting that appeared nonsensical or cryptic. One such string containing seemingly random Chinese-language characters translated into an unusual phrase: “Weihe river is where the water and river flow.” Legitimate software would not typically use such wording, suggesting that the string was intended as a symbolic marker rather than a technical necessity. Whether meant as a calling card or deliberately crafted to frame attribution, its presence highlights how subtle linguistic cues can complicate analysis.
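User agent strings like this can be surfaced with a basic character check, since most legitimate user agents consist of printable ASCII. The sketch below is illustrative: the function names are assumptions, and the sample string is an arbitrary placeholder, not the string observed in the campaign.

```python
import unicodedata


def has_non_ascii(user_agent: str) -> bool:
    """Return True if any character falls outside printable ASCII."""
    return any(not (32 <= ord(ch) <= 126) for ch in user_agent)


def contains_cjk(user_agent: str) -> bool:
    """Return True if any character is a CJK unified ideograph."""
    return any(
        unicodedata.name(ch, "").startswith("CJK UNIFIED IDEOGRAPH")
        for ch in user_agent
    )


if __name__ == "__main__":
    # Placeholder value for illustration only ("\u4e2d\u6587" means "Chinese language").
    sample = "\u4e2d\u6587"
    print(has_non_ascii(sample), contains_cjk(sample))
    print(has_non_ascii("WordPressAgent"), contains_cjk("WordPressAgent"))
```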

Strategic Implications

What makes this campaign particularly noteworthy is not simply the use of Oyster, but its delivery mechanism. SEO poisoning has traditionally been associated with cybercriminal operations focused on opportunistic gains, such as credential theft and fraud. Its strength lies in casting a wide net, luring unsuspecting users searching for popular software and tricking them into downloading malicious binaries. Unlike targeted campaigns, SEO poisoning is inherently indiscriminate, given that the attacker cannot control exactly who lands on their poisoned search results. However, in this case, the use of PuTTY as the lure possibly indicates a narrowed scope: targeting IT administrators and accounts with high privileges, given the nature of PuTTY’s functionality.

This raises important implications when considered alongside Oyster. As a backdoor often linked to ransomware operations and persistent access frameworks, Oyster is far more valuable as an entry point into corporate or government networks than as a tool for small-scale cybercrime. The presence of this malware in an SEO-driven delivery chain suggests a potential convergence between traditional cybercriminal delivery tactics and objectives often associated with more sophisticated attackers. If actors with state-sponsored or strategic objectives are indeed experimenting with SEO poisoning, it could signal a broadening of their targeting approaches. This trend aligns with the growing prominence of MaaS and the role of initial access brokers in today’s cybercrime ecosystem.

Whether the operators seek financial extortion through ransomware or longer-term espionage campaigns, the use of such techniques blurs the traditional distinctions. What looks like a mass-market infection vector might, in practice, be seeding footholds for high-value strategic intrusions.

Credit to Christina Kreza (Cyber Analyst) and Adam Potter (Senior Cyber Analyst)

Appendices

MITRE ATT&CK Mapping

· T1071.001 – Command and Control – Web Protocols

· T1008 – Command and Control – Fallback Channels

· T0885 – Command and Control – Commonly Used Port

· T1571 – Command and Control – Non-Standard Port

· T1176 – Persistence – Browser Extensions

· T1189 – Initial Access – Drive-by Compromise

· T1566.002 – Initial Access – Spearphishing Link

· T1574.001 – Persistence – DLL

Indicators of Compromise (IoCs)

· 85.239.52[.]99 – IP address

· 194.213.18[.]89/reg – IP address / URI

· 185.28.119[.]113/secure – IP address / URI

· 185.196.8[.]217 – IP address

· 185.208.158[.]119 – IP address

· putty[.]run – Endpoint

· putty-app[.]naymin[.]com – Endpoint

· /api/jgfnsfnuefcnegfnehjbfncejfh

· /api/kcehc

Darktrace Model Detections

· Anomalous Connection / New User Agent to IP Without Hostname

· Anomalous Connection / Posting HTTP to IP Without Hostname

· Compromise / HTTP Beaconing to Rare Destination

· Compromise / Large Number of Suspicious Failed Connections

· Compromise / Beaconing Activity to External Rare

· Compromise / Quick and Regular Windows HTTP Beaconing

· Device / Large Number of Model Alerts

· Device / Initial Attack Chain Activity

· Device / Suspicious Domain

· Device / New User Agent

· Antigena / Network / Significant Anomaly / Antigena Breaches Over Time Block

· Antigena / Network / External Threat / Antigena Suspicious Activity Block

· Antigena / Network / Significant Anomaly / Antigena Significant Anomaly from Client Block

References

[1] https://malpedia.caad.fkie.fraunhofer.de/details/win.broomstick

[3] https://hunt.io/blog/oysters-trail-resurgence-infrastructure-ransomware-cybercrime

[4] https://www.crowdstrike.com/en-us/cybersecurity-101/social-engineering/seo-poisoning/

[6] https://areteir.com/article/rhysida-using-oyster-backdoor-in-attacks/

The content provided in this blog is published by Darktrace for general informational purposes only and reflects our understanding of cybersecurity topics, trends, incidents, and developments at the time of publication. While we strive to ensure accuracy and relevance, the information is provided “as is” without any representations or warranties, express or implied. Darktrace makes no guarantees regarding the completeness, accuracy, reliability, or timeliness of any information presented and expressly disclaims all warranties.

Nothing in this blog constitutes legal, technical, or professional advice, and readers should consult qualified professionals before acting on any information contained herein. Any references to third-party organizations, technologies, threat actors, or incidents are for informational purposes only and do not imply affiliation, endorsement, or recommendation.

Darktrace, its affiliates, employees, or agents shall not be held liable for any loss, damage, or harm arising from the use of or reliance on the information in this blog.

The cybersecurity landscape evolves rapidly, and blog content may become outdated or superseded. We reserve the right to update, modify, or remove any content without notice.
