What is email bombing?

An email bomb attack, also known as a "spam bomb," is a cyberattack where a large volume of emails—ranging from as few as 100 to as many as several thousand—are sent to victims within a short period.

How does email bombing work?

Email bombing is a tactic that typically aims to disrupt operations and conceal malicious emails, potentially setting the stage for further social engineering attacks. Parallels can be drawn to the use of Domain Generation Algorithm (DGA) endpoints in Command-and-Control (C2) communications, where an attacker generates new and seemingly random domains in order to mask their malicious connections and evade detection.

In an email bomb attack, threat actors typically sign up their targeted recipients to a large number of email subscription services, flooding their inboxes with indirectly subscribed content [1].

Multiple threat actors have been observed utilizing this tactic, including the Ransomware-as-a-Service (RaaS) group Black Basta, also known as Storm-1811 [1] [2].

Darktrace detection of email bombing attack

In early 2025, Darktrace detected an email bomb attack where malicious actors flooded a customer's inbox while also employing social engineering techniques, specifically voice phishing (vishing). The end goal appeared to be infiltrating the customer's network by exploiting legitimate administrative tools for malicious purposes.

The emails in these attacks often bypass traditional email security tools because they are not technically classified as spam, due to the assumption that the recipient has subscribed to the service. Darktrace / EMAIL's behavioral analysis identified the mass of unusual, albeit not inherently malicious, emails that were sent to this user as part of this email bombing attack.

Email bombing attack overview

In February 2025, Darktrace observed an email bombing attack where a user received over 150 emails from 107 unique domains in under five minutes. Each of these emails bypassed a widely used and reputable Security Email Gateway (SEG) but were detected by Darktrace / EMAIL.

The emails varied in senders, topics, and even languages, with several identified as being in German and Spanish. The most common theme in the subject line of these emails was account registration, indicating that the attacker used the victim’s address to sign up to various newsletters and subscriptions, prompting confirmation emails. Such confirmation emails are generally considered both important and low risk by email filters, meaning most traditional security tools would allow them without hesitation.

Additionally, many of the emails were sent using reputable marketing tools, such as Mailchimp’s Mandrill platform, which was used to send almost half of the observed emails, further adding to their legitimacy.

While the individual emails detected were typically benign, such as the newsletter from a legitimate UK airport shown in Figure 3, the harmful aspect was the swarm effect caused by receiving many emails within a short period of time.

Traditional security tools, which analyze emails individually, often struggle to identify email bombing incidents. However, Darktrace / EMAIL recognized the unusual volume of new domain communication as suspicious. Had Darktrace / EMAIL been enabled in Autonomous Response mode, it would have automatically held any suspicious emails, preventing them from landing in the recipient’s inbox.

Following the initial email bombing, the malicious actor made multiple attempts to engage the recipient in a call using Microsoft Teams, while spoofing the organizations IT department in order to establish a sense of trust and urgency – following the spike in unusual emails the user accepted the Teams call. It was later confirmed by the customer that the attacker had also targeted over 10 additional internal users with email bombing attacks and fake IT calls.

The customer also confirmed that malicious actor successfully convinced the user to divulge their credentials with them using the Microsoft Quick Assist remote management tool. While such remote management tools are typically used for legitimate administrative purposes, malicious actors can exploit them to move laterally between systems or maintain access on target networks. When these tools have been previously observed in the network, attackers may use them to pursue their goals while evading detection, commonly known as Living-off-the-Land (LOTL).

Subsequent investigation by Darktrace’s Security Operations Centre (SOC) revealed that the recipient's device began scanning and performing reconnaissance activities shortly following the Teams call, suggesting that the user inadvertently exposed their credentials, leading to the device's compromise.

Darktrace’s Cyber AI Analyst was able to identify these activities and group them together into one incident, while also highlighting the most important stages of the attack.

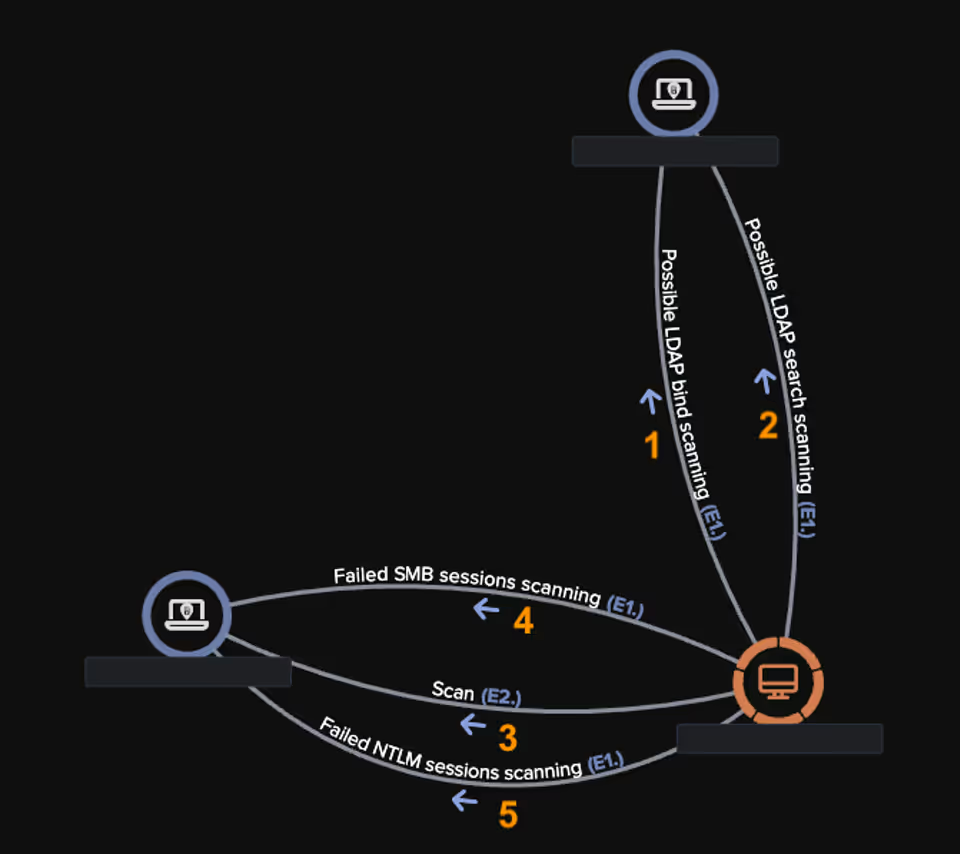

The first network-level activity observed on this device was unusual LDAP reconnaissance of the wider network environment, seemingly attempting to bind to the local directory services. Following successful authentication, the device began querying the LDAP directory for information about user and root entries. Darktrace then observed the attacker performing network reconnaissance, initiating a scan of the customer’s environment and attempting to connect to other internal devices. Finally, the malicious actor proceeded to make several SMB sessions and NTLM authentication attempts to internal devices, all of which failed.

While Darktrace’s Autonomous Response capability suggested actions to shut down this suspicious internal connectivity, the deployment was configured in Human Confirmation Mode. This meant any actions required human approval, allowing the activities to continue until the customer’s security team intervened. If Darktrace had been set to respond autonomously, it would have blocked connections to port 445 and enforced a “pattern of life” to prevent the device from deviating from expected activities, thus shutting down the suspicious scanning.

Conclusion

Email bombing attacks can pose a serious threat to individuals and organizations by overwhelming inboxes with emails in an attempt to obfuscate potentially malicious activities, like account takeovers or credential theft. While many traditional gateways struggle to keep pace with the volume of these attacks—analyzing individual emails rather than connecting them and often failing to distinguish between legitimate and malicious activity—Darktrace is able to identify and stop these sophisticated attacks without latency.

Thanks to its Self-Learning AI and Autonomous Response capabilities, Darktrace ensures that even seemingly benign email activity is not lost in the noise.

Credit to Maria Geronikolou (Cyber Analyst and SOC Shift Supervisor) and Cameron Boyd (Cyber Security Analyst), Steven Haworth (Senior Director of Threat Modeling), Ryan Traill (Analyst Content Lead)

[related-resource]

Appendices

[2] https://thehackernews.com/2024/12/black-basta-ransomware-evolves-with.html

Darktrace Models Alerts

Internal Reconnaissance

· Device / Suspicious SMB Scanning Activity

· Device / Anonymous NTLM Logins

· Device / Network Scan

· Device / Network Range Scan

· Device / Suspicious Network Scan Activity

· Device / ICMP Address Scan

· Anomalous Connection / Large Volume of LDAP Download

· Device / Suspicious LDAP Search Operation

· Device / Large Number of Model Alerts

Get the latest insights on emerging cyber threats

This report explores the latest trends shaping the cybersecurity landscape and what defenders need to know in 2025

.avif)

.png)

%201.png)