Fragmented Tools Are Failing SOC Teams in the Cloud Era

The cloud has transformed how businesses operate, reshaping everything from infrastructure to application delivery. But cloud security has not kept pace. Most tools still rely on traditional models of logging, policy enforcement, and posture management, approaches that provide surface-level visibility but lack the depth to detect or investigate active attacks.

Meanwhile, attackers are exploiting vulnerabilities, delivering cloud-native exploits, and moving laterally in ways that posture management alone cannot catch fast enough. Critical evidence is often missed, and alerts lack the forensic depth SOC analysts need to separate noise from true risk. As a result, organizations remain exposed: research shows that nearly nine in ten organizations have suffered a critical cloud breach despite investing in existing security tools [1].

SOC teams are left buried in alerts without actionable context, while ephemeral workloads like containers and serverless functions vanish before evidence can be preserved. Point tools for logging or forensics only add complexity, with 82% of organizations using multiple platforms to investigate cloud incidents [2].

The result is a broken security model: posture tools surface risks but don’t connect them to active attacker behaviors, while investigation tools are too slow and fragmented to provide timely clarity. Security teams are left reactive, juggling multiple point solutions and still missing critical signals. What’s needed is a unified approach that combines real-time detection and response for active threats with automated investigation and cloud posture management in a single workflow.

Just as security teams once had to evolve beyond basic firewalls and antivirus into network and endpoint detection, response, and forensics, cloud security now requires its own next era: one that unifies detection, response, and investigation at the speed and scale of the cloud.

A Powerful Combination: Real-Time CDR + Automated Cloud Forensics

Darktrace / CLOUD now uniquely unites detection, investigation, and response into one workflow, powered by Self-Learning AI. This means every alert, from any tool in your stack, can instantly become actionable evidence and a complete investigation in minutes.

With this release, Darktrace / CLOUD delivers a more holistic approach to cloud defense, uniting real-time detection, response, and investigation with proactive risk reduction. The result is a single solution that helps security teams stay ahead of attackers while reducing complexity and blind spots.

- Automated Cloud Forensic Investigations: Instantly capture and analyze volatile evidence from cloud assets, reducing investigation times from days to minutes and eliminating blind spots

- Enhanced Cloud-Native Threat Detection: Detect advanced attacker behaviors such as lateral movement, privilege escalation, and command-and-control in real time

- Enhanced Live Cloud Topology Mapping: Gain continuous insight into cloud environments, including ephemeral workloads, with live topology views that simplify investigations and expose anomalous activity

- Agentless Scanning for Proactive Risk Reduction: Continuously monitor for misconfigurations, vulnerabilities, and risky exposures to reduce attack surface and stop threats before they escalate

Automated Cloud Forensic Investigations

Darktrace / CLOUD now includes capabilities introduced with Darktrace / Forensic Acquisition & Investigation, triggering automated forensic acquisition the moment a threat is detected. This ensures ephemeral evidence, from disks and memory to containers and serverless workloads, can be preserved instantly and analyzed in minutes, not days. The integration unites detection, response, and forensic investigation in a way that eliminates blind spots and reduces manual effort.
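To make the idea of detection-triggered acquisition concrete, here is a minimal Python sketch. It is an illustration only, not Darktrace's implementation or API: the `Alert` fields, the `EVIDENCE_BY_TYPE` mapping, the `EvidenceLocker` class, and the severity threshold are all hypothetical names and values chosen for the example. It shows one way an alert handler could request evidence capture immediately, before a short-lived workload disappears.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Alert:
    asset_id: str    # hypothetical identifier of the affected workload
    asset_type: str  # e.g. "vm", "container", "serverless"
    severity: int    # hypothetical 0-100 scale


# Hypothetical mapping from workload type to the evidence worth capturing.
EVIDENCE_BY_TYPE = {
    "vm": ["disk_snapshot", "memory_dump"],
    "container": ["filesystem_export", "process_list"],
    "serverless": ["function_package", "invocation_logs"],
}


@dataclass
class EvidenceLocker:
    records: list = field(default_factory=list)

    def acquire(self, alert: Alert) -> list:
        """Record one acquisition request per evidence type for the alerted asset."""
        captured = []
        for kind in EVIDENCE_BY_TYPE.get(alert.asset_type, []):
            self.records.append({
                "asset_id": alert.asset_id,
                "evidence": kind,
                "requested_at": datetime.now(timezone.utc).isoformat(),
            })
            captured.append(kind)
        return captured


def on_alert(alert: Alert, locker: EvidenceLocker, threshold: int = 50) -> list:
    """Trigger acquisition immediately when an alert meets the severity threshold."""
    if alert.severity < threshold:
        return []
    return locker.acquire(alert)


locker = EvidenceLocker()
captured = on_alert(Alert("pod-7f3c", "container", 80), locker)
ignored = on_alert(Alert("vm-01", "vm", 10), locker)
print(captured, ignored, len(locker.records))
```

In this sketch the capture request is made inside the alert handler itself rather than queued for an analyst, which is the property that matters for ephemeral workloads.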

Enhanced Cloud-Native Threat Detection

Darktrace / CLOUD strengthens its real-time behavioral detection to expose early attacker behaviors that logs alone cannot reveal. Enhanced cloud-native detection capabilities include:

- Reconnaissance & Discovery: Detects enumeration and probing activity post-compromise

- Privilege Escalation via Role Assumption: Identifies suspicious attempts to gain elevated access

- Malicious Compute Resource Usage: Flags threats such as crypto mining or spam operations

These enhancements ensure active attacks are detected earlier, before adversaries can escalate or move laterally through cloud environments.

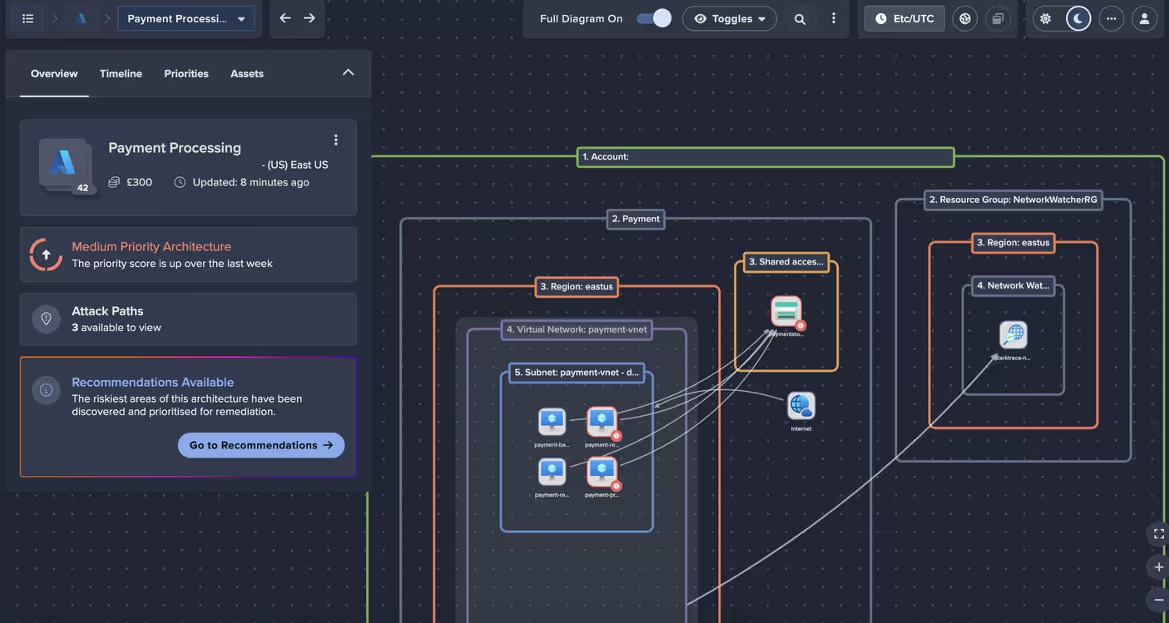
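As a rough illustration of the role-assumption case, the sketch below flags any principal that assumes a role it has not been seen using during a baseline period. This is a deliberately simple first-seen rule, not the Self-Learning AI described above, and the event shape (`principal` and `role` keys) and sample names are assumptions made for the example.

```python
from collections import defaultdict


def find_new_role_assumptions(baseline_events, new_events):
    """Return new events whose (principal, role) pair never appeared in the baseline."""
    seen = defaultdict(set)
    for event in baseline_events:
        seen[event["principal"]].add(event["role"])

    flagged = []
    for event in new_events:
        if event["role"] not in seen[event["principal"]]:
            flagged.append(event)
            # Remember the pair so repeats in the same batch are reported once.
            seen[event["principal"]].add(event["role"])
    return flagged


# Hypothetical sample data.
baseline = [
    {"principal": "ci-runner", "role": "deploy"},
    {"principal": "alice", "role": "read-only"},
]
incoming = [
    {"principal": "ci-runner", "role": "deploy"},
    {"principal": "ci-runner", "role": "org-admin"},
    {"principal": "ci-runner", "role": "org-admin"},
]

flagged = find_new_role_assumptions(baseline, incoming)
print(flagged)
```

A real behavioral model would weigh far more context (time, source, peer behavior); the point here is only that the signal comes from deviation from learned activity rather than from a static policy.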

Enhanced Live Cloud Topology Mapping

New enhancements to live topology provide real-time mapping of cloud environments, attacker movement, and anomalous behavior. This dynamic visibility helps SOC teams quickly understand complex environments, trace attack paths, and prioritize response. By integrating with Darktrace / Proactive Exposure Management (PEM), these insights extend beyond the cloud, offering a unified view of risks across networks, endpoints, SaaS, and identity, and giving teams the context needed to act with confidence.

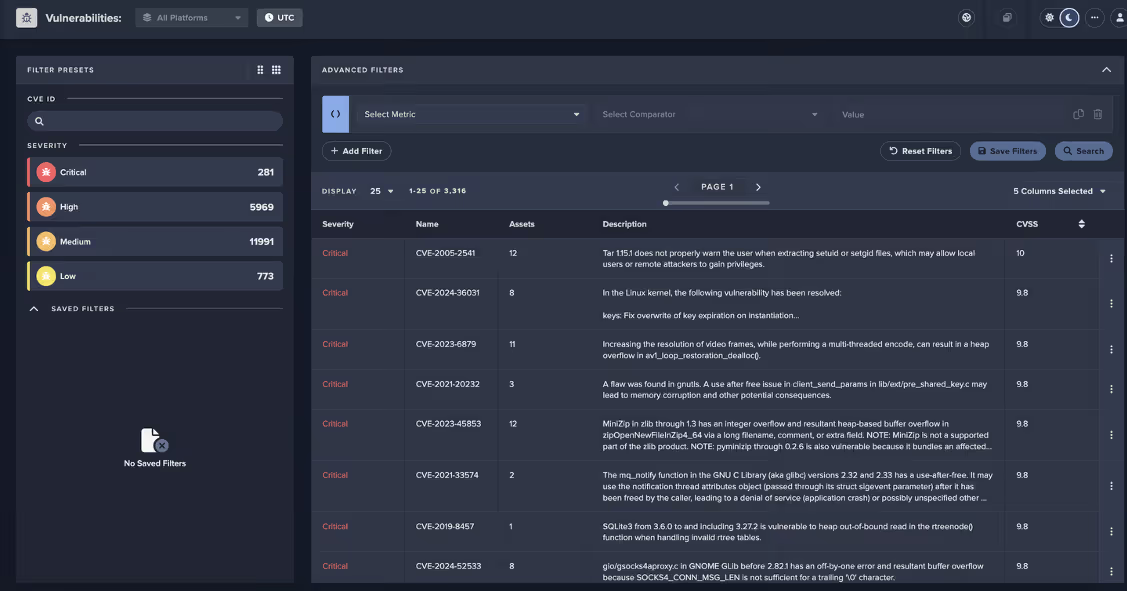
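Tracing an attack path through a topology can be thought of as a graph search. The sketch below, which is illustrative and not a description of how Darktrace builds or queries its topology, models a small environment as an adjacency list and uses breadth-first search to find the shortest chain of connections between two assets. The asset names and edges are hypothetical.

```python
from collections import deque


def shortest_path(graph, start, target):
    """Breadth-first search returning the shortest path from start to target, or None."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for neighbor in graph.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None


# Hypothetical topology: an edge means "can reach or can assume".
topology = {
    "internet": ["web-lb"],
    "web-lb": ["web-pod"],
    "web-pod": ["iam-role-app", "cache"],
    "iam-role-app": ["customer-db"],
    "cache": [],
}

path = shortest_path(topology, "internet", "customer-db")
print(" -> ".join(path))
```

Because ephemeral workloads come and go, a live map would need to rebuild or update such a graph continuously; this sketch works on a single static snapshot.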

Agentless Scanning for Proactive Risk Reduction

Darktrace / CLOUD now introduces agentless scanning to uncover malware and vulnerabilities in cloud assets without impacting performance. This lightweight, non-disruptive approach provides deep visibility into cloud workloads and surfaces risks before attackers can exploit them. By continuously monitoring for misconfigurations and exposures, the solution strengthens posture management and reduces attack surface across hybrid and multi-cloud environments.
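Checking for misconfigurations without an agent generally means evaluating resource configuration data gathered from outside the workload. The following Python sketch assumes that data has already been collected into plain dictionaries; the resource schema, field names, and the two example rules are hypothetical and are not taken from Darktrace / CLOUD.

```python
def scan_resources(resources):
    """Return (resource_id, finding) tuples for a few example misconfigurations."""
    findings = []
    for res in resources:
        # Example rule 1: storage buckets readable by anyone.
        if res.get("type") == "bucket" and res.get("public_read"):
            findings.append((res["id"], "bucket allows public read"))
        # Example rule 2: SSH reachable from any address.
        if res.get("type") == "security_group":
            for rule in res.get("ingress", []):
                if rule["cidr"] == "0.0.0.0/0" and rule["port"] == 22:
                    findings.append((res["id"], "SSH open to the internet"))
    return findings


# Hypothetical inventory snapshot.
inventory = [
    {"id": "logs-bucket", "type": "bucket", "public_read": True},
    {"id": "private-bucket", "type": "bucket", "public_read": False},
    {"id": "sg-web", "type": "security_group",
     "ingress": [{"cidr": "0.0.0.0/0", "port": 443},
                 {"cidr": "0.0.0.0/0", "port": 22}]},
]

findings = scan_resources(inventory)
for resource_id, issue in findings:
    print(f"{resource_id}: {issue}")
```

Since the checks run against a configuration snapshot rather than inside the workload, they add no load to the running asset, which is the non-disruptive property the paragraph above describes.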

Together, these capabilities move cloud security operations from reactive to proactive, empowering security teams to detect novel threats in real time, reduce exposures before they are exploited, and accelerate investigations with forensic depth. The result is faster triage, shorter MTTR, and reduced business risk, all delivered in a single, AI-native solution built for hybrid and multi-cloud environments.

Accelerating the Evolution of Cloud Security

Cloud security has long been fragmented, forcing teams to stitch together posture tools, log-based monitoring, and external forensics to get even partial coverage. With this release, Darktrace / CLOUD delivers a holistic, unified approach that covers every stage of the cloud lifecycle, from proactive posture management and risk identification to real-time detection to automated investigation and response.

By bringing these capabilities together in a single AI-native solution, Darktrace is advancing cloud security beyond incremental change and setting a new standard for how organizations protect their hybrid and multi-cloud environments.

With Darktrace / CLOUD, security teams finally gain end-to-end visibility, response, and investigation at the speed of the cloud, transforming cloud defense from fragmented and reactive to unified and proactive.


Sources: [1], [2] Darktrace Report: Organizations Require a New Approach to Handle Investigations in the Cloud

