As reported in Darktrace’s 2024 Annual Threat Report, the exploitation of Common Vulnerabilities and Exposures (CVEs) in edge infrastructure has consistently been a significant concern across the threat landscape, with internet-facing assets remaining highly attractive to various threat actors.

Back in January 2024, the Darktrace Threat Research team investigated a surge of malicious activity stemming from zero-day vulnerabilities that affected Ivanti Connect Secure (CS) and Ivanti Policy Secure (PS) appliances at the time. Ivanti disclosed these vulnerabilities in January 2024 as CVE-2023-46805 (an authentication bypass vulnerability) and CVE-2024-21887 (a command injection vulnerability). Chained together, the two allowed unauthenticated remote code execution (RCE) on vulnerable Ivanti systems.

What are the latest vulnerabilities in Ivanti products?

In early January 2025, two new vulnerabilities were disclosed in Ivanti CS and PS, as well as in Ivanti’s Zero Trust Access (ZTA) gateway products.

- CVE-2025-0282: A stack-based buffer overflow vulnerability. Successful exploitation could lead to unauthenticated remote code execution, allowing attackers to execute arbitrary code on the affected system [1]

- CVE-2025-0283: When combined with CVE-2025-0282, this vulnerability could allow a local authenticated attacker to escalate privileges, gaining higher-level access on the affected system [1]

Ivanti also released a statement noting that it was not aware of any exploitation of CVE-2025-0283 at the time of disclosure [1].

Darktrace coverage of Ivanti

The Darktrace Threat Research team investigated the new Ivanti vulnerabilities across its customer base and discovered suspicious activity on two customer networks. Indicators of Compromise (IoCs) potentially indicative of successful exploitation of CVE-2025-0282 were identified as early as December 2024, 11 days before the vulnerabilities were publicly disclosed by Ivanti.

Case 1: December 2024

Authentication with a Privileged Credential

Darktrace initially detected suspicious activity connected with the exploitation of CVE-2025-0282 on December 29, 2024, when a customer device was observed logging into the network via SMB using the credential “svc_negbackups”, before authenticating with the credential “svc_negba” via RDP.

This likely represented a threat actor attempting to identify vulnerabilities within the system or application and escalate their privileges from a basic user account to a more privileged one. Darktrace / NETWORK recognized that the credential “svc_negbackups” was new for this device and therefore deemed it suspicious.
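The idea of a credential being "new for this device" can be illustrated with a minimal sketch. This is not Darktrace's model, only a simple first-seen check under stated assumptions: the `CredentialBaseline` class, the device name `device-a`, and the credential `alice` are hypothetical and exist purely for illustration.

```python
from collections import defaultdict


class CredentialBaseline:
    """Hypothetical helper: remembers which credentials each device has used."""

    def __init__(self):
        # Maps a device identifier to the set of credentials seen on it so far.
        self._seen = defaultdict(set)

    def observe(self, device: str, credential: str) -> bool:
        """Record a login and return True if the credential is new for the device."""
        key = credential.lower()  # treat credential names as case-insensitive
        is_new = key not in self._seen[device]
        self._seen[device].add(key)
        return is_new


baseline = CredentialBaseline()
baseline.observe("device-a", "alice")  # hypothetical earlier, routine login

first = baseline.observe("device-a", "svc_negbackups")   # never seen on this device
repeat = baseline.observe("device-a", "svc_negbackups")  # already recorded
print(first, repeat)
```

A real system would also need a learning period and would weigh other signals, such as whether the credential is administrative, before raising an alert.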

Likely Malicious File Download

Shortly after authentication with the privileged credential, Darktrace observed the device performing an SMB write to the C$ share, where a likely malicious executable file, “DeElevate64.exe”, was detected. While this is a legitimate Windows file, it can be abused by malicious actors for Dynamic-Link Library (DLL) sideloading, where malicious files are transferred onto other devices before malware is executed. External reports indicate that threat actors have used this technique when exploiting the Ivanti vulnerabilities [2].

Shortly after, a high volume of SMB login failures using the credential “svc_counteract-ext” was observed, suggesting potential brute-forcing activity. The suspicious nature of this activity triggered an Enhanced Monitoring model alert that was escalated to Darktrace’s Security Operations Center (SOC) for further investigation and prompt notification, as the customer was subscribed to the Security Operations Support service. Enhanced Monitoring models are high-fidelity models that detect activities more likely to be indicative of compromise.
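As a rough illustration of how a high volume of login failures might be flagged, the sketch below counts failures inside a sliding time window. It is a generic threshold check, not the logic of any Darktrace model; the function name `find_bruteforce_bursts` and the default threshold and window values are assumptions chosen for the example.

```python
from collections import deque


def find_bruteforce_bursts(failure_times, threshold=20, window_seconds=60.0):
    """Return the timestamps at which at least `threshold` failures
    fell within the trailing `window_seconds` (both values are assumptions)."""
    window = deque()
    alerts = []
    for ts in sorted(failure_times):
        window.append(ts)
        # Drop failures that are older than the trailing window.
        while ts - window[0] > window_seconds:
            window.popleft()
        if len(window) >= threshold:
            alerts.append(ts)
    return alerts


# 25 failures one second apart: the 20th failure (t=19) is the first to alert.
burst = find_bruteforce_bursts([float(i) for i in range(25)])
# 25 failures two minutes apart never accumulate inside one window.
sparse = find_bruteforce_bursts([i * 120.0 for i in range(25)])
print(burst[0], len(burst), sparse)
```

A fixed threshold is easy to reason about but blunt. In practice the count would be compared against what is normal for the device and credential in question.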

Suspicious Scanning and Internal Reconnaissance

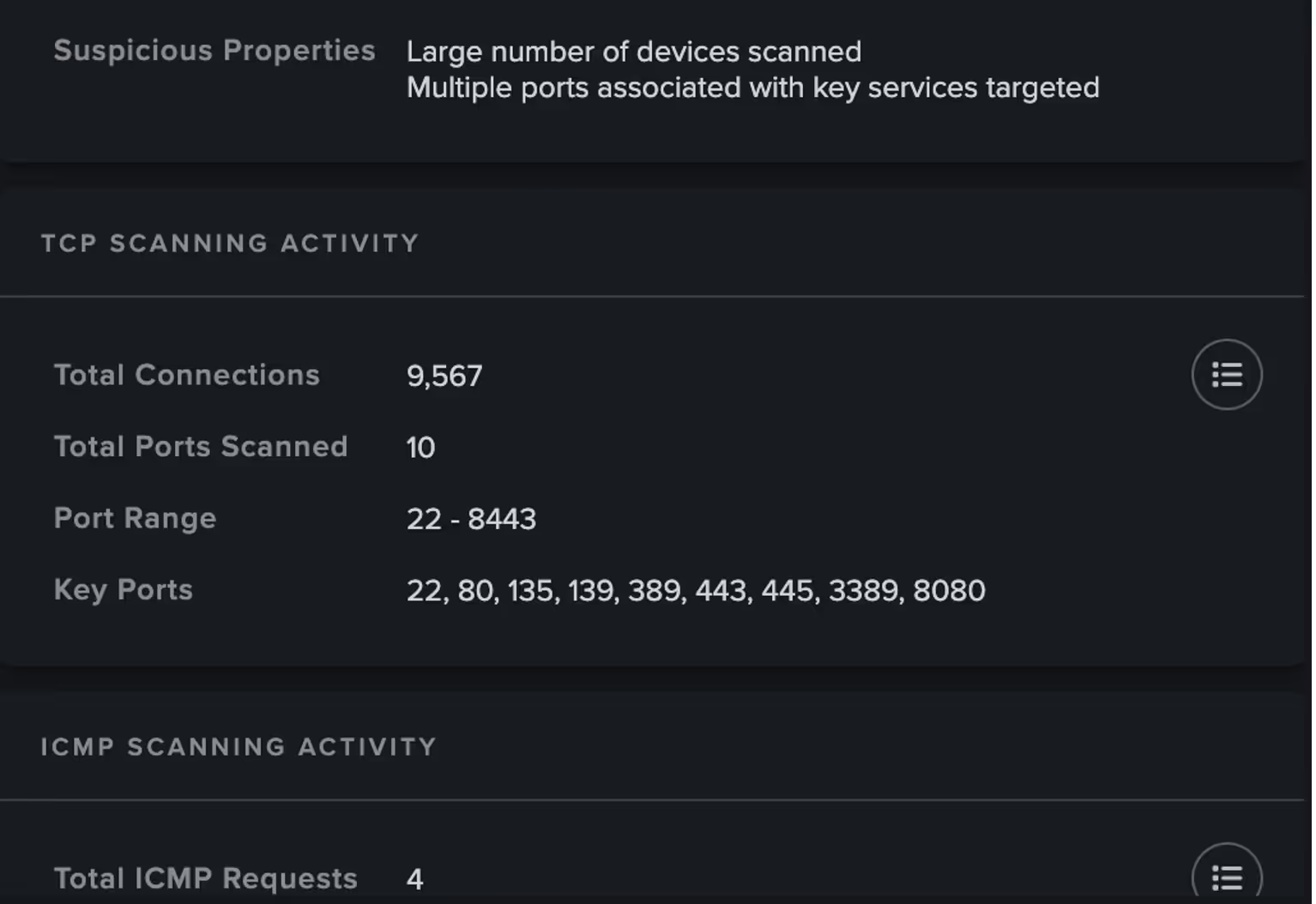

Darktrace then went on to observe the device carrying out network scanning activity as well as anomalous ITaskScheduler activity. Threat actors can exploit the task scheduler to facilitate the initial or recurring execution of malicious code by a trusted system process, often with elevated permissions. The same device was also seen carrying out uncommon WMI activity.

Case 2: January 2025

Suspicious File Downloads

On January 13, 2025, Darktrace began to observe activity related to the exploitation of CVE-2025-0282 on the network of another customer, with one device in particular attempting to download likely malicious files.

Firstly, Darktrace observed the device making a GET request for the file “DeElevator64.dll” hosted on the IP 104.238.130[.]185. The device then downloaded another file, “DeElevate64.exe”, from the same IP. This was followed by a further download of “DeElevator64.dll”, similar to the case observed in December 2024. External reporting indicates that this DLL has been used by actors exploiting CVE-2025-0282 to sideload backdoors into infected systems [2].

Suspicious Internal Activity

Just like the previous case, on January 15, the same device was observed making numerous internal connections consistent with network scanning activity, as well as DCE-RPC requests.

Just a few minutes later, Darktrace again detected the use of a new administrative credential, observing the following details:

- domain=REDACTED hostname=DESKTOP-1JIMIV3 auth_successful=T result=success ntlm_version=2

The hostname observed by Darktrace, “DESKTOP-1JIMIV3,” has also been identified by other external vendors and was associated with a remote computer name seen accessing compromised accounts [2].
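For analysts who want to work with authentication details like the line above programmatically, the following sketch splits the `key=value` tokens into a dictionary and checks the hostname against a watchlist. The function `parse_details` and the set `KNOWN_SUSPICIOUS_HOSTNAMES` are hypothetical names introduced for this example, and the field layout is assumed from the single line shown in this post.

```python
def parse_details(line: str) -> dict:
    """Split a whitespace-separated run of key=value tokens into a dict."""
    fields = {}
    for token in line.split():
        key, sep, value = token.partition("=")
        if sep:  # skip tokens without "=", such as list markers or stray punctuation
            fields[key] = value
    return fields


details = parse_details(
    "domain=REDACTED hostname=DESKTOP-1JIMIV3 auth_successful=T "
    "result=success ntlm_version=2"
)

# Hypothetical watchlist seeded with the hostname reported in this post.
KNOWN_SUSPICIOUS_HOSTNAMES = {"DESKTOP-1JIMIV3"}
is_flagged = details.get("hostname", "").upper() in KNOWN_SUSPICIOUS_HOSTNAMES
print(details["hostname"], is_flagged)
```

Note that this simple split would not handle values containing spaces, so it should be treated as a starting point rather than a general log parser.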

Darktrace also observed the device performing an SMB write of an additional file, “to.bat,” which may have represented another malicious file loaded from the DLL files that the device had downloaded earlier. It is possible this represented the threat actor attempting to deploy a remote scheduled task.

Further investigation revealed that the device was likely a Veeam server, with its MAC address indicating it was a VMware device. It also appeared that the Veeam server was capturing activities referenced from the hostname DESKTOP-1JIMIV3. This may be analogous to the remote computer name reported by external researchers as accessing accounts [2]. However, this activity might also suggest that while the same threat actor and tools could be involved, they may be targeting a different vulnerability in this instance.

Autonomous Response

In this case, the customer had Darktrace’s Autonomous Response capability enabled on their network. As a result, Darktrace was able to contain the compromise and shut down any ongoing suspicious connectivity by blocking internal connections and enforcing a “pattern of life” on the affected device. This action allows a device to make its usual connections while blocking any that deviate from expected behavior. These mitigative actions by Darktrace ensured that the compromise was promptly halted, preventing any further damage to the customer’s environment.

Conclusion

If the previous blog in January 2024 was a stark reminder of the threat posed by malicious actors exploiting Internet-facing assets, the recent activities surrounding CVE-2025-0282 and CVE-2025-0283 emphasize this even further.

Based on the telemetry available to Darktrace, a wide range of malicious activities was identified in the cases investigated, including the malicious use of administrative credentials, the download of suspicious files, and network scanning.

These activities included the download of suspicious files such as “DeElevate64.exe” and “DeElevator64.dll”, potentially used by attackers to sideload backdoors into infected systems. The suspicious hostname DESKTOP-1JIMIV3 was also observed and appears to be associated with a remote computer name seen accessing compromised accounts. These activities are far from exhaustive, and many more will undoubtedly be uncovered as threat actors evolve.

Fortunately, Darktrace was able to swiftly detect and respond to suspicious network activity linked to the latest Ivanti vulnerabilities, sometimes even before these vulnerabilities were publicly disclosed.

Credit to: Nahisha Nobregas, Senior Cyber Analyst, Emma Foulger, Principal Cyber Analyst, Ryan Trail, Analyst Content Lead and the Darktrace Threat Research Team

Appendices

Darktrace Model Detections

Case 1

· Anomalous Connection / Unusual Admin SMB Session

· Anomalous File / EXE from Rare External Location

· Anomalous File / Internal / Unusual SMB Script Write

· Anomalous File / Multiple EXE from Rare External Locations

· Anomalous File / Script from Rare External Location

· Compliance / SMB Drive Write

· Device / Multiple Lateral Movement Model Alerts

· Device / Network Range Scan

· Device / Network Scan

· Device / New or Uncommon WMI Activity

· Device / RDP Scan

· Device / Suspicious Network Scan Activity

· Device / Suspicious SMB Scanning Activity

· User / New Admin Credentials on Client

· User / New Admin Credentials on Server

Case 2

· Anomalous Connection / Unusual Admin SMB Session

· Anomalous Connection / Unusual Admin RDP Session

· Compliance / SMB Drive Write

· Device / Multiple Lateral Movement Model Alerts

· Device / SMB Lateral Movement

· Device / Possible SMB/NTLM Brute Force

· Device / Suspicious SMB Scanning Activity

· Device / Network Scan

· Device / RDP Scan

· Device / Large Number of Model Alerts

· Device / Anomalous ITaskScheduler Activity

· Device / Suspicious Network Scan Activity

· Device / New or Uncommon WMI Activity

List of IoCs

Possible IoCs:

· DeElevator64.dll

· deelevator64.dll

· DeElevate64.exe

· deelevate64.exe

· to.bat

Mid-high confidence IoCs:

- 104.238.130[.]185

- http://104.238.130[.]185/DeElevate64.exe

- http://104.238.130[.]185/DeElevator64.dll

- DESKTOP-1JIMIV3
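The indicators listed above could be checked against local telemetry with a short script. The sketch below is only an illustration: the event field names (`filename`, `dest_ip`, `hostname`) and the helper functions `refang` and `match_iocs` are assumptions, and would need to be adapted to whatever log schema is actually in use. Filenames are compared case-insensitively because the list contains both capitalized and lowercase variants.

```python
# Indicators copied from this post; filenames are stored in lowercase.
FILE_IOCS = {"deelevator64.dll", "deelevate64.exe", "to.bat"}
NETWORK_IOCS = {"104.238.130[.]185"}  # kept defanged, as published
HOST_IOCS = {"DESKTOP-1JIMIV3"}


def refang(indicator: str) -> str:
    """Turn a defanged indicator such as 1.2.3[.]4 back into a matchable value."""
    return indicator.replace("[.]", ".")


def match_iocs(event: dict) -> list:
    """Return (kind, value) pairs for every indicator found in one event.
    The event keys used here are hypothetical."""
    hits = []
    # Reduce a Windows or POSIX path to its final component before comparing.
    filename = event.get("filename", "").replace("\\", "/").rsplit("/", 1)[-1].lower()
    if filename in FILE_IOCS:
        hits.append(("file", filename))
    if event.get("dest_ip") in {refang(ip) for ip in NETWORK_IOCS}:
        hits.append(("ip", event["dest_ip"]))
    if event.get("hostname", "").upper() in HOST_IOCS:
        hits.append(("hostname", event["hostname"]))
    return hits


sample = {"filename": "C:\\Windows\\Temp\\DeElevate64.exe", "dest_ip": "104.238.130.185"}
print(match_iocs(sample))
```

Because “DeElevate64.exe” is described in this post as a legitimate Windows file, a filename match alone is weak evidence and is best combined with the network or hostname indicators.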

References:

2. https://unit42.paloaltonetworks.com/threat-brief-ivanti-cve-2025-0282-cve-2025-0283/
