From automation to intelligence

There’s a lot of attention around AI in cybersecurity right now, similar to how important automation felt about 15 years ago. But this time, the scale and speed of change feel different.

In the context of cybersecurity investigations, the application of AI can significantly enhance an organization's ability to detect, respond to, and recover from incidents. It enables a more proactive approach to cybersecurity, ensuring a swift and effective response to potential threats.

At Darktrace, we’ve learned that no single AI technique can solve cybersecurity on its own. We employ a multi-layered AI approach, strategically integrating a diverse set of techniques both sequentially and hierarchically. This layered architecture allows us to deliver proactive, adaptive defense tailored to each organization’s unique environment.

Darktrace uses a range of AI techniques to perform in-depth analysis and investigation of anomalies identified by lower-level alerts, in particular automating Levels 1 and 2 of the Security Operations Centre (SOC) team’s workflow. This saves teams time and resources by automating repetitive and time-consuming tasks carried out during investigation workflows. We call this core capability Cyber AI Analyst.

How Darktrace’s Cyber AI™ Analyst works

Cyber AI Analyst mimics the way a human carries out a threat investigation: evaluating multiple hypotheses, analyzing logs for involved assets, and correlating findings across multiple domains. It will then generate an alert with full technical details, pulling relevant findings into a single pane of glass to track the entire attack chain.
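To make the idea of correlating findings into one view concrete, here is a toy sketch. It is not Darktrace's implementation. It only assumes that each finding lists the entities it touched (hosts, accounts) and that findings sharing an entity belong to the same incident. The event names, entity names, and the `correlate` function are all hypothetical.

```python
from collections import defaultdict


def correlate(events):
    """Group events into incidents when they share at least one entity.

    `events` is a list of (event_id, set_of_entities) pairs. Returns a sorted
    list of incidents, each a sorted list of event ids. This is a plain
    union-find over shared entities, used here purely as an illustration.
    """
    parent = {event_id: event_id for event_id, _ in events}

    def find(x):
        # Walk up to the root, shortening the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a, b):
        parent[find(a)] = find(b)

    first_seen = {}  # entity -> first event that mentioned it
    for event_id, entities in events:
        for entity in entities:
            if entity in first_seen:
                union(event_id, first_seen[entity])
            else:
                first_seen[entity] = event_id

    groups = defaultdict(list)
    for event_id, _ in events:
        groups[find(event_id)].append(event_id)
    return sorted(sorted(group) for group in groups.values())


# Hypothetical findings: three are linked through shared entities, one is not.
events = [
    ("vpn-login", {"host-a", "svc-account"}),
    ("rdp-pivot", {"svc-account", "host-b"}),
    ("smb-write", {"host-b", "host-c"}),
    ("unrelated-scan", {"host-z"}),
]
incidents = correlate(events)
```

In this example the VPN login, RDP pivot, and SMB write end up in one incident because each shares an account or host with another, while the unrelated scan stays on its own.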

Learn more about how Cyber AI Analyst accomplishes this here:

- Introducing Darktrace Incident Graph Evaluation for Security Threats (DIGEST)

- Introducing Version 2 of Darktrace’s Embedding Model for Investigation of Security Threats (DEMIST-2)

This blog will highlight four examples where Darktrace’s agentic AI, Cyber AI Analyst, successfully identified the activity of sophisticated threat actors, including nation state adversaries. The final example will include step-by-step details of the investigations conducted by Cyber AI Analyst.


Case 1: Cyber AI Analyst vs. ShadowPad Malware: East Asian Advanced Persistent Threat (APT)

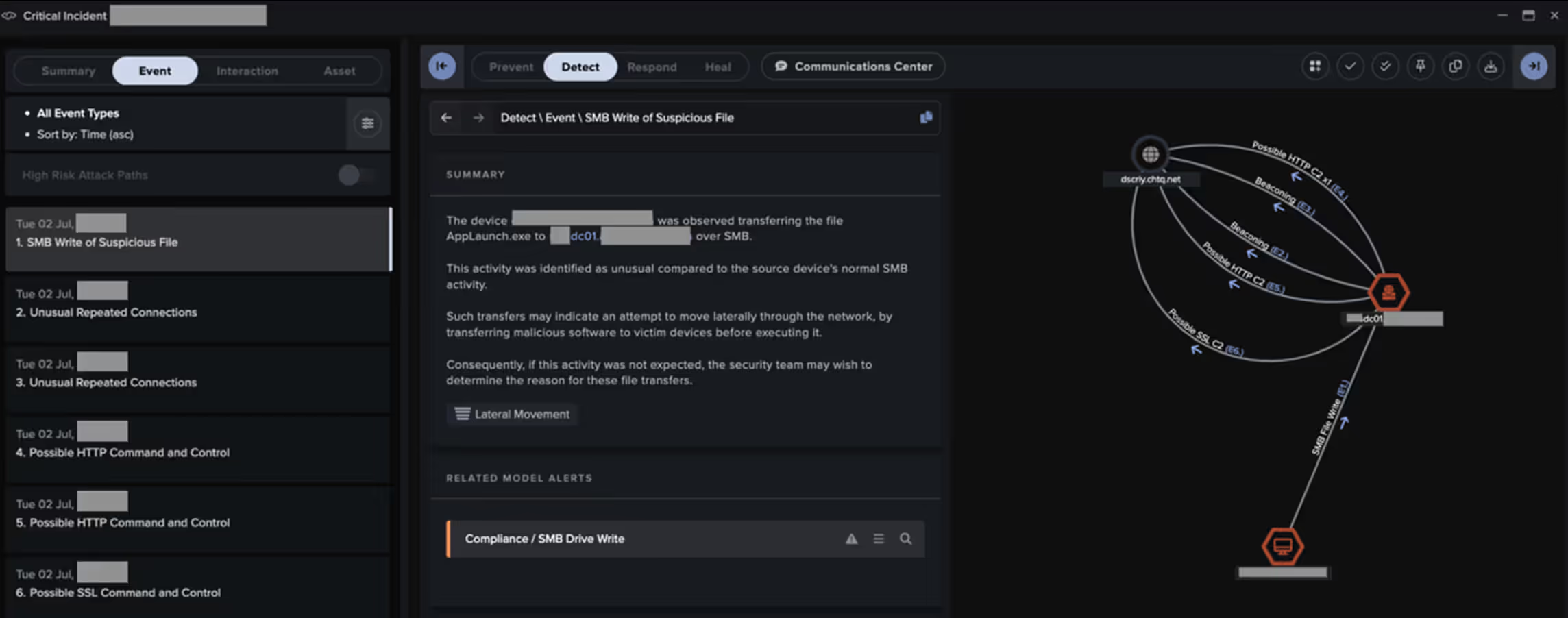

In March 2025, Darktrace detailed a lengthy investigation into two separate threads of likely state-linked intrusion activity in a customer network, showcasing Cyber AI Analyst’s ability to identify different activity threads and piece them together.

The first of these threads occurred in July 2024 and involved a malicious actor establishing a foothold in the customer’s virtual private network (VPN) environment, likely via the exploitation of an information disclosure vulnerability (CVE-2024-24919) affecting Check Point Security Gateway devices.

Using compromised service account credentials, the actor then moved laterally across the network via RDP and SMB, with files related to the modular backdoor ShadowPad being delivered to targeted internal systems. Targeted systems went on to communicate with a C2 server via both HTTPS connections and DNS tunnelling.

The second thread of activity...

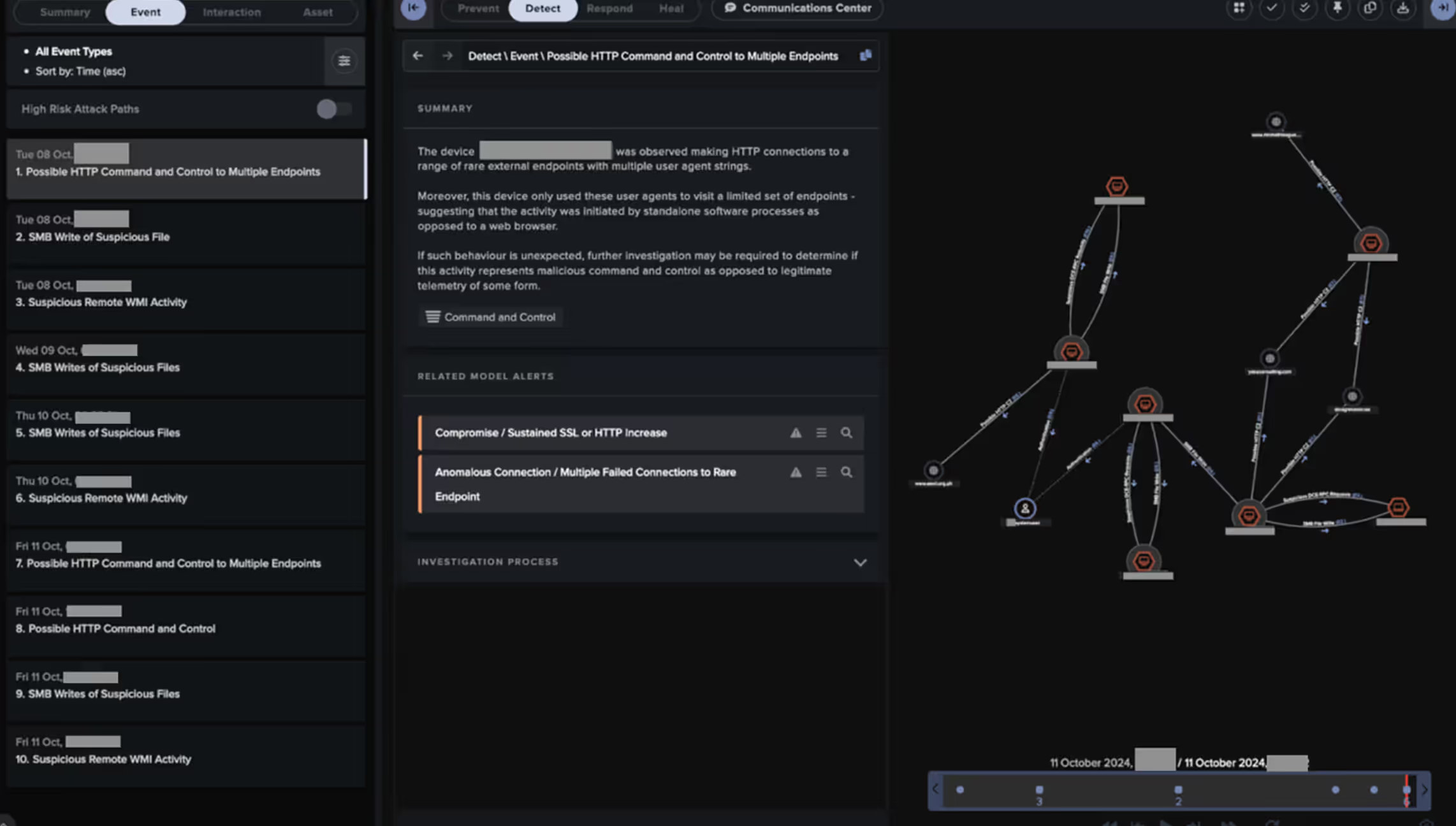

Which occurred several months earlier in October 2024, involved a malicious actor infiltrating the customer's desktop environment via SMB and WMI.

The actor used these compromised desktops to discriminately collect sensitive data from a network share before exfiltrating such data to a web of likely compromised websites.

For each of these threads of activity, Cyber AI Analyst was able to identify and piece together the relevant intrusion steps by hypothesizing, analyzing, and then generating a singular view of the full attack chain.

These Cyber AI Analyst investigations enabled a quicker understanding of the threat actor’s sequence of events and, in some cases, led to faster containment.

Read the full detailed blog on Darktrace’s ShadowPad investigation here!

Case 2: Cyber AI Analyst vs. Blind Eagle: South American APT

Since 2018, APT-C-36, also known as Blind Eagle, has been observed performing cyber-attacks targeting various sectors across multiple countries in Latin America, with a particular focus on Colombia.

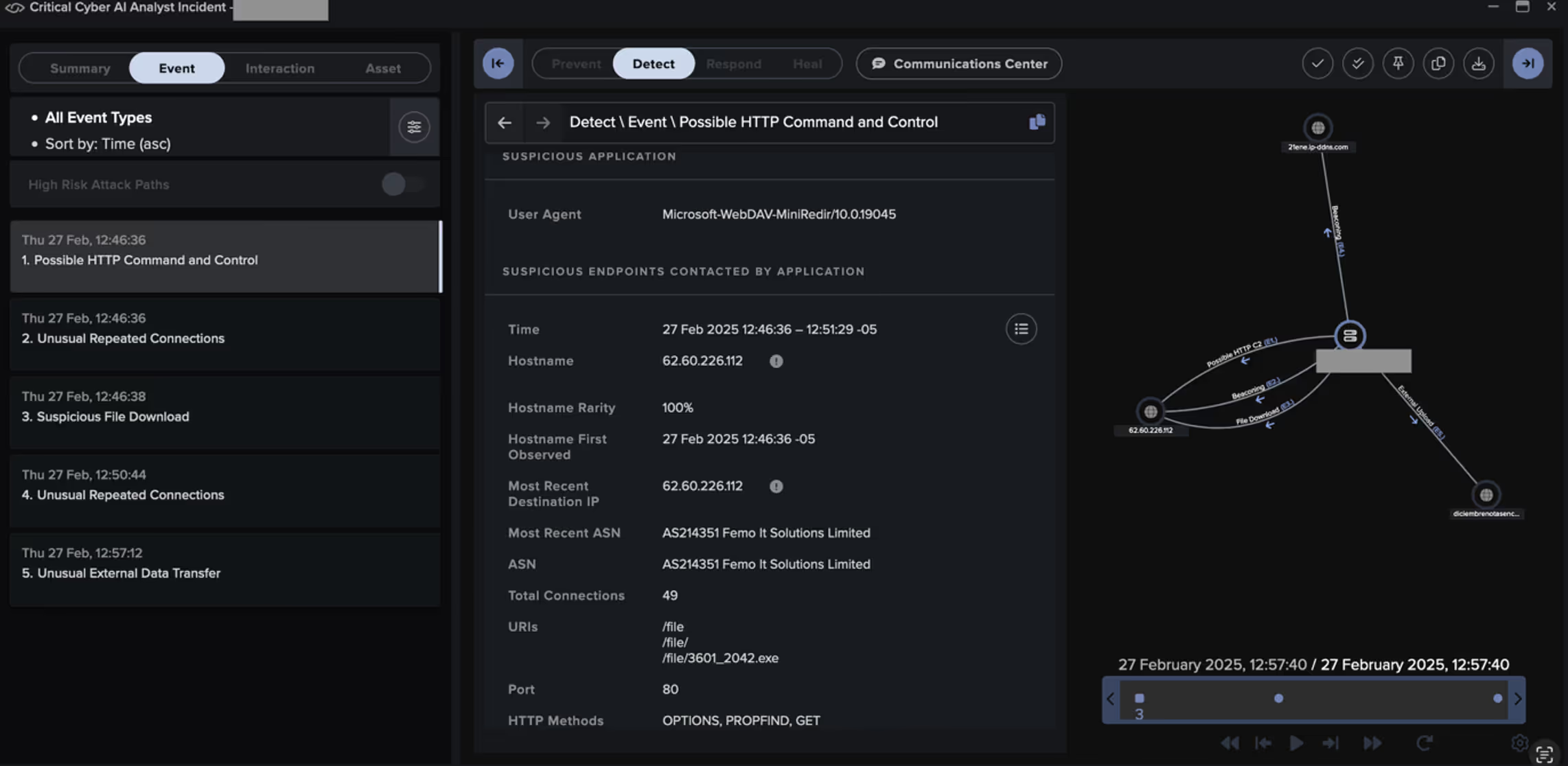

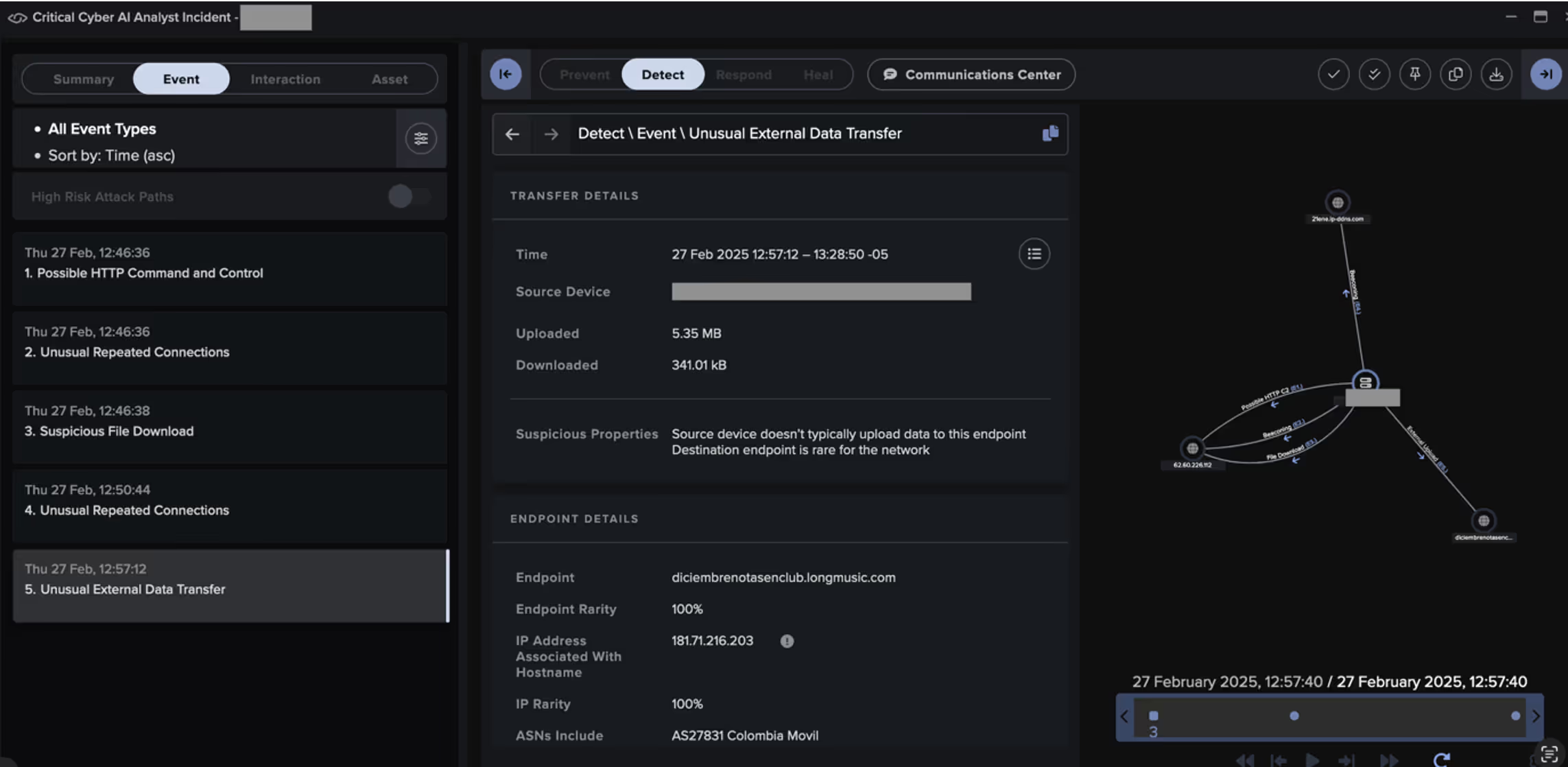

In February 2025, Cyber AI Analyst provided strong coverage of a Blind Eagle intrusion targeting a South America-based public transport provider, identifying and correlating various stages of the attack, including tooling.

In this campaign, threat actors have been observed using phishing emails to deliver malicious URL links to targeted recipients, similar to the way threat actors have previously been observed exploiting CVE-2024-43451, a vulnerability in Microsoft Windows that allows the disclosure of a user’s NTLMv2 password hash upon minimal interaction with a malicious file [4].

In late February 2025, Darktrace observed activity assessed with medium confidence to be associated with Blind Eagle on the network of a customer in Colombia. Darktrace observed a device on the customer’s network being directed over HTTP to a rare external IP, namely 62[.]60[.]226[.]112, which had never previously been seen in this customer’s environment and was geolocated in Germany.
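Indicators in this post are written in defanged form, for example `62[.]60[.]226[.]112`, so they cannot be clicked or resolved by accident. Before such a value can be matched against logs it has to be converted back. The following is a minimal sketch using only Python's standard library. The helper names `refang` and `defang` are illustrative and not part of any Darktrace tooling.

```python
import ipaddress


def refang(indicator: str) -> str:
    """Turn a defanged indicator such as '62[.]60[.]226[.]112' back into plain form."""
    return indicator.replace("[.]", ".")


def defang(indicator: str) -> str:
    """Make an indicator safe to paste into reports by bracketing every dot."""
    return indicator.replace(".", "[.]")


# ip_address() raises ValueError if the refanged string is not a valid IP,
# which doubles as a sanity check on the indicator.
ip = ipaddress.ip_address(refang("62[.]60[.]226[.]112"))

# A publicly routable address is consistent with an external host.
is_external = not ip.is_private
```

The same `refang` helper also handles partially defanged values, where only one dot is bracketed.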

Read the full Blind Eagle threat story here!

Case 3: Cyber AI Analyst vs. Ransomware Gang

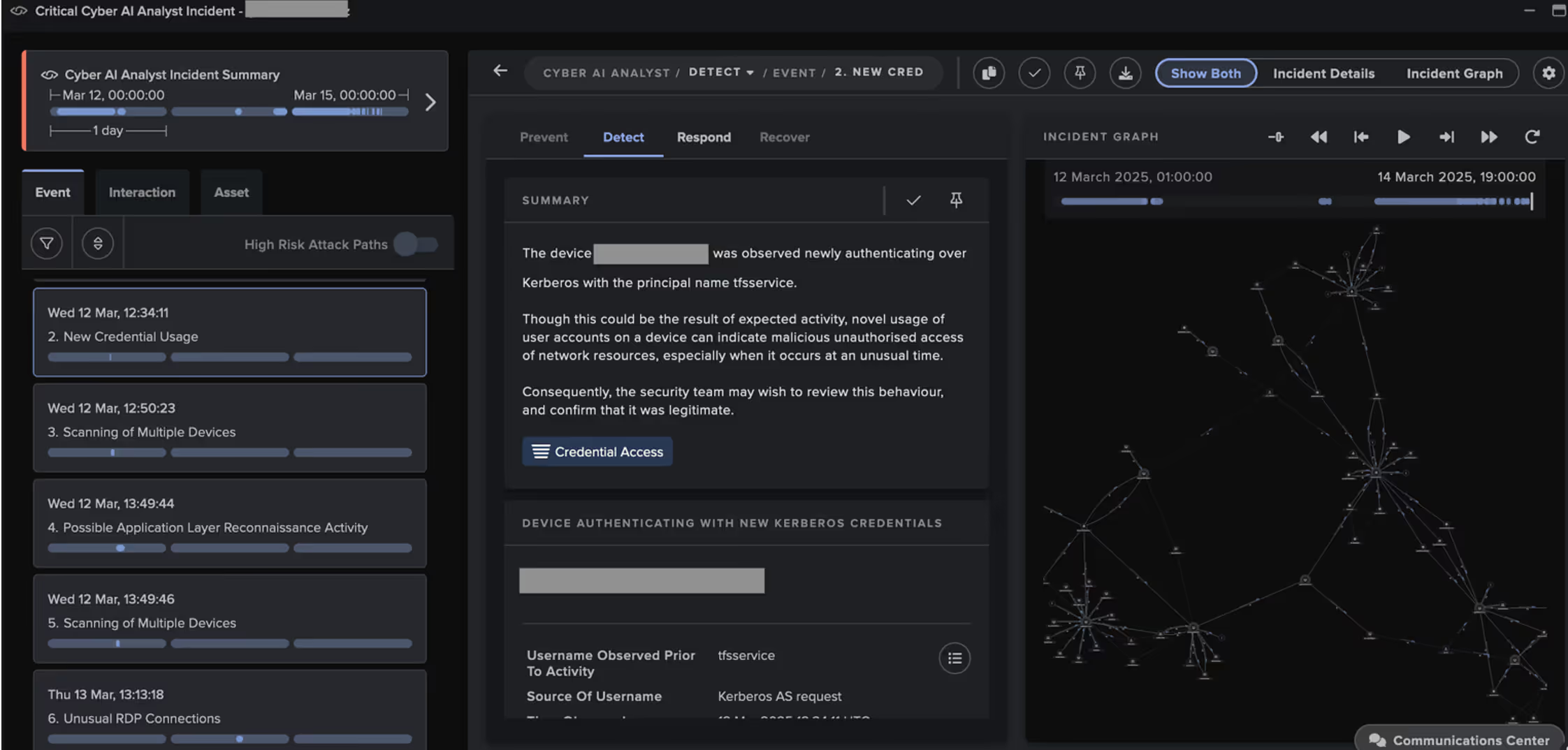

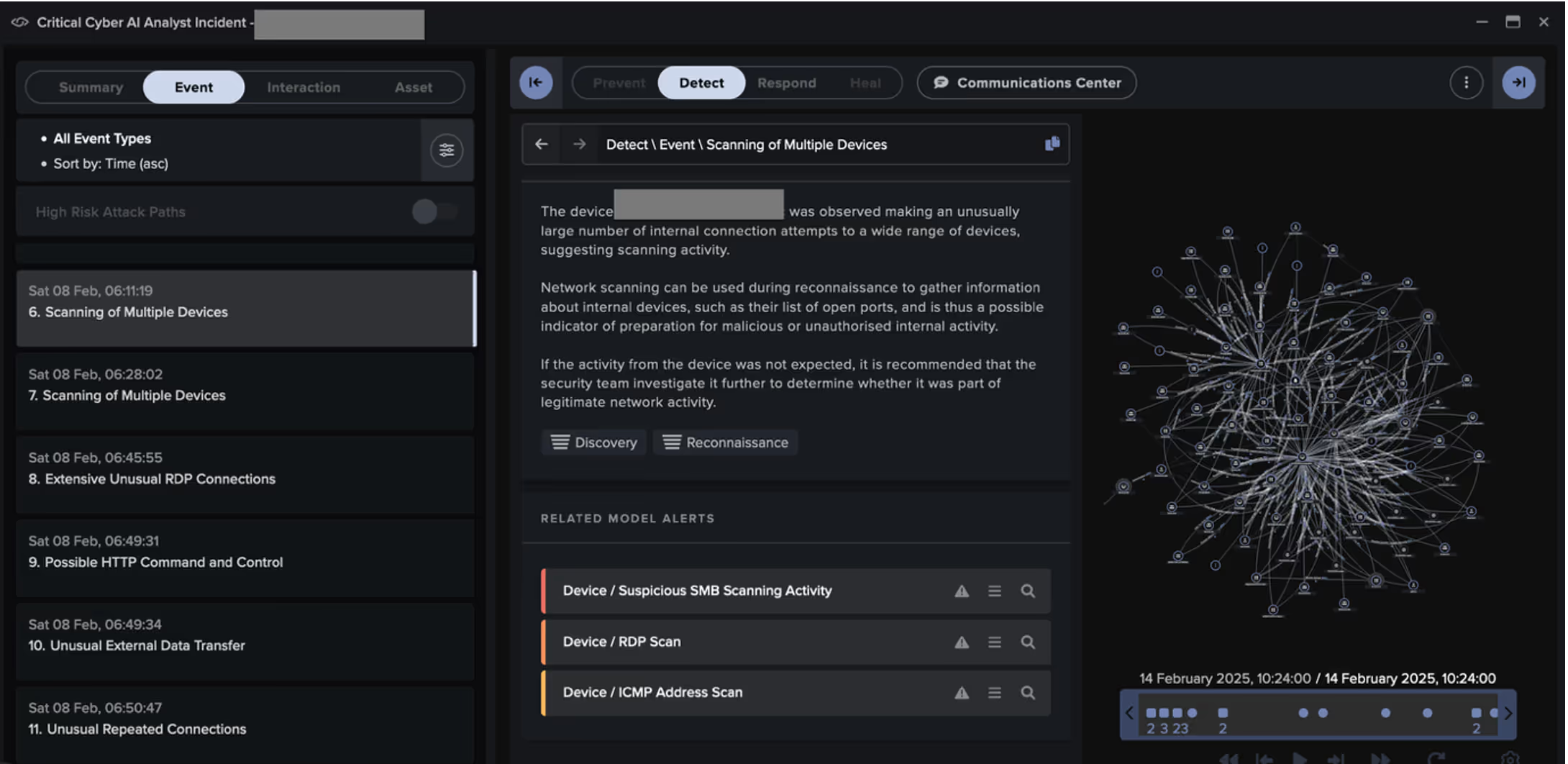

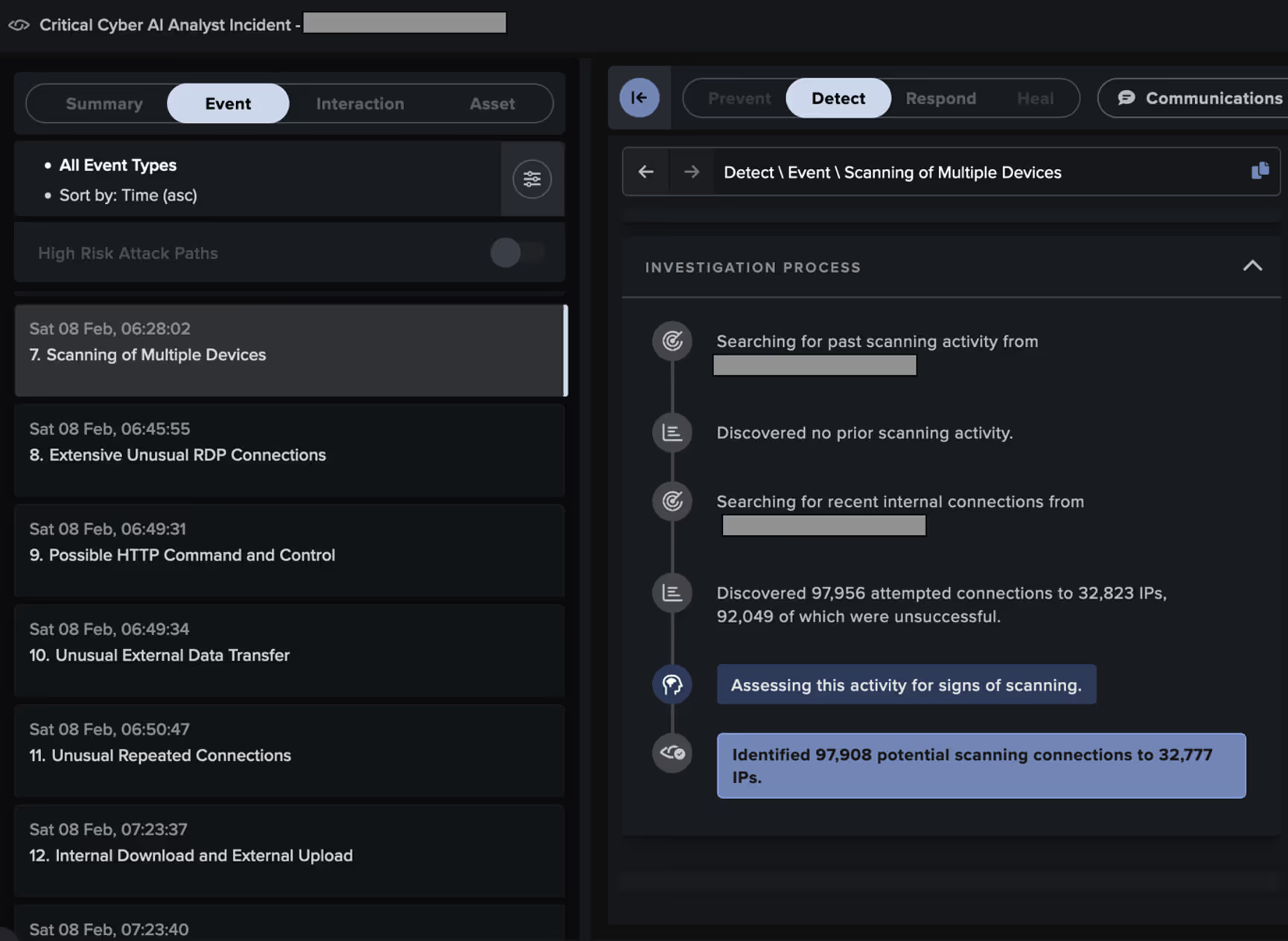

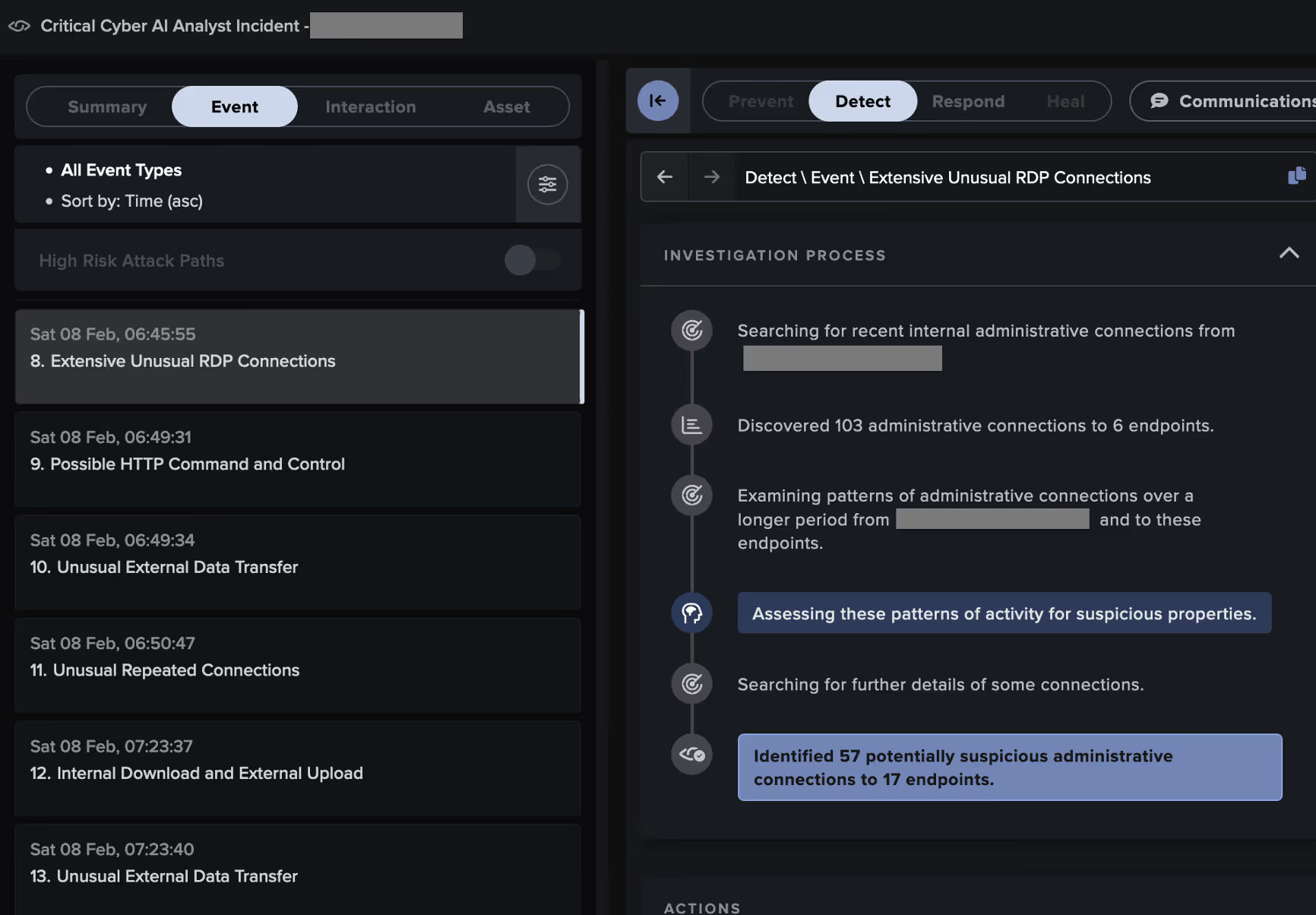

In mid-March 2025, a malicious actor gained access to a customer’s network through their VPN. Using the credential 'tfsservice', the actor conducted network reconnaissance, before leveraging the Zerologon vulnerability and the Directory Replication Service to obtain credentials for the high-privilege accounts, ‘_svc_generic’ and ‘administrator’.

The actor then abused these account credentials to pivot over RDP to internal servers, such as domain controllers (DCs). Targeted systems showed signs of using various tools, including the remote monitoring and management (RMM) tool AnyDesk, the proxy tool SystemBC, the data compression tool WinRAR, and the data transfer tool WinSCP.

Finally, the actor collected and exfiltrated several gigabytes of data to the cloud storage services MEGA, Backblaze, and LimeWire before returning to attempt ransomware detonation.

Cyber AI Analyst identified, analyzed, and reported on every stage of this attack, resulting in a threat tray of 34 Incident Events consolidated into a single view of the attack chain.

Cyber AI Analyst identified activity associated with the following tactics from the MITRE ATT&CK framework:

- Initial Access

- Persistence

- Privilege Escalation

- Credential Access

- Discovery

- Lateral Movement

- Execution

- Command and Control

- Exfiltration

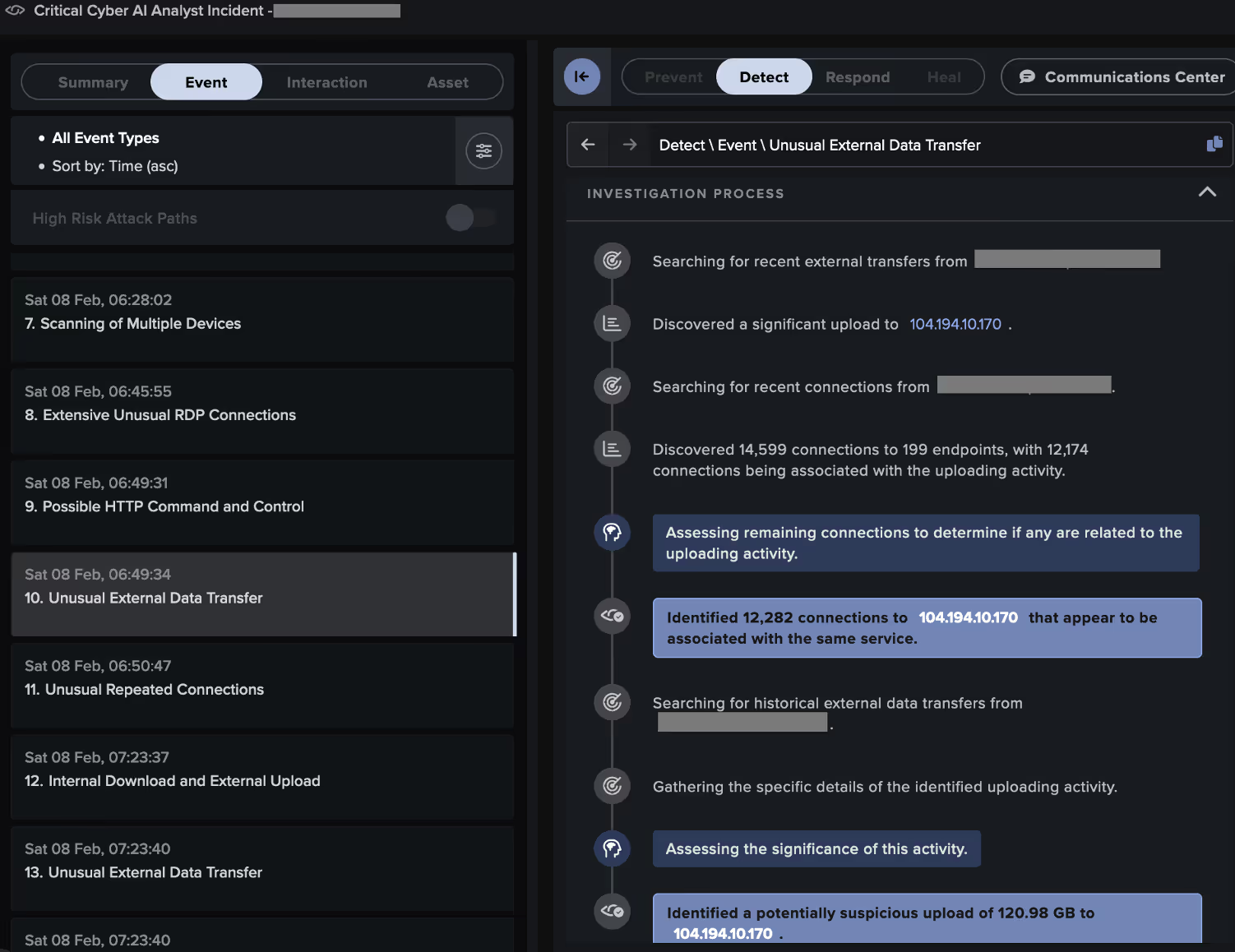

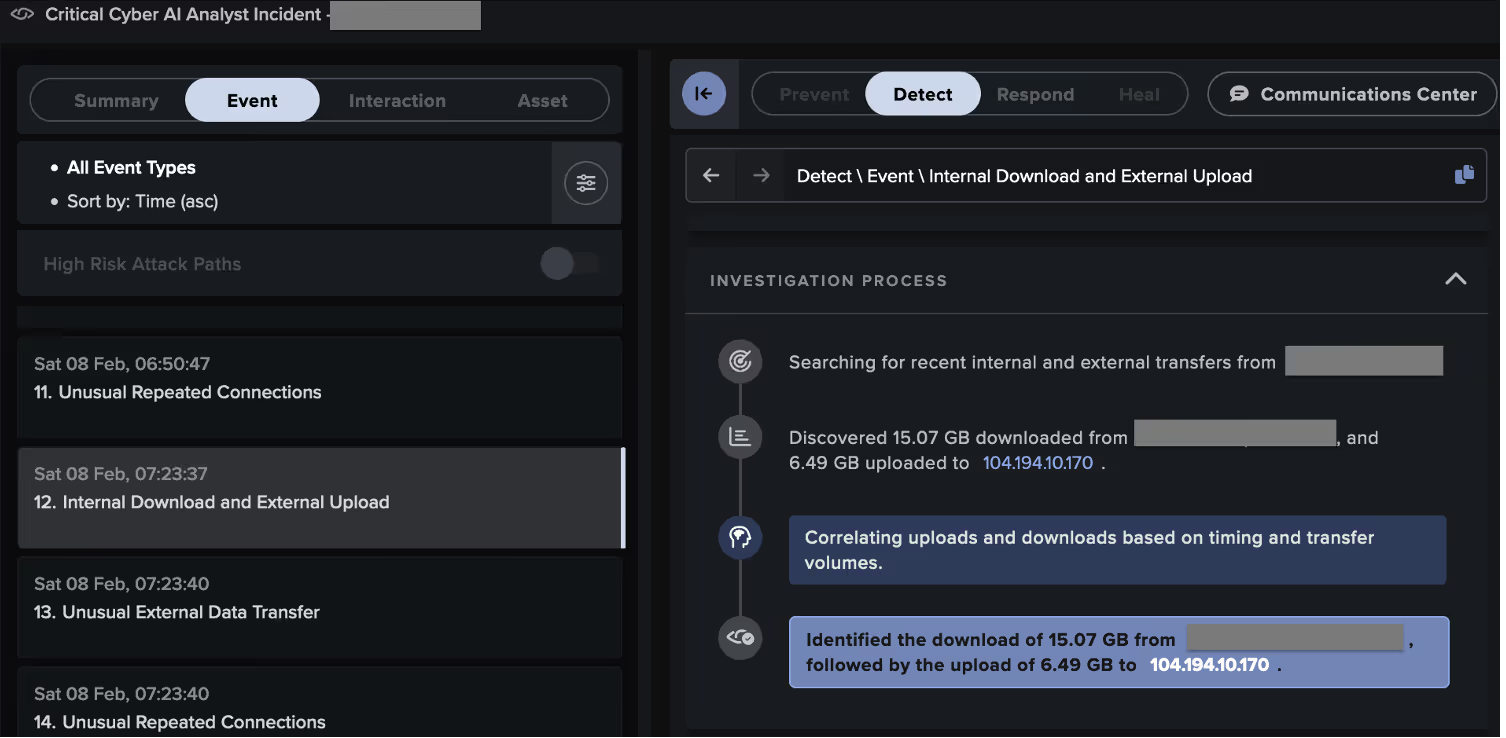

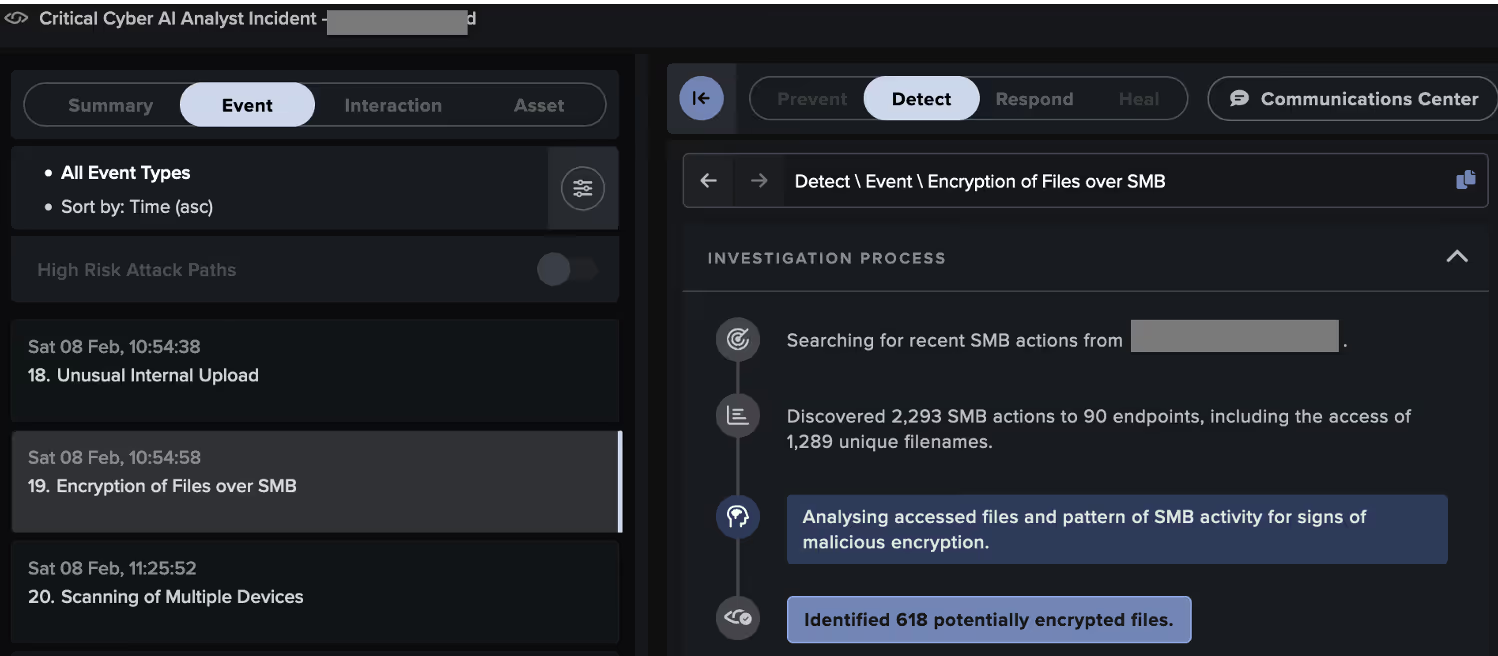

Case 4: Cyber AI Analyst vs. RansomHub

A malicious actor appeared to have entered the customer’s network through their VPN, using a likely attacker-controlled device named 'DESKTOP-QIDRDSI'. The actor then pivoted to other systems via RDP and distributed payloads over SMB.

Some systems targeted by the attacker went on to exfiltrate data to the likely ReliableSite Bare Metal server, 104.194.10[.]170, via HTTP POSTs over port 5000. Others executed RansomHub ransomware, as evidenced by their SMB-based distribution of ransom notes named 'README_b2a830.txt' and their addition of the extension '.b2a830' to the names of files in network shares.
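The naming pattern described above, a ransom note called 'README_b2a830.txt' alongside files renamed with the '.b2a830' extension, lends itself to a simple check over file names seen on a share. The sketch below is illustrative only. It assumes the identifier is always six hexadecimal characters, which is a generalization from the single example in this incident. The function names and sample file names are hypothetical.

```python
import re

# Assumption: the per-victim identifier is six hex characters, as in 'b2a830'.
NOTE_PATTERN = re.compile(r"^README_([0-9a-f]{6})\.txt$", re.IGNORECASE)


def ransom_ids(filenames):
    """Return the identifiers found in ransom-note style file names."""
    ids = set()
    for name in filenames:
        match = NOTE_PATTERN.match(name)
        if match:
            ids.add(match.group(1).lower())
    return ids


def likely_encrypted(filenames):
    """Return files whose extension matches an identifier taken from a ransom note."""
    suffixes = tuple("." + ident for ident in ransom_ids(filenames))
    if not suffixes:
        return []
    return [name for name in filenames if name.lower().endswith(suffixes)]


# Hypothetical listing of a network share.
share_listing = [
    "README_b2a830.txt",
    "budget.xlsx.b2a830",
    "notes.docx",
    "photo.png.b2a830",
]
ids = ransom_ids(share_listing)
flagged = likely_encrypted(share_listing)
```

Tying the extension to an identifier recovered from a note, rather than matching any six-character extension, keeps the check from flagging unrelated files.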

Through its live investigation of this attack, Cyber AI Analyst created and reported on 38 Incident Events that formed part of a single, wider incident, providing a full picture of the threat actor’s behavior and tactics, techniques, and procedures (TTPs). It identified activity associated with the following tactics from the MITRE ATT&CK framework:

- Execution

- Discovery

- Lateral Movement

- Collection

- Command and Control

- Exfiltration

- Impact (i.e., encryption)
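The tactic lists for Case 3 and Case 4 can be compared by mapping each name to its MITRE ATT&CK Enterprise tactic ID and sorting in matrix order. The sketch below does that using the lists exactly as given in this post. The variable and function names are illustrative.

```python
# MITRE ATT&CK Enterprise tactics in matrix order, with their tactic IDs.
ATTACK_TACTICS = [
    ("TA0043", "Reconnaissance"),
    ("TA0042", "Resource Development"),
    ("TA0001", "Initial Access"),
    ("TA0002", "Execution"),
    ("TA0003", "Persistence"),
    ("TA0004", "Privilege Escalation"),
    ("TA0005", "Defense Evasion"),
    ("TA0006", "Credential Access"),
    ("TA0007", "Discovery"),
    ("TA0008", "Lateral Movement"),
    ("TA0009", "Collection"),
    ("TA0011", "Command and Control"),
    ("TA0010", "Exfiltration"),
    ("TA0040", "Impact"),
]

# Tactics as listed for each case in this post.
CASE_3 = {
    "Initial Access", "Persistence", "Privilege Escalation", "Credential Access",
    "Discovery", "Lateral Movement", "Execution", "Command and Control",
    "Exfiltration",
}
CASE_4 = {
    "Execution", "Discovery", "Lateral Movement", "Collection",
    "Command and Control", "Exfiltration", "Impact",
}


def in_matrix_order(tactics):
    """Return (tactic_id, name) pairs for the given names, in ATT&CK matrix order."""
    return [(tid, name) for tid, name in ATTACK_TACTICS if name in tactics]


case_4_ordered = in_matrix_order(CASE_4)
shared = [name for _, name in in_matrix_order(CASE_3 & CASE_4)]
only_case_4 = [name for _, name in in_matrix_order(CASE_4 - CASE_3)]
```

Here `shared` holds the five tactics reported in both intrusions, while `only_case_4` holds Collection and Impact, which appear only in the RansomHub list.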

Conclusion

Security teams are challenged to keep up with a rapidly evolving cyber-threat landscape, now powered by AI in the hands of attackers, alongside the growing scope and complexity of digital infrastructure across the enterprise.

Traditional security methods, even those that use some simple machine learning, are no longer sufficient. These tools cannot keep pace with all possible attack vectors or respond quickly enough to machine-speed attacks, whose complexity goes beyond known and expected patterns. Security teams require a step up in their detection capabilities, leveraging machine learning to understand the environment, filter out the noise, and take action where threats are identified. This is where Cyber AI Analyst steps in to help.

Credit to Nathaniel Jones (VP, Security & AI Strategy, FCISO), Sam Lister (Security Researcher), Emma Foulger (Global Threat Research Operations Lead), and Ryan Traill (Analyst Content Lead)


Learn more about Cyber AI Analyst

Discover how Cyber AI Analyst boosts SOC efficiency with faster threat triage, investigation, and response.
