What is Blind Eagle?

Since 2018, APT-C-36, also known as Blind Eagle, has been observed performing cyber-attacks targeting various sectors across multiple countries in Latin America, with a particular focus on Colombian organizations.

Blind Eagle characteristically targets government institutions, financial organizations, and critical infrastructure [1][2].

Attacks carried out by Blind Eagle actors typically start with a phishing email, and the group has been observed using various Remote Access Trojan (RAT) variants, which often have built-in methods for hiding command-and-control (C2) traffic from detection [3].

What we know about Blind Eagle from a recent campaign

Since November 2024, Blind Eagle actors have been conducting an ongoing campaign targeting Colombian organizations [1].

In this campaign, threat actors have been observed using phishing emails to deliver malicious links to targeted recipients, similar to the way threat actors have previously been observed exploiting CVE-2024-43451, a vulnerability in Microsoft Windows that allows the disclosure of a user’s NTLMv2 password hash upon minimal interaction with a malicious file [4].

Despite Microsoft patching this vulnerability in November 2024 [1][4], Blind Eagle actors have continued to exploit the minimal interaction mechanism, though no longer with the intent of harvesting NTLMv2 password hashes. Instead, targets are sent phishing emails containing a malicious URL which, when clicked, initiates the download of a malicious file. This file is then activated through minimal user interaction.

Clicking on the file triggers a WebDAV request over HTTP on port 80, using the user agent ‘Microsoft-WebDAV-MiniRedir/10.0.19044’. WebDAV (Web Distributed Authoring and Versioning) is an extension of HTTP that allows files or complete directories to be made available over the internet and transferred to devices [5]. The next-stage payload is then downloaded via another WebDAV request, and the malware is executed on the target device.
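Because the WebDAV stage announces itself through a distinctive User-Agent string, it can be hunted for in HTTP logs. The following is a minimal sketch, assuming a hypothetical log format of dicts with `src`, `dst`, `port` and `user_agent` keys (not any real product schema), and using documentation IP addresses rather than real indicators.

```python
from typing import Iterable

# Prefix of the User-Agent string sent by the Windows WebDAV mini-redirector,
# as seen in this campaign (e.g. "Microsoft-WebDAV-MiniRedir/10.0.19044").
WEBDAV_UA_PREFIX = "Microsoft-WebDAV-MiniRedir/"


def flag_webdav_requests(records: Iterable[dict]) -> list:
    """Return the HTTP records whose User-Agent identifies the WebDAV mini-redirector.

    Each record is assumed to be a dict with hypothetical keys "src", "dst",
    "port" and "user_agent"; this is an illustrative format only.
    """
    return [r for r in records if r.get("user_agent", "").startswith(WEBDAV_UA_PREFIX)]


# Example records using documentation (RFC 5737) addresses.
logs = [
    {"src": "10.0.0.5", "dst": "203.0.113.10", "port": 80,
     "user_agent": "Microsoft-WebDAV-MiniRedir/10.0.19044"},
    {"src": "10.0.0.5", "dst": "198.51.100.7", "port": 443,
     "user_agent": "Mozilla/5.0"},
]

hits = flag_webdav_requests(logs)
print([h["dst"] for h in hits])  # ['203.0.113.10']
```

Legitimate WebDAV use, such as mapped network drives, sends the same user agent, so a match is a lead for investigation (for example, when the destination is a rare external IP) rather than proof of compromise.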

Attackers are notified when a recipient downloads the malicious files they send, giving them insight into potential targets [1].

Darktrace’s coverage of Blind Eagle

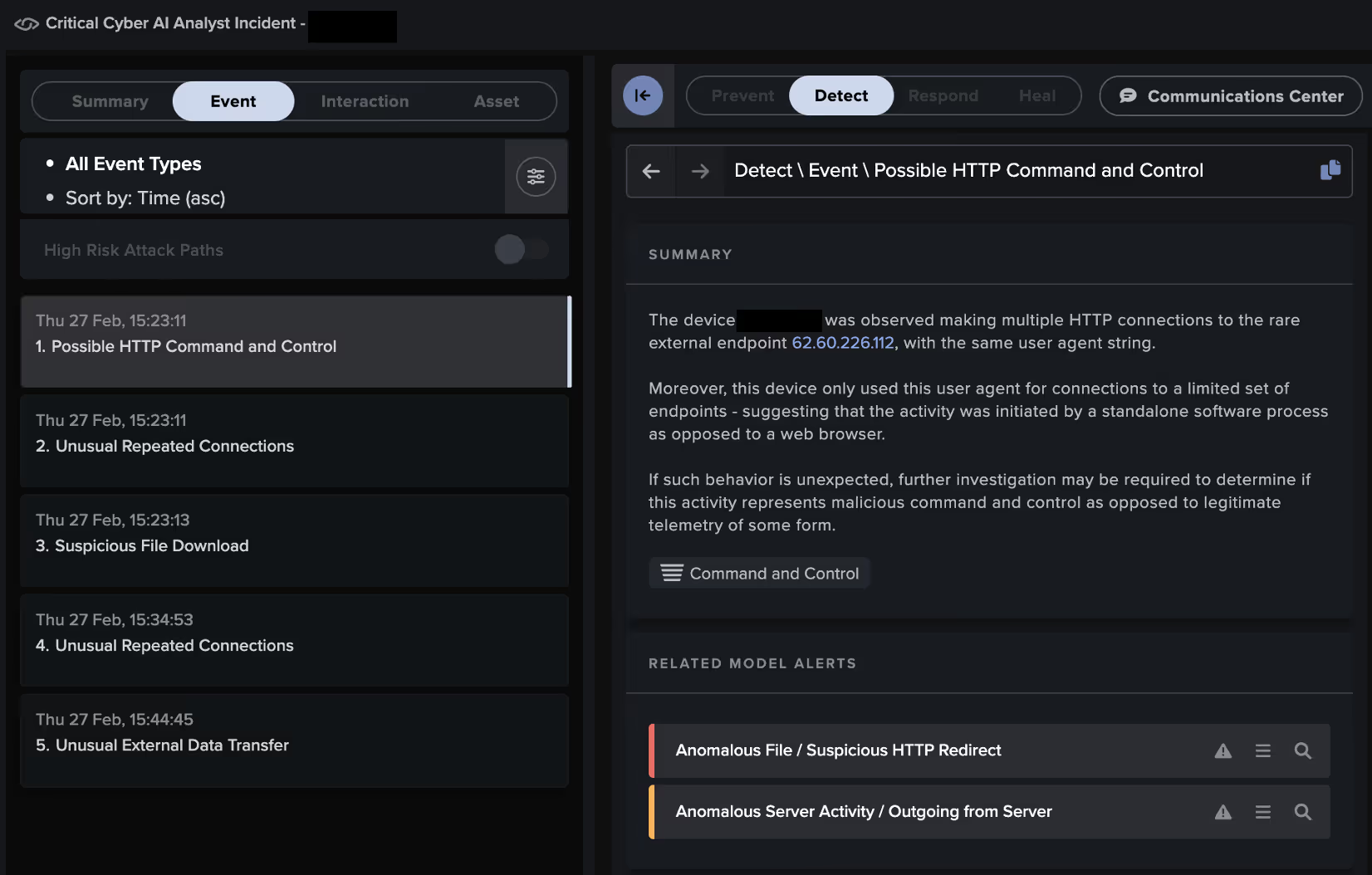

In late February 2025, Darktrace observed activity assessed with medium confidence to be associated with Blind Eagle on the network of a customer in Colombia.

Within a period of just five hours, Darktrace / NETWORK detected a device being redirected through a rare external location, downloading multiple executable files, and ultimately exfiltrating data from the customer’s environment.

Since the customer did not have Darktrace’s Autonomous Response capability enabled on their network, no actions were taken to contain the compromise, allowing it to escalate until the customer’s security team responded to the alerts provided by Darktrace.

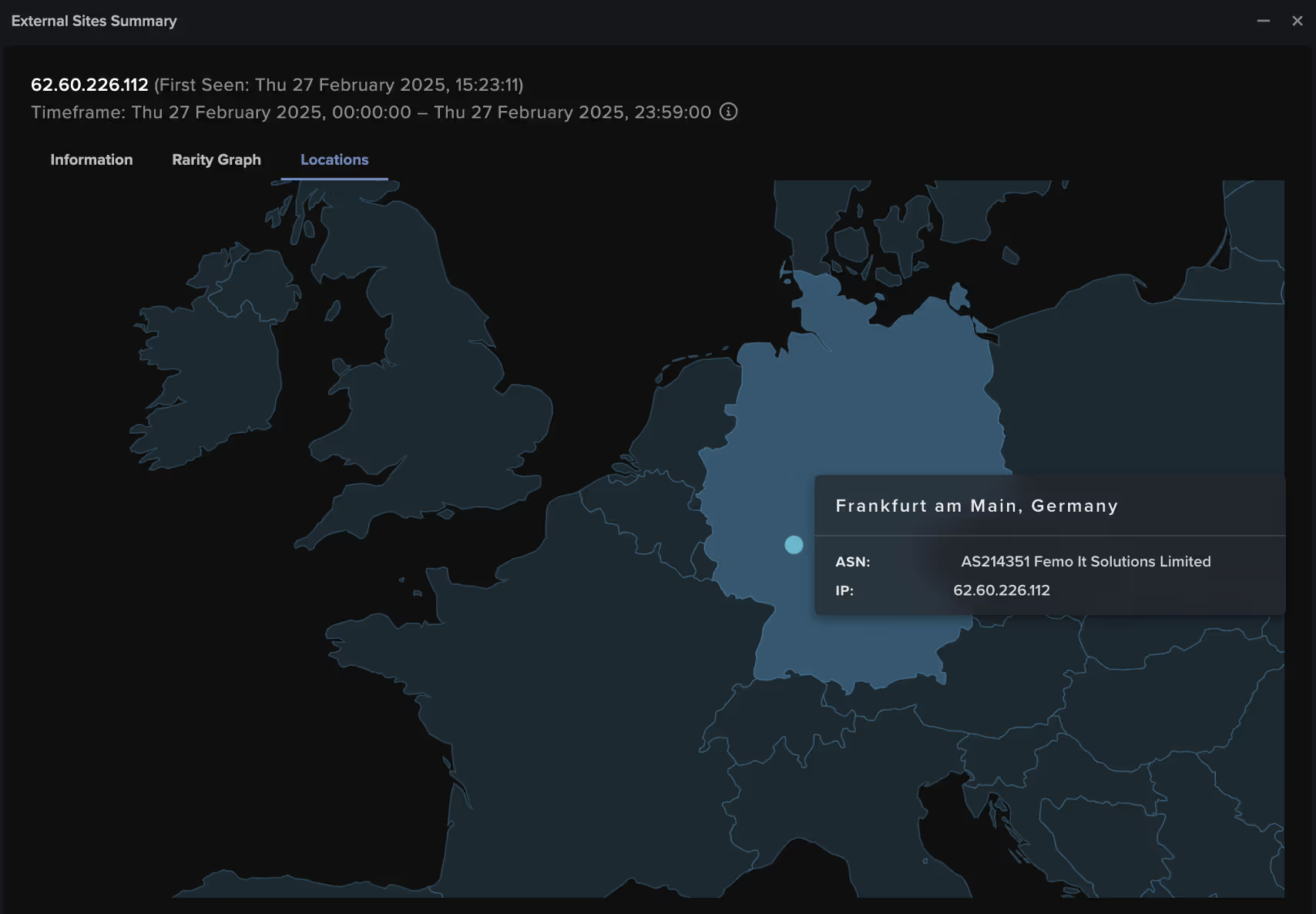

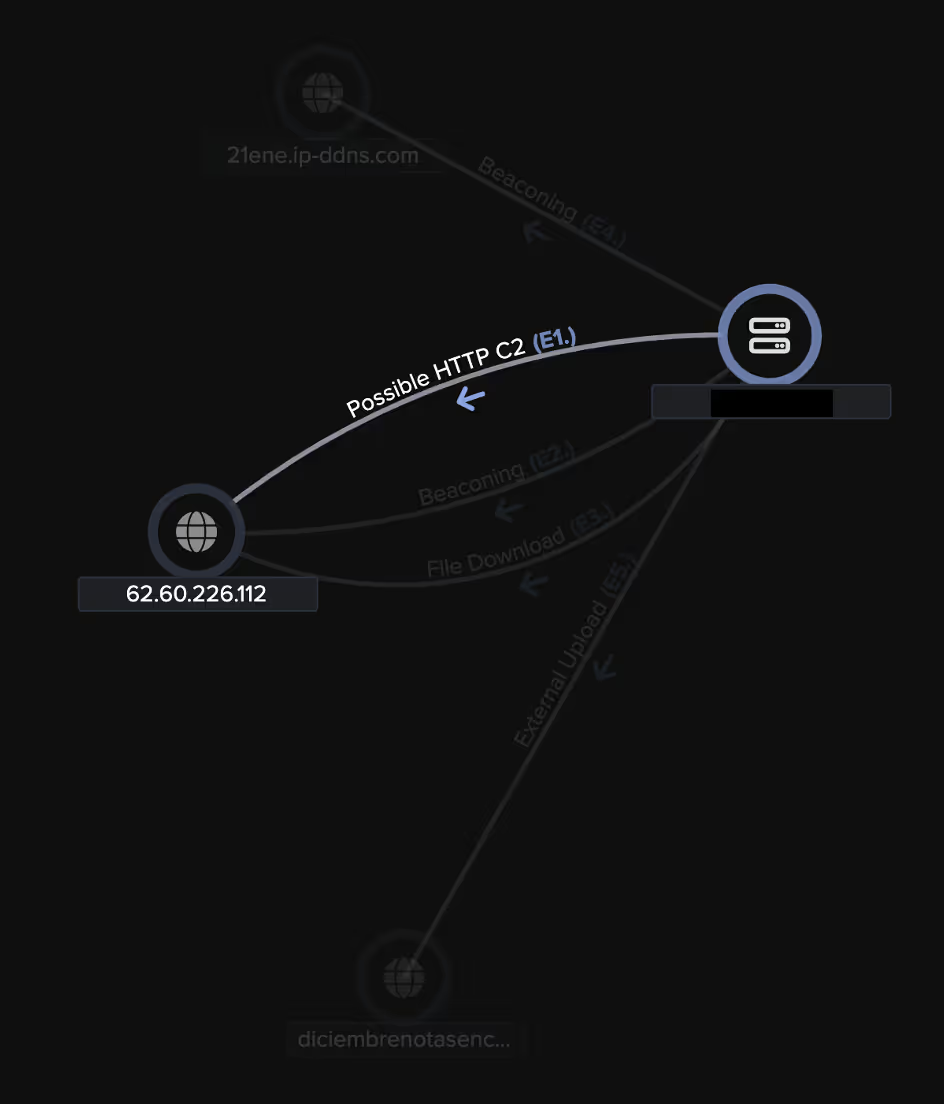

Darktrace observed a device on the customer’s network being redirected over HTTP to a rare external IP, 62[.]60[.]226[.]112, which had never previously been seen in this customer’s environment and was geolocated in Germany. Multiple open-source intelligence (OSINT) providers have since linked this endpoint with phishing and malware campaigns [9].

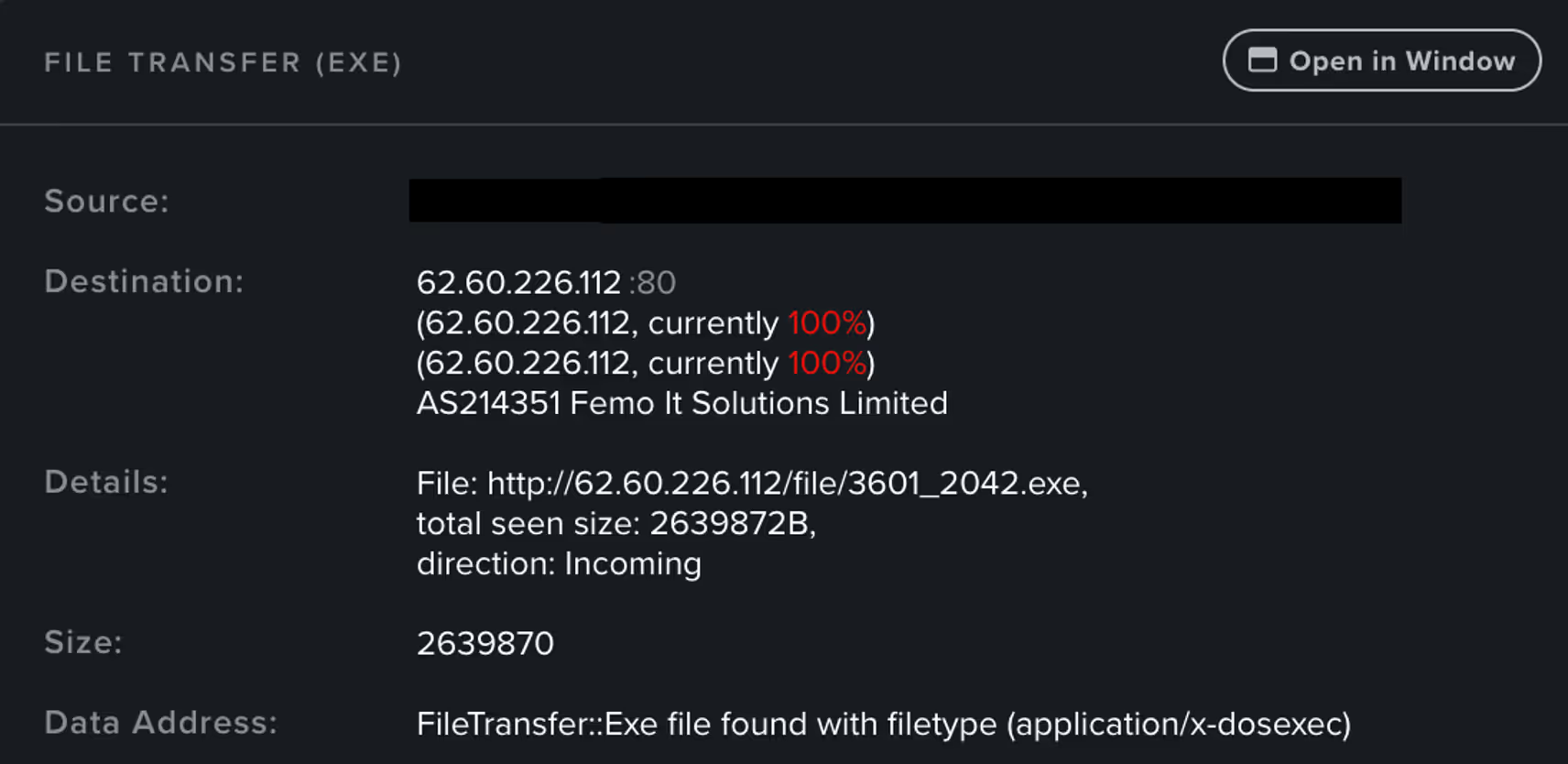

The device then proceeded to download the executable file hxxp://62[.]60[.]226[.]112/file/3601_2042.exe.
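The underlying idea of flagging an executable download from a location never seen before in the environment can be illustrated with a small sketch. This is a simplification, assuming that "rare" means "first time seen"; it is not how Darktrace models rarity, and the class name `RareExeDetector` and the example URLs are hypothetical.

```python
from urllib.parse import urlparse


class RareExeDetector:
    """Flag .exe downloads from hosts never seen before in the environment.

    "Rare" is simplified to "first time seen"; this is an illustrative
    assumption, not a description of any product's rarity model.
    """

    def __init__(self) -> None:
        self.seen_hosts = set()

    def observe(self, url: str) -> bool:
        parsed = urlparse(url)
        host = (parsed.hostname or "").lower()
        is_exe = parsed.path.lower().endswith(".exe")
        is_new_host = host not in self.seen_hosts
        self.seen_hosts.add(host)  # remember the host for future requests
        return is_exe and is_new_host


detector = RareExeDetector()
print(detector.observe("http://example.com/index.html"))        # False: not an executable
print(detector.observe("http://example.com/setup.exe"))         # False: host already seen
print(detector.observe("http://203.0.113.10/file/sample.exe"))  # True: .exe from a never-seen host
```

In practice, the set of seen hosts would be built from a long baseline period, so that only genuinely unfamiliar destinations stand out.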

The device was then observed making unusual connections to the rare endpoint 21ene.ip-ddns[.]com and performing unusual external data activity.

Dynamic DNS services automatically update a domain name’s DNS record whenever the underlying IP address changes, so a device can reach an endpoint by a stable domain name even though the IP address behind it changes. As such, malicious actors can use these services to dynamically establish connections to C2 infrastructure whose IP addresses change over time [6].
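One simple way to surface such connections is to check hostnames against the parent domains of known dynamic DNS providers. The sketch below assumes a short, illustrative and non-exhaustive suffix list (`DDNS_SUFFIXES`); a real deployment would rely on a maintained list. The boundary check ensures that only the listed domain or its subdomains match, not unrelated names that merely end with the same characters.

```python
# Illustrative, non-exhaustive list of dynamic DNS parent domains (assumption).
DDNS_SUFFIXES = ("ip-ddns.com", "duckdns.org", "no-ip.org", "ddns.net")


def is_dynamic_dns(hostname: str) -> bool:
    """True if hostname is, or is a subdomain of, a listed dynamic DNS domain."""
    host = hostname.lower().rstrip(".")  # normalise case and any trailing root dot
    return any(host == suffix or host.endswith("." + suffix) for suffix in DDNS_SUFFIXES)


print(is_dynamic_dns("example.duckdns.org"))  # True
print(is_dynamic_dns("notduckdns.org"))       # False: not a subdomain of duckdns.org
```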

Further investigation into this dynamic endpoint using OSINT revealed multiple associations with previous likely Blind Eagle compromises, as well as Remcos malware, a RAT commonly deployed via phishing campaigns [7][8][10].

![Darktrace’s detection of the affected device connecting to the suspicious dynamic DNS endpoint, 21ene.ip-ddns[.]com.](https://cdn.prod.website-files.com/626ff4d25aca2edf4325ff97/685c5e35886ec88c0cfe90d1_Screenshot%202025-06-25%20at%201.34.48%E2%80%AFPM.avif)

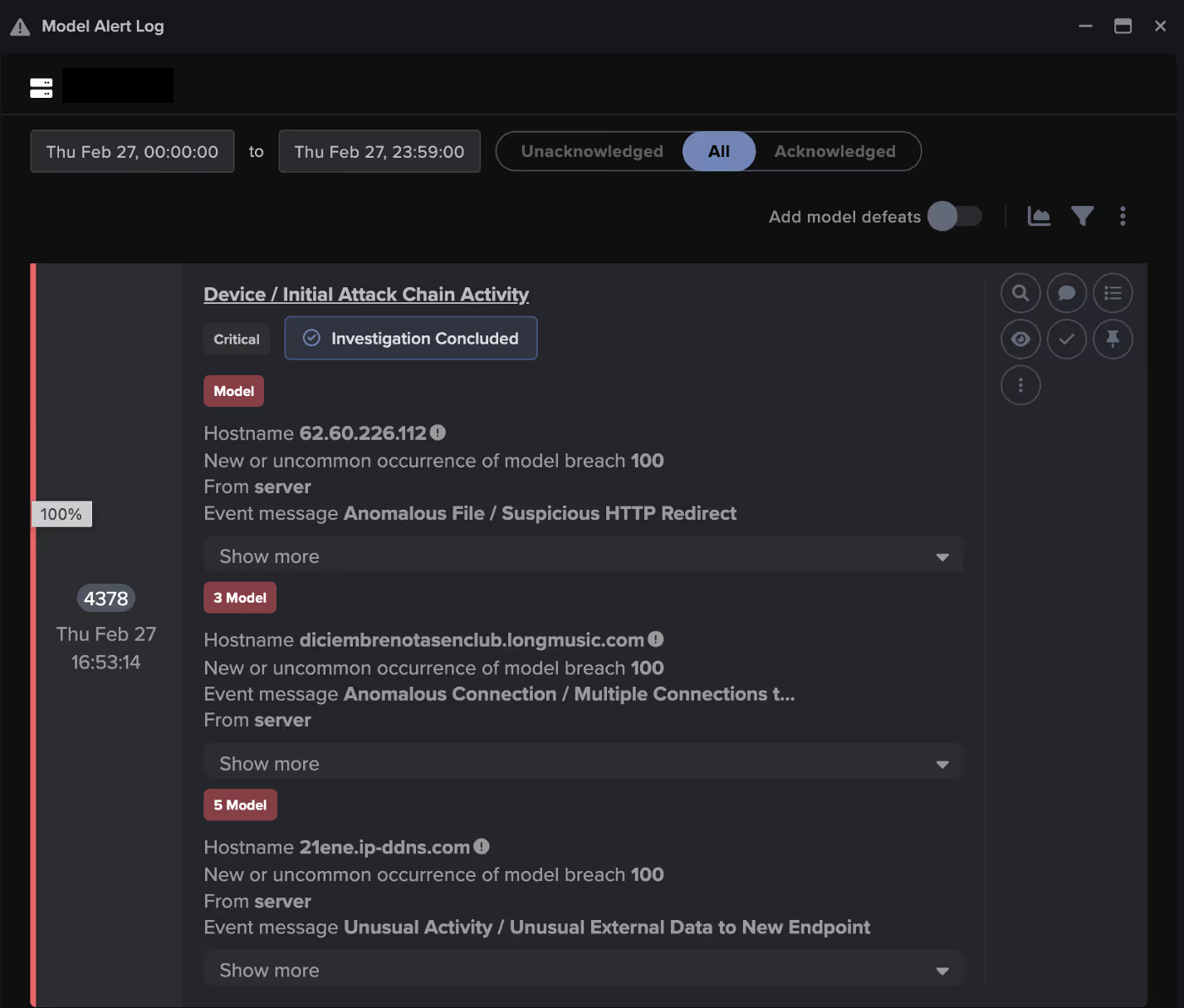

Shortly after this, Darktrace observed the user agent ‘Microsoft-WebDAV-MiniRedir/10.0.19045’, indicating use of the aforementioned WebDAV protocol. The device was subsequently observed connecting to an endpoint associated with GitHub and downloading data, suggesting that it was retrieving a malicious tool or payload. The device then began to communicate with the malicious endpoint diciembrenotasenclub[.]longmusic[.]com over TCP port 1512, a port it had not previously used [11].
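Connections over a port that a device has never used before are a simple but useful signal. The following minimal sketch keeps a per-device history of external TCP ports, seeded from a hypothetical baseline learning period; the class name `NewPortTracker` and the example addresses are assumptions for illustration only.

```python
from collections import defaultdict


class NewPortTracker:
    """Track which external TCP ports each device has previously connected to."""

    def __init__(self, baseline: dict) -> None:
        # baseline maps device -> iterable of ports seen during a learning period
        self._ports = defaultdict(set, {dev: set(ports) for dev, ports in baseline.items()})

    def is_new(self, device: str, port: int) -> bool:
        """Return True the first time a device uses a port outside its history."""
        new = port not in self._ports[device]
        self._ports[device].add(port)
        return new


tracker = NewPortTracker({"10.0.0.5": {80, 443}})
print(tracker.is_new("10.0.0.5", 443))   # False: part of the baseline
print(tracker.is_new("10.0.0.5", 1512))  # True: first use of TCP port 1512
print(tracker.is_new("10.0.0.5", 1512))  # False: now part of the history
```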

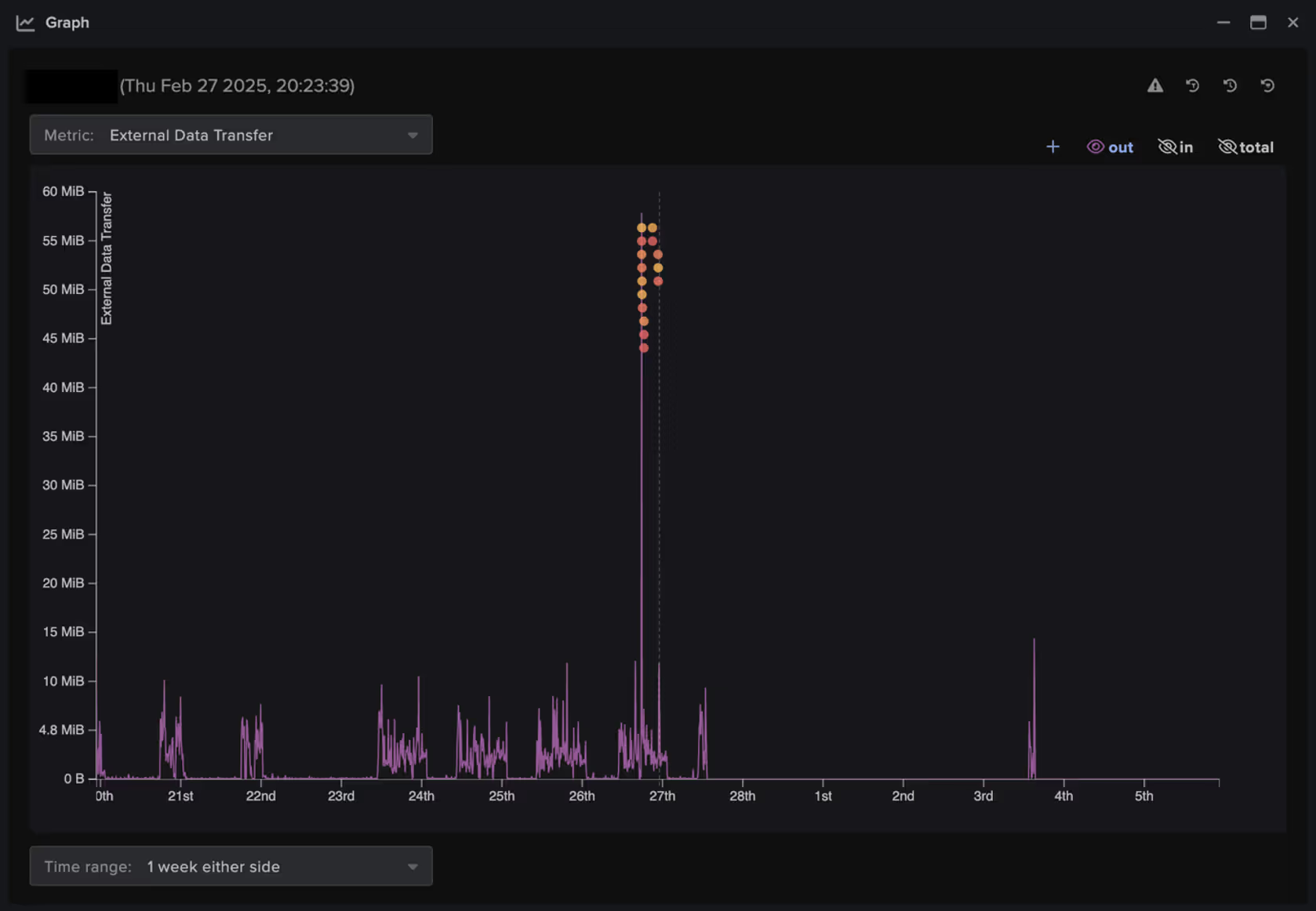

Around this time, the device was also observed uploading data to the endpoints 21ene.ip-ddns[.]com and diciembrenotasenclub[.]longmusic[.]com, with transfers of 60 MiB and 5.6 MiB observed respectively.
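Summing outbound bytes per destination is the basic arithmetic behind spotting transfers like these. Note that 1 MiB is 1,048,576 bytes, so 60 MiB is 62,914,560 bytes (about 62.9 MB). The sketch below assumes a hypothetical flow format of `(destination, bytes_out)` pairs, placeholder destination names, and an arbitrary 5 MiB threshold chosen only for illustration.

```python
from collections import Counter

MIB = 1024 * 1024  # bytes in one mebibyte (MiB)


def outbound_mib_by_destination(flows):
    """Sum outbound bytes per destination and convert to MiB (1 decimal place).

    flows is an iterable of (destination, bytes_out) pairs (hypothetical format).
    """
    totals = Counter()
    for dst, bytes_out in flows:
        totals[dst] += bytes_out
    return {dst: round(total / MIB, 1) for dst, total in totals.items()}


def flag_large_uploads(flows, threshold_mib=5.0):
    """Return destinations whose total upload meets a hypothetical MiB threshold."""
    volumes = outbound_mib_by_destination(flows)
    return sorted(dst for dst, mib in volumes.items() if mib >= threshold_mib)


flows = [
    ("c2-a.example", 40 * MIB),
    ("c2-a.example", 20 * MIB),
    ("c2-b.example", int(5.6 * MIB)),
    ("cdn.example", 200_000),
]
print(outbound_mib_by_destination(flows))
# {'c2-a.example': 60.0, 'c2-b.example': 5.6, 'cdn.example': 0.2}
print(flag_large_uploads(flows))  # ['c2-a.example', 'c2-b.example']
```

A fixed threshold is only a starting point; volume is more telling when compared with what the device normally sends to new or rare endpoints.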

This chain of activity triggered an Enhanced Monitoring model alert in Darktrace / NETWORK. These high-priority model alerts are designed to trigger in response to higher fidelity indicators of compromise (IoCs), suggesting that a device is performing activity consistent with a compromise.

A second Enhanced Monitoring model was also triggered by this device following the download of the aforementioned executable file (hxxp://62[.]60[.]226[.]112/file/3601_2042.exe) and the observed increase in C2 activity.

Following this activity, Darktrace continued to observe the device beaconing to the 21ene.ip-ddns[.]com endpoint.
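Beaconing is characterised by connections at near-regular intervals. A common, simple heuristic is the coefficient of variation of the gaps between connections: the lower it is, the more periodic the traffic. The sketch below assumes timestamps in seconds; the function name and the `min_events` and `max_jitter_ratio` defaults are hypothetical tuning choices, not values from the investigation.

```python
from statistics import mean, pstdev


def looks_like_beaconing(timestamps, min_events=5, max_jitter_ratio=0.1):
    """Return True if connection times (in seconds) are near-periodic.

    Uses the coefficient of variation (std dev / mean) of the intervals
    between consecutive connections; thresholds are illustrative assumptions.
    """
    if len(timestamps) < min_events:
        return False  # too few events to judge periodicity
    ts = sorted(timestamps)
    intervals = [later - earlier for earlier, later in zip(ts, ts[1:])]
    avg = mean(intervals)
    if avg <= 0:
        return False
    return pstdev(intervals) / avg <= max_jitter_ratio


print(looks_like_beaconing([0, 60, 120, 181, 240, 300]))   # True: roughly every 60 s
print(looks_like_beaconing([0, 5, 200, 210, 900, 1000]))  # False: irregular gaps
```

Malware often adds random jitter to its check-in interval, so real detections usually tolerate more variation than this sketch and combine periodicity with other signals such as destination rarity.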

Darktrace’s Cyber AI Analyst was able to correlate each of the individual detections involved in this compromise, identifying them as part of a broader incident that encompassed C2 connectivity, suspicious downloads, and external data transfers.
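The general idea of correlating separate detections into a single incident can be illustrated with a basic time-window grouping per device. This is not how Cyber AI Analyst works, which is not described here; it is a hypothetical sketch in which the `Alert` class and the one-hour `max_gap_seconds` default are assumptions, and the model names are taken from the list in the appendix.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    device: str
    time: float  # seconds since an arbitrary start
    model: str


def group_into_incidents(alerts, max_gap_seconds=3600):
    """Group alerts per device, starting a new incident after a quiet gap."""
    incidents = []
    by_device = {}
    for alert in sorted(alerts, key=lambda a: (a.device, a.time)):
        by_device.setdefault(alert.device, []).append(alert)
    for items in by_device.values():
        current = [items[0]]
        for alert in items[1:]:
            if alert.time - current[-1].time <= max_gap_seconds:
                current.append(alert)  # close enough in time: same incident
            else:
                incidents.append(current)  # gap too large: close the incident
                current = [alert]
        incidents.append(current)
    return incidents


alerts = [
    Alert("10.0.0.5", 0, "Anomalous File / EXE from Rare External Location"),
    Alert("10.0.0.5", 900, "Compromise / Suspicious File and C2"),
    Alert("10.0.0.5", 2400, "Unusual Activity / Unusual External Data to New Endpoint"),
    Alert("10.0.0.9", 100, "Anomalous Server Activity / Outgoing from Server"),
]
for incident in group_into_incidents(alerts):
    print(incident[0].device, [a.model for a in incident])
```

With these inputs, the function yields two incidents: the three alerts for 10.0.0.5 grouped together, and a separate single-alert incident for 10.0.0.9.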

As the affected customer did not have Darktrace’s Autonomous Response configured at the time, the attack was able to progress unabated. Had Autonomous Response been enabled, it would have been able to take a number of actions to halt the escalation of the attack.

For example, the unusual beaconing connections and the download of an unexpected file from an uncommon location would have been shut down by blocking the device from making external connections to the relevant destinations.

Conclusion

Blind Eagle’s persistence, its ability to adapt its tactics even after patches were released, and the speed with which the group continued using pre-established TTPs highlight that timely vulnerability management and patch application, while essential, are not a standalone defense.

Organizations must adopt security solutions that use anomaly-based detection to identify emerging and adapting threats by recognizing deviations in user or device behavior that may indicate malicious activity. Complementing this with an autonomous decision maker that can identify, connect, and contain compromise-like activity is crucial for safeguarding organizational networks against constantly evolving and sophisticated threat actors.

Credit to Charlotte Thompson (Senior Cyber Analyst), Eugene Chua (Principal Cyber Analyst) and Ryan Traill (Analyst Content Lead)

Appendices

IoCs

IoC – Type – Confidence

Microsoft-WebDAV-MiniRedir/10.0.19045 – User Agent

62[.]60[.]226[.]112 – IP – Medium Confidence

hxxp://62[.]60[.]226[.]112/file/3601_2042.exe – Payload Download – Medium Confidence

21ene.ip-ddns[.]com – Dynamic DNS Endpoint – Medium Confidence

diciembrenotasenclub[.]longmusic[.]com – Hostname – Medium Confidence
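The indicators above are defanged (for example `[.]` in place of `.` and `hxxp` in place of `http`) so they cannot be clicked by accident. Before loading them into a blocklist or search, they need to be converted back to their literal form. The following is a minimal sketch, using hypothetical helper names `refang` and `classify` and documentation addresses in the example; the user agent entry is not a network indicator and would be handled separately.

```python
import ipaddress
import re


def refang(indicator: str) -> str:
    """Convert a defanged indicator (e.g. "hxxp://example[.]com") to literal form."""
    value = indicator.strip().replace("[.]", ".")
    return re.sub(r"^hxxp(s?)://", r"http\1://", value, flags=re.IGNORECASE)


def classify(indicator: str) -> str:
    """Roughly classify a refanged indicator as "url", "ip" or "hostname"."""
    value = refang(indicator)
    if value.startswith(("http://", "https://")):
        return "url"
    try:
        ipaddress.ip_address(value)
        return "ip"
    except ValueError:
        return "hostname"


for ioc in ["hxxp://203[.]0[.]113[.]10/file/sample.exe", "203[.]0[.]113[.]10", "example[.]com"]:
    print(classify(ioc), refang(ioc))
```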

Darktrace’s model alert coverage

Anomalous File / Suspicious HTTP Redirect

Anomalous File / EXE from Rare External Location

Anomalous File / Multiple EXE from Rare External Location

Anomalous Server Activity / Outgoing from Server

Unusual Activity / Unusual External Data to New Endpoint

Device / Anomalous Github Download

Anomalous Connection / Multiple Connections to New External TCP Port

Device / Initial Attack Chain Activity

Anomalous Server Activity / Rare External from Server

Compromise / Suspicious File and C2

Compromise / Fast Beaconing to DGA

Compromise / Large Number of Suspicious Failed Connections

Device / Large Number of Model Alerts

MITRE ATT&CK Mapping

Tactic – Technique – Technique Name

Initial Access – T1189 – Drive-by Compromise

Initial Access – T1190 – Exploit Public-Facing Application

Initial Access ICS – T0862 – Supply Chain Compromise

Initial Access ICS – T0865 – Spearphishing Attachment

Initial Access ICS – T0817 – Drive-by Compromise

Resource Development – T1588.001 – Malware

Lateral Movement ICS – T0843 – Program Download

Command and Control – T1105 – Ingress Tool Transfer

Command and Control – T1095 – Non-Application Layer Protocol

Command and Control – T1571 – Non-Standard Port

Command and Control – T1568.002 – Domain Generation Algorithms

Command and Control ICS – T0869 – Standard Application Layer Protocol

Evasion ICS – T0849 – Masquerading

Exfiltration – T1041 – Exfiltration Over C2 Channel

Exfiltration – T1567.002 – Exfiltration to Cloud Storage

References

1) https://research.checkpoint.com/2025/blind-eagle-and-justice-for-all/

4) https://msrc.microsoft.com/update-guide/vulnerability/CVE-2024-43451

5) https://www.ionos.co.uk/digitalguide/server/know-how/webdav/

6) https://vercara.digicert.com/resources/dynamic-dns-resolution-as-an-obfuscation-technique

7) https://threatfox.abuse.ch/ioc/1437795

8) https://www.checkpoint.com/cyber-hub/threat-prevention/what-is-malware/remcos-malware/

9) https://www.virustotal.com/gui/url/b3189db6ddc578005cb6986f86e9680e7f71fe69f87f9498fa77ed7b1285e268

10) https://www.virustotal.com/gui/domain/21ene.ip-ddns.com

11) https://www.virustotal.com/gui/domain/diciembrenotasenclub.longmusic.com/community
