In late 2024 and early 2025, the Darktrace Security Operations Center (SOC) investigated alerts relating to separate Software-as-a-Service (SaaS) account compromises in two customer environments. The cases shared several similarities, suggesting they were part of a wider phishing campaign.

This campaign was found to leverage the project collaboration and note-taking application Milanote, as well as the Tycoon 2FA phishing kit.

Legitimate services abused

As highlighted in Darktrace's 2024 Annual Threat Report [1], threat actors are abusing legitimate services, like Milanote, in their phishing campaigns. By leveraging these trusted platforms and domains, malicious actors can bypass traditional security measures, making their phishing emails appear benign and increasing the likelihood of successful attacks.

Darktrace categorizes these senders and platforms as free content senders. These services allow users to send emails containing custom content (e.g., files) from a fully validated, fixed service address belonging to a legitimate corporation. Although some of these services allow attackers to fully customize the body and subject, the structure of these emails is generally consistent, making it challenging to differentiate between legitimate and malicious emails.

What is Tycoon 2FA?

Tycoon 2FA is an Adversary-in-the-Middle (AitM) phishing kit, first seen in August 2023 and distributed via the Phishing-as-a-Service (PhaaS) model [2]. It targets multi-factor authentication (MFA) by intercepting credentials and MFA tokens as users authenticate on fake Microsoft or Google login pages. The attacker captures session cookies after MFA is completed, allowing them to replay the session and access the user account, even if the credentials are later reset. The growing adoption of MFA has increased the popularity of AitM phishing kits such as Tycoon 2FA and Mamba 2FA, another AitM phishing kit investigated by Darktrace.

Initial access via phishing email

At the beginning of 2025, Darktrace observed phishing emails leveraging Milanote being sent to multiple internal recipients in an organization. In this attack, the same email was sent to 19 different users, all of which were held by Darktrace.

The subject line of the emails mentioned a legitimate internal user of the company, the company name, and a Milanote board regarding a “new agreement”, written in German. Mentioning urgent matters, such as unpaid invoices, expired passwords, or pending voicemails, in the subject line is a common social engineering technique to prompt immediate action from the user. However, this tactic is now widely covered in phishing awareness training, making users more suspicious of such emails. In this case, while the reference to a “new agreement” was likely to raise the recipient’s curiosity, the tone remained professional and not overly alarming. Additionally, the mention of a colleague and the standardized language typical of free content sender emails further helped to allay any concerns about the email.

These emails were sent from the legitimate address support@milanote[.]com and displayed "Milanote" in the personal name field of the header, but originated from the freemail address “ahnermatternk.ef.od.13@gmail[.]com”. Darktrace / EMAIL recognized that none of the recipients had previously received a file share email from Milanote, making this sender unfamiliar in the customer's email environment.

The emails contained several benign links to legitimate Milanote endpoints (including an unsubscribe link), which were not flagged by Darktrace. However, they also included a malicious link designed to direct recipients to a pre-filled credential harvesting page hosted on Milanote, prompting them to register for an account. While leaving the legitimate Milanote links in the same email untouched, Darktrace locked the malicious link, preventing users from visiting the credential harvester.

Around one minute later, one recipient received a legitimate email from Milanote confirming their successful account registration, indicating they had accessed the phishing page. This email had a lower anomaly score and was not flagged by Darktrace / EMAIL because, unlike the first email, it did not contain any suspicious links and was a genuine account registration notification. Similarly, in the malicious Milanote email, only the link leading to the phishing page was blocked, while the benign and legitimate Milanote links remained accessible, demonstrating Darktrace’s precise and targeted actioning.

Around the same time, Darktrace / NETWORK observed the same user’s device making a DNS query for the domain name “lrn.ialeahed[.]com”, which has been flagged as a Tycoon 2FA domain [2], suggesting the use of this phishing platform.
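As an illustration of how a defender might hunt for this indicator in their own DNS logs, the minimal Python sketch below checks queried names against a list of known Tycoon 2FA hostnames, including any subdomains. It assumes the DNS log has already been reduced to a list of queried names; the function names are hypothetical, and this is not a description of how Darktrace / NETWORK performs detection.

```python
# Tycoon 2FA hostname from this investigation, refanged from lrn.ialeahed[.]com.
KNOWN_TYCOON_HOSTS = ["lrn.ialeahed.com"]


def normalize(name: str) -> str:
    """Lower-case a DNS name and drop surrounding whitespace and any trailing root dot."""
    return name.strip().lower().rstrip(".")


def matches_indicator(query: str, indicator: str) -> bool:
    """True if the queried name is the indicator itself or one of its subdomains."""
    q, ind = normalize(query), normalize(indicator)
    return q == ind or q.endswith("." + ind)


def flag_queries(queries: list[str], indicators: list[str]) -> list[str]:
    """Return the raw queried names that hit any indicator, preserving log order."""
    return [q for q in queries if any(matches_indicator(q, i) for i in indicators)]


if __name__ == "__main__":
    # Hypothetical DNS log extract.
    dns_log = ["www.milanote.com", "LRN.ialeahed.com.", "cdn.example.net"]
    print(flag_queries(dns_log, KNOWN_TYCOON_HOSTS))
```

Matching on a leading dot rather than a bare suffix avoids false hits on look-alike names such as `xlrn.ialeahed.com`.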

Once the user had entered their details in the credential harvester, they were likely presented with a document hosted on Milanote containing the final payload link, probably hidden behind text instructing users to access a “new agreement” document.

External research indicates that the user was likely directed to a Cloudflare Turnstile challenge meant to reroute unwanted traffic, such as automated security scripts and penetration testing tools [2] [3]. After these checks and other background processes complete, the user is directed to the final landing page. In this case, this was likely a fake login prompt hosted on the attacker’s server, where the user is asked to authenticate to their account using MFA. By burying malicious links and files in this manner, threat actors can evade analysis by traditional secure email gateways, effectively bypassing their protection.

Darktrace’s analysis of the structure and word content of the phishing emails produced an 82% probability score that the email was malicious. The email also received a 67% phishing inducement score, representing how closely its structure and word content resembled typical phishing emails.

All these unusual elements triggered multiple alerts in Darktrace / EMAIL, focusing on two main suspicious aspects: a new, unknown sender with no prior correspondence with the recipients or the environment, and the inclusion of a link to a previously unseen file storage solution.

After the fifth email was detected, the “Sender Surge” model alert was triggered in Darktrace / EMAIL because this new, suspicious sender had emailed a significant number of recipients in a short period. These recipients came from various departments across the customer’s organization, including sales, marketing, purchasing, and production. Darktrace / EMAIL determined that the emails were sent to a highly unusual group of internal recipients, further casting doubt on their business legitimacy.
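The general idea behind a sender surge check can be approximated with a sliding-window count of distinct recipients per previously unseen sender. The Python sketch below only illustrates that idea and is not Darktrace’s model: the ten-minute window is an assumption, the threshold of five merely echoes the point at which the alert fired in this case, and the class and addresses are hypothetical.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


class SenderSurgeDetector:
    """Flag a previously unseen sender that emails many distinct recipients quickly.

    The window and threshold are illustrative assumptions, not Darktrace's values.
    Deliveries are assumed to be observed in chronological order.
    """

    def __init__(self, known_senders, window=timedelta(minutes=10), threshold=5):
        self.known_senders = set(known_senders)
        self.window = window
        self.threshold = threshold
        # sender -> deque of (delivery time, recipient), oldest first
        self.recent = defaultdict(deque)

    def observe(self, when: datetime, sender: str, recipient: str) -> bool:
        """Record one delivery; return True while the sender is over the threshold."""
        if sender in self.known_senders:
            return False  # established senders are out of scope for this check
        events = self.recent[sender]
        events.append((when, recipient))
        # Discard deliveries that have slid out of the time window.
        while events and when - events[0][0] > self.window:
            events.popleft()
        distinct_recipients = {r for _, r in events}
        return len(distinct_recipients) >= self.threshold


if __name__ == "__main__":
    detector = SenderSurgeDetector(known_senders={"colleague@corp.example"})
    start = datetime(2025, 1, 1, 9, 0)
    for i in range(6):
        alert = detector.observe(start + timedelta(seconds=30 * i),
                                 "new-sender@example.com", f"user{i}@corp.example")
        print(i + 1, alert)
```

Counting distinct recipients rather than raw messages keeps a single recipient receiving several copies from looking like a surge.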

Darktrace / EMAIL suggested actions to contain the attack by holding all Milanote phishing emails back from recipients' inboxes, except for the email described above, in which the malicious link had been locked. However, autonomous actions were not enabled at the time, so the initial email reached recipients' inboxes, providing a brief window for interaction. During this window, one recipient clicked the Milanote payload link, leading to the compromise of their account.

SaaS account takeover

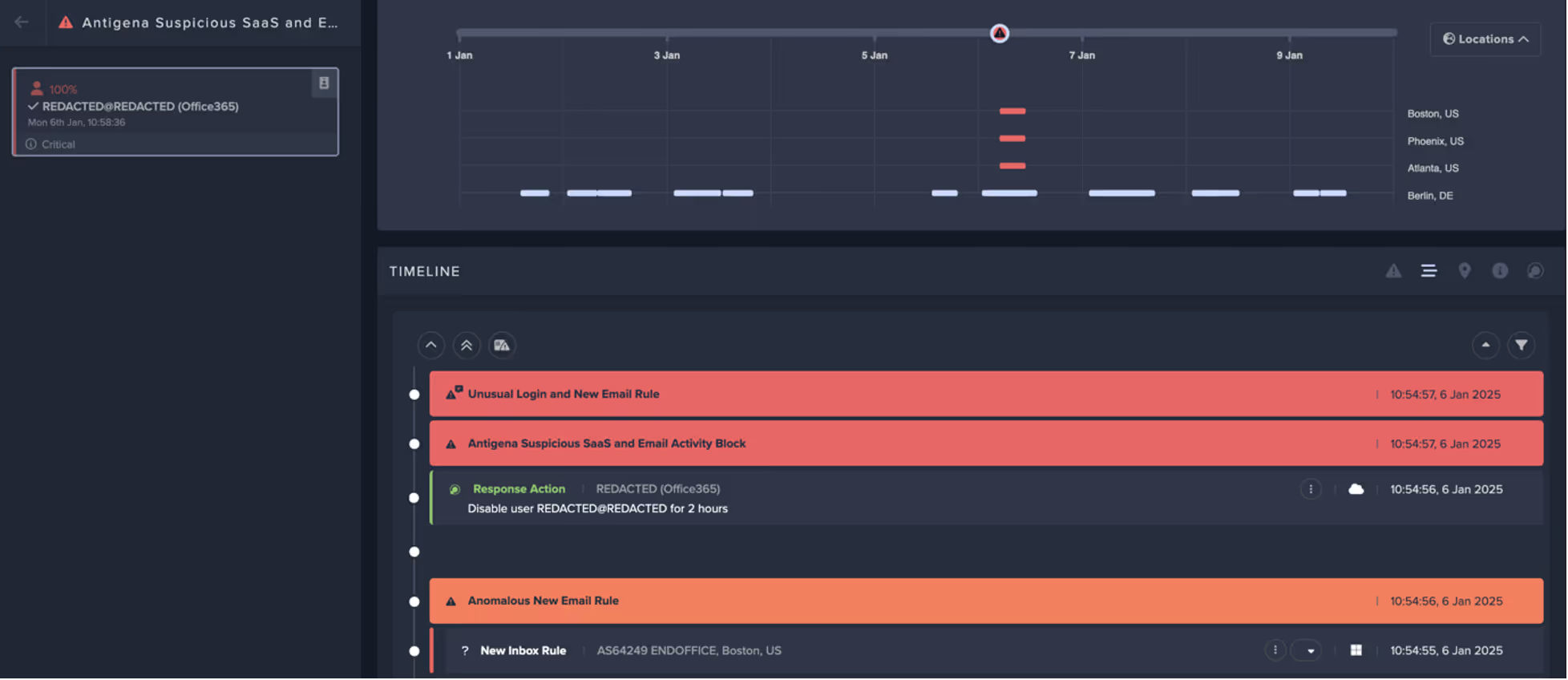

About three minutes after the malicious Milanote email was received, Darktrace / IDENTITY detected an unusual login to the recipient’s SaaS account. The SaaS actor was observed accessing files from their usual location in Germany while, at the same time, a 100% rare login occurred from a US location never before seen in the customer’s environment. Microsoft 365 also flagged this login as suspicious, triggering a 'Conditional Access Policy' that required MFA, which was successfully completed.

![Tycoon 2FA administration panel login page dating from October 2023 [3].](https://cdn.prod.website-files.com/626ff4d25aca2edf4325ff97/6807b3eb07498494e192803a_Screenshot%202025-04-22%20at%208.20.57%E2%80%AFAM.avif)

Despite the successful authentication, Darktrace / IDENTITY recognized that the login from this unusual location, coupled with simultaneous activity in another geographically distant location, was highly suspicious. Darktrace went on to observe MFA-validated logins from three separate US-based IP addresses: 89.185.80[.]19, 5.181.3[.]68, and 38.242.7[.]252. Most of the malicious activity was performed from the last of these, which is associated with the Hide My Ass (HMA) VPN network [5].
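A common, simple heuristic for spotting this kind of concurrent activity is an “impossible travel” check: if two logins to the same account are farther apart than anyone could travel in the time between them, at least one of them is suspect. The Python sketch below illustrates that generic heuristic under stated assumptions (logins already geolocated to latitude and longitude, a 1,000 km/h speed ceiling, and a 500 km minimum distance to absorb geolocation error); it is not a description of how Darktrace / IDENTITY reached its verdict, and the coordinates are hypothetical.

```python
import math
from dataclasses import dataclass
from datetime import datetime

EARTH_RADIUS_KM = 6371.0


@dataclass
class Login:
    when: datetime
    lat: float  # degrees, from IP geolocation (assumed to be available)
    lon: float


def haversine_km(a: Login, b: Login) -> float:
    """Great-circle distance between two login locations, in kilometres."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    d_phi = phi2 - phi1
    d_lambda = math.radians(b.lon - a.lon)
    h = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    # Clamp to guard against floating-point values marginally above 1.
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(h)))


def impossible_travel(a: Login, b: Login,
                      max_speed_kmh: float = 1000.0,
                      min_distance_km: float = 500.0) -> bool:
    """True if moving between the two login locations needs an implausible speed."""
    distance = haversine_km(a, b)
    if distance < min_distance_km:
        return False  # too close to call: IP geolocation is imprecise
    hours = abs((b.when - a.when).total_seconds()) / 3600
    if hours == 0:
        return True  # simultaneous activity in two distant places
    return distance / hours > max_speed_kmh


if __name__ == "__main__":
    germany = Login(datetime(2025, 1, 1, 9, 0), 52.52, 13.405)   # hypothetical: Berlin
    us = Login(datetime(2025, 1, 1, 9, 3), 40.7128, -74.0060)    # hypothetical: New York
    print(round(haversine_km(germany, us)), impossible_travel(germany, us))
```

VPN egress points such as the HMA address above can defeat or distort geolocation, which is one reason rarity-based signals are useful alongside distance checks like this one.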

Following this, the malicious actor accessed the user’s inbox and created a new mailbox rule named “GTH” that deleted any incoming email containing the string “milanote” in the subject line or body. Rules like this are a common technique used by attackers to leverage compromised accounts for launching phishing campaigns and concealing replies to phishing emails that might raise suspicions among legitimate account holders. Using legitimate, albeit compromised, accounts to send additional phishing emails enhances the apparent legitimacy of the malicious emails. This tactic has been reported as being used by Tycoon 2FA attackers [4].
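Defenders reviewing exported mailbox rules can look for this pattern directly. The Python sketch below assumes each rule has been exported as a dictionary whose keys mirror the Exchange Online `New-InboxRule` parameters `Name`, `SubjectOrBodyContainsWords` and `DeleteMessage`; the keyword watchlist and the short-name heuristic (inspired by rule names such as “GTH”) are illustrative assumptions, and the function name is hypothetical.

```python
def suspicious_rule(rule: dict, watchlist=frozenset({"milanote"})) -> list[str]:
    """Return reasons a mailbox rule looks like attacker concealment (empty if none).

    `rule` keys mirror New-InboxRule parameter names; the heuristics are assumptions.
    Watchlist entries are expected in lower case.
    """
    reasons = []
    deletes = bool(rule.get("DeleteMessage", False))
    words = {w.lower() for w in rule.get("SubjectOrBodyContainsWords", [])}
    hits = words & watchlist
    if deletes and hits:
        reasons.append("deletes mail mentioning: " + ", ".join(sorted(hits)))
    # Hypothetical heuristic: very short, meaningless names such as "GTH".
    if deletes and len(rule.get("Name", "")) <= 3:
        reasons.append("short, opaque rule name")
    return reasons


if __name__ == "__main__":
    rules = [
        {"Name": "GTH", "SubjectOrBodyContainsWords": ["milanote"], "DeleteMessage": True},
        {"Name": "Newsletters", "SubjectOrBodyContainsWords": ["newsletter"],
         "MoveToFolder": "News"},
    ]
    for rule in rules:
        print(rule["Name"], suspicious_rule(rule))
```

Keying the check on the delete action keeps ordinary filing rules, such as the newsletter example, out of the results.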

The attacker accessed over 140 emails in the legitimate user’s mailbox, across both the Inbox and the “Sent Items” folder. Notably, the attacker accessed five emails in the “Sent Items” folder and modified their attachments. These emails mainly related to invoices, suggesting the threat actor may have been looking to hijack those email threads to send fake invoices or replicate previous invoice emails.

Darktrace’s Cyber AI Analyst™ launched autonomous investigations into the individual events surrounding this suspicious activity, connecting them into a single, broad account takeover incident and giving the customer a clearer view of the ongoing compromise.

Darktrace's response

Within three minutes of the first unusual login alert, Darktrace’s Autonomous Response intervened, disabling the compromised user account for two hours.

As the impacted customer was subscribed to the Managed Threat Detection Service, Darktrace’s SOC team investigated the activity further and promptly alerted the customer’s security team. With the user’s account still disabled by Autonomous Response, the attack was contained, allowing the customer’s security team valuable time to investigate and remediate. Within ten minutes of receiving the alert from Darktrace’s SOC, they reset the user’s password, closed all active SaaS sessions, and deleted the malicious email rule. Darktrace’s SOC further supported the customer through the Security Operations Service Support service by providing information about the data accessed and identifying any other affected users.

A wider Milanote phishing campaign?

Around a month before this compromise, Darktrace alerted another customer to similar activity involving two compromised user accounts. New inbox rules named “GFH” and “GVB” were created on these accounts to delete all incoming emails containing the string “milanote” in their subject line and/or body.

The phishing emails that led to the compromise of these user accounts were similar to those discussed above. Specifically, they were sent via the Milanote platform and referenced a “new agreement” (in Spanish) being shared by a colleague. Additionally, the payload link included in the phishing emails contained the same UserPrincipalName (UPN) attribute (i.e., click?upn=u001.qLX9yCzR), which has also been seen in other Milanote phishing emails leveraging Tycoon 2FA reported by OSINT sources [6]. Interestingly, in some cases the email referenced a “new agreement” in Portuguese, suggesting a global campaign.
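Shared link attributes like this can help cluster related phishing emails. The Python sketch below uses the standard library to extract the `upn` query parameter from a payload URL and checks whether it begins with the value reported above. The example URLs use a placeholder domain, the function names are hypothetical, and prefix matching is an assumption, since the full parameter values in the campaign may be longer than the fragment quoted.

```python
from typing import Optional
from urllib.parse import parse_qs, urlsplit

# Fragment of the shared parameter value quoted in this post.
SHARED_UPN_PREFIX = "u001.qLX9yCzR"


def upn_value(url: str) -> Optional[str]:
    """Return the first `upn` query parameter of a URL, or None if it is absent."""
    values = parse_qs(urlsplit(url).query).get("upn")
    return values[0] if values else None


def shares_campaign_upn(url: str, prefix: str = SHARED_UPN_PREFIX) -> bool:
    """True if the URL's `upn` value starts with the campaign prefix (assumed match rule)."""
    value = upn_value(url)
    return value is not None and value.startswith(prefix)


if __name__ == "__main__":
    links = [
        "https://links.example.invalid/click?upn=u001.qLX9yCzR-abc123",  # placeholder
        "https://links.example.invalid/click?upn=u001.AbCdEf",           # placeholder
        "https://example.invalid/board",                                 # placeholder
    ]
    for link in links:
        print(upn_value(link), shares_campaign_upn(link))
```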

Based on the similarities in the rules’ naming conventions and actions, as well as in the phishing email subjects, it is likely that these were part of the same campaign leveraging Milanote and Tycoon 2FA to compromise user accounts. Since its introduction, the Tycoon 2FA phishing kit has undergone several enhancements to its stealth and obfuscation, making it harder for security tools to detect. For example, the latest versions contain code that obstructs web page analysis by defenders, prevents users from copying meaningful text from the phishing pages, and disables the right-click menu to hinder offline analysis [4].

Conclusion

Threat actors are continually employing new methods to bypass security detection tools and measures. As highlighted in this blog, even robust security mechanisms like MFA can be compromised using AitM phishing kits. The misuse of legitimate services such as Milanote for malicious purposes can help attackers evade traditional email security solutions by blurring the distinction between legitimate and malicious content.

This is why security tools based on anomaly detection are crucial for defending against such attacks. However, user awareness is equally important: delays in processing alerts can slow the response, so it is essential that users are informed about these threats.


Appendices

References

[1] https://www.darktrace.com/resources/annual-threat-report-2024

[2] https://www.validin.com/blog/tycoon_2fa_analyzing_and_hunting_phishing-as-a-service_domains

[4] https://blog.barracuda.com/2025/01/22/threat-spotlight-tycoon-2fa-phishing-kit

[5] https://spur.us/context/38.242.7.252

[6] https://any.run/report/5ef1ac94e4c6c1dc35579321c206453aea80d414108f9f77abd2e2b03ffbd658/be5351d9-53c0-470b-8708-ee2e29300e70

Indicators of Compromise (IoCs)

IoC - Type - Description + Probability

89.185.80[.]19 - IP Address - Malicious login

5.181.3[.]68 - IP Address - Malicious login

38.242.7[.]252 - IP Address - Malicious login and new email inbox rule creation (Hide My Ass VPN)

lrn.ialeahed[.]com - Hostname - Likely Tycoon 2FA domain
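To load these indicators into other tooling, the defanged values need to be restored and typed. The Python sketch below refangs the `[.]` notation and uses the standard `ipaddress` module to distinguish IP addresses from hostnames; the `Indicator` record and the function names are hypothetical.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Indicator:
    value: str        # refanged, ready for matching
    kind: str         # "ip" or "hostname"
    description: str


def refang(value: str) -> str:
    """Undo the "[.]" defanging used in the IoC list."""
    return value.replace("[.]", ".")


def parse_indicator(defanged: str, description: str) -> Indicator:
    """Refang an indicator and classify it as an IP address or a hostname."""
    value = refang(defanged.strip())
    try:
        ipaddress.ip_address(value)
        kind = "ip"
    except ValueError:
        kind = "hostname"
    return Indicator(value, kind, description)


IOCS = [
    parse_indicator("89.185.80[.]19", "Malicious login"),
    parse_indicator("5.181.3[.]68", "Malicious login"),
    parse_indicator("38.242.7[.]252",
                    "Malicious login and new email inbox rule creation (Hide My Ass VPN)"),
    parse_indicator("lrn.ialeahed[.]com", "Likely Tycoon 2FA domain"),
]

if __name__ == "__main__":
    for ioc in IOCS:
        print(f"{ioc.kind:8} {ioc.value:20} {ioc.description}")
```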

Darktrace Model Detections

Email alerts

Platforms / Free Content Sender + High Sender Surge

Platforms / Free Content Sender + Sender Surge

Platforms / Free Content Sender + Unknown Initiator

Platforms / Free Content Sender

Platforms / Free Content Sender + First Time Recipient

Unusual / New Sender Surge

Unusual / Sender Surge

Antigena Anomaly / High Antigena Anomaly

Association / Unknown Sender

History / New Sender

Link / High Rarity Link to File Storage

Link / Link To File Storage

Link / Link to File Storage + Unknown Sender

Link / Low Link Association

Platforms / Free Content Sender + First Time Initiator

Platforms / Free Content Sender + Unknown Initiator + Freemail

Platforms / Free Content Sender Link

Unusual / Anomalous Association

Unusual / Unlikely Recipient Association

IDENTITY

SaaS / Access / Unusual External Source for SaaS Credential Use

SaaS / Compromise / Login from Rare High Risk Endpoint

SaaS / Access / M365 High Risk Level Login

SaaS / Compromise / Login From Rare Endpoint While User Is Active

SaaS / Access / MailItemsAccessed from Rare Endpoint

SaaS / Unusual Activity / Multiple Unusual SaaS Activities

SaaS / Unusual Activity / Multiple Unusual External Sources For SaaS Credential

SaaS / Compliance / Anomalous New Email Rule

SaaS / Compromise / Unusual Login and New Email Rule

SaaS / Compromise / SaaS Anomaly Following Anomalous Login

Antigena / SaaS / Antigena Suspicious SaaS Activity Block

Antigena / SaaS / Antigena Enhanced Monitoring from SaaS User Block

Antigena / SaaS / Antigena Unusual Activity Block

Antigena / SaaS / Antigena Suspicious SaaS and Email Activity Block

Cyber AI Analyst Incident

Possible Hijack of Office365 Account

MITRE ATT&CK Mapping

Tactic – Technique

DEFENSE EVASION, PERSISTENCE, PRIVILEGE ESCALATION, INITIAL ACCESS - Cloud Accounts

INITIAL ACCESS - Phishing

CREDENTIAL ACCESS - Steal Web Session Cookie

PERSISTENCE - Account Manipulation

PERSISTENCE - Outlook Rules

RESOURCE DEVELOPMENT - Email Accounts

RESOURCE DEVELOPMENT - Compromise Accounts
