What is DragonForce?

DragonForce is a Ransomware-as-a-Service (RaaS) platform that emerged in late 2023, offering broad-scale capabilities and infrastructure to threat actors. Recently, DragonForce has been linked to attacks targeting the UK retail sector, resulting in several high-profile cases [1][2]. The group has also launched an affiliate program in which the operators take a revenue share of roughly 20%, significantly lower than the commissions reported by other RaaS platforms [3].

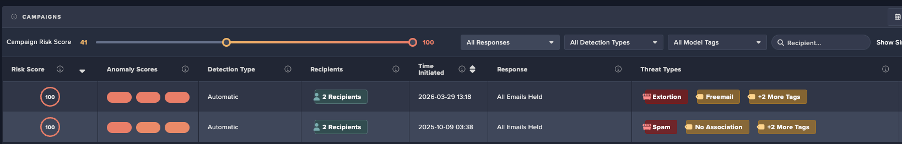

This Darktrace case study examines a DragonForce-linked RaaS infection within the manufacturing industry. The earliest signs of compromise were observed during working hours in August 2025, when an infected device began performing network scans and attempting to brute-force administrative credentials. After eight days of inactivity, the threat actors returned and multiple devices began encrypting files over the SMB protocol using a DragonForce-associated file extension. Ransom notes referencing the group were also dropped, indicating that the threat actor claimed affiliation with DragonForce, although this affiliation has not been confirmed.

Although Darktrace detected the attack in its early stages, Darktrace’s Autonomous Response capability was not configured in the customer’s deployment, allowing the threat to progress to data exfiltration and file encryption.

Darktrace's Observations

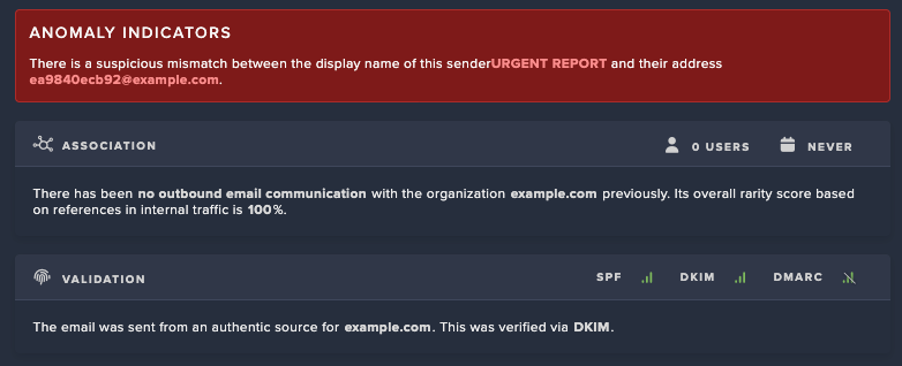

While the initial access vector could not be determined in this case, it was likely achieved through methods commonly employed by DragonForce affiliates. These include phishing emails that leverage social engineering, exploitation of known vulnerabilities in public-facing applications, web shells, and/or abuse of remote management tools.

Darktrace’s analysis identified internal devices performing network scanning, brute-forcing credentials, and executing unusual Windows Registry operations. Notably, some of these operations involved the "Schedule\Taskcache\Tasks" key, which contains a subkey for each individual scheduled task, identified by a GUID that can be used to locate and analyze that task. Others involved "Control\WMI\Security", which holds security descriptors for WMI providers and Event Tracing loggers that use non-default security settings.

Furthermore, Darktrace identified data exfiltration activity over SSH, including connections to an ASN associated with a malicious hosting service geolocated in Russia.

1. Network Scan & Brute Force

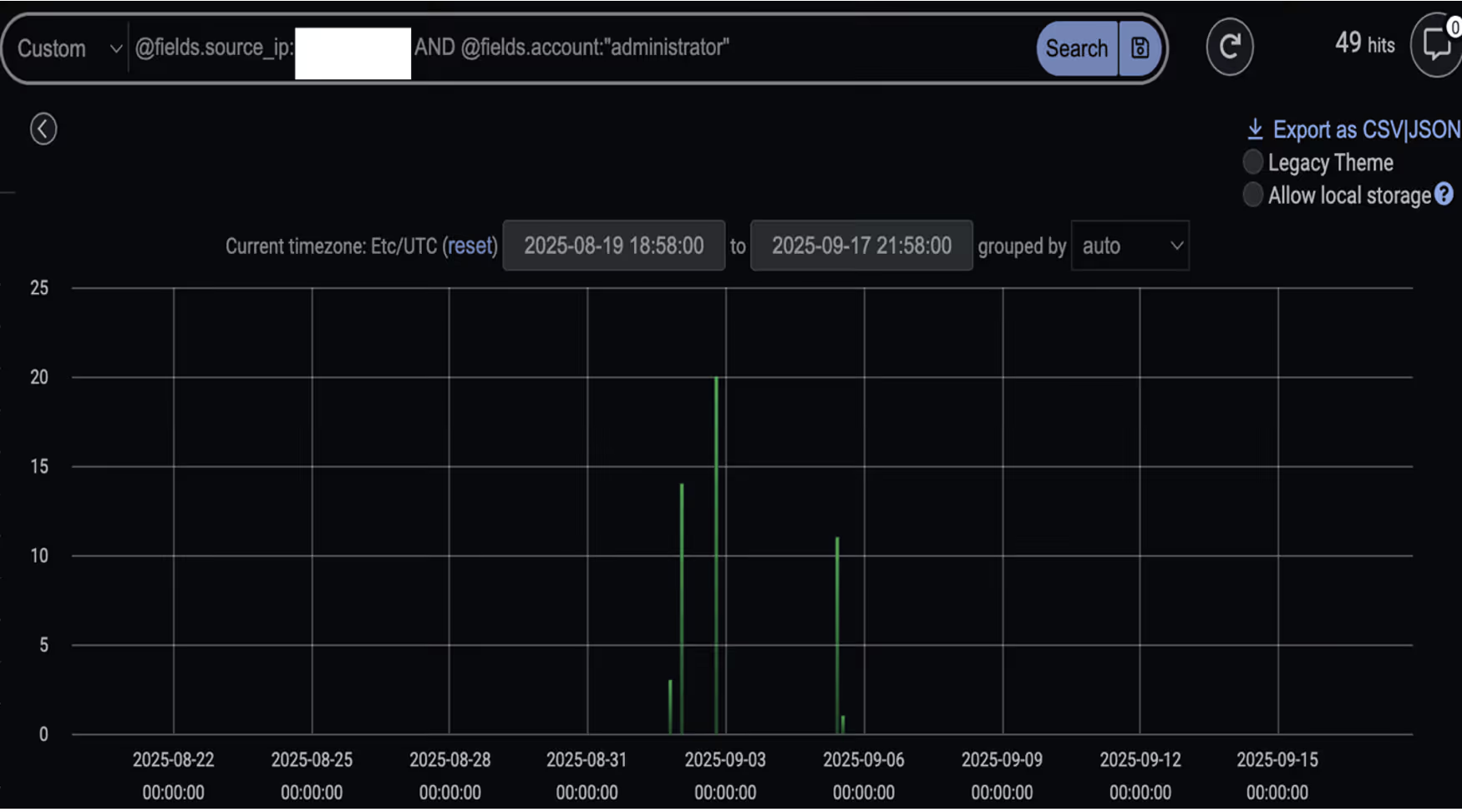

Darktrace identified anomalous behavior between late August and early September 2025, originating from a device that performed internal network scanning followed by brute-force attempts against administrator credentials, including “administrator”, “Admin”, “rdpadmin”, and “ftpadmin”.
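As an illustration of how this pattern can surface in authentication logs, the following is a minimal Python sketch, not Darktrace’s detection logic. It assumes a hypothetical, simplified `AuthEvent` record and flags a source device whose failed logins within a sliding time window reach a threshold while cycling through several administrator-style usernames. The window size, thresholds and `ADMIN_NAMES` set are illustrative assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class AuthEvent:
    # Hypothetical, simplified authentication record (not a Darktrace schema).
    timestamp: float  # seconds since epoch
    src: str          # source device
    username: str
    success: bool

# Illustrative watchlist based on the usernames observed in this incident.
ADMIN_NAMES = {"administrator", "admin", "rdpadmin", "ftpadmin"}

def brute_force_sources(events, window=300.0, min_failures=20, min_admin_names=2):
    """Return sources whose failed logins inside any `window`-second span reach
    `min_failures` and cover at least `min_admin_names` admin-style usernames."""
    failures = defaultdict(list)
    for ev in events:
        if not ev.success:
            failures[ev.src].append(ev)
    flagged = set()
    for src, evs in failures.items():
        evs.sort(key=lambda e: e.timestamp)
        start = 0
        for end in range(len(evs)):
            # Advance the window start until the span is at most `window` seconds.
            while evs[end].timestamp - evs[start].timestamp > window:
                start += 1
            in_window = evs[start:end + 1]
            admin_hits = {e.username.lower() for e in in_window} & ADMIN_NAMES
            if len(in_window) >= min_failures and len(admin_hits) >= min_admin_names:
                flagged.add(src)
                break
    return flagged
```

Requiring several distinct admin-style names, rather than counting failures alone, is one way to separate credential guessing from a single user repeatedly mistyping a password.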

Further analysis of one of the HTTP connections in this activity revealed the user agent string “OpenVAS-VT”, suggesting that the device was running the OpenVAS vulnerability scanner. Subsequently, additional devices began exhibiting network scanning behavior. During this phase, multiple devices deleted a file named “delete.me” over the SMB protocol. This file is commonly associated with the network scanning and penetration testing tool NetScan.
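The two tool artifacts described above can be checked with a simple sketch like the one below. It assumes a hypothetical flattened `Observation` record holding either an HTTP user agent or an SMB file path. Matching on substrings and file names like this is illustrative only: a user agent string is trivially spoofed, and a `delete.me` deletion on its own does not prove NetScan was used.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Observation:
    # Hypothetical flattened record; not a Darktrace schema.
    protocol: str  # "http" or "smb"
    src: str       # source device
    action: str    # e.g. "request" for HTTP, "delete" for SMB
    value: str     # HTTP user agent, or SMB file path

def tool_fingerprints(observations):
    """Map each source device to the scanning-tool artifacts it exhibited."""
    hits = {}
    for ob in observations:
        label = None
        if ob.protocol == "http" and "openvas" in ob.value.lower():
            label = "OpenVAS user agent"
        elif ob.protocol == "smb" and ob.action.lower() == "delete":
            # Compare only the final path component, case-insensitively.
            filename = ob.value.replace("/", "\\").rsplit("\\", 1)[-1].lower()
            if filename == "delete.me":
                label = "delete.me removed over SMB (NetScan-style)"
        if label is not None:
            hits.setdefault(ob.src, set()).add(label)
    return hits
```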

2. Windows Registry Key Update

Following the scanning phase, Darktrace observed the initial device performing suspicious Winreg (Windows Remote Registry Protocol) operations. These included use of the “BaseRegOpenKey” function across multiple registry paths.

Further operations, including additional “BaseRegOpenKey” calls and “BaseRegQueryValue”, were also seen around this time. “BaseRegQueryValue” retrieves the value of a specific registry entry, while “BaseRegOpenKey” opens a handle to a key with the requested access rights, which can include write access to that key.

The registry keys observed included “SYSTEM\CurrentControlSet\Control\WMI\Security” and “Software\Microsoft\Windows NT\CurrentVersion\Schedule\Taskcache\Tasks”. These keys can be leveraged by malicious actors to update WMI access controls and schedule malicious tasks, respectively, both of which are common techniques for establishing persistence within a compromised system.
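Registry paths are case-insensitive (the task cache key may appear as “Taskcache” or “TaskCache”), so any watchlist for these keys should normalise case before comparing. The sketch below assumes a hypothetical feed of (operation, path) pairs already extracted from remote Winreg traffic and returns a label when an operation touches either watched key or one of its subkeys. The labels and the hive-prefix handling are illustrative assumptions, not a description of how Darktrace parses this traffic.

```python
# Watched keys from this incident; the labels are illustrative.
WATCHED_KEYS = {
    r"SYSTEM\CurrentControlSet\Control\WMI\Security": "WMI/ETW security descriptors",
    r"Software\Microsoft\Windows NT\CurrentVersion\Schedule\TaskCache\Tasks": "scheduled task cache",
}
HIVE_PREFIXES = ("hklm\\", "hkey_local_machine\\")

def normalise_key(path):
    """Lower-case a registry path, trim separators and drop an HKLM hive prefix."""
    p = path.strip().strip("\\").lower()
    for prefix in HIVE_PREFIXES:
        if p.startswith(prefix):
            p = p[len(prefix):]
            break
    return p

def classify_registry_access(operation, path):
    """Label a remote Winreg operation that touches a watched key or any subkey."""
    p = normalise_key(path)
    for key, label in WATCHED_KEYS.items():
        k = normalise_key(key)
        # Exact key, or a subkey such as an individual task's GUID entry.
        if p == k or p.startswith(k + "\\"):
            return f"{operation} on {label}"
    return None
```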

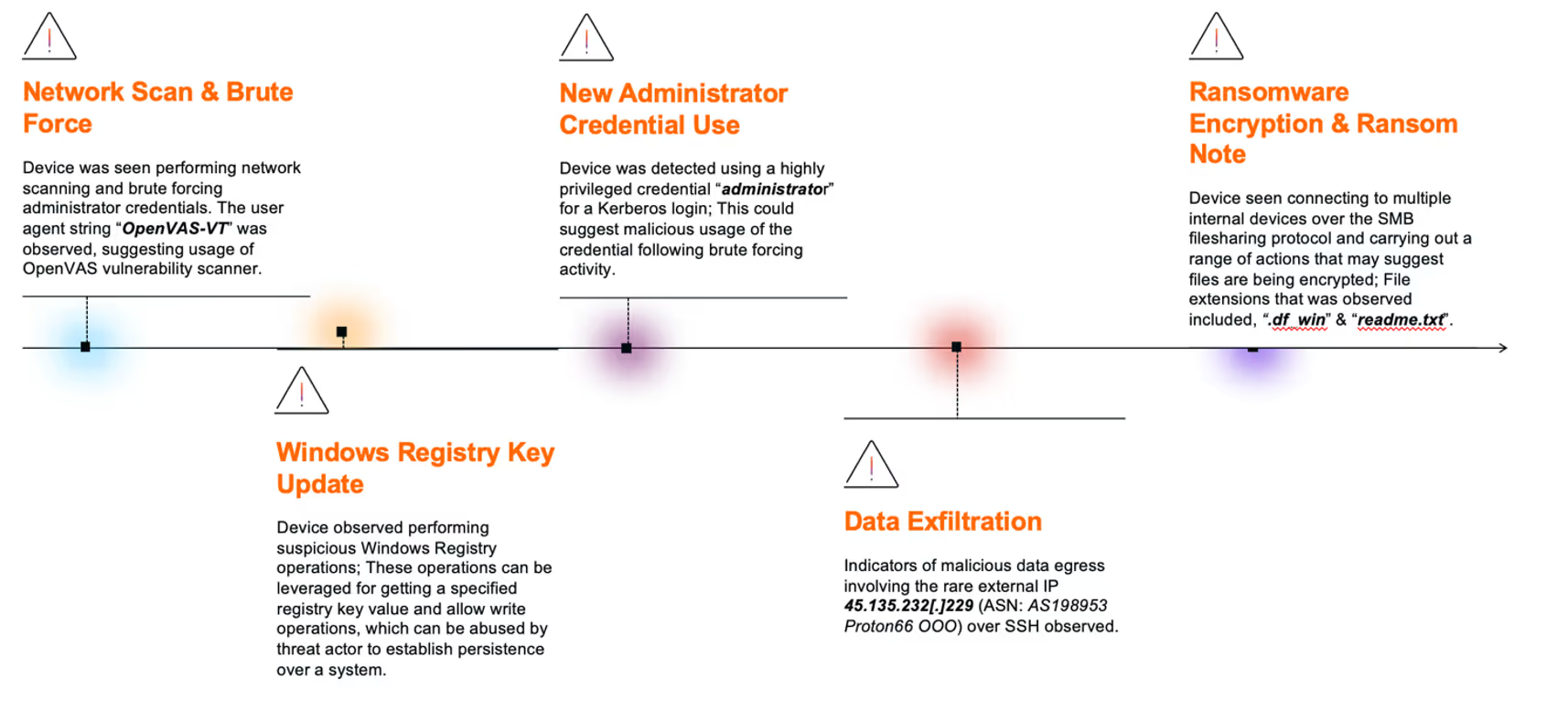

3. New Administrator Credential Usage

Darktrace subsequently detected the device using the highly privileged “administrator” credential for the first time, via a successful Kerberos login. Shortly afterwards, the same credential was used again in a successful SMB session.

These marked the first instances of authentication using the “administrator” credential across the customer’s environment, suggesting potential malicious use of the credential following the earlier brute-force activity.
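A minimal sketch of first-time credential detection follows, assuming a hypothetical `Login` record for successful authentications and a `baseline` of credentials already seen in the environment. It reports only the first environment-wide use of each credential; a practical implementation would need a long learning period and per-device context, which this sketch omits.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Login:
    # Hypothetical successful-authentication record.
    timestamp: float
    device: str
    credential: str
    protocol: str  # e.g. "kerberos" or "smb"

def first_time_credential_use(logins, baseline=frozenset()):
    """Return the logins that mark the first use of each credential
    across the environment, ignoring credentials already in `baseline`."""
    seen = {c.lower() for c in baseline}
    firsts = []
    for rec in sorted(logins, key=lambda r: r.timestamp):
        cred = rec.credential.lower()
        if cred not in seen:
            seen.add(cred)
            firsts.append(rec)
    return firsts
```

Applied to the sequence described here, the Kerberos login would be reported as the first use of “administrator”, while the later SMB session would not, because the credential is already in `seen` by then.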

4. Data Exfiltration

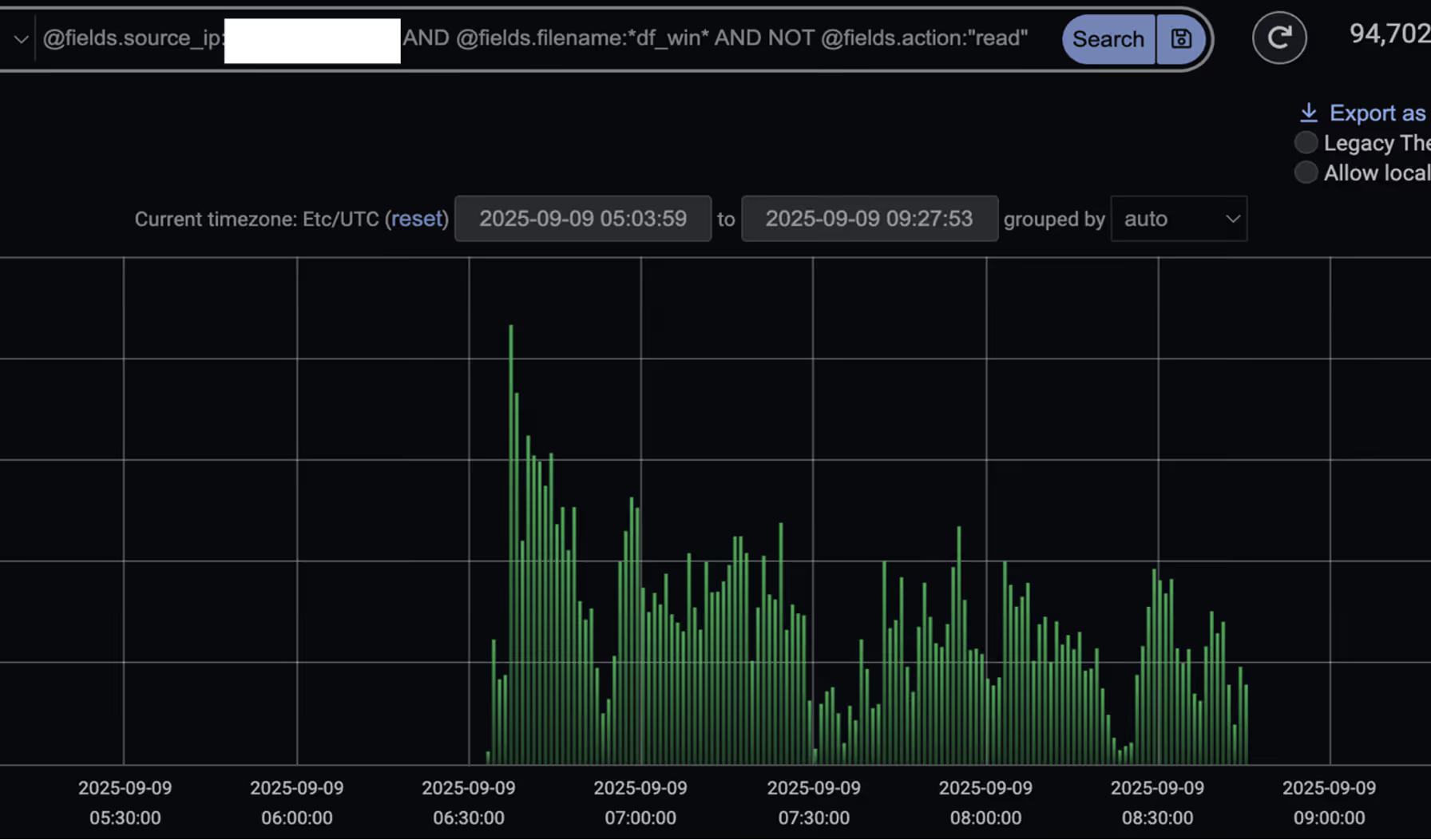

Prior to ransomware deployment, several infected devices were observed exfiltrating data to the malicious IP address 45.135.232[.]229 over SSH [7][8]. This was followed by a device downloading data from other internal devices and transferring an unusually large volume of data to the same external endpoint.

The IP address was first seen on the network on September 2, 2025, the same date as the data exfiltration activity that preceded ransomware deployment and encryption.

Further analysis revealed that the endpoint was geolocated in Russia and registered to the malicious hosting provider Proton66. Multiple external researchers have reported malicious activity involving the same Proton66 ASN (AS198953 Proton66 OOO) as far back as April 2025. This activity notably included vulnerability scanning, exploitation attempts, and phishing campaigns that ultimately delivered malware [4][5][6].

Data exfiltration endpoint details:

- Endpoint: 45.135.232[.]229

- ASN: AS198953 Proton66 OOO

- Transport protocol: TCP

- Application protocol: SSH

- Destination port: 22
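The exfiltration pattern above, a large outbound transfer over SSH to a rarely seen external IP, can be approximated by aggregating outbound bytes per source, destination and protocol. This sketch assumes a hypothetical `Conn` summary record and uses a 1 GiB threshold loosely inspired by the “Uncommon 1 GiB Outbound” model listed in the appendix; the threshold and the `known_external` allowlist are illustrative, not Darktrace’s implementation.

```python
import ipaddress
from collections import defaultdict
from dataclasses import dataclass

GIB = 1024 ** 3

@dataclass(frozen=True)
class Conn:
    # Hypothetical per-connection summary (not a Darktrace schema).
    src: str
    dst: str
    dst_port: int
    app_proto: str  # e.g. "ssh"
    bytes_out: int

def large_external_uploads(conns, threshold=GIB, known_external=frozenset()):
    """Sum outbound bytes per (src, dst, protocol) for globally routable
    destinations not in `known_external`; return totals at or above `threshold`."""
    totals = defaultdict(int)
    for c in conns:
        if not ipaddress.ip_address(c.dst).is_global:
            continue  # skip internal, private and reserved destinations
        if c.dst in known_external:
            continue  # skip endpoints already established as normal
        totals[(c.src, c.dst, c.app_proto)] += c.bytes_out
    return {key: total for key, total in totals.items() if total >= threshold}
```

Summing across connections matters because exfiltration is often split over several sessions, each of which may look unremarkable on its own.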

![Darktrace’s summary of the external IP 45.135.232[.]229, first detected on September 2, 2025. The right-hand side showcases model alerts triggered related to this endpoint including multiple data exfiltration related model alerts.](https://cdn.prod.website-files.com/626ff4d25aca2edf4325ff97/690baa3e2f4def9f29b1ecae_Screenshot%202025-11-05%20at%2011.48.57%E2%80%AFAM.avif)

Further investigation of the endpoint using open-source intelligence (OSINT) revealed that it hosted a Microsoft Internet Information Services (IIS) Manager console webpage. This interface is typically used to configure and manage web servers; however, threat actors have been known to abuse similar setups, either by using fake certificate warnings to trick users into downloading malware, or by deploying malicious IIS modules to steal credentials.

![Live screenshot of the destination (45.135.232[.]229), captured via OSINT sources, displaying a Microsoft IIS Manager console webpage.](https://cdn.prod.website-files.com/626ff4d25aca2edf4325ff97/690baa68a5b7b13fba715069_Screenshot%202025-11-05%20at%2011.49.52%E2%80%AFAM.avif)

5. Ransomware Encryption & Ransom Note

Multiple devices were later observed connecting to other internal devices via SMB and performing a range of actions indicative of file encryption. This activity prompted Darktrace’s Cyber AI Analyst to launch an autonomous investigation, during which it pieced together the associated activity and provided concrete timestamps of events for the customer’s visibility.

During this activity, several devices were seen writing a file named “readme.txt” to multiple locations, including network-accessible webroot paths such as inetpub\ and wwwroot\. This “readme.txt” file, later confirmed to be the ransom note, claimed the threat actors were affiliated with DragonForce.

At the same time, devices were seen performing SMB Move, Write and ReadWrite actions involving files with the “.df_win” extension across other internal devices, suggesting that file encryption was actively occurring.
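The encryption stage can be sketched in the same spirit: look for SMB Move, Write and ReadWrite actions that produce “.df_win” files, flag source devices that do so on several hosts, and record “readme.txt” writes as corroboration. The `SmbAction` record and the `min_targets` threshold are hypothetical. Because “readme.txt” is a very common file name, this sketch does not flag on ransom-note writes alone.

```python
from collections import defaultdict
from dataclasses import dataclass

ENCRYPTED_EXT = ".df_win"   # extension observed in this incident
RANSOM_NOTE = "readme.txt"  # ransom note file name observed in this incident
WRITE_ACTIONS = {"Move", "Write", "ReadWrite"}

@dataclass(frozen=True)
class SmbAction:
    # Hypothetical SMB file-action record.
    src: str
    dst: str
    action: str  # e.g. "Move", "Write", "ReadWrite", "Read"
    path: str

def ransomware_indicators(actions, min_targets=2):
    """Flag sources that wrote encrypted-extension files on at least
    `min_targets` hosts, reporting ransom-note writes as corroboration."""
    enc_targets = defaultdict(set)
    note_targets = defaultdict(set)
    for a in actions:
        if a.action not in WRITE_ACTIONS:
            continue
        # Use only the final path component, case-insensitively.
        name = a.path.replace("/", "\\").rsplit("\\", 1)[-1].lower()
        if name.endswith(ENCRYPTED_EXT):
            enc_targets[a.src].add(a.dst)
        elif name == RANSOM_NOTE:
            note_targets[a.src].add(a.dst)
    flagged = {}
    for src, enc in enc_targets.items():
        if len(enc) >= min_targets:
            flagged[src] = {"encrypted_on": enc, "notes_on": note_targets.get(src, set())}
    return flagged
```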

Conclusion

The rise of Ransomware-as-a-Service (RaaS) and increased attacker customization are fragmenting tactics, techniques, and procedures (TTPs), making it increasingly difficult for security teams to prepare for and defend against each unique intrusion. RaaS providers like DragonForce further complicate this challenge by enabling a wide range of affiliates with varying levels of sophistication [9].

In this instance, Darktrace was able to identify several stages of the attack kill chain, including network scanning, the first-time use of privileged credentials, data exfiltration, and ultimately ransomware encryption. Had the customer enabled Darktrace’s Autonomous Response capability, it would have taken timely action to interrupt the attack in its early stages, preventing the eventual data exfiltration and ransomware detonation.

Credit to Justin Torres (Senior Cyber Analyst), Nathaniel Jones (VP, Security & AI Strategy, FCISO) and Emma Foulger (Global Threat Research Operations Lead).

Edited by Ryan Traill (Analyst Content Lead)


Appendices

References:

1. https://www.infosecurity-magazine.com/news/dragonforce-goup-ms-coop-harrods/

2. https://www.picussecurity.com/resource/blog/dragonforce-ransomware-attacks-retail-giants

3. https://blog.checkpoint.com/security/dragonforce-ransomware-redefining-hybrid-extortion-in-2025/

7. https://www.virustotal.com/gui/ip-address/45.135.232.229

8. https://spur.us/context/45.135.232.229

9. https://www.group-ib.com/blog/dragonforce-ransomware/

IoC - Type - Description

· 45.135.232[.]229 - IP address - Endpoint Associated with Data Exfiltration

· readme.txt - File name - Ransom Note File Name

· .df_win - File extension - File Encryption Extension Observed
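Indicators such as the IP address in this table are defanged (“[.]” in place of “.”) so they cannot be clicked or resolved by accident. The sketch below shows one way to refang and validate them before matching against log data. The `matches_ioc` helper and its category labels are hypothetical, and these indicators are specific to this incident rather than a general DragonForce signature.

```python
import ipaddress

def refang(indicator):
    """Undo common defanging, e.g. '203.0.113[.]7' -> '203.0.113.7'."""
    return indicator.strip().replace("[.]", ".").replace("(.)", ".")

# IoCs as published in the table (defanged form where applicable).
PUBLISHED_IPS = ["45.135.232[.]229"]
NOTE_NAMES = {"readme.txt"}
ENCRYPTED_EXTS = {".df_win"}

# Refang and validate once; ip_address() raises ValueError on a malformed entry.
IOC_IPS = {ipaddress.ip_address(refang(ip)) for ip in PUBLISHED_IPS}

def matches_ioc(dst_ip=None, filename=None):
    """Return the IoC categories that a single observation matches."""
    hits = []
    if dst_ip is not None and ipaddress.ip_address(dst_ip) in IOC_IPS:
        hits.append("exfiltration endpoint")
    if filename is not None:
        name = filename.lower()
        if name in NOTE_NAMES:
            hits.append("ransom note")
        if any(name.endswith(ext) for ext in ENCRYPTED_EXTS):
            hits.append("encrypted file")
    return hits
```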

MITRE ATT&CK Mapping

DragonForce TTPs vs Darktrace Models

Initial Access:

· Anomalous Connection::Callback on Web Facing Device

Command and Control:

· Compromise::SSL or HTTP Beacon

· Compromise::Beacon to Young Endpoint

· Compromise::Beaconing on Uncommon Port

· Compromise::Suspicious SSL Activity

· Anomalous Connection::Devices Beaconing to New Rare IP

· Compromise::Suspicious HTTP and Anomalous Activity

· DNS Tunnel with TXT Records

Tooling:

· Anomalous File::EXE from Rare External Location

· Anomalous File::Masqueraded File Transfer

· Anomalous File::Numeric File Download

· Anomalous File::Script from Rare External Location

· Anomalous File::Uncommon Microsoft File then Exe

· Anomalous File::Zip or Gzip from Rare External Location

· Anomalous File::Internet Facing System File Download

Reconnaissance:

· Device::Suspicious SMB Query

· Device::ICMP Address Scan

· Anomalous Connection::SMB Enumeration

· Device::Possible SMB/NTLM Reconnaissance

· Anomalous Connection::Possible Share Enumeration Activity

· Device::Possible Active Directory Enumeration

· Anomalous Connection::Large Volume of LDAP Download

· Device::Suspicious LDAP Search Operation

Lateral Movement:

· User::Suspicious Admin SMB Session

· Anomalous Connection::Unusual Internal Remote Desktop

· Anomalous Connection::Unusual Long Remote Desktop Session

· Anomalous Connection::Unusual Admin RDP Session

· User::New Admin Credentials on Client

· User::New Admin Credentials on Server

· Multiple Device Correlations::Spreading New Admin Credentials

· Anomalous Connection::Powershell to Rare External

· Device::New PowerShell User Agent

· Anomalous Active Directory Web Services

· Compromise::Unusual SVCCTL Activity

Evasion:

· Unusual Activity::Anomalous SMB Delete Volume

Persistence:

· Device::Anomalous ITaskScheduler Activity

· Device::AT Service Scheduled Task

Actions on Objectives:

· Compromise::Ransomware::Suspicious SMB Activity (EM)

· Anomalous Connection::Sustained MIME Type Conversion

· Compromise::Ransomware::SMB Reads then Writes with Additional Extensions

· Compromise::Ransomware::Possible Ransom Note Write

· Data Sent to Rare Domain

· Uncommon 1 GiB Outbound

· Enhanced Unusual External Data Transfer

Darktrace Cyber AI Analyst Coverage/Investigation Events:

· Web Application Vulnerability Scanning of Multiple Devices

· Port Scanning

· Large Volume of SMB Login Failures

· Unusual RDP Connections

· Widespread Web Application Vulnerability Scanning

· Unusual SSH Connections

· Unusual Repeated Connections

· Possible Application Layer Reconnaissance Activity

· Unusual Administrative Connections

· Suspicious Remote WMI Activity

· Extensive Unusual Administrative Connections

· Suspicious Directory Replication Service Activity

· Scanning of Multiple Devices

· Unusual External Data Transfer

· SMB Write of Suspicious File

· Suspicious Remote Service Control Activity

· Access of Probable Unencrypted Password Files

· Internal Download and External Upload

· Possible Encryption of Files over SMB

· SMB Writes of Suspicious Files to Multiple Devices

The content provided in this blog is published by Darktrace for general informational purposes only and reflects our understanding of cybersecurity topics, trends, incidents, and developments at the time of publication. While we strive to ensure accuracy and relevance, the information is provided “as is” without any representations or warranties, express or implied. Darktrace makes no guarantees regarding the completeness, accuracy, reliability, or timeliness of any information presented and expressly disclaims all warranties.

Nothing in this blog constitutes legal, technical, or professional advice, and readers should consult qualified professionals before acting on any information contained herein. Any references to third-party organizations, technologies, threat actors, or incidents are for informational purposes only and do not imply affiliation, endorsement, or recommendation.

Darktrace, its affiliates, employees, or agents shall not be held liable for any loss, damage, or harm arising from the use of or reliance on the information in this blog.

The cybersecurity landscape evolves rapidly, and blog content may become outdated or superseded. We reserve the right to update, modify, or remove any content.
