Introduction

On October 14, 2025, Microsoft disclosed a new critical vulnerability affecting Windows Server Update Services (WSUS), CVE-2025-59287. Successful exploitation could allow an unauthenticated attacker to execute code remotely [1][6].

WSUS allows for centralized distribution of Microsoft product updates [3]; a server running WSUS is likely to have significant privileges within a network, making it a valuable target for threat actors. While WSUS servers are not necessarily expected to be open to the internet, open-source intelligence (OSINT) has reported thousands of publicly exposed instances that may be vulnerable to exploitation [2].

Microsoft’s initial ‘Patch Tuesday’ update for this vulnerability did not fully mitigate the risk, so an out-of-band update followed on October 23 [4][5]. Widespread exploitation of the vulnerability was observed shortly after this security update [6], prompting CISA to add CVE-2025-59287 to its Known Exploited Vulnerabilities (KEV) Catalog on October 24 [7].

Attack Overview

The Darktrace Threat Research team have recently identified multiple potential cases of CVE-2025-59287 exploitation, two of which are detailed here. While the likely initial access method is consistent across the cases, the follow-up activities differed, demonstrating the variety of ways in which such a CVE can be exploited to fulfil each attacker’s specific goals.

The first signs of suspicious activity across both customers were detected by Darktrace on October 24, the same day this vulnerability was added to CISA’s KEV. Both cases discussed here involve customers based in the United States.

Case Study 1

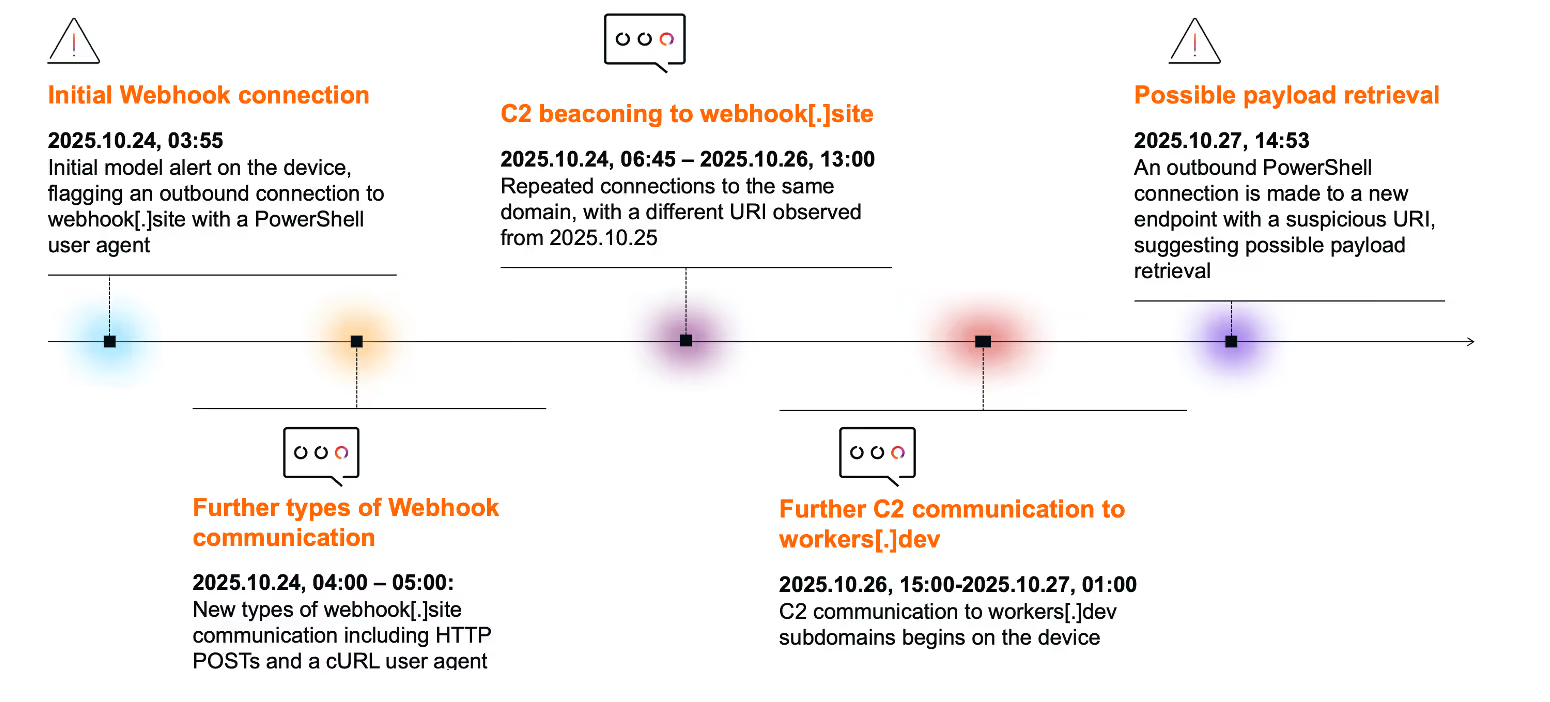

The first case, involving a customer in the Information and Communication sector, began with an internet-facing device making an outbound connection to the hostname webhook[.]site. Observed network traffic indicates the device was a WSUS server.

OSINT has reported abuse of the workers[.]dev service in exploitation of CVE-2025-59287, where enumerated network information gathered through running a script on the compromised device was exfiltrated using this service [8].

In this case, the majority of connectivity seen to webhook[.]site involved a PowerShell user agent; however, cURL user agents were also seen, with some connections taking the form of HTTP POSTs. This connectivity appears to align closely with OSINT reports of CVE-2025-59287 post-exploitation behaviour [8][9].
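
As a rough illustration of the pattern described above, the following minimal sketch filters HTTP records for connections to webhook[.]site made with a PowerShell or cURL user agent. The `HttpEvent` record shape, the function names, and the marker strings are assumptions made for this example only; it is not a representation of how Darktrace models detect this activity, and real proxy or sensor logs will use different field names.

```python
from dataclasses import dataclass


@dataclass
class HttpEvent:
    """Hypothetical log record; field names are assumptions for this sketch."""
    src: str
    host: str
    method: str
    user_agent: str


def refang(indicator: str) -> str:
    """Turn a defanged indicator such as 'webhook[.]site' back into a hostname."""
    return indicator.replace("[.]", ".")


# Hostname taken from the case study; the user agent markers are assumed substrings.
SUSPECT_HOSTS = (refang("webhook[.]site"),)
SUSPECT_AGENT_MARKERS = ("powershell", "curl")


def is_suspect(event: HttpEvent) -> bool:
    """True when the host is (a subdomain of) a suspect host AND the agent looks scripted."""
    host = event.host.lower().rstrip(".")
    host_match = any(host == h or host.endswith("." + h) for h in SUSPECT_HOSTS)
    agent = event.user_agent.lower()
    agent_match = any(marker in agent for marker in SUSPECT_AGENT_MARKERS)
    return host_match and agent_match


def suspect_events(events):
    """Return only the events that match both conditions."""
    return [e for e in events if is_suspect(e)]
```

Requiring both conditions keeps the filter narrow: ordinary browser visits to the service and scripted requests to unrelated hosts are ignored.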

Connections to webhook[.]site continued until October 26. A single URI was seen consistently until October 25, after which the connections used a second URI with a similar format.

Later on October 26, an escalation in command-and-control (C2) communication appears to have occurred, with the device starting to make repeated connections to two rare workers[.]dev subdomains (royal-boat-bf05.qgtxtebl.workers[.]dev and chat.hcqhajfv.workers[.]dev), consistent with C2 beaconing. While workers[.]dev is associated with the legitimate Cloudflare Workers service, the service is commonly abused by malicious actors for C2 infrastructure. The unusual connections to both webhook[.]site and workers[.]dev triggered multiple alerts in Darktrace, including high-fidelity Enhanced Monitoring alerts and Autonomous Response actions.
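
One common way to reason about beaconing is to measure how regular the gaps between connections are. The sketch below scores a list of connection timestamps by the coefficient of variation of their inter-connection gaps, where a low value means evenly spaced connections. The function names and the `max_cv` and `min_connections` thresholds are illustrative assumptions, not values taken from this investigation or from Darktrace's models.

```python
from statistics import mean, pstdev


def beacon_score(timestamps):
    """Coefficient of variation of the gaps between connections.

    Lower means more regular. Returns None when there are fewer than
    two gaps or when all connections share the same timestamp.
    """
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    if len(gaps) < 2:
        return None
    average_gap = mean(gaps)
    if average_gap == 0:
        return None
    return pstdev(gaps) / average_gap


def looks_like_beaconing(timestamps, max_cv=0.1, min_connections=10):
    """Heuristic only: enough connections, spaced at near-constant intervals.

    Both thresholds are assumed values chosen for illustration.
    """
    if len(timestamps) < min_connections:
        return False
    score = beacon_score(timestamps)
    return score is not None and score <= max_cv
```

Timestamps are expected as numbers, for example epoch seconds. Real beacons often add jitter, so a fixed threshold like this is best treated as a starting point for triage rather than a verdict.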

Infrastructure insight

Hosted on royal-boat-bf05.qgtxtebl.workers[.]dev is a Microsoft Installer file (MSI) named v3.msi.

Contained in the MSI file are two Cabinet files named “Sample.cab” and “part2.cab”. Extracting the contents of the cab files yields a file named “Config” and a binary named “ServiceEXE”. ServiceEXE is the legitimate DFIR tool Velociraptor, and “Config” contains the configuration details, which include chat.hcqhajfv.workers[.]dev as the server_url, suggesting that Velociraptor is being used as a tunnel to the C2. Additionally, the configuration points to version 0.73.4, a version of Velociraptor that is vulnerable to CVE-2025-6264, a privilege escalation vulnerability.

Velociraptor, a legitimate security tool maintained by Rapid7, has been used recently in malicious campaigns. A vulnerable version of the tool has been used by threat actors for command execution and endpoint takeover, while other campaigns have used Velociraptor to create a tunnel to the C2, similar to what was observed in this case [10].

The workers[.]dev communication continued into the early hours of October 27. The most recent suspicious behavior observed on the device involved an outbound connection to a new IP for the network - 185.69.24[.]18/singapure - potentially indicating payload retrieval.

The payload retrieved from “/singapure” is a UPX-packed Windows binary. Once unpacked, the binary proves to be an open-source Golang stealer named “Skuld Stealer”. Skuld Stealer is capable of stealing crypto wallets, files, system information, browser data and tokens. Additionally, it contains anti-debugging and anti-VM logic, along with a UAC bypass [11].
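
For readers triaging similar samples, a quick and imperfect way to spot default UPX packing is to read the section names from the PE header, since an unmodified UPX build typically names its sections UPX0 and UPX1. The sketch below parses just enough of the PE layout to list those names. The function names are hypothetical, and this is a heuristic under stated assumptions rather than the analysis method used in this investigation: section names can be renamed, so a negative result does not show that a file is unpacked.

```python
import struct


def pe_section_names(data: bytes):
    """Return section names from a PE file's section table, or [] if not a PE."""
    if len(data) < 0x40 or data[:2] != b"MZ":
        return []
    # e_lfanew at offset 0x3C points to the 'PE\0\0' signature.
    (pe_offset,) = struct.unpack_from("<I", data, 0x3C)
    if len(data) < pe_offset + 24 or data[pe_offset:pe_offset + 4] != b"PE\x00\x00":
        return []
    # COFF header follows the signature: NumberOfSections at +6,
    # SizeOfOptionalHeader at +20 (both relative to the signature).
    (num_sections,) = struct.unpack_from("<H", data, pe_offset + 6)
    (optional_size,) = struct.unpack_from("<H", data, pe_offset + 20)
    table_start = pe_offset + 24 + optional_size
    names = []
    for index in range(num_sections):
        start = table_start + index * 40  # each section header is 40 bytes
        raw_name = data[start:start + 8]  # name is the first 8 bytes
        if len(raw_name) < 8:
            break
        names.append(raw_name.rstrip(b"\x00").decode("ascii", errors="replace"))
    return names


def looks_upx_packed(data: bytes) -> bool:
    """Heuristic: any section name starting with 'UPX' suggests default UPX packing."""
    return any(name.startswith("UPX") for name in pe_section_names(data))
```

In practice the bytes would come from reading a quarantined sample in binary mode, ideally inside an isolated analysis environment.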

Case Study 2

The second case involved a customer within the Education sector. The affected device was also internet-facing, with network traffic indicating it was a WSUS server.

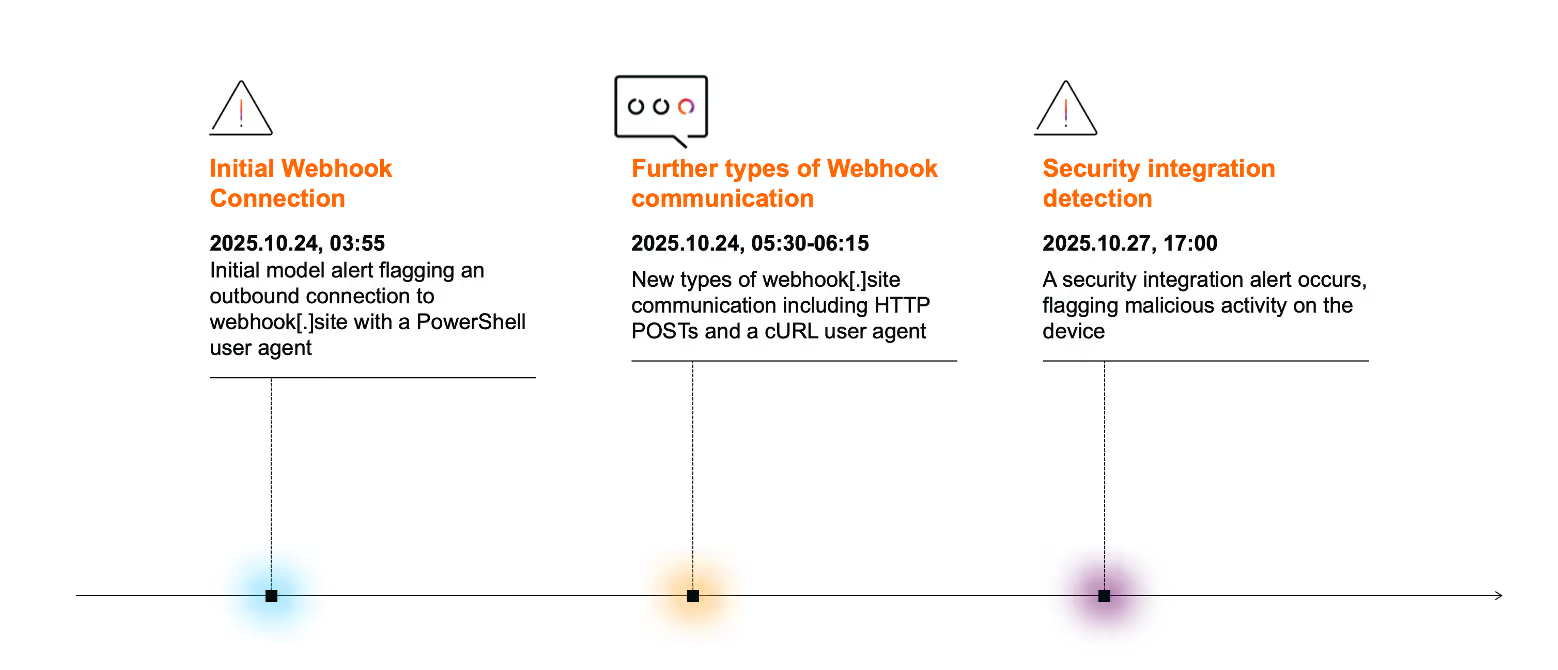

Suspicious activity in this case once again began on October 24, notably only a few seconds after initial signs of compromise were observed in the first case. Initial anomalous behaviour also closely aligned, with outbound PowerShell connections to webhook[.]site, and then later connections, including HTTP POSTs, to the same endpoint with a cURL user agent.

While Darktrace did not observe any anomalous network activity on the device after October 24, the customer’s security integration resulted in an additional alert on October 27 for malicious activity, suggesting that the compromise may have continued locally.

By leveraging Darktrace’s security integrations, customers can investigate activity across different sources in a seamless manner, gaining additional insight and context to an attack.

Conclusion

Exploitation of a CVE can lead to a wide range of outcomes. In some cases, it may be limited to just a single device with a focused objective, such as exfiltration of sensitive data. In others, it could lead to lateral movement and a full network compromise, including ransomware deployment. As the threat of internet-facing exploitation continues to grow, security teams must be prepared to defend against such a possibility, regardless of the attack type or scale.

By focussing on detection of anomalous behaviour rather than relying on signatures associated with a specific CVE exploit, Darktrace is able to alert on post-exploitation activity regardless of the kind of behaviour seen. In addition, leveraging security integrations provides further context on activities beyond the visibility of Darktrace / NETWORK™, enabling defenders to investigate and respond to attacks more effectively.

With adversaries weaponizing even trusted incident response tools, maintaining broad visibility and rapid response capabilities becomes critical to mitigating post-exploitation risk.

Credit to Emma Foulger (Global Threat Research Operations Lead), Tara Gould (Threat Research Lead), Eugene Chua (Principal Cyber Analyst & Analyst Team Lead), and Nathaniel Jones (VP, Security & AI Strategy, Field CISO).

Edited by Ryan Traill (Analyst Content Lead)

Appendices

References

1. https://nvd.nist.gov/vuln/detail/CVE-2025-59287

5. https://msrc.microsoft.com/update-guide/vulnerability/CVE-2025-59287

6. https://thehackernews.com/2025/10/microsoft-issues-emergency-patch-for.html

7. https://www.cisa.gov/known-exploited-vulnerabilities-catalog

9. https://unit42.paloaltonetworks.com/microsoft-cve-2025-59287/

10. https://blog.talosintelligence.com/velociraptor-leveraged-in-ransomware-attacks/

11. https://github.com/hackirby/skuld

Darktrace Model Detections

· Device / New PowerShell User Agent

· Anomalous Connection / Powershell to Rare External

· Compromise / Possible Tunnelling to Bin Services

· Compromise / High Priority Tunnelling to Bin Services

· Anomalous Server Activity / New User Agent from Internet Facing System

· Device / New User Agent

· Device / Internet Facing Device with High Priority Alert

· Anomalous Connection / Multiple HTTP POSTs to Rare Hostname

· Anomalous Server Activity / Rare External from Server

· Compromise / Agent Beacon (Long Period)

· Device / Large Number of Model Alerts

· Compromise / Agent Beacon (Medium Period)

· Device / Long Agent Connection to New Endpoint

· Compromise / Slow Beaconing Activity To External Rare

· Security Integration / Low Severity Integration Detection

· Antigena / Network / Significant Anomaly / Antigena Alerts Over Time Block

· Antigena / Network / Significant Anomaly / Antigena Enhanced Monitoring from Server Block

· Antigena / Network / External Threat / Antigena Suspicious Activity Block

· Antigena / Network / Significant Anomaly / Antigena Significant Server Anomaly Block

List of Indicators of Compromise (IoCs)

IoC - Type - Description + Confidence

o royal-boat-bf05.qgtxtebl.workers[.]dev – Hostname – Likely C2 Infrastructure

o royal-boat-bf05.qgtxtebl.workers[.]dev/v3.msi - URI – Likely payload

o chat.hcqhajfv.workers[.]dev – Hostname – Possible C2 Infrastructure

o 185.69.24[.]18 – IP address – Possible C2 Infrastructure

o 185.69.24[.]18/bin.msi - URI – Likely payload

o 185.69.24[.]18/singapure - URI – Likely payload
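
The indicators above are defanged for safe publication. A minimal sketch of how they might be refanged and matched against log lines follows; the helper names are hypothetical, the indicator list is copied from the hostnames and IP address above, and the boundary checks are an assumption added so that 185.69.24[.]18 does not match a longer address such as one ending in .180.

```python
import re

# Defanged hostname and IP indicators copied from the list above.
IOCS_DEFANGED = [
    "royal-boat-bf05.qgtxtebl.workers[.]dev",
    "chat.hcqhajfv.workers[.]dev",
    "185.69.24[.]18",
]


def refang(indicator: str) -> str:
    """Replace the '[.]' defanging marker with a plain dot."""
    return indicator.replace("[.]", ".")


def _pattern(indicator: str):
    # The lookbehind and lookahead stop the indicator matching inside a
    # longer hostname or IP address.
    return re.compile(
        r"(?<![\w.-])" + re.escape(refang(indicator)) + r"(?![\w-])",
        re.IGNORECASE,
    )


def matching_iocs(log_line: str, iocs=IOCS_DEFANGED):
    """Return the defanged indicators that appear in the given log line."""
    return [ioc for ioc in iocs if _pattern(ioc).search(log_line)]
```

Returning the defanged form keeps any downstream report safe to paste into tickets or chat without creating clickable links.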

The content provided in this blog is published by Darktrace for general informational purposes only and reflects our understanding of cybersecurity topics, trends, incidents, and developments at the time of publication. While we strive to ensure accuracy and relevance, the information is provided “as is” without any representations or warranties, express or implied. Darktrace makes no guarantees regarding the completeness, accuracy, reliability, or timeliness of any information presented and expressly disclaims all warranties.

Nothing in this blog constitutes legal, technical, or professional advice, and readers should consult qualified professionals before acting on any information contained herein. Any references to third-party organizations, technologies, threat actors, or incidents are for informational purposes only and do not imply affiliation, endorsement, or recommendation.

Darktrace, its affiliates, employees, or agents shall not be held liable for any loss, damage, or harm arising from the use of or reliance on the information in this blog.

The cybersecurity landscape evolves rapidly, and blog content may become outdated or superseded. We reserve the right to update, modify, or remove any content
