Secure your AI with Darktrace

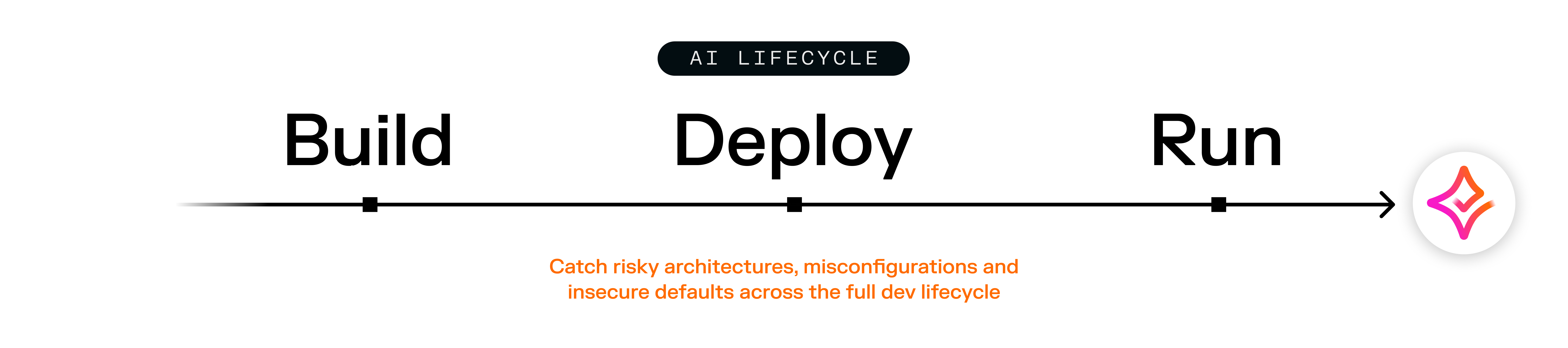

AI adoption is accelerating faster than governance can keep pace, expanding attack surfaces and creating unseen risks. From data and models to AI agents and integrations, security starts with knowing what to protect.

AI adoption is outpacing enterprise control

GenAI assistants are being embedded in SaaS applications. Autonomous agents now make decisions in real time. And suppliers are embedding no-code tools that enable teams to build their own AI solutions.

But while adoption and innovation accelerate, security is still catching up. AI systems behave in ways that traditional defenses were never designed to monitor:

Sensitive data can be exposed through prompts that carry none of the classification labels traditional DLP relies on

AI agents can hold greater privileges than the users they interact with, allowing unintended access

Misconfigurations can create hidden pathways to critical systems

Employees and third parties can easily introduce unmonitored “shadow AI” tools without realizing the risks

To safely and responsibly adopt AI at scale, organizations must have visibility over how and where AI is used, understand how it behaves, and be able to intervene when that behavior deviates from what is expected.

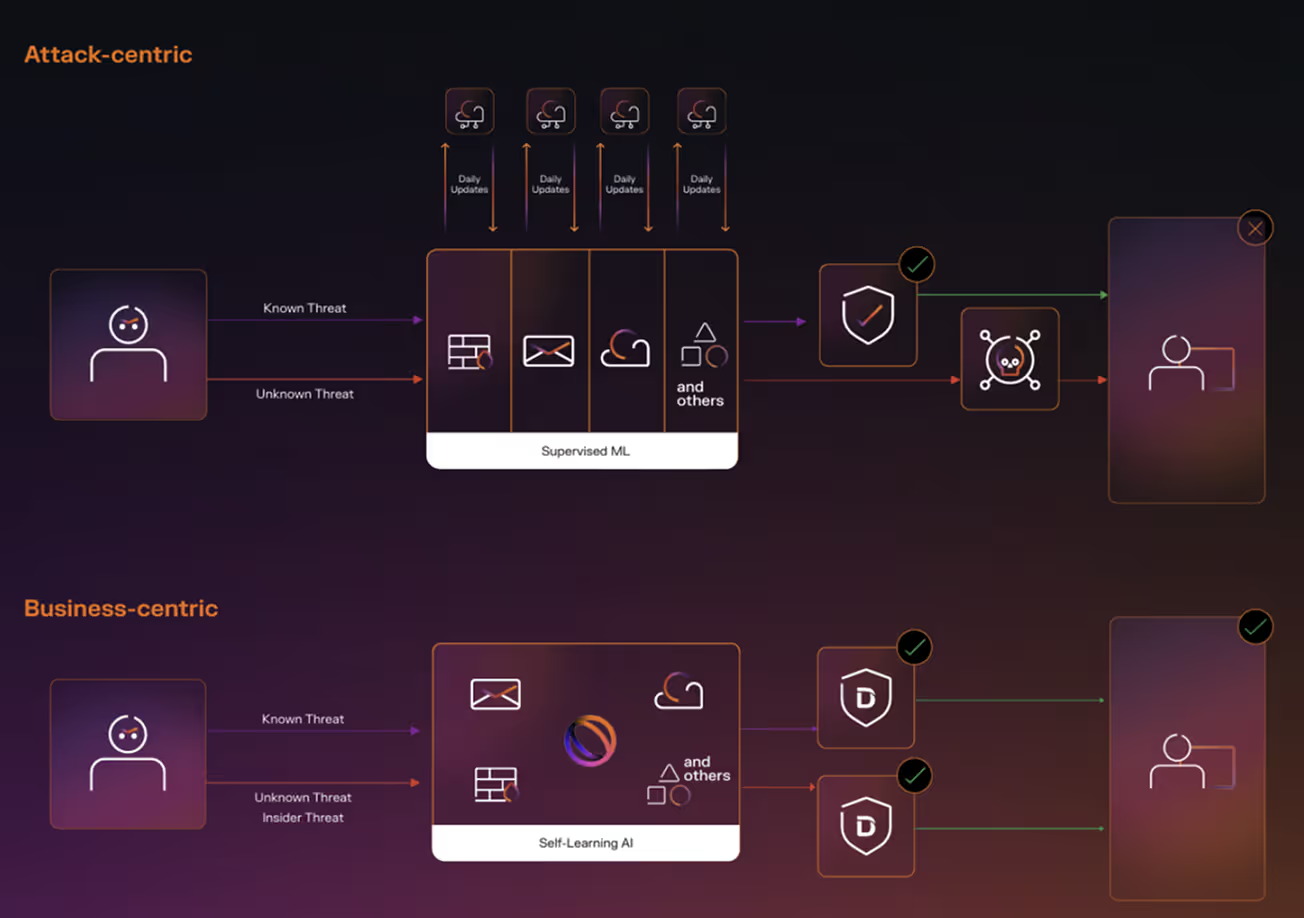

AI creates complexity that needs a business-centric approach

Most of the cybersecurity industry relies on a common approach: using historical attack patterns to stop future threats. This method was never built to handle the complexity and subtlety of AI-driven behavior. Securing AI requires interpreting ambiguous interactions, uncovering subtle intent within extended conversations, understanding how access accumulates over time, and recognizing when behavior, whether human or machine, begins to drift toward malicious activity.

While other vendors are only now beginning to explore behavioral analysis, often by layering GenAI onto older architectures, Darktrace has spent more than a decade building AI purposefully designed to understand and adapt to evolving behavior in complex environments. We take a business-centric approach, building technology that learns directly from the environment it protects and then identifies malicious actions that deviate from normal operations. This means we can stop novel or AI-related threats on the first encounter, without relying on databases of known attacks.

Monitor the prompts driving GenAI agents and assistants

Real‑time inspection of prompts, sessions, and responses across:

Enterprise GenAI, like Microsoft Copilot and ChatGPT Enterprise

Leading low‑code SaaS environments like Microsoft Copilot Studio

High‑code AI environments like Amazon Bedrock and SageMaker

General SaaS suppliers like Salesforce and Microsoft 365

SASE

Behavioral analytics separate business-aligned activity from significant, risky deviations. They detect sophisticated conversational prompt attacks and malicious chaining, and can block unsafe actions where supported.

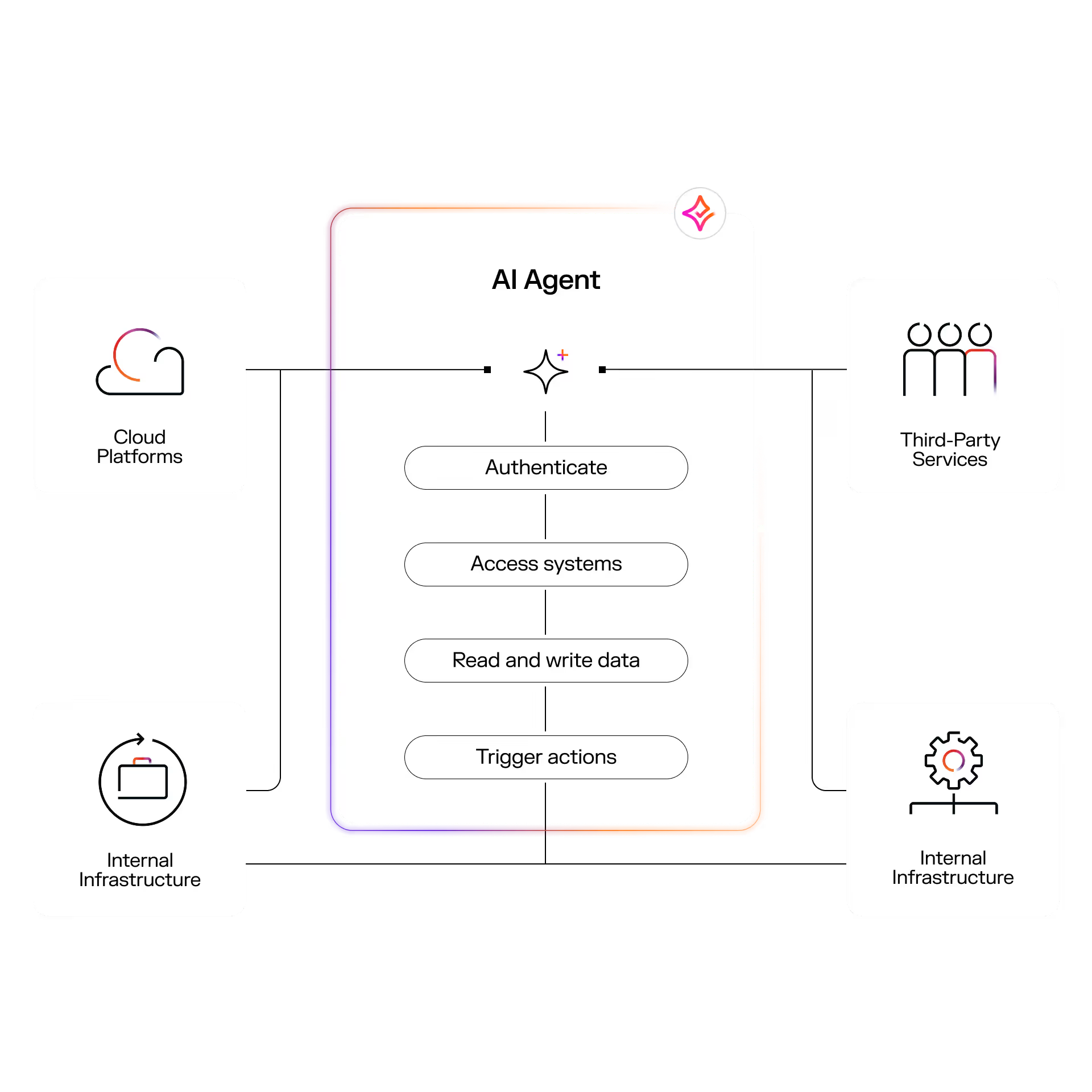

Secure business AI Agent identities in real time

Discover live agent identities acting across suppliers and environments, map their connections and interactions via MCP and services like Amazon S3, and audit behavior across SaaS, cloud, network, endpoint, OT, and email.

Embrace AI with confidence

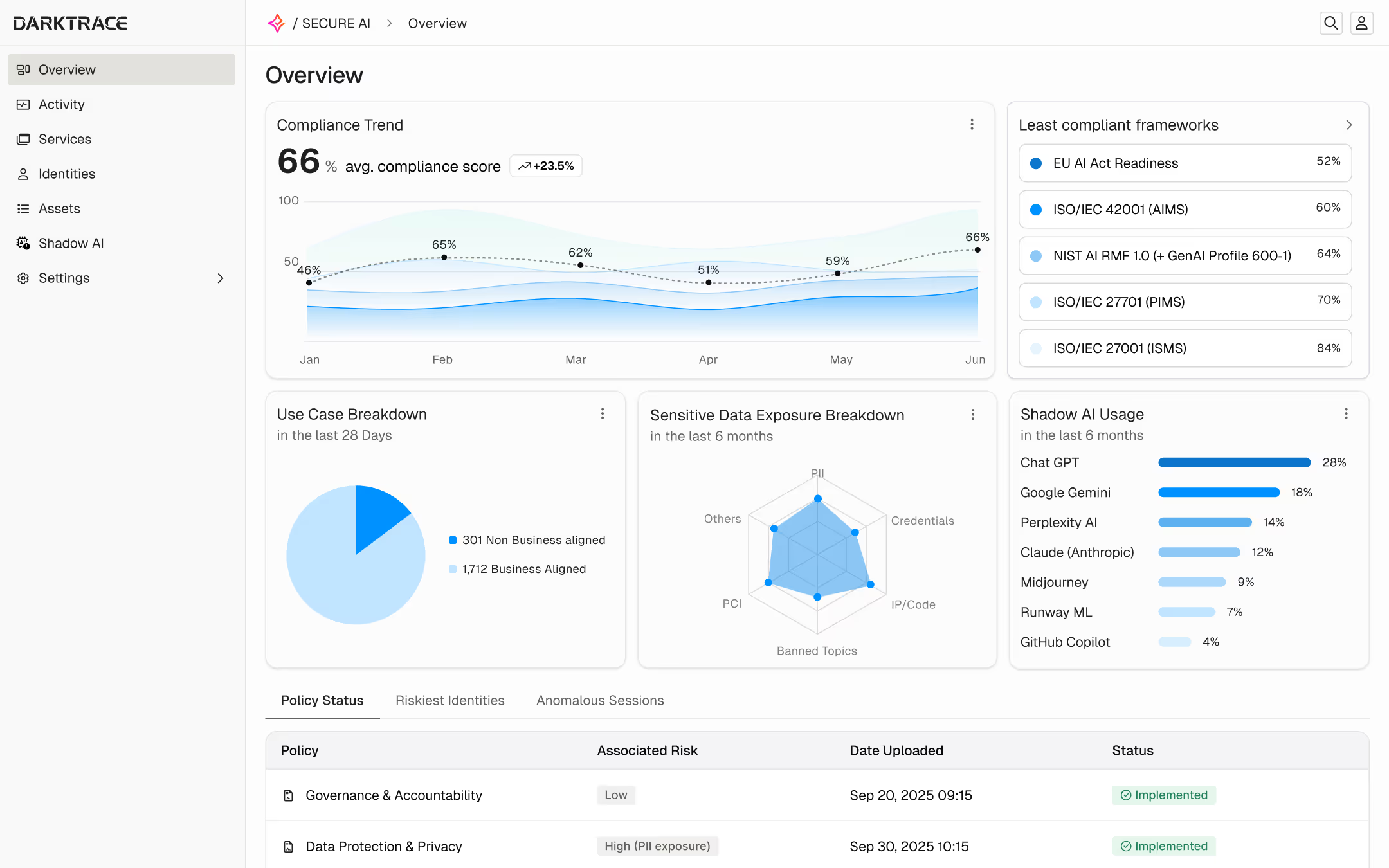

Darktrace / SECURE AI brings every AI interaction in your business into a single view, helping teams understand intent, assess risk, protect sensitive data, and enforce policy across both human and AI Agent activity.

Discover and control Shadow AI

Surface unsanctioned or unexpected AI activity wherever it appears, distinguish between misuse of legitimate tools and use of unapproved services, and apply policy to contain data exposure while guiding users toward sanctioned options.

Get a deep dive

Wherever you are in your journey to secure AI, we have something for you

Securing AI Starts Here

See our survey findings on how security leaders are thinking about securing AI and discover our framework

Watch our Launch Broadcast

Catch up on our launch of new Secure AI innovations and discover how you can deploy AI agents with confidence

Stay on Top of Securing AI

Get exclusive access to product updates, thought leadership, and opportunities to connect with peers

Get in Touch

Speak with a Darktrace expert to understand your AI risks and get practical, tailored guidance