The challenge of reliably attributing cyber-threats has grown in recent years, as adversaries adopt a range of techniques to ensure that even if their attacks are caught, they themselves escape detection and avoid punishment.

Detecting a threat is, of course, a very different technical challenge from tracing that activity back to a human operator. Nevertheless, at some point after the dust has settled, during post-incident analysis for example, someone may need to know who the suspects are. Despite our other advances, and some recent successes in attributing offensive and cyber-criminal acts, only three out of every 100,000 cyber-crimes are prosecuted. Put simply, attribution remains an unsolved set of problems. Many of the successes we do have owe more to operational security failures on the criminals’ part than to any active investigative approach. In fact, some recent trends have made reliable attribution even more challenging.

The four cyber-threat trends that make attribution difficult

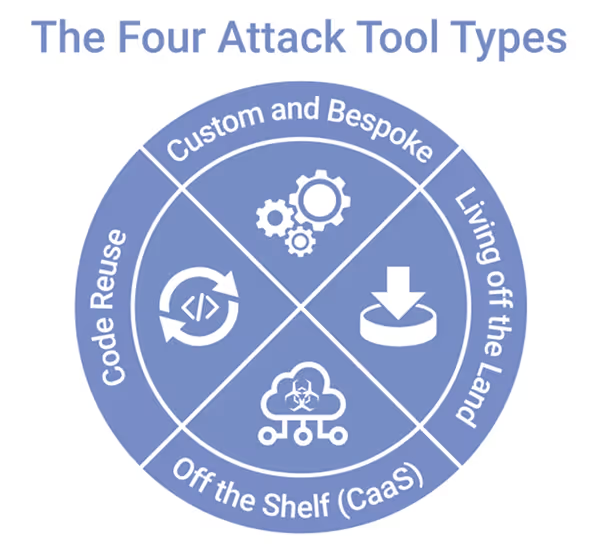

Four related trends in how threat-actors obtain attack capabilities have made it more complex to reliably identify Tactics, Techniques, and Procedures (TTPs) and attribute them to distinct threat-actors.

A Cybercrime-as-a-Service economy and supply chain that allows cyber-criminals to mix and match off-the-shelf offensive cyber capabilities.

Expansion of ‘Living off the Land’ (LoL) tool usage by threat-actors to evade traditional, signature-based security defenses, and to obfuscate their activity.

Code reuse has always existed in the hacker community, but copying nation-state-grade attack code has only recently become possible.

The barrier to entry for criminally motivated operators has been lowered, giving less technical criminals the means to attack, limited only by their time and imagination.

Figure 1: The four cyber-threat trends


Between a professional marketplace of cyber-crime tools and services, the increasing adoption of ‘Living off the Land’ techniques, and the reuse of code leaked from nation-state intelligence services, threat-actors with even limited technical ability can conduct highly sophisticated criminal campaigns. Prospective cyber-criminals now have four primary types of attack tools to choose from, three of which are brand new or greatly enhanced. Even more importantly, these threat-actors can mix and match attack tools, creating tactically flexible attack stacks that can be tailored for a variety of campaigns against a diverse set of victims.

Off-the-shelf attacks

The burgeoning and increasingly professional Cybercrime-as-a-Service market (estimated at $1.6B) provides a thriving marketplace of microservices, attack code, and attack platforms. Anyone with a motive and enough bitcoin and enthusiasm can become the next ‘cyber Don Corleone’. Many of these services offer dedicated account management and professional support 24 hours a day. The commercialization of the cyber-crime supply chain has raised the barrier to entry for Cybercrime-as-a-Service vendors, while at the same time lowering it for cyber-criminal operators.

Living off the Land

‘Living off the Land’ (LoL) and “malware-less” attacks have been on the rise for some time now. What makes these methods so dangerous is that they use standard operating system tools, such as PowerShell or WMI on Windows, to conduct their nefarious business. Because those binaries are legitimate, signature-based approaches that look for known malware, including signature-based Intrusion Prevention Systems, are largely ineffective against them.

These attacks in particular demonstrate the need for an approach to cyber security that goes beyond looking at what malware is being used. Rather than relying on static blacklists, security teams are instead turning to a more sophisticated approach that learns ‘normal’ for every user and device across an entire business. From that evolving baseline, this approach to defense can identify and contain anomalous activity indicative of a cyber-threat – all in real time.
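To make the idea of learning ‘normal’ per user and device more concrete, here is a minimal Python sketch of an evolving baseline. It is an illustration under stated assumptions, not the method used by any particular product: it assumes a single hypothetical numeric metric per entity (for example, outbound megabytes per hour), tracks its exponentially weighted mean and variance, and flags observations that sit more than a chosen number of standard deviations away. The class names, the smoothing factor `alpha`, the `warmup` period and the threshold of 4 are all illustrative choices.

```python
import math
from dataclasses import dataclass


@dataclass
class EntityBaseline:
    """Evolving 'normal' for one user or device (hypothetical sketch).

    Tracks an exponentially weighted mean and variance of one numeric metric,
    e.g. outbound megabytes per hour (an assumed example metric).
    """
    alpha: float = 0.1   # weight of each new observation; higher adapts faster
    mean: float = 0.0
    var: float = 0.0
    count: int = 0

    def score(self, value: float) -> float:
        """Distance of `value` from the baseline, in standard deviations."""
        std = max(math.sqrt(self.var), 1e-9)  # floor avoids division by zero
        return abs(value - self.mean) / std

    def update(self, value: float) -> None:
        """Fold a new observation into the EWMA mean and variance."""
        if self.count == 0:
            self.mean = value
        else:
            diff = value - self.mean
            incr = self.alpha * diff
            self.mean += incr
            self.var = (1 - self.alpha) * (self.var + diff * incr)
        self.count += 1


class AnomalyDetector:
    """Keeps one evolving baseline per entity and flags large deviations."""

    def __init__(self, threshold: float = 4.0, warmup: int = 10) -> None:
        self.threshold = threshold  # assumed cut-off in standard deviations
        self.warmup = warmup        # observations needed before flagging
        self.baselines: dict[str, EntityBaseline] = {}

    def observe(self, entity: str, value: float) -> bool:
        """Return True if `value` is anomalous for `entity`."""
        baseline = self.baselines.setdefault(entity, EntityBaseline())
        anomalous = (
            baseline.count >= self.warmup
            and baseline.score(value) > self.threshold
        )
        if not anomalous:
            # Only normal-looking activity is allowed to shape the baseline.
            baseline.update(value)
        return anomalous


if __name__ == "__main__":
    detector = AnomalyDetector()
    history = [10, 12, 11, 9, 10, 11, 12, 10, 9, 11, 10, 11]
    flags = [detector.observe("laptop-42", mb) for mb in history]
    print(any(flags))                          # False: all within normal range
    print(detector.observe("laptop-42", 500))  # True: far outside the baseline
```

Anomalous values are deliberately not folded into the baseline, so a single burst of attacker activity cannot immediately redefine ‘normal’. Note that nothing here depends on recognizing a malware signature: a legitimate OS tool moving unusual volumes of data would be flagged just the same. A real system would model many features per entity and tune how quickly the baseline is allowed to drift.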

Code reuse and repurpose


Hackers have always begged, borrowed, and stolen code from others, including attack code; two notable examples are the Zeus trojan and RIG exploit kit source-code leaks, which provided the code base for much of the current generation of threats. What is new and unprecedented is that, whether through malice or incompetence, cyber-criminals are gaining access to intelligence and nation-state grade attack code. The Shadow Brokers leaks, which exposed the EternalBlue exploit later used by WannaCry, are one recent example of this trend, and one we expect to accelerate, especially with intelligence services actively outing each other’s methods.

Custom and bespoke techniques

The practice of hackers creating their own tools and researching their own exploits has a long and hallowed tradition, with headline-grabbing zero-days becoming more and more common. Nation-state actors in particular often separate attack operators from attack-code developers, so that operators can request tailored, bespoke code and tools, not unlike the model that has been replicated in the Cybercrime-as-a-Service market. Even when developing custom tools, threat-actors frequently integrate code and exploits from other parties.

Figure 2: The four main attack tool types

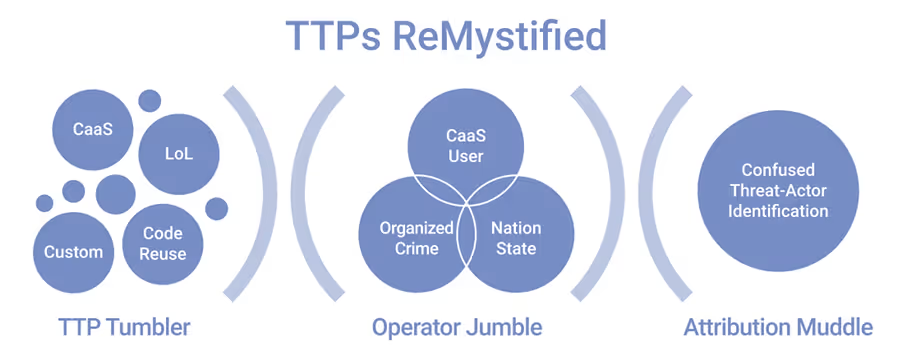

When it comes to determining who is actually behind these attacks, though, what matters most is that attackers can combine all four types of attack tools. This adds a further layer of obfuscation against detection methods that rely on pattern matching, while causing additional confusion for would-be investigators. An attacker can use any combination and variation of these tool types to create a different “Chimera” attack stack, making it that much more difficult to identify who the operator really is. Telling the operator apart from the Cybercrime-as-a-Service vendor, for example, is difficult when most of the TTPs being evaluated are technical and derive from the tooling.

Figure 3: The TTP and Attribution Confusion Chain

Conclusion


The combination of the four trends outlined above has lowered the barrier to entry for criminally motivated operators. Less technical adversaries can now launch attacks at a speed and scale previously confined to the most organized and well-financed cyber-criminal rings. This shift has made attribution of offensive cyber activity drastically more complex, and it may be some time before the prosecution rate for cyber-crime rises enough to act as a meaningful deterrent.

As the challenge of attribution intensifies, our focus must turn to defending against cyber-attacks themselves. You may never know who is attacking you, but if you can thwart the full range of threats, new and old, your organization can continue to operate as normal.

Fortunately, defenders’ ability to detect and respond to cyber-threats has advanced significantly in recent years, thanks to developments in AI and machine learning. Over 3,500 organizations now rely on Cyber AI to detect and contain cyber-threats, whether attackers use pre-existing OS tools to disguise their attacks or bespoke, entirely new techniques to bypass rules and signatures. When a threat is identified, AI can respond autonomously by enforcing a user or device’s ‘pattern of life’, allowing ‘business as usual’ while ensuring the organization is protected from harm.
