Since the early days of the internet, information sharing amongst users in cyberspace has grown at a near-exponential rate. Not long after, the emergence of social media ushered in access to public online platforms where internet users worldwide could share, discuss, promote, and consume information, whether by deliberate choice or not.

These platforms, which now have vast user bases, enabled the effective sharing of a wide range of information and have facilitated the emergence of online communities, forums, webpages, and blogs, where everyone could create content and share it with other users, leading to a near-infinite number of sources.

Public and private organisations have been able to leverage these platforms to communicate directly with the public, share relevant knowledge with their audiences, and expand users’ exposure to their online presence, often by providing users with a direct link to websites and domains containing supplementary information on the organisation. However, both organisations and users face some issues when using such platforms.

Misinformation vs Disinformation

The ever-growing catalogue of informational sources and contributing users has introduced an old challenge with a more complex twist: distinguishing which information is true and which is not. Two terms are used to describe inaccurate information: misinformation and disinformation.

Misinformation is “false information that is spread, regardless of whether there is intent to mislead”. For example, someone can read a compelling story on social media and share it with others without checking whether the story is, in fact, true.

During the COVID-19 pandemic, many people were rightfully concerned and anxious about their health, so they wanted to inform themselves as much as possible about the looming health risk. However, when they went looking for answers, they were so overloaded with varying opinions and ‘fake facts’ that it became increasingly difficult to distinguish fact from fiction.

As a result, a friend, relative, or acquaintance would at times share a social media post (or two) containing false information. They had good intentions in sharing what they had learned, but unfortunately they were misinformed.

Disinformation instead means “deliberately misleading or biased information; manipulated narrative or facts; propaganda”, which can be interpreted as the intentional spreading of misinformation.

The main difference between misinformation and disinformation is the presence of clear intent in the latter. For example, during political conflicts, or even wars, it is not uncommon for one or both opposing parties to broadcast news narratives to their own domestic audiences in a way that portrays them as either the righteous liberator or the unsuspecting victim.

Disinformation and Geopolitics

During turbulent times, such as (geo)political conflicts, national strife, digital revolutions, and pandemics, massive disinformation campaigns are often arranged by nation-state actors, independent threat actors, and other ideologically driven actors. Such campaigns target businesses, governments, and individuals alike.

One of the most common channels used to spread disinformation is social media. In essence, any piece of information shared on social media can spread rapidly to all kinds of audiences across the globe. This is amplified by maliciously motivated actors’ use of “bots” to accelerate the speed at which disinformation spreads.

A bot is a “computer program that operates as an agent for a user or other program to simulate a human activity”. It is used to perform specific tasks repeatedly and autonomously. Many such bots are actively used to spread disinformation across the most popular social platforms, including Facebook, Twitter, and Instagram.

Impact of Disinformation on Organisations

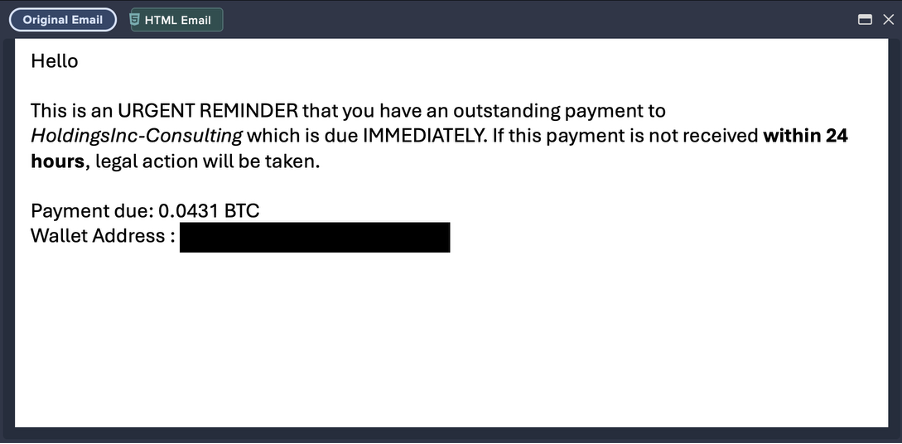

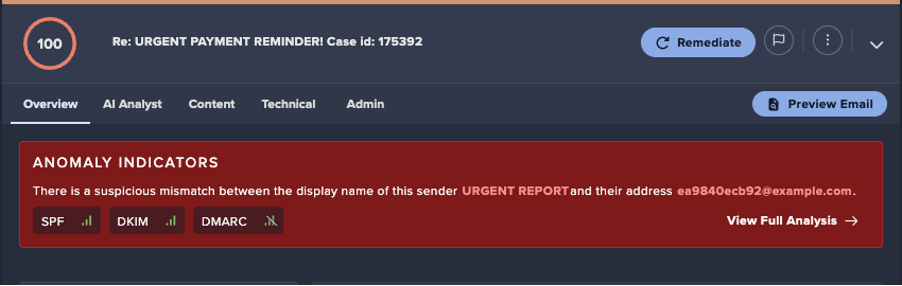

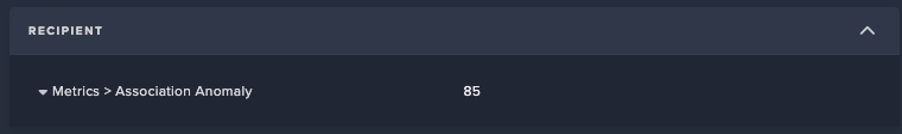

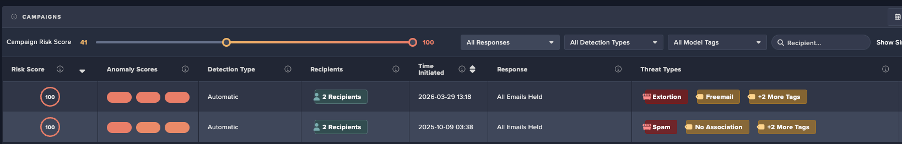

When organisations are targeted by disinformation campaigns, malicious actors aim to leverage the discord and uncertainty around topics that are shrouded in controversy. Malicious actors such as online scammers aim to exploit this discord, for example by creating phishing emails that are more compelling to recipients who are just trying to work out what is real and what is not.

For example, a campaign claiming that data held by a large telecommunications company was breached can be used to craft emails in which scammers prompt recipients to check whether their personal data was also affected by this ‘breach’.

Regardless of whether the claim is correct, the constant flow of news circulating on the internet makes it increasingly difficult for a person to decide whether the information is accurate.

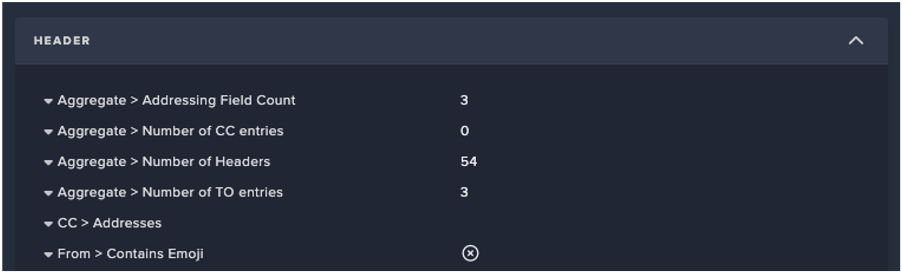

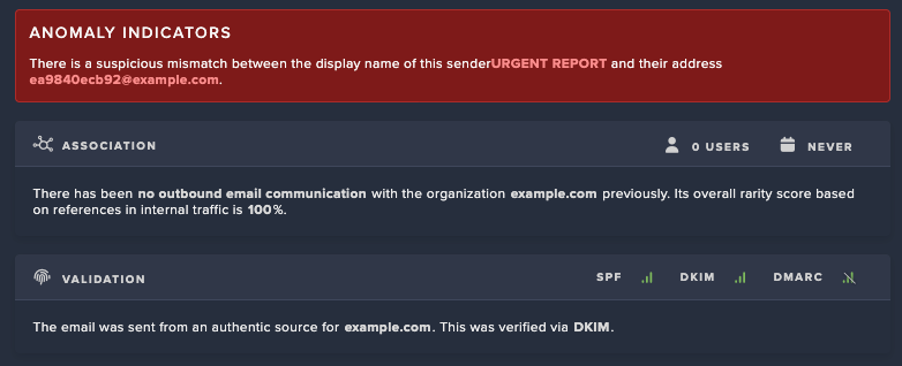

In parallel, the recipient may be experiencing anxiety and uncertainty about the breach, and about the news surrounding it, which often pushes recipients to react immediately to new information on the topic. Scammers use domains that are carefully crafted to seem legitimate to the untrained eye, for example domains bearing a near-uncanny resemblance to the organisation’s official domain, which further increases the recipient’s susceptibility to trusting dubious sources. This in turn increases the likelihood that recipients of phishing emails will feel compelled to, for example, click on a link in an email to verify whether their data was also leaked.

The Future of Disinformation

Organisations that are already dealing with the social strains created by disinformation campaigns are now facing an additional risk: their audiences may be more susceptible to phishing campaigns in times of widespread uncertainty. To make a phishing campaign convincing, malign actors often use compromised domains, or attempt to mimic legitimate domains through a method called ‘typo squatting’.

Typo squatting is the act of registering domains with intentionally misspelled names of popular or official web presences, and often filling these with untrustworthy content, to give victims a false sense of legitimacy about the source.

Once the victim has been led to trust the attacker’s source, it is almost entirely up to the victim to avoid being misled. Consequently, the attack surface of an organisation grows as fast as disinformation and false domains can be created and shared with its audience.
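To make the idea of typo squatting more concrete, here is a minimal Python sketch that generates a few common one-edit misspellings of a domain name. The function name `typo_variants` and the `LOOKALIKES` table are illustrative assumptions, and the four edit types shown are only a small sample of what an attacker might try.

```python
# Hypothetical table of visually similar character swaps (an assumption for illustration).
LOOKALIKES = {"l": "1", "i": "1", "o": "0", "e": "3", "s": "5"}


def typo_variants(domain: str) -> set[str]:
    """Return simple one-edit misspellings of the first label of a domain."""
    name, dot, rest = domain.lower().partition(".")
    candidates = set()
    for i, char in enumerate(name):
        # Omission: drop one character ("exmple").
        candidates.add(name[:i] + name[i + 1:])
        # Duplication: repeat one character ("exxample").
        candidates.add(name[:i] + char + name[i:])
        # Lookalike substitution: swap in a similar-looking character ("examp1e").
        if char in LOOKALIKES:
            candidates.add(name[:i] + LOOKALIKES[char] + name[i + 1:])
        # Transposition: swap two adjacent characters ("exapmle").
        if i + 1 < len(name):
            candidates.add(name[:i] + name[i + 1] + char + name[i + 2:])
    # Discard the empty string and the correctly spelled name.
    candidates.discard("")
    candidates.discard(name)
    return {candidate + dot + rest for candidate in candidates}


variants = typo_variants("example.com")
print(len(variants), "candidate misspellings, for instance:", sorted(variants)[:5])
```

Even this small set of rules yields dozens of plausible-looking candidates for a single short domain, which gives a sense of how quickly the space of impersonating domains can grow.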

Combatting Disinformation with Attack Surface Management

Organisations trying to protect their audiences from being misled by false domains will need to get better visibility on domains associated with their brand. A brand-centric approach to discovering domains can shine light on:

- The state of existing domains that are currently managed by your organisation, and whether they are being well maintained and properly secured.

- The influx of ‘new’ domains that are attempting to impersonate your organisation’s brand.

Visibility on these types of domains, and on how your audience interacts with them, enables your organisation to be more vigilant and responsive to the malign actors attempting to manipulate, hijack, or impersonate your brand. Since an organisation’s brand pervades all sorts of publicly accessible assets, such as domains, it has become important to include them in your organisation’s attack surface management regimen. A brand-centric approach to attack surface management will give your organisation a clearer view of its attack surface from a reputation risk perspective.

An attack surface management solution bolstered by such an approach will help your organisation’s security team efficiently determine which domains, or other external-facing digital assets, pose a risk to your audience and reputation. It will help remove the repetitive work needed to identify these domains (and other assets), detect the risks associated with them, and manage any changes or actions required to protect both your audience and your organisation.
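As a rough illustration of one small part of that repetitive work, the Python sketch below flags observed domains that sit within a small edit distance of a brand’s official domain. It is a simplified sketch and not how any particular attack surface management product works: the function names, the threshold of 2, and the sample list of observed domains are all assumptions made for the example.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: minimum insertions, deletions, or substitutions."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution or match
        previous = current
    return previous[-1]


def flag_lookalikes(brand_domain: str, observed: list[str],
                    max_distance: int = 2) -> list[tuple[str, int]]:
    """Return (domain, distance) pairs that closely resemble the brand domain."""
    brand = brand_domain.lower()
    flagged = []
    for domain in observed:
        candidate = domain.lower()
        if candidate == brand:
            continue  # the official domain itself is not a lookalike
        distance = edit_distance(brand, candidate)
        if distance <= max_distance:
            flagged.append((domain, distance))
    # Closest matches first, since they are the most likely to fool a reader.
    return sorted(flagged, key=lambda item: item[1])


# Hypothetical sample of newly observed domains.
observed_domains = ["example.com", "examp1e.com", "exmaple.com", "unrelated.org"]
for domain, distance in flag_lookalikes("example.com", observed_domains):
    print(f"{domain} (edit distance {distance})")
```

A plain edit-distance check like this misses other impersonation tactics, such as added words or different top-level domains, so in practice it would be one signal among several rather than a complete detector.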
