What is FlowerStorm?

FlowerStorm is a Phishing-as-a-Service (PhaaS) platform believed to have gained traction following the decline of the former PhaaS platform Rockstar2FA. It employs Adversary-in-the-Middle (AitM) attacks to target Microsoft 365 credentials. After Rockstar2FA appeared to go dormant, similar PhaaS portals began to emerge under the name FlowerStorm. This naming is likely linked to the plant-themed terminology found in the HTML titles of its phishing pages, such as 'Sprout' and 'Blossom'. Given the abrupt disappearance of Rockstar2FA and the near-immediate rise of FlowerStorm, it is possible that the operators rebranded to reduce exposure [1].

External researchers identified several similarities between Rockstar2FA and FlowerStorm, suggesting operational overlap. Both use fake login pages, typically spoofing Microsoft, to steal credentials and multi-factor authentication (MFA) tokens, with backend infrastructure hosted on .ru and .com domains. Their phishing kits use very similar HTML structures, including randomized comments, Cloudflare Turnstile elements, and fake security prompts. Although Rockstar2FA was typically known for automotive themes in its HTML titles while FlowerStorm shifted to a botanical theme, the overall design remained consistent [1].
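For defenders triaging suspected kit pages, the shared HTML traits above can be turned into rough heuristics. The sketch below is a hypothetical illustration, not a published detection: the keyword set contains only the two titles cited in the reporting ("Sprout", "Blossom"), the "random comment" pattern and both thresholds are assumptions, and it relies on Cloudflare Turnstile widgets normally being rendered into an element with the `cf-turnstile` class.

```python
import re

# Only "Sprout" and "Blossom" come from the reporting; extend this set as needed.
BOTANICAL_TERMS = {"sprout", "blossom"}

TITLE_RE = re.compile(r"<title[^>]*>(.*?)</title>", re.IGNORECASE | re.DOTALL)
# Cloudflare Turnstile widgets are normally rendered into an element with class "cf-turnstile".
TURNSTILE_RE = re.compile(r"class\s*=\s*[\"'][^\"']*\bcf-turnstile\b", re.IGNORECASE)
COMMENT_RE = re.compile(r"<!--(.*?)-->", re.DOTALL)
# Hypothetical pattern for randomized filler comments: one long alphanumeric token.
RANDOM_TOKEN_RE = re.compile(r"\s*[A-Za-z0-9]{8,}\s*")


def kit_traits(html: str) -> dict[str, bool]:
    """Report which FlowerStorm/Rockstar2FA-style page traits appear in an HTML document."""
    title_match = TITLE_RE.search(html)
    title = title_match.group(1).strip().lower() if title_match else ""
    random_comments = [c for c in COMMENT_RE.findall(html) if RANDOM_TOKEN_RE.fullmatch(c)]
    return {
        "botanical_title": any(term in title for term in BOTANICAL_TERMS),
        "turnstile_widget": TURNSTILE_RE.search(html) is not None,
        # Hypothetical threshold: three or more random-looking comments.
        "random_comments": len(random_comments) >= 3,
    }


def looks_like_kit(html: str, min_traits: int = 2) -> bool:
    """Flag a page when at least `min_traits` heuristics fire (hypothetical threshold)."""
    return sum(kit_traits(html).values()) >= min_traits
```

Requiring two or more traits is a deliberate trade-off: Turnstile and HTML comments are common on legitimate sites, so any single trait is a weak signal, and a match is best treated as a lead for analyst review rather than a verdict.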

Despite these stylistic differences, both platforms use similar credential capture methods and support MFA bypass. Their domain registration patterns and synchronized activity spikes through late 2024 suggest shared tooling or coordination [1].

Like Rockstar2FA, FlowerStorm uses its phishing portals to mimic legitimate login pages, such as Microsoft 365, in order to steal credentials and MFA tokens, with the portals relying heavily on backend servers hosted on top-level domains (TLDs) such as .ru, .moscow, and .com. Starting in June 2024, some of the phishing pages began using Cloudflare services, with domains such as pages[.]dev. Additionally, a file named “next.php” is used to communicate with the backend servers for exfiltration and data communication. FlowerStorm’s platform focuses on credential harvesting using fields such as email, pass, and session tracking tokens, and it supports email validation and MFA authentication via its backend systems [1].
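The reported exfiltration pattern (a form POST to “next.php” carrying email and pass fields, often toward .ru, .moscow, or pages[.]dev hosts) lends itself to a simple proxy-log check. This is a minimal sketch assuming hypothetical `url` and `body` log fields and a URL-encoded request body; the trait names it returns are illustrative labels, not part of any product.

```python
from urllib.parse import parse_qs, urlsplit

# TLDs and hosting reported for FlowerStorm infrastructure. .com is omitted
# because it is far too common to be a useful signal on its own.
SUSPICIOUS_SUFFIXES = (".ru", ".moscow", ".pages.dev")
# Field names reported for credential harvesting.
CREDENTIAL_FIELDS = {"email", "pass"}


def submission_traits(url: str, body: str) -> set[str]:
    """Return the FlowerStorm-like traits present in one HTTP POST.

    `url` and `body` are hypothetical proxy-log fields; `body` is assumed to be
    application/x-www-form-urlencoded.
    """
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    fields = set(parse_qs(body, keep_blank_values=True))
    traits = set()
    # Compare the final path segment exactly, so "/api/next.php" matches but "/connext.php" does not.
    if parts.path.lower().rsplit("/", 1)[-1] == "next.php":
        traits.add("next_php_endpoint")
    if CREDENTIAL_FIELDS <= fields:
        traits.add("credential_fields")
    if host.endswith(SUSPICIOUS_SUFFIXES):
        traits.add("suspicious_host")
    return traits
```

Returning a set of traits rather than a single boolean lets an analyst weigh combinations; for example, the next.php endpoint plus credential fields on a .com host would still be worth a look.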

Darktrace’s coverage of FlowerStorm Microsoft phishing

While multiple suspected instances of the FlowerStorm PhaaS platform were identified during Darktrace’s investigation, this blog will focus on a specific case from March 2025. Darktrace’s Threat Research team analyzed the affected customer environment and discovered that threat actors were accessing a Software-as-a-Service (SaaS) account from several rare external IP addresses and ASNs.
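To illustrate what "rare" can mean here, the following is a deliberately simple frequency-count stand-in, not Darktrace’s behavioral modeling. It assumes sign-in records normalized into hypothetical `(user, source_ip, asn)` tuples and flags a new login only when both the source IP and the ASN have rarely been seen for that user; the `min_seen` threshold is an assumption.

```python
from collections import Counter

# Each login is a hypothetical (user, source_ip, asn) tuple from SaaS sign-in logs.
Login = tuple[str, str, int]


def rare_logins(history: list[Login], new_logins: list[Login], min_seen: int = 2) -> list[Login]:
    """Return new logins whose source IP and ASN have both rarely been seen for that user."""
    seen_ips = Counter((user, ip) for user, ip, _asn in history)
    seen_asns = Counter((user, asn) for user, _ip, asn in history)
    return [
        (user, ip, asn)
        for user, ip, asn in new_logins
        # Requiring both to be rare avoids flagging a new IP inside a familiar ISP or ASN.
        if seen_ips[(user, ip)] < min_seen and seen_asns[(user, asn)] < min_seen
    ]
```

Counting per user rather than globally matters: an IP that is routine for one employee can be entirely novel for another.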

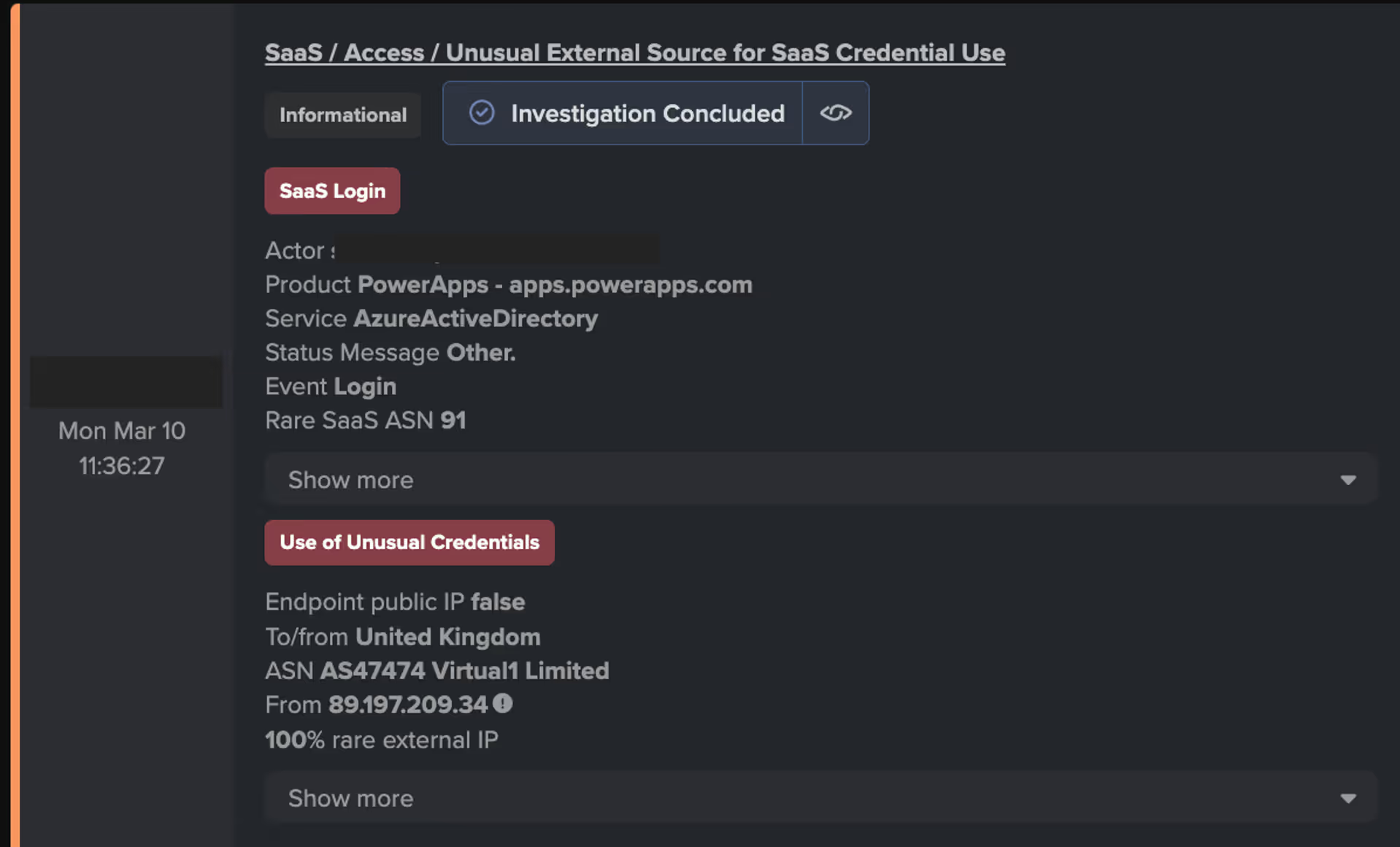

Around a week before the first indicators of FlowerStorm were observed, Darktrace detected anomalous logins via Microsoft Office 365 products, including Office365 Shell WCSS-Client and Microsoft PowerApps. Although not confirmed in this instance, Microsoft PowerApps could potentially be leveraged by attackers to create phishing applications or exploit vulnerabilities in data connections [2].

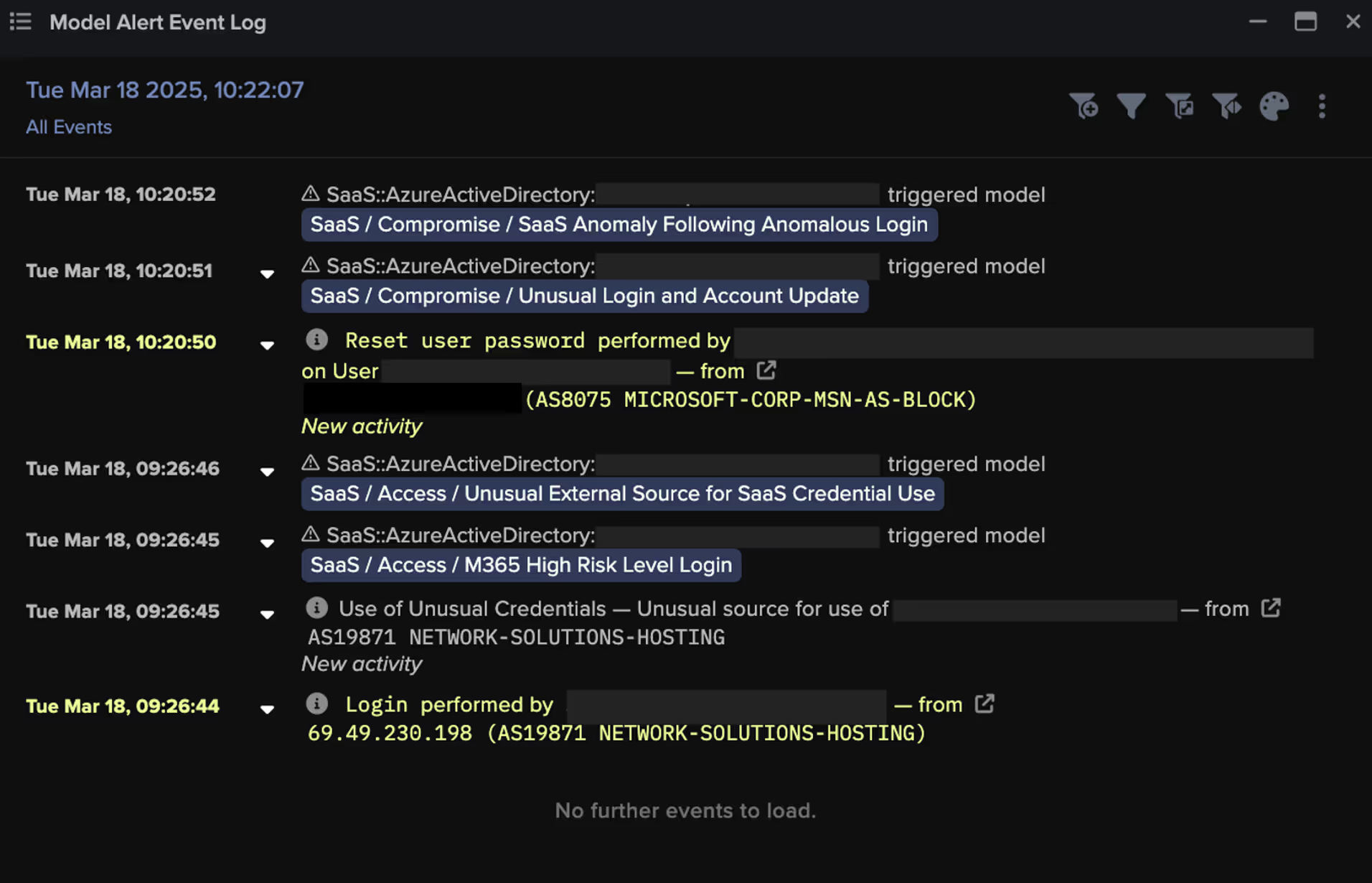

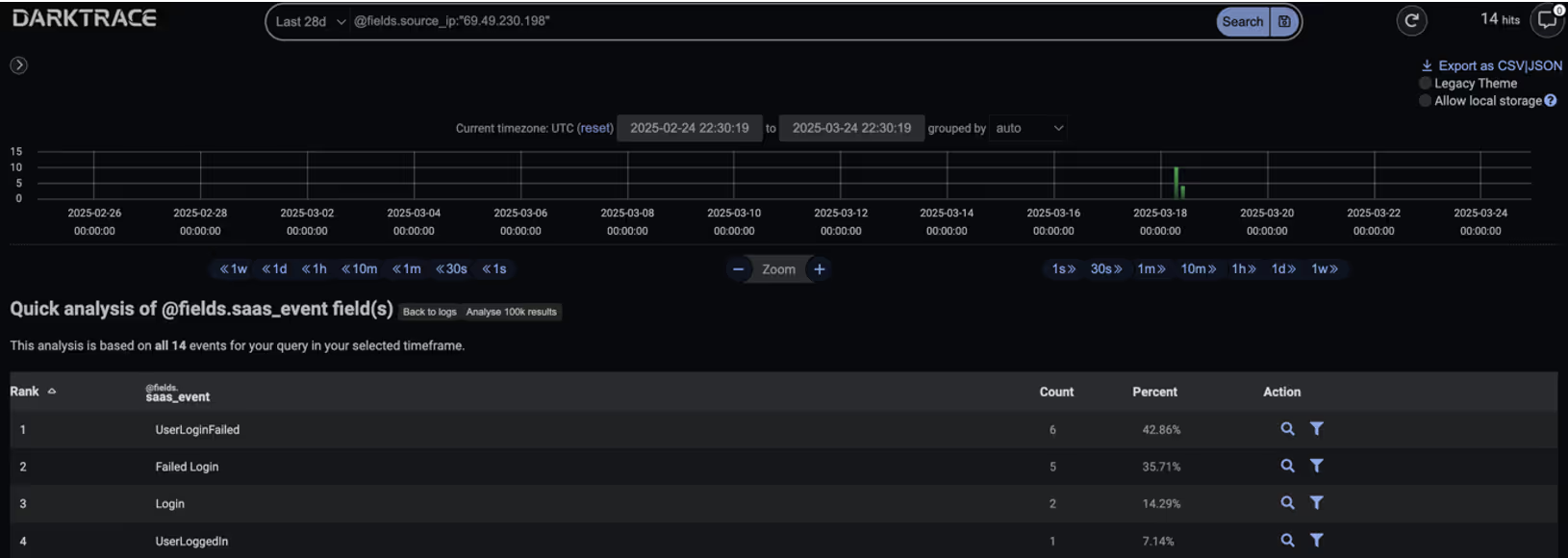

Following this initial login, Darktrace observed further login activity from the rare source IP 69.49.230[.]198. Multiple open-source intelligence (OSINT) sources have since associated this IP with the FlowerStorm PhaaS operation [3][4]. Darktrace then observed the SaaS user resetting a password in the Core Directory of Azure Active Directory using the user agent O365AdminPortal.
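The sequence described above, a login from a known-bad IP followed by a password reset on the same account, can be expressed as a small correlation rule over audit events. This sketch assumes a hypothetical normalized `SaaSEvent` record with `"login"` and `"reset_password"` action values and a 24-hour window; none of these names come from Microsoft’s log schema or from Darktrace.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable, Iterator


@dataclass(frozen=True)
class SaaSEvent:
    """Hypothetical normalized audit-log record."""
    time: datetime
    user: str
    action: str      # assumed values: "login" or "reset_password"
    source_ip: str
    user_agent: str = ""


IOC_IPS = {"69.49.230.198"}   # refanged form of the IoC in this post
WINDOW = timedelta(hours=24)  # hypothetical correlation window


def resets_after_ioc_login(events: Iterable[SaaSEvent]) -> Iterator[tuple[SaaSEvent, SaaSEvent]]:
    """Yield (login, reset) pairs where a password reset follows an IoC-IP login by the same user."""
    last_ioc_login: dict[str, SaaSEvent] = {}
    for event in sorted(events, key=lambda e: e.time):
        if event.action == "login" and event.source_ip in IOC_IPS:
            last_ioc_login[event.user] = event
        elif event.action == "reset_password":
            login = last_ioc_login.get(event.user)
            if login is not None and event.time - login.time <= WINDOW:
                yield login, event
```

The rule keys on the user rather than the reset’s source IP, since an attacker holding valid credentials may perform the reset from different infrastructure; the user agent (for example O365AdminPortal) is kept on the record as context for the analyst rather than used as a filter.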

Given FlowerStorm’s known use of AitM attacks targeting Microsoft 365 credentials, it seems highly likely that this activity represents an attacker who previously harvested credentials and is now attempting to escalate their privileges within the target network.

Notably, Darktrace’s Cyber AI Analyst also detected anomalies during a number of these login attempts, which is significant given FlowerStorm’s known capability to bypass MFA and steal session tokens.

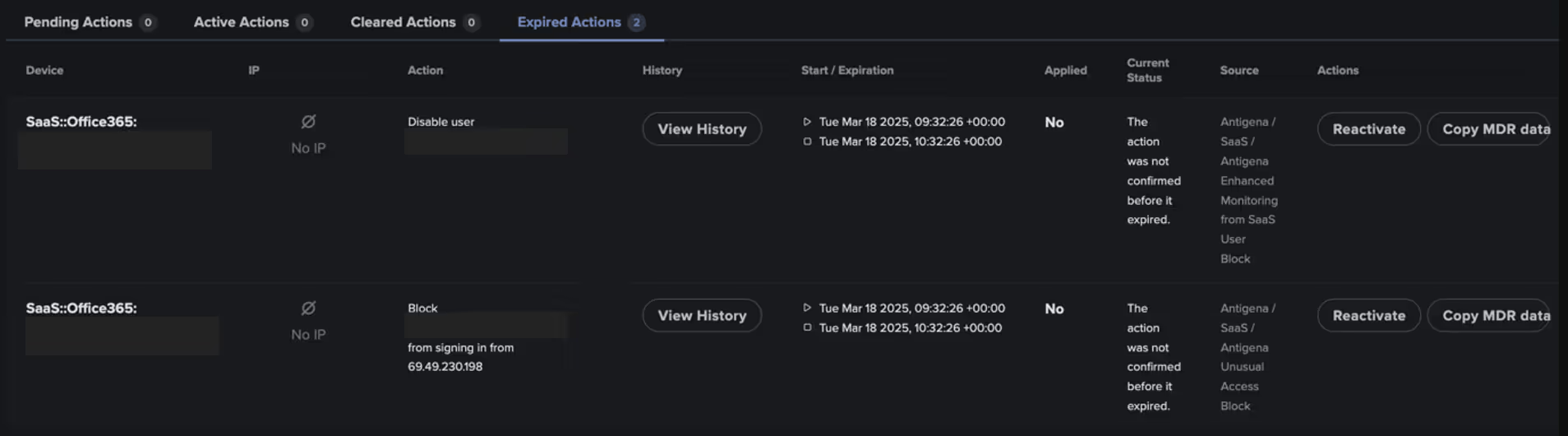

In response to the suspicious SaaS activity, Darktrace recommended several Autonomous Response actions to contain the threat. These included blocking the user from making further connections to the unusual IP address 69.49.230[.]198 and disabling the user account to prevent any additional malicious activity. In this instance, Darktrace’s Autonomous Response was configured in Human Confirmation mode, requiring manual approval from the customer’s security team before any mitigative actions could be applied. Had the system been configured for fully autonomous response, it would have immediately blocked the suspicious connections and disabled users deviating from their expected behavior, significantly reducing the window of opportunity for attackers.

Conclusion

Like its predecessor Rockstar2FA, FlowerStorm is a PhaaS platform known to leverage AitM attacks to steal user credentials and bypass MFA, reflecting threat actors’ adoption of increasingly sophisticated toolkits and techniques to carry out their attacks.

In this incident observed within a Darktrace customer's SaaS environment, Darktrace detected suspicious login activity involving abnormal VPN usage from a previously unseen IP address, which was subsequently linked to the FlowerStorm PhaaS platform. The follow-on activity, specifically a password reset, was deemed highly suspicious and likely indicative of an attacker having obtained SaaS credentials through a prior credential harvesting attack.

Darktrace’s prompt detection of these SaaS anomalies and timely notifications from its Security Operations Centre (SOC) enabled the customer to mitigate and remediate the threat before attackers could escalate privileges and advance the attack, effectively shutting it down in its early stages.

Credit to Justin Torres (Senior Cyber Analyst), Vivek Rajan (Cyber Analyst), Ryan Traill (Analyst Content Lead)

Appendices

Darktrace Model Alert Detections

· SaaS / Access / M365 High Risk Level Login

· SaaS / Access / Unusual External Source for SaaS Credential Use

· SaaS / Compromise / Login from Rare High-Risk Endpoint

· SaaS / Compromise / SaaS Anomaly Following Anomalous Login

· SaaS / Compromise / Unusual Login and Account Update

· SaaS / Unusual Activity / Unusual MFA Auth and SaaS Activity

Cyber AI Analyst Coverage

· Suspicious Access of Azure Active Directory

List of Indicators of Compromise (IoCs)

IoC - Type - Description + Confidence

69.49.230[.]198 – Source IP – Malicious IP Associated with FlowerStorm, Observed in Login Activity
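IoCs in this post are defanged (for example, 69.49.230[.]198) so they cannot be clicked or resolved by accident. Before matching them against logs they need to be refanged; the sketch below does that and compares parsed addresses rather than raw strings, so malformed entries fail loudly instead of silently never matching. The helper names are hypothetical, and only the two common defanging conventions shown are handled.

```python
import ipaddress


def refang(indicator: str) -> str:
    """Undo common defanging, e.g. 69.49.230[.]198 -> 69.49.230.198."""
    return indicator.replace("[.]", ".").replace("hxxp", "http")


def matches_ip_ioc(observed_ip: str, defanged_iocs: list[str]) -> bool:
    """Compare an observed IP against defanged IP IoCs as parsed addresses, not raw strings."""
    observed = ipaddress.ip_address(observed_ip)
    return any(observed == ipaddress.ip_address(refang(ioc)) for ioc in defanged_iocs)
```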

MITRE ATT&CK Mapping

Technique – Tactic – ID

Cloud Accounts – DEFENSE EVASION, PERSISTENCE, PRIVILEGE ESCALATION, INITIAL ACCESS – T1078.004 (sub-technique of T1078)

Cloud Service Dashboard – DISCOVERY – T1538

Compromise Accounts – RESOURCE DEVELOPMENT – T1586

Steal Web Session Cookie – CREDENTIAL ACCESS – T1539

References:

[2] https://learn.microsoft.com/en-us/security/operations/incident-response-playbook-compromised-malicious-app

[3] https://www.virustotal.com/gui/ip-address/69.49.230.198/community
