Introduction

For years there has been a general belief that macOS systems are immune to malware. While MacOS has a reputation for being secure, macOS malware has been trending up in recent years with the emergence of Silver Sparrow [1], KeRanger [2], and Atomic Stealer [3], among others. Recently, Cado Security has identified a malware-as-a-service (MaaS) targeting macOS users named “Cthulhu Stealer”. This blog will explore the functionality of this malware and provide insight into how its operators carry out their activities.

Technical analysis

File details:

Language: Go

Not signed

Stripped

Multiarch: x86_64 and arm

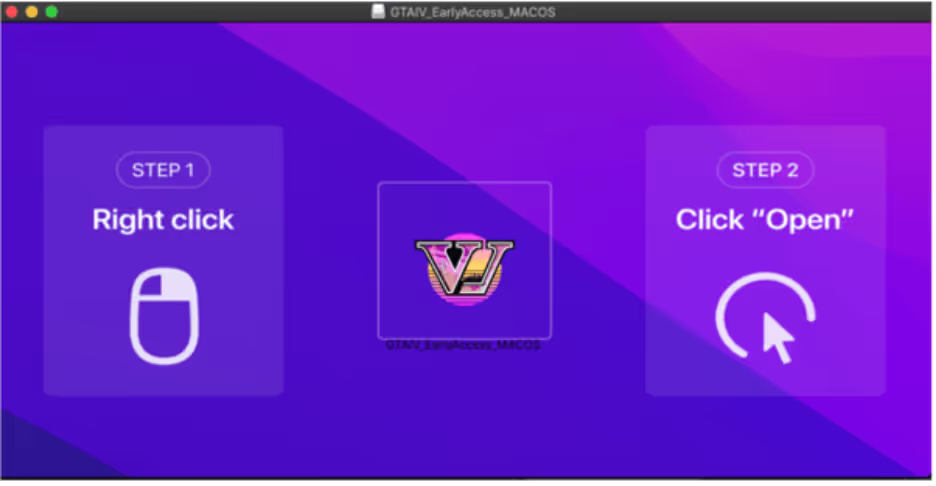

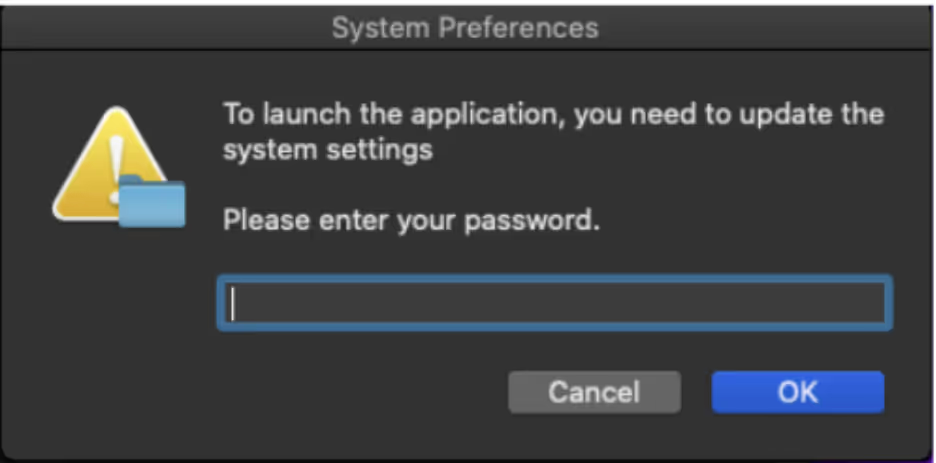

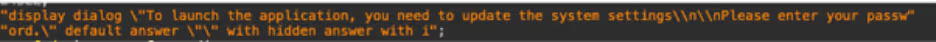

Cthulhu Stealer is an Apple disk image (DMG) that is bundled with two binaries, depending on the architecture. The malware is written in GoLang and disguises itself as legitimate software. Once the user mounts the dmg, the user is prompted to open the software. After opening the file, “osascript”, the macOS command-line tool for running AppleScript and JavaScript is used to prompt the user for their password.

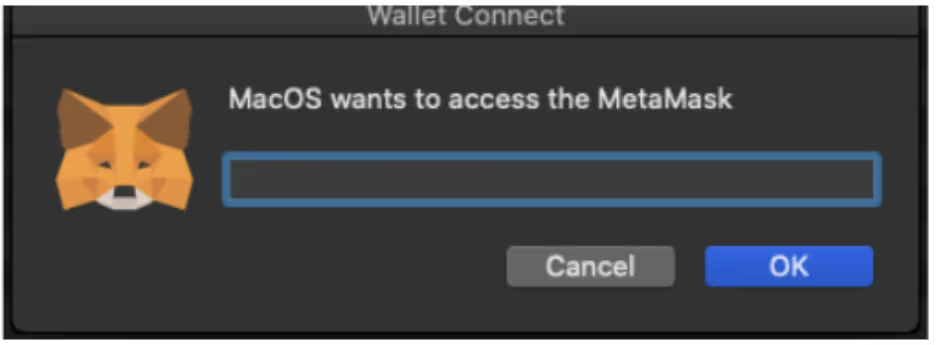

Once the user enters their password, a second prompt requests the user’s MetaMask [4] password. A directory is created in ‘/Users/Shared/NW’ with the credentials stored in textfiles. Chainbreak [5] is used to dump Keychain passwords and stores the details in “Keychain.txt”.

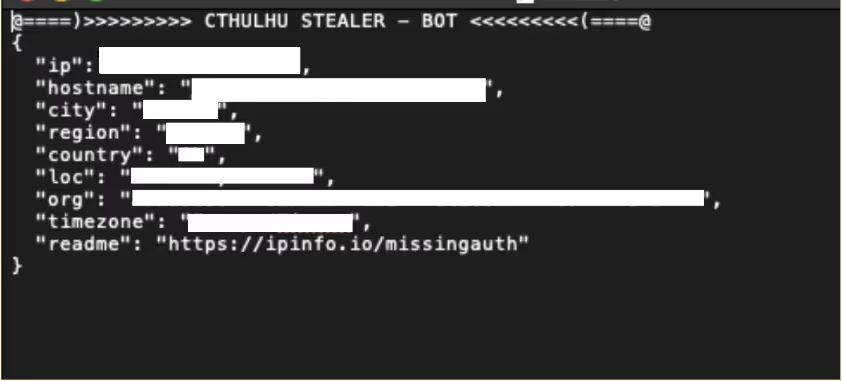

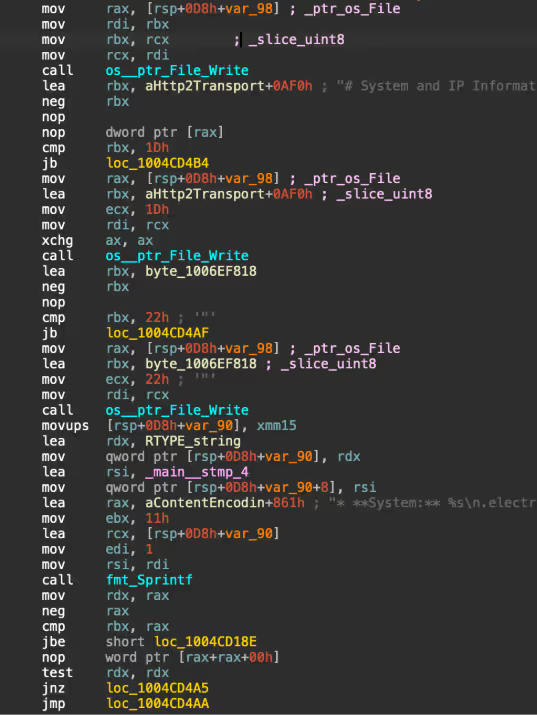

A zip file containing the stolen data is created in: “/Users/Shared/NW/[CountryCode]Cthulhu_Mac_OS_[date]_[time].zip.” Additionally, a notification is sent to the C2, to alert to new logs. The malware fingerprints the victim’s system, gathering information including IP, with IP details that are retrieved from ipinfo.io.

System information including system name, OS version, hardware and software information is also gathered and stored in a text file.

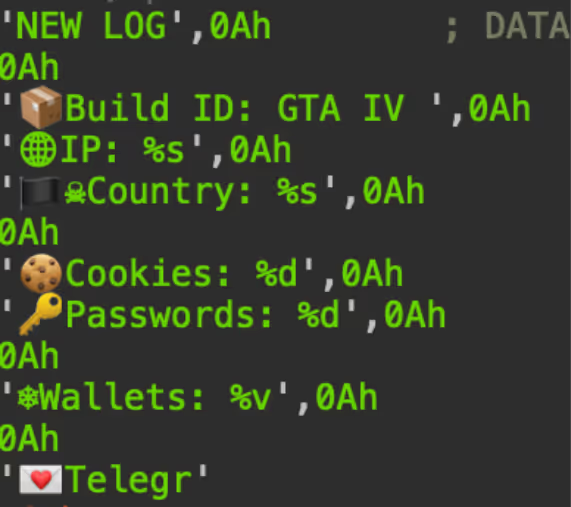

Cthulhu Stealer impersonates disk images of legitimate software that include:

- CleanMyMac

- Grand Theft Auto IV (appears to be a typo for VI)

- Adobe GenP

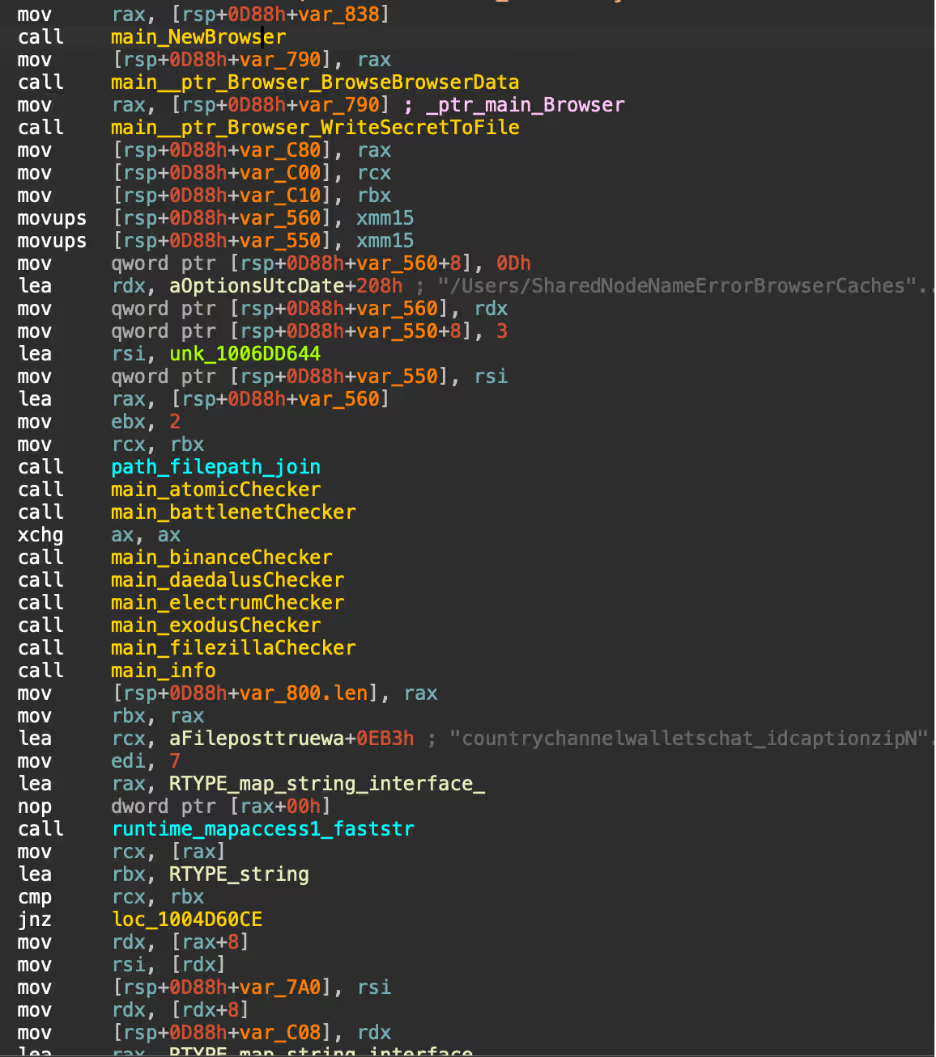

The main functionality of Cthulhu Stealer is to steal credentials and cryptocurrency wallets from various stores, including game accounts. Shown in Figure 10, there are multiple checker functions that check in the installation folders of targeted file stores, typically in “Library/Application Support/[file store]”. A directory is created in “/Users/Shared/NW” and the contents of the installation folder are dumped into text files for each store.

A list of stores Cthulhu Stealer steals from is shown in the list below:

- Browser Cookies

- Coinbase Wallet

- Chrome Extension Wallets

- Telegram Tdata account information

- Minecraft user information

- Wasabi Wallet

- MetaMask Wallet

- Keychain Passwords

- SafeStorage Passwords

- Battlenet game, cache and log data

- Firefox Cookies

- Daedalus Wallet

- Electrum Wallet

- Atomic Wallet

- Binanace Wallet

- Harmony Wallet

- Electrum Wallet

- Enjin Wallet

- Hoo Wallet

- Dapper Wallet

- Coinomi Wallet

- Trust Wallet

Comparison to atomic stealer

Atomic Stealer [6] is an information-stealer that targets macOS written in Go that was first identified in 2023. Atomic Stealer steals crypto wallets, browser credentials, and keychain. The stealer is sold on Telegram to affiliates for $1,000 per month. The functionality and features of Cthulhu Stealer are very similar to Atomic Stealer, indicating the developer of Cthulhu Stealer probably took Atomic Stealer and modified the code. The use of “osascript” to prompt the user for their password is similar in Atomic Stealer and Cthulhu, even including the same spelling mistakes.

Forum and operators

The developers and affiliates of Cthulhu Stealer operate as “Cthulhu Team” using Telegram for communications. The stealer appears to be being rented out to individuals for $500 USD/month, with the main developer paying out a percentage of earnings to affiliates based on their deployment. Each affiliate of the stealer is responsible for the deployment of the malware. Cado has found Cthulhu Stealer sold on two well-known malware marketplaces which are used for communication, arbitration and advertising of the stealer, along with Telegram. The user “Cthulhu” (also known as Balaclavv), first started advertising Cthulhu Stealer at the end of 2023 and appeared to be operating for the first few months of 2024, based on timestamps from the binaries.

Various affiliates of the stealer started lodging complaints against Cthulhu in 2024 with regards to payments not being received. Users complained that Cthulhu had stolen money that was owed to them and accused the threat actor of being a scammer or participating in an exit scam. As a result, the threat actor received a permanent ban from the marketplace.

Key takeaways

In conclusion, while macOS has long been considered a secure system, the existence of malware targeting Mac users remains an increasing security concern. Although Cthulhu Team no longer appears to be active, this serves as a reminder that Apple users are not immune to cyber threats. It’s crucial to remain vigilant and exercise caution, particularly when installing software from unofficial sources.

To protect yourself from potential threats, always download software from trusted sources, such as the Apple App Store or the official websites of reputable developers. Enable macOS’s built-in security features such as Gatekeeper, which helps prevent the installation of unverified apps. Keep your system and applications up to date with the latest security patches. Additionally, consider using reputable antivirus software to provide an extra layer of protection.

By staying informed and taking proactive steps, you can significantly reduce the risk of falling victim to Mac malware and ensure your system remains secure.

Indicators of compromise

Launch.dmg

6483094f7784c424891644a85d5535688c8969666e16a194d397dc66779b0b12

GTAIV_EarlyAccess_MACOS_Release.dmg

e3f1e91de8af95cd56ec95737669c3512f90cecbc6696579ae2be349e30327a7

AdobeGenP.dmg

f79b7cbc653696af0dbd867c0a5d47698bcfc05f63b665ad48018d2610b7e97b

Setup2024.dmg

de33b7fb6f3d77101f81822c58540c87bd7323896913130268b9ce24f8c61e24

CleanMyMac.dmg

96f80fef3323e5bc0ce067cd7a93b9739174e29f786b09357125550a033b0288

Network indicators

89[.]208.103.185

89[.]208.103.185:4000/autocheckbytes

89[.]208.103.185:4000/notification_archive

MITRE ATTACK

User Execution

T1204

Command and Scripting Interpreter: Apple Script

T1059.002

Credentials From Password Stores

T1555

Credentials From Password Stores: Keychain

T1555.001

Credentials From Password Stores: Credentials From Web Browser

T1555.003

Account Discovery

T1087

System Information Discovery

T1082

Data Staged

T1074

Data From Local System

T1005

Exfiltration Over C2 Channel

T1041

Financial Theft

Detection

Yara

rule MacoOS_CthulhuStealer {

meta:

Description = "Detects Cthulhu MacOS Stealer Binary"

author = "Cado Security"

date = "14/08/2024"

md5 = "897384f9a792674b969388891653bb58"

strings:

$mach_o_x86_64 = {CF FA ED FE 07 00 00 01 00 00 00 00 00 00 00 00}

$mach_o_arm64 = {CF FA ED FE 0C 00 00 01 00 00 00 00 00 00 00 00} $c2 = "http://89.208.103.185:4000"

$path1 = "/Users/Shared/NW" fullword $path2 = "/Users/admin/Desktop/adwans/Builder/6987368329/generated_script.go" fullword $path3 = "ic.png" fullword

$zip = "@====)>>>>>>>>> CTHULHU STEALER - BOT <<<<<<<<<(====@\n" fullword $func1 = "copyKeychainFile"

$func2 = "grabberA1"

$func3 = "grabberA2"

$func4 = "decodeIPInfo"

$func5 = "battlenetChecker"

$func6 = "binanceChecker"

$func7 = "daedalusChecker"

$func8 = "CCopyFFolderContents"

$func9 = "electrumChecker"

condition:

$mach_o_x86_64 or $mach_o_arm64

and any of ($func*) or any of ($path*) or ($c2) or ($zip) }

References

[1] https://redcanary.com/blog/threat-intelligence/clipping-silver-sparrows-wings/

[2] https://unit42.paloaltonetworks.com/new-os-x-ransomware-keranger-infected-transmission-bittorrent-client-installer/

[3] https://www.sentinelone.com/blog/atomic-stealer-threat-actor-spawns-second-variant-of-macos-malware-sold-on-telegram/

[4] https://metamask.io/

[5] https://github.com/n0fate/chainbreaker

[6] https://www.sentinelone.com/blog/atomic-stealer-threat-actor-spawns-second-variant-of-macos-malware-sold-on-telegram/

![Darktrace’s detection of the unusual external connection to 142.11[.]206[.]73 via port 8000.](https://cdn.prod.website-files.com/626ff4d25aca2edf4325ff97/69fa6d343ee828935a4a00f5_Screenshot%202026-05-05%20at%203.20.33%E2%80%AFPM.png)