Darktrace delivers the next evolution of unified and proactive NDR

Darktrace Network Endpoint eXtended Telemetry (NEXT) is revolutionizing NDR with the industry’s first mixed-telemetry agent using Self-Learning AI.

The combined context of native network and endpoint process data significantly reduces incident triage and investigation times for threats spanning both domains. Our business-centric approach learns what normal looks like for each endpoint, and now uses process context to extend our ability to identify novel threats that existing EDR/XDR tools often miss.

Summary of what’s new:

- Native endpoint process telemetry combined with NDR, bridging the EDR gap

- Self-Learning AI on the endpoint to stop novel threats missed by EDR

- Sophisticated Agentic AI to automate SecOps investigations across all major IT domains

- AI-native, real-time threat detection, investigation, and response (TDIR) for cross-domain activity throughout the enterprise

Why is this an important next step in NDR?

Security analysts are buried under a flood of alerts that lack the context needed to separate genuine threats from noise. The root problem is that most security tools only see one slice of the environment. IT and OT networks, endpoints, and cloud systems are monitored in isolation, with little correlation between them.

As a result, investigations are highly manual. Analysts are forced to pivot between siloed point-products, each providing only a fragment of the incident. This slows response, creates blind spots, and limits the team’s ability to understand and contain threats effectively.

In many cases, the high degree of skill it takes to pivot between tools and conduct investigations pushes even the most experienced analysts closer to burnout, especially when they are already exhausted by the volume of alerts. Ultimately, the people managing these systems spend their skills compensating for the lack of integration between the tools in their security stack, rather than developing the higher-value expertise needed to anticipate, prevent, and respond to emerging threats.

Many organizations have attempted to overcome this challenge by implementing XDR solutions. But XDR does not cover NDR-related use cases. This is especially true in OT/CPS environments, where it is not possible to install an agent on devices.

XDR is an endpoint-focused tool that cannot see the full picture of threats moving laterally across the network, targeting unmanaged devices, or blending into legitimate traffic. While XDR is still a strong tool in the arsenal, attackers are noticing where the gaps are:

- Threat actors are actively targeting and disabling EDR agents using tools like EDRKillShifter, EDRSilencer, and variants of Terminator. Even "legitimate" apps like HRSword are being exploited to undermine endpoint security.

- A CISA Red Team assessment found that one U.S. critical infrastructure organization suffered prolonged compromise because it relied too heavily on host-based EDR and lacked sufficient network-layer defenses.

Bottom line: Without native network detection and response (NDR), critical incidents slip through undetected.

Not all NDR tools are built the same

When it comes to NDR, the details matter. Here are a few reasons why not all NDR solutions are created equal:

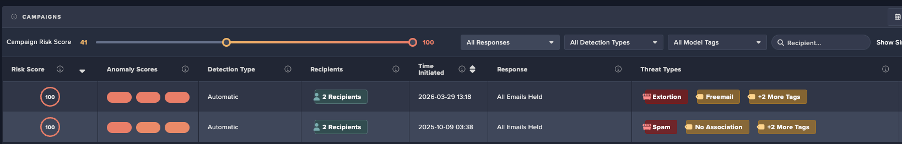

- Most NDR solutions depend on EDR/XDR integrations to ingest endpoint alerts, which are raised based on activity that is already known to be malicious

- They can’t investigate beyond what the EDR already flags, lacking process-level context in network investigations

- Almost no NDR solutions have a native endpoint agent to extend NDR visibility to remote worker devices

This reliance on EDR leaves critical gaps in network coverage, since EDRs themselves don’t provide network-level visibility.

The NEXT evolution of NDR

Darktrace Network Endpoint eXtended Telemetry (NEXT) is revolutionizing NDR with the industry’s first mixed-telemetry agent using Self-Learning AI.

The combined context of native network and endpoint process data significantly reduces incident triage and investigation times for threats spanning both domains. With this new data, our business-centric approach also extends our ability to identify novel threats that existing EDR/XDR tools may miss.

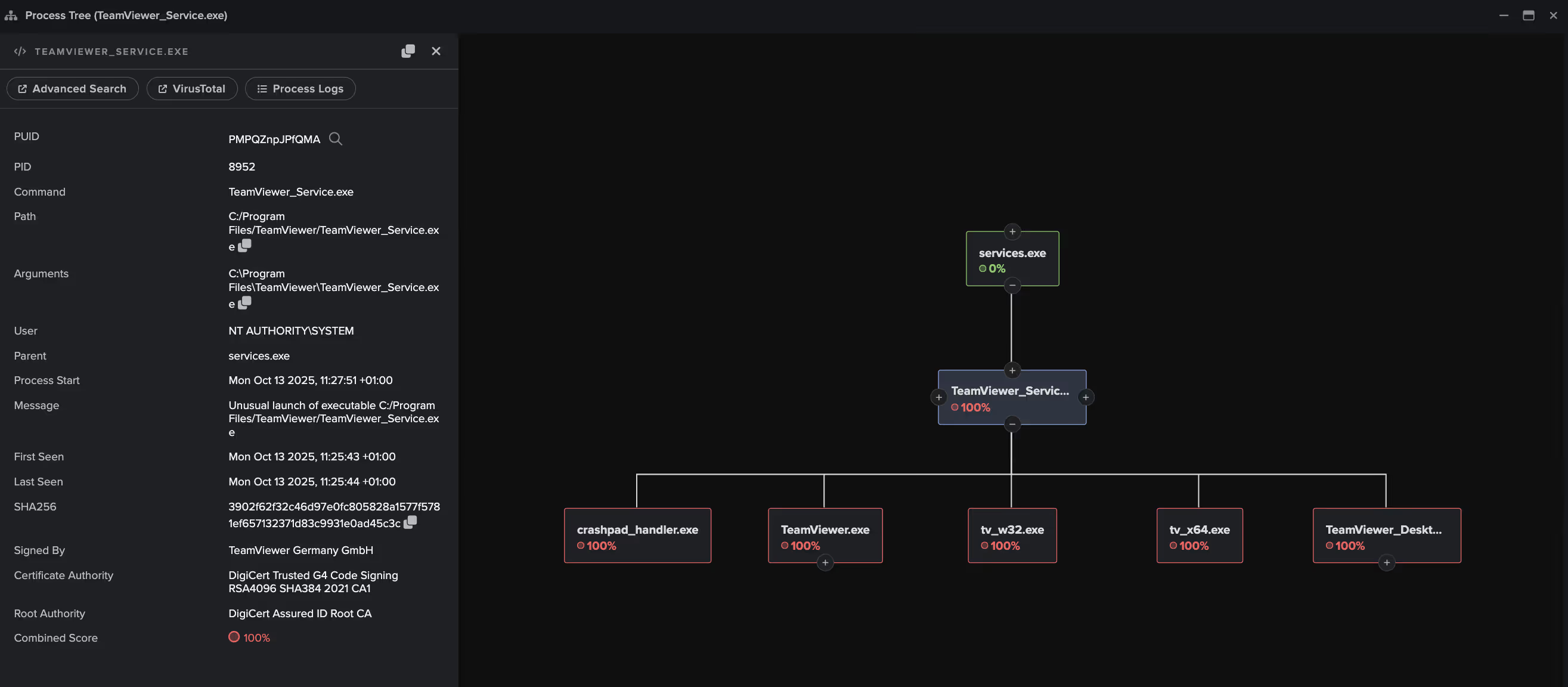

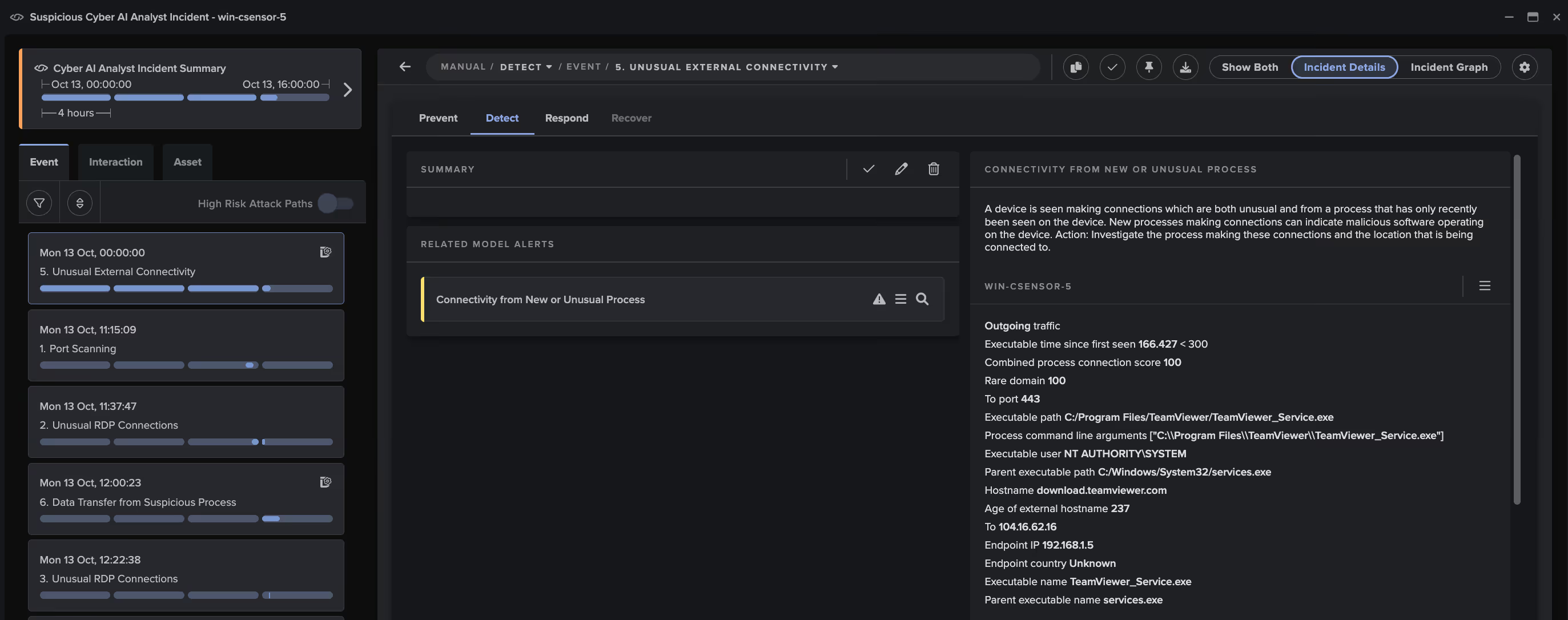

Darktrace / ENDPOINT agents are now able to utilize new Network Endpoint eXtended Telemetry (NEXT) capabilities. This combines full network visibility with native endpoint process data, enabling autonomous investigations that trace threats from initial network activity all the way to the root cause at the endpoint, without manual correlation or tool switching. This bridges the gap between NDR and the endpoint, while adding value to existing EDR investments.
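To make the idea of tracing network activity back to an endpoint process more concrete, here is a minimal sketch of the underlying correlation step. It is an illustration only and does not describe how Darktrace implements NEXT: the `NetworkEvent` and `ProcessSocket` record types, their fields, and the `attribute_process` helper are all hypothetical names invented for this example. It assumes an agent can report which process owned a local port during a given time window.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class NetworkEvent:
    """A connection observed on the network (hypothetical schema)."""
    host: str
    local_port: int
    remote_addr: str
    timestamp: float  # seconds since epoch


@dataclass(frozen=True)
class ProcessSocket:
    """A socket owned by a process on an endpoint (hypothetical schema)."""
    host: str
    local_port: int
    pid: int
    process_name: str
    opened_at: float
    closed_at: float


def attribute_process(
    event: NetworkEvent, sockets: Iterable[ProcessSocket]
) -> Optional[ProcessSocket]:
    """Return the first socket record whose host, port, and lifetime cover the event."""
    for sock in sockets:
        same_endpoint = sock.host == event.host and sock.local_port == event.local_port
        active = sock.opened_at <= event.timestamp <= sock.closed_at
        if same_endpoint and active:
            return sock
    return None


# Example data: the same ephemeral port is reused by two processes over time.
sockets = [
    ProcessSocket("laptop-17", 49152, 4120, "chrome.exe", 1000.0, 1100.0),
    ProcessSocket("laptop-17", 49152, 7788, "powershell.exe", 1200.0, 1300.0),
]
event = NetworkEvent("laptop-17", 49152, "203.0.113.9", 1250.0)

match = attribute_process(event, sockets)
print(match.process_name if match else "no owning process found")
```

Matching on the time window as well as the port matters because ephemeral ports are reused. Without it, the connection in this example could be wrongly attributed to the browser rather than the later process.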

Leveraging this data in investigations

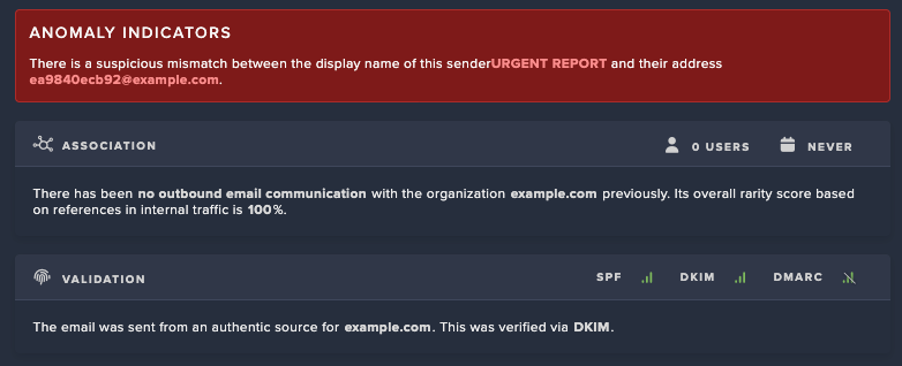

This additional context is then leveraged by Cyber AI Analyst, a sophisticated agentic AI system that autonomously performs end-to-end investigations of all relevant alerts and prioritizes incidents. With the new endpoint process visibility, Cyber AI Analyst now incorporates process context into its decision-making, which improves detection accuracy, filters out benign activity, and enhances incident narratives with process-level insights.

This makes Darktrace the first NDR to natively investigate threats across network and endpoint telemetry with an autonomous, agentic AI analyst. And with our Self-Learning AI, Darktrace continuously evolves by understanding what’s normal for each unique environment, now adding process data to extend visibility and the range of detections. This enables Darktrace to detect and contain novel threats, including zero-days, insider threats, and emerging attack techniques, up to 8 days before public disclosure.

This is more than a solution to a visibility problem. It’s a fundamental evolution in how threats are detected, investigated, and stopped. By applying agentic AI, Darktrace empowers security teams to move from reactive alert triage to proactive, autonomous defense, surfacing and blocking threats that others simply can’t see.

Continued innovation in detection and response

Darktrace also continues to invest in our core NDR capabilities, delivering enhancements and innovations to solve modern network security challenges. In the latest release, Darktrace / NETWORK has been enhanced to increase detection efficacy and performance. This includes increased protocol detection fidelity and new support for custom port mappings, plus expanded visibility into HTTP traffic to support more targeted threat hunting across a wider range of application layer activity. In addition, vSensor performance has been upgraded for tunnel protocols such as Geneve.

We have also released enhancements to Autonomous Response, which is already trusted by thousands of organizations to contain threats at the earliest stages without causing business disruption. This includes enhanced support for highly complex and segmented networks, plus the ability to extend Autonomous Response actions to more areas with additional firewall integration support. This enables faster and more effective response to network threats, and continues Darktrace’s proven ability to contain zero-day threats up to 8 days before public disclosure.

Providing seamless operations with the new Darktrace ActiveAI Security Portal

As part of Darktrace’s commitment to breaking down silos across the cyber defense lifecycle, this release also introduces major platform enhancements that tackle often-overlooked operational gaps, specifically around user access, permissions, and integration workflows. With the launch of the new Darktrace ActiveAI Security Portal, organizations can now manage security at scale across diverse environments, making it ideal for large enterprises, MSSPs, and partners overseeing multiple tenants. These updates ensure that visibility, control, and scalability extend beyond detection and response and into how teams manage and interact with the platform itself.

Committed to innovation

These updates are part of the broader Darktrace release, which also included:

1. Major innovations in cloud security with the launch of the industry’s first fully automated cloud forensics solution, reinforcing Darktrace’s leadership in AI-native security.

2. Innovations to our suite of Exposure Management & Attack Surface Management products including:

- Exploit Prediction Assessment: Continuously validates whether top-priority exposures are actually exploitable in your environment without waiting for patch cycles or formal pen tests.

- Deep & Dark Web Monitoring: Extends visibility across millions of sources in the deep and dark web to detect leaked credentials linked to your confirmed domains.

- Confidence Score: Our newly developed AI classification platform compares newly discovered assets to assets known to belong to your organization. The more closely a newly discovered asset resembles your known assets, the higher its score.

- No-Telemetry Endpoint: Collects installed software data and maps it to known CVEs, without requiring network traffic, providing device-level vulnerability context and operational relevance.

- Cost-Benefit Analysis for Patching: Calculates ROI by comparing patching effort with potential exploit impact, factoring in headcount time, device count, patch difficulty, and automation availability.
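As a rough illustration of the kind of arithmetic behind a cost-benefit comparison for patching, the sketch below weighs estimated patching effort against expected exploit impact. It is not Darktrace's formula: the `PatchCandidate` fields, the `AUTOMATION_DISCOUNT` value, and the ROI definition are all assumptions chosen for the example, with hours per device standing in for patch difficulty.

```python
from dataclasses import dataclass

# Assumption: automation cuts manual effort to 25% of the unautomated cost.
AUTOMATION_DISCOUNT = 0.25


@dataclass(frozen=True)
class PatchCandidate:
    """Inputs for one patch decision (hypothetical schema)."""
    device_count: int
    hours_per_device: float     # proxy for patch difficulty
    hourly_rate: float          # cost of headcount time
    automated: bool             # whether patch automation is available
    exploit_probability: float  # estimated likelihood of exploitation, 0..1
    impact_cost: float          # estimated cost if the exposure is exploited


def patch_cost(c: PatchCandidate) -> float:
    """Estimated cost of the patching effort."""
    effort = c.device_count * c.hours_per_device * c.hourly_rate
    return effort * AUTOMATION_DISCOUNT if c.automated else effort


def expected_loss_avoided(c: PatchCandidate) -> float:
    """Expected exploit impact that patching would remove."""
    return c.exploit_probability * c.impact_cost


def patch_roi(c: PatchCandidate) -> float:
    """Return (benefit - cost) / cost for the patch."""
    cost = patch_cost(c)
    if cost <= 0:
        raise ValueError("patch cost must be positive to compute ROI")
    return (expected_loss_avoided(c) - cost) / cost


manual = PatchCandidate(100, 0.5, 80.0, False, 0.25, 200_000.0)
automated = PatchCandidate(100, 0.5, 80.0, True, 0.25, 200_000.0)

print(f"manual ROI: {patch_roi(manual):.1f}")        # 11.5
print(f"automated ROI: {patch_roi(automated):.1f}")  # 49.0
```

With these made-up numbers, manual patching costs 4,000 against an expected loss of 50,000, so the return is 11.5 times the cost. Automation lowers the cost to 1,000 and raises the return accordingly.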

Visit these blogs to learn more about updates:

- Darktrace Announces Extended Visibility Between Confirmed Assets and Leaked Credentials from the Deep and Dark Web

- Patch Smarter, Not Harder: Now Empowering Security Teams with Business-Aligned Threat Context Agents

As attackers exploit gaps between tools, the Darktrace ActiveAI Security Platform delivers unified detection, automated investigation, and autonomous response across cloud, endpoint, email, network, and OT. With full-stack visibility and AI-native workflows, Darktrace empowers security teams to detect, understand, and stop novel threats before they escalate.

Join our Live Launch Event

When?

December 9, 2025

What will be covered?

Join our live broadcast to experience how Darktrace is eliminating blind spots for detection and response across your complete enterprise with new innovations in Agentic AI across our ActiveAI Security Platform. Industry leaders from IDC will join Darktrace customers to discuss challenges in cross-domain security, with a live walkthrough of the innovations reshaping Network Detection & Response, Endpoint Detection & Response, Email Security, and SecOps for novel threat detection and autonomous investigations.
