Introduction

The speed of today’s most advanced threats can be devastating. In the few minutes it takes a security analyst to step away from her screen to grab a coffee, ransomware can take down thousands of computers before human teams or traditional tools have the chance to respond. And while big, fast threats are more likely to grab the headlines, cyber-attacks which do the opposite can be just as dangerous. The latest escalation in the cyber arms race sees attackers choosing stealth over speed and cunning over chaos.

As defenders rapidly deploy new security and detection technologies, malware authors have been just as innovative in finding ways to evade them. New ‘low and slow’ attacks can bypass traditional security tools because each individual action that makes up the larger threat is too small to detect. These attacks are designed to unfold over a long period of time and, by causing minimal disruption to data transfer or connectivity levels, they blend into legitimate traffic.

For advanced and well-resourced actors like nation states in search of valuable intellectual property or sensitive political records, subtle and prolonged access to the systems they target is a significant benefit. When it comes to the most sophisticated threats, slow and steady really can win the race.

Nevertheless, low and slow attacks can be detected with advanced machine learning techniques. Contextual knowledge is critical: by modeling the subtle and unique ‘patterns of life’ of every user, device, and the network as a whole, AI-powered defenses are, for the first time, winning this battle.

This blog explores how attackers use low and slow techniques during multiple stages of the kill chain to achieve their eventual goal. We examine three real-world case studies, drawn from over 7,000 deployments of the Enterprise Immune System, to demonstrate how cyber AI detects low and slow reconnaissance, data exfiltration, and command-and-control activity.

Low and slow reconnaissance

By monitoring the behavioral patterns of devices and users, Darktrace AI learns an evolving profile of expected activity. Armed with this understanding of ‘normal’ for the network, it can then identify significant anomalies indicative of a threat. It does all this without relying on training sets of historical data, enabling the technology to spot threats that other tools miss.
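To make the idea of a learned, evolving ‘normal’ more concrete, here is a minimal sketch that keeps an exponentially weighted mean and variance of a single metric for one device and scores each new observation by how far it sits above that baseline. This is an illustrative assumption, not Darktrace's actual (proprietary) modeling: the class name `DeviceBaseline`, the choice of metric, and the `alpha` and `warmup` values are all hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class DeviceBaseline:
    """Hypothetical 'pattern of life' for one device and one metric
    (e.g. outbound MB per hour), learned online from live observations
    rather than from a pre-collected training set."""

    alpha: float = 0.1   # weight of each new observation (illustrative value)
    warmup: int = 5      # observations needed before scoring (illustrative value)
    mean: float = 0.0
    var: float = 0.0
    count: int = 0

    def score(self, value: float) -> float:
        """How many standard deviations `value` lies above the learned mean."""
        if self.count < self.warmup:
            return 0.0  # not enough history yet to judge
        std = math.sqrt(self.var) or 1e-9  # avoid dividing by zero on a perfectly flat baseline
        return (value - self.mean) / std

    def update(self, value: float) -> None:
        """Fold a new observation into the exponentially weighted mean and variance."""
        self.count += 1
        if self.count == 1:
            self.mean = value
            return
        diff = value - self.mean
        incr = self.alpha * diff
        self.mean += incr
        self.var = (1 - self.alpha) * (self.var + diff * incr)


if __name__ == "__main__":
    baseline = DeviceBaseline()
    for mb in [10, 12, 11, 9, 10, 11, 10, 12]:  # synthetic hourly volumes
        baseline.update(mb)
    print(baseline.score(11) < 3)  # True: an ordinary hour
    print(baseline.score(80) > 3)  # True: a large deviation from this device's norm
```

One design caveat: a fast-adapting baseline like this can gradually absorb a low and slow attack into ‘normal’, which is why accumulating evidence over longer windows, as in the case studies, matters.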

On the network of a European financial services firm, Darktrace discovered a server conducting port scans of various internal computers. Administrative devices regularly perform this type of network scanning for legitimate testing purposes, but attackers also use it to identify vulnerabilities and points of compromise – an early stage of an attack.

Over 7 days, the server made around 214,000 failed connections to 276 unique devices. However, only a small number of ports were targeted each day: the scan was sequential, but slow. Measured over any single day, the disturbance was small enough to evade all rules-based defenses. Nevertheless, by learning ‘self’ across the entire digital business over time, cyber AI can detect even the subtlest deviation from ‘normal’ for an individual device, user, or the network as a whole. Darktrace recognized the longer-term pattern of network scanning and alerted the customer immediately.

Advanced search view showing regular connections to closed ports over the scanning period.
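The gap between a per-day rule and a longer view can be illustrated with a toy detector. The sketch below counts the distinct (destination, port) pairs that a source failed to reach, once per day and once across the whole window. The function names, record layout, limits, and synthetic data are all hypothetical, chosen only to mirror the shape of the case above; they are not Darktrace's detection logic.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from typing import Dict, List, Set, Tuple

# Hypothetical connection record: (timestamp, source, destination, port, succeeded)
Event = Tuple[datetime, str, str, int, bool]


def failed_targets(events: List[Event], start: datetime, end: datetime) -> Dict[str, Set[Tuple[str, int]]]:
    """Map each source to the distinct (destination, port) pairs it failed to reach in [start, end)."""
    targets: Dict[str, Set[Tuple[str, int]]] = defaultdict(set)
    for ts, src, dst, port, ok in events:
        if not ok and start <= ts < end:
            targets[src].add((dst, port))
    return targets


def per_day_alerts(events: List[Event], first_day: datetime, days: int, daily_limit: int) -> Set[str]:
    """Rules-based view: alert only if a source exceeds the limit within a single day."""
    alerts: Set[str] = set()
    for d in range(days):
        start = first_day + timedelta(days=d)
        for src, targets in failed_targets(events, start, start + timedelta(days=1)).items():
            if len(targets) > daily_limit:
                alerts.add(src)
    return alerts


def window_alerts(events: List[Event], first_day: datetime, days: int, window_limit: int) -> Set[str]:
    """Long-horizon view: accumulate the same evidence across the whole window."""
    end = first_day + timedelta(days=days)
    return {src for src, targets in failed_targets(events, first_day, end).items()
            if len(targets) > window_limit}


if __name__ == "__main__":
    day0 = datetime(2024, 1, 1)
    # Synthetic slow scan: 2 new ports per day against 40 internal hosts, for 7 days.
    events = [
        (day0 + timedelta(days=d, minutes=h), "scanner", f"10.0.0.{h}", 1000 + 2 * d + p, False)
        for d in range(7) for h in range(40) for p in range(2)
    ]
    print(per_day_alerts(events, day0, 7, daily_limit=100))  # set(): only 80 targets on any day
    print(window_alerts(events, day0, 7, window_limit=300))  # {'scanner'}: 560 targets in total
```

The same evidence is counted in both functions; only the time horizon differs, which is exactly what lets a slow scan stay under a daily threshold.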

Low and slow data exfiltration

At an industrial manufacturing company, a desktop was observed making over 2,000 connections to a rare external host over a 7-day period, transferring a total of 9.15GB of data out of the network. No single connection transmitted more than a few MB of data, an amount which, viewed in isolation, would not be cause for concern. However, the destination for these connections was 100% rare for the network and remained so for the entire period of exfiltration. This not only flagged the activity as suspicious from the outset, but also prevented it from being absorbed into legitimate traffic. Combined with the accumulated volume of data leaving the network, this led Darktrace AI to identify a significant deviation in the device’s behavior, indicating a threat in progress.

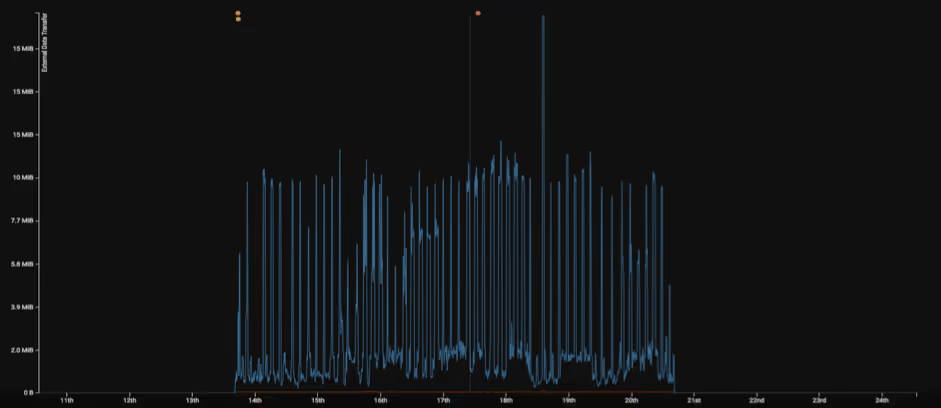

Steady exfiltration of data over a 7-day period.

A series of model breaches (orange circles) occurring throughout the period of steady external data exfiltration (blue line).
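A simplified sketch of the two signals described in this case, destination rarity and accumulated volume, might look like the following. Here rarity is assumed to mean the share of other devices that have never contacted the destination; that definition, the function names, the thresholds, and the hostnames are illustrative assumptions rather than how Darktrace actually computes rarity.

```python
from collections import defaultdict

MB = 1_000_000
GB = 1_000_000_000


def rarity(flows, device, destination, population_size):
    """Fraction of *other* devices that never contacted `destination`.
    1.0 means the destination is 100% rare: no other device talks to it."""
    others = {d for d, dst, _ in flows if dst == destination and d != device}
    return 1.0 - len(others) / max(population_size - 1, 1)


def slow_exfil_candidates(flows, device, population_size, total_limit=1 * GB, min_rarity=0.99):
    """Destinations that are rare for the network and have received a large
    cumulative volume from `device`, however small each individual transfer was."""
    totals = defaultdict(int)
    for d, dst, nbytes in flows:  # each flow: (device, destination, bytes_out)
        if d == device:
            totals[dst] += nbytes
    return sorted(
        dst for dst, total in totals.items()
        if total >= total_limit and rarity(flows, device, dst, population_size) >= min_rarity
    )


if __name__ == "__main__":
    # Synthetic traffic: 2,000 small uploads (~9 GB in total) to a host no one else uses,
    # plus ordinary traffic from 50 other hosts to a popular destination.
    flows = [("desktop-17", "rare-host.example", 4_500_000) for _ in range(2_000)]
    flows += [(f"host-{i}", "cdn.example", 2 * MB) for i in range(50)]
    flows += [("desktop-17", "cdn.example", 2 * MB)]
    print(max(n for _, _, n in flows))  # 4500000: no single transfer is large
    print(slow_exfil_candidates(flows, "desktop-17", population_size=51))  # ['rare-host.example']
```

A per-connection size limit would stay silent on this traffic; only the cumulative total to a rare destination stands out.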

Low and slow command and control

Darktrace is extremely successful at finding malware infections before they appear on open-source threat lists, a crucial ability when stopping the most serious, never-before-seen threats. This is achieved in large part by detecting beaconing patterns rather than relying on signatures. Beaconing occurs when a malicious program repeatedly attempts to contact its command-and-control infrastructure. Like network scanning, it produces a distinctive pattern of outgoing connections.

Darktrace was deployed in a corporate network where a device was found making connections at steady intervals to an endpoint associated with a malicious browser extension. The average rate was 11 connections every 4 hours, a low level of activity that could easily have blended into legitimate internet traffic. Having identified the regularity of these connections, Darktrace’s AI assigned a high beaconing score, indicating that they were likely initiated by an automated process. Combined with the rarity of the destination, this made it clear that a malicious program was running in the background, unbeknownst to the user.
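One simple way to quantify ‘regularity’ is the coefficient of variation of the gaps between connections: automated beaconing produces gaps of almost identical length, while human-driven browsing does not. The `beaconing_score` function below is a hypothetical sketch of that idea, not Darktrace's scoring method, and the timings in the example are synthetic, loosely matched to the rate of 11 connections every 4 hours (one roughly every 21.8 minutes).

```python
import statistics


def beaconing_score(timestamps):
    """Hypothetical regularity score in [0, 1] from connection times in seconds.
    Near 1: evenly spaced, machine-like connections; near 0: irregular timing."""
    if len(timestamps) < 3:
        return 0.0  # too few connections to judge regularity
    ts = sorted(timestamps)
    gaps = [b - a for a, b in zip(ts, ts[1:])]
    mean_gap = statistics.fmean(gaps)
    if mean_gap == 0:
        return 0.0
    cv = statistics.pstdev(gaps) / mean_gap  # coefficient of variation of the gaps
    return max(0.0, 1.0 - cv)


if __name__ == "__main__":
    import random

    interval = 4 * 3600 / 11  # 11 connections every 4 hours
    rng = random.Random(0)
    # 24 hours of beaconing with up to 30 s of jitter per connection.
    beacon = [i * interval + rng.uniform(-30, 30) for i in range(66)]
    # The same number of connections at random times over the same 24 hours.
    irregular = [rng.uniform(0, 24 * 3600) for _ in range(66)]
    print(round(beaconing_score(beacon), 2))     # close to 1
    print(round(beaconing_score(irregular), 2))  # much lower
```

A regularity score on its own can also match legitimate automated traffic such as software update checks; as in the case above, it becomes far more telling when combined with the rarity of the destination.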

As cyber security advances, attackers will develop increasingly sophisticated methods to operate under the radar. Traditional cyber security tools that work in binary ways based on historical data (either the upload exceeded a predefined limit or it did not) cannot keep up. In this new era, AI will prove crucial because of its ability to learn a constantly evolving ‘pattern of life’ for a network over the duration of its deployment. This allows Darktrace AI to locate the disturbances in connectivity levels, no matter how small, that are caused by malicious or non-compliant activity. Fundamentally, this enables Darktrace to discover in-progress attacks and then respond autonomously, neutralizing them before they become a crisis.

High-profile, fast-moving attacks like NotPetya and WannaCry have encouraged some organizations to focus on preventing certain types of threat, at the expense of others – and hackers are catching on. By leveraging powerful AI, Darktrace empowers customers to prevent not just the fastest-moving attacks, but also the slowest and subtlest.