On March 11 and 12, 2021, Darktrace detected multiple attempts by a broad campaign to attack vulnerable servers in customer environments. The campaign targeted Internet-facing Microsoft Exchange servers, exploiting the recently discovered ProxyLogon vulnerability (CVE-2021-26855).

While this exploit was initially attributed to a group known as Hafnium, Microsoft has announced that other threat actors are also rapidly weaponizing the vulnerability. Cyber AI disrupted these new, unattributed and never-before-seen campaigns in real time.

Hafnium copycats

As soon as a vulnerability is made public, attacks typically surge as hackers capitalize on the chaos and try to compromise networks that have not yet been patched.

Once a vendor publishes a patch, hackers rapidly reverse-engineer it, which leads to mass, high-impact exploitation. At the same time, offensive tooling trickles down from the first adopters, such as nation-state actors, to ransomware gangs and other opportunistic attackers. Darktrace observed exactly this phenomenon following Hafnium's attacks against vulnerable Microsoft Exchange email servers this month.

Exchange servers attacked: AI analysis

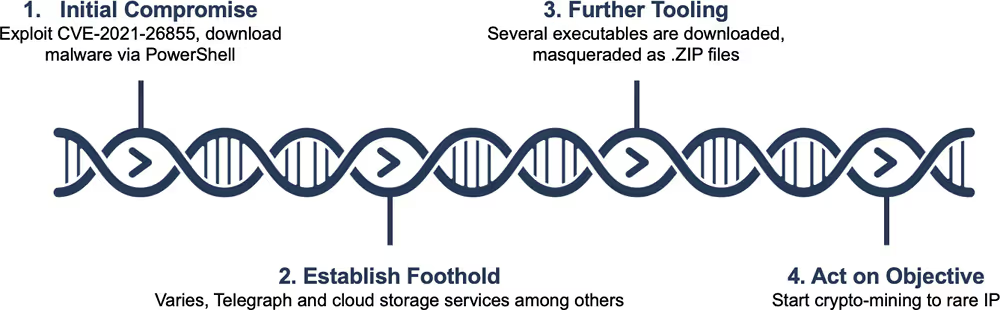

Cyber AI has observed threat actors attempting to download and install malware using ProxyLogon as the initial attack vector. For customers with Autonomous Response, the malicious payload was intercepted at this point, stopping the attack before it could progress any further.

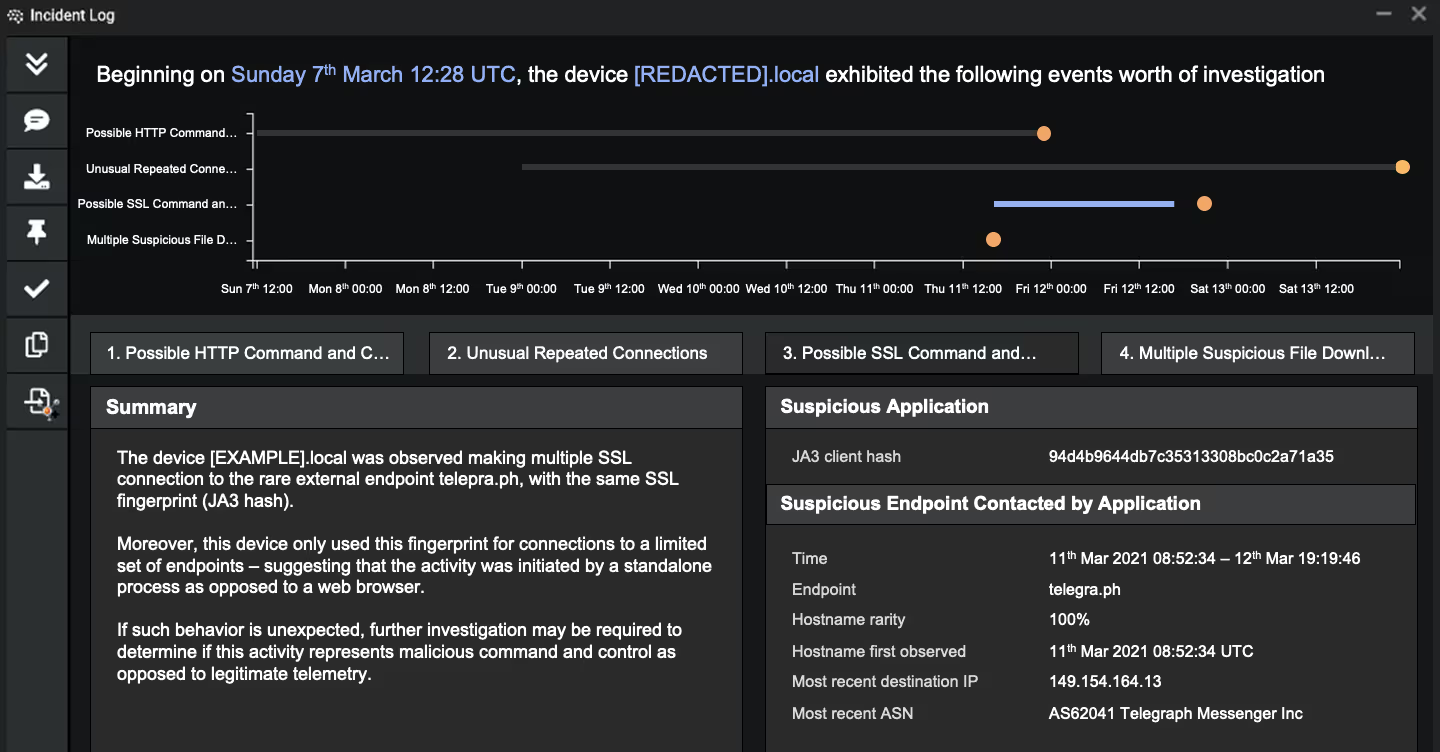

In other Darktrace customer environments, the Darktrace Immune System identified and alerted on every stage of the attack. Generally, the malware has been observed acting as a generic backdoor, without much follow-up activity. Various forms of command and control (C2) channels were detected, including Telegra[.]ph. In a few intrusions, the attackers installed cryptocurrency miners.

Once a foothold has been established in the digital environment, it is likely that the actors will begin a hands-on-keyboard attack, exfiltrating data, moving laterally, or deploying ransomware.

Figure 1: Timeline of a typical ProxyLogon exploit

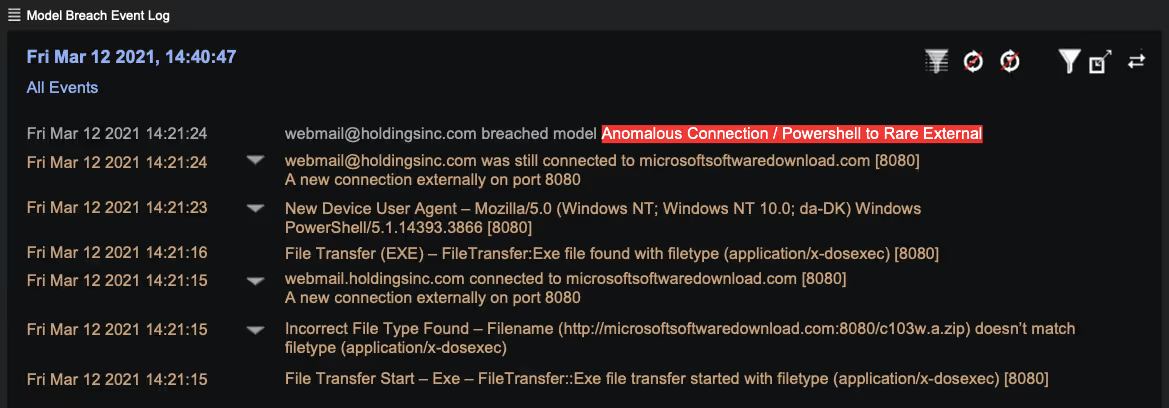

After the ProxyLogon vulnerability was exploited, the Exchange servers reached out to the malicious domain microsoftsoftwaredownload[.]com using a PowerShell User Agent. Darktrace flagged this behavior as anomalous because the Exchange server had never used this User Agent before, let alone to access a malicious domain that had never been observed on the network.
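
Darktrace does not publish the internals of its models, but the two signals combined here (a User Agent that is new for this device, and a domain that is new for the whole network) can be illustrated with a minimal sketch. It assumes a simple per-device set of previously seen User Agents and a network-wide set of previously seen domains, populated during a hypothetical learning period; the class name `NoveltyTracker`, the device name and the User Agent strings are invented for illustration.

```python
from collections import defaultdict


class NoveltyTracker:
    """Hypothetical sketch: flag requests whose User Agent is new for the
    device and whose domain is new for the whole network."""

    def __init__(self) -> None:
        # device name -> set of User Agent strings it has used before
        self.user_agents_by_device = defaultdict(set)
        # every destination domain observed anywhere on the network
        self.domains_seen = set()

    def observe(self, device: str, user_agent: str, domain: str,
                learning: bool = False) -> list:
        """Record one HTTP request and return the reasons it looks novel.

        With learning=True the request only updates the baseline and never
        produces reasons (a stand-in for a learning period).
        """
        reasons = []
        if not learning:
            if user_agent not in self.user_agents_by_device[device]:
                reasons.append("new user agent for device")
            if domain not in self.domains_seen:
                reasons.append("domain never seen on network")
        # Update the baseline so repeats are no longer considered novel.
        self.user_agents_by_device[device].add(user_agent)
        self.domains_seen.add(domain)
        return reasons


tracker = NoveltyTracker()
# Baseline: the server's normal traffic (illustrative values).
tracker.observe("exchange01", "Microsoft Office/16.0",
                "outlook.office365.com", learning=True)
# The exploited server fetching a payload with PowerShell.
alert = tracker.observe("exchange01", "WindowsPowerShell/5.1",
                        "microsoftsoftwaredownload[.]com")
print(alert)  # ['new user agent for device', 'domain never seen on network']
```

A production system would need to age out old entries and score rarity rather than treat it as a yes/no flag; the sketch only shows that neither signal depends on threat intelligence about the malicious domain.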

Figure 2: Darktrace revealing an anomalous PowerShell connection

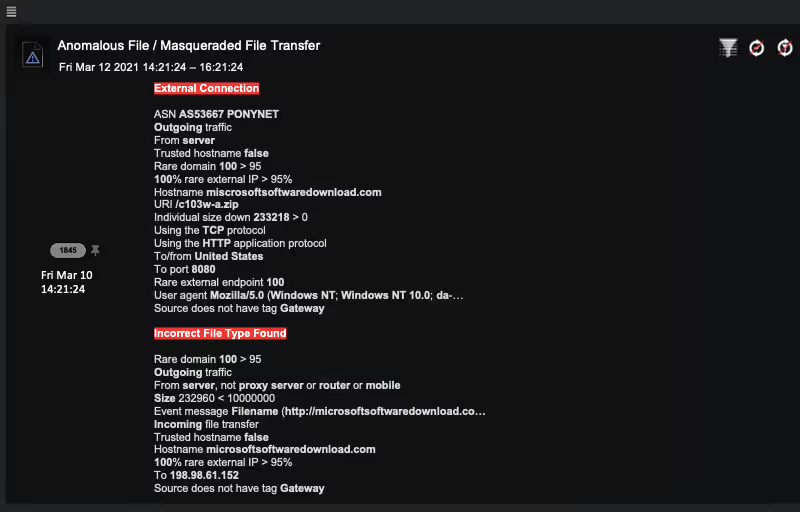

The malware executable was disguised as a ZIP file to further obfuscate the attack. Darktrace identified both the highly anomalous download and the masqueraded file.
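
One common way to spot a file masquerading as another type is to compare what its name claims against its leading "magic" bytes: ZIP archives begin with `PK\x03\x04`, while Windows executables begin with `MZ`. The sketch below assumes the file name and the first bytes of the transfer are available; the signature table is deliberately tiny, and the function names and file names are hypothetical rather than Darktrace's detection logic.

```python
import os
from typing import Optional

# Leading "magic" bytes for a few common formats (deliberately incomplete).
MAGIC_SIGNATURES = {
    b"PK\x03\x04": "zip",  # ZIP local file header
    b"MZ": "exe",          # DOS/PE executable header
    b"%PDF": "pdf",
}

# What a given file extension claims the content to be.
EXTENSION_TYPES = {".zip": "zip", ".exe": "exe", ".dll": "exe", ".pdf": "pdf"}


def sniff_type(payload: bytes) -> Optional[str]:
    """Identify the content type from its leading bytes, if known."""
    for magic, kind in MAGIC_SIGNATURES.items():
        if payload.startswith(magic):
            return kind
    return None


def is_masqueraded(filename: str, payload: bytes) -> bool:
    """True when both the extension and the content are recognized but
    they disagree, e.g. an executable named *.zip."""
    claimed = EXTENSION_TYPES.get(os.path.splitext(filename.lower())[1])
    actual = sniff_type(payload)
    return claimed is not None and actual is not None and claimed != actual


print(is_masqueraded("update.zip", b"MZ\x90\x00\x03\x00"))   # True
print(is_masqueraded("archive.zip", b"PK\x03\x04\x14\x00"))  # False
```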

Figure 3: Darktrace revealing key information around the anomalous file download

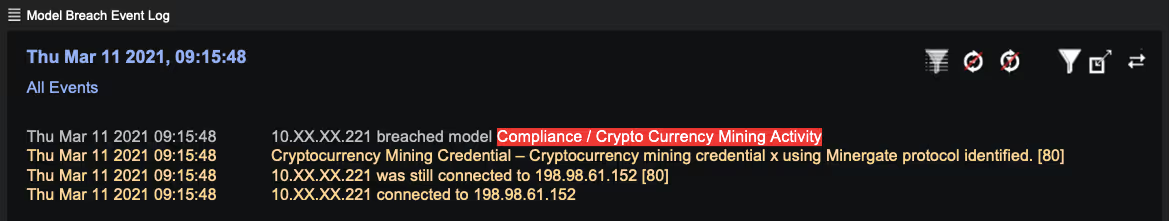

In some cases, Darktrace AI also observed cryptocurrency mining seconds or minutes after the initial malware download.

Figure 4: Darktrace’s Crypto Currency Mining model is breached

Darktrace observed several potential C2 channels. Around the time of the malware download, some of the Exchange servers began to beacon out to several external destinations over unusual SSL/TLS-encrypted connections:

- Telegra[.]ph: publishing platform operated by Telegram

- dev.opendrive[.]com: cloud storage service

- od[.]lk: cloud storage service

In this case, Darktrace recognized that none of these three external domains had ever been contacted by anyone in the organization, let alone in a beaconing fashion. The fact that these communications started around the same time as the malware downloads strongly suggests that the two are related. Darktrace's Cyber AI Analyst automatically began investigating the incident, stitching these events together into one coherent narrative.
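
Beaconing is, roughly, repeated connections to the same destination at near-regular intervals. A minimal sketch, assuming per-destination connection timestamps in seconds are available, measures regularity with the coefficient of variation (standard deviation divided by mean) of the gaps between connections, and combines it with the "never contacted by anyone in the organization" check described above. The thresholds `min_events` and `max_cv` are arbitrary illustrative values, not Darktrace parameters, and the timestamps are made up.

```python
from statistics import mean, pstdev


def looks_like_beaconing(timestamps, min_events=5, max_cv=0.1):
    """True if connection times are regular enough to suggest beaconing.

    A low coefficient of variation (stdev / mean) of the gaps between
    consecutive connections means the intervals are nearly constant.
    """
    if len(timestamps) < min_events:
        return False
    ts = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ts, ts[1:])]
    avg = mean(gaps)
    if avg <= 0:
        return False
    return pstdev(gaps) / avg <= max_cv


def is_c2_candidate(domain, timestamps, domains_seen_in_org):
    """Rare for the whole organization AND contacted periodically."""
    return domain not in domains_seen_in_org and looks_like_beaconing(timestamps)


regular = [0, 60, 121, 180, 241, 300]    # roughly every 60 s
irregular = [0, 5, 200, 210, 900, 1000]  # bursty, human-like
print(is_c2_candidate("od[.]lk", regular, set()))    # True
print(is_c2_candidate("od[.]lk", irregular, set()))  # False
```

Jitter, which many C2 frameworks can add to their check-in intervals, raises the coefficient of variation, so a single fixed threshold like this one would be easy to evade on its own.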

Investigating with AI

Cyber AI Analyst then automatically created a summary incident report about the activity, covering the malware download as well as the various C2 channels observed.

Figure 5: Cyber AI Analyst automatically generating a high-level incident summary

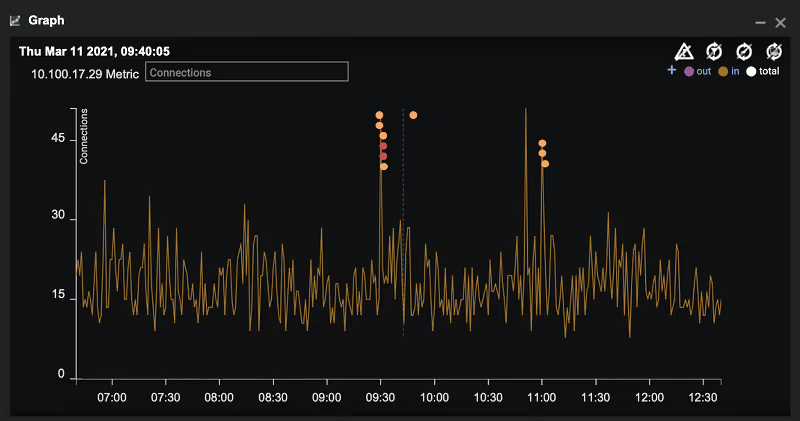

Looking at an infected Exchange server ([REDACTED].local) from a bird's-eye perspective shows that Darktrace created various alerts when the attack hit. Each of the colored dots in the graph below represents a major anomaly detected by Darktrace.

Figure 6: Darktrace reveals the anomalous number of connections and subsequent model breaches

Cyber AI Analyst ranked this activity as the most urgent incident across a full week of data; in this particular organization, it raised only four incidents in total that week. Such precise and clear alerting allows security teams to immediately understand the top threats facing their digital environment, without being overwhelmed by unnecessary alerts and false positives.

Machine-speed response

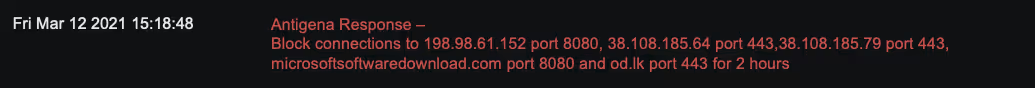

For customers with Darktrace Antigena, Antigena autonomously blocked all outgoing traffic to the malicious external endpoints on the relevant ports. The block is held for several hours to prevent the threat actor from escalating the attack, while giving security teams time to react and remediate.
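
The response described here, blocking specific outbound connections for a limited time rather than isolating the whole server, can be modeled as a blocklist keyed by device, destination and port with an expiry time. The sketch below is hypothetical: the class and field names are invented, port 443 is an assumed "relevant port", and the four-hour duration only stands in for the "several hours" mentioned above. An injectable clock makes the expiry easy to test.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class BlockRule:
    device: str
    destination: str
    port: int
    expires_at: float  # clock value after which the rule no longer applies


class TemporaryBlocklist:
    """Hypothetical sketch of targeted, self-expiring outbound blocks."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._rules = {}

    def block(self, device, destination, port, duration_s):
        key = (device, destination, port)
        self._rules[key] = BlockRule(device, destination, port,
                                     self._clock() + duration_s)

    def is_blocked(self, device, destination, port):
        key = (device, destination, port)
        rule = self._rules.get(key)
        if rule is None:
            return False
        if self._clock() >= rule.expires_at:
            del self._rules[key]  # expired: let traffic flow again
            return False
        return True


now = [0.0]
blocklist = TemporaryBlocklist(clock=lambda: now[0])
blocklist.block("exchange01", "od[.]lk", 443, duration_s=4 * 3600)
print(blocklist.is_blocked("exchange01", "od[.]lk", 443))           # True
print(blocklist.is_blocked("exchange01", "mail.example.com", 25))  # False
now[0] = 4 * 3600
print(blocklist.is_blocked("exchange01", "od[.]lk", 443))           # False
```

Scoping each rule to one destination and port is what lets the rest of the server's traffic, such as mail delivery, continue while the suspicious channel is cut.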

Antigena responded within seconds of the attack starting, containing it at its earliest stage without interrupting regular business activity (emails could still be sent and received), even though this was a zero-day campaign.

Figure 7: Darktrace Antigena autonomously responds

Catching a zero-day exploit

This is not the first time Darktrace has stopped an attack leveraging a zero-day or a freshly released n-day vulnerability. Back in March 2020, Darktrace detected APT41 exploiting the Zoho ManageEngine vulnerability, two weeks before public attribution.

It is highly likely that more cyber-criminals will exploit ProxyLogon in the wake of Hafnium. And while the recent Exchange server vulnerabilities are today's threat, tomorrow's might be a software or hardware supply chain attack, or a different zero-day. Novel threats emerge every week. In the current climate, where 'known unknowns' that are difficult or impossible to pre-define are the new norm, we need to be more adaptable and proactive than ever.

As soon as an attacker begins to exhibit unusual activity, Darktrace AI will detect it, even if there is no threat intelligence associated with the attack. This is where Darktrace works best, autonomously detecting, investigating and responding to advanced and never-before-seen threats in real time.

Example Darktrace model detections:

- Antigena / Network / Compliance / Antigena Crypto Currency Mining Block

- Compliance / Crypto Currency Mining Activity

- Antigena / Network / Significant Anomaly / Antigena Breaches Over Time Block

- Anomalous Connection / Suspicious Expired SSL

- Antigena / Network / Significant Anomaly / Antigena Significant Anomaly from Client Block

- Antigena / Network / Significant Anomaly / Antigena Enhanced Monitoring from Client Block

- Device / Initial Breach Chain Compromise

- Anomalous File / Masqueraded File Transfer

- Anomalous File / EXE from Rare External Location

- Antigena / Network / External Threat / Antigena Suspicious File Block

- Antigena / Network / External Threat / Antigena File then New Outbound Block

- Antigena / Network / Significant Anomaly / Antigena Controlled and Model Breach

- Anomalous File / Internet Facing System File Download

- Device / New PowerShell User Agent

- Anomalous File / Multiple EXE from Rare External Locations

- Anomalous Connection / Powershell to Rare External