The threat of interoperability

As the “as-a-Service” market continues to grow, indicators of compromise (IoCs) and malicious infrastructure are often interchanged and shared between multiple malware strains and attackers. This presents organizations and their security teams with a new threat: interoperability.

Interoperable threats not only enable malicious actors to achieve their objectives more easily by leveraging existing infrastructure and tools to launch new attacks, but the lack of clear attribution often complicates identification for security teams and incident responders, making it challenging to mitigate and contain the threat.

One such threat observed across the Darktrace customer base in late 2023 was Quasar, a legitimate remote administration tool that has become increasingly popular with opportunistic attackers in recent years. Working in tandem, the anomaly-based detection of Darktrace DETECT™ and the autonomous response capabilities of Darktrace RESPOND™ ensured that affected customers were promptly made aware of any suspicious activity and that attacks were contained at the earliest possible stage.

What is Quasar?

Quasar is an open-source remote administration tool designed for legitimate use; however, it has evolved to become a popular tool used by threat actors due to its wide array of capabilities.

How does Quasar work?

Quasar can perform keylogging, take screenshots, establish a reverse proxy, and download and upload files on a target device [1]. A report released towards the end of 2023 put Quasar back on threat researchers’ radars as it disclosed the new observation of dynamic-link library (DLL) sideloading being used by malicious versions of this tool to evade detection [1]. DLL sideloading involves configuring legitimate Windows software to run a malicious file rather than the legitimate file it usually calls on as the software loads. The evolving techniques employed by threat actors using Quasar highlight defenders’ need for anomaly-based detections that do not rely on pre-existing knowledge of attacker techniques, and that can identify and alert on unusual behavior, even if it is performed by a legitimate application.

Although Quasar has been used by advanced persistent threat (APT) groups for global espionage operations [2], Darktrace observed the common usage of default configurations for Quasar, which appeared to use shared malicious infrastructure, and occurred alongside other non-compliant activity such as BitTorrent use and cryptocurrency mining.

Quasar Attack Overview and Darktrace Coverage

Between September and October 2023, Darktrace detected multiple cases of malicious Quasar activity across several customers, suggesting probable campaign activity.

Quasar infections can be difficult to detect using traditional network or host-based tools due to the use of stealthy techniques, such as DLL sideloading and encrypted SSL connections for command-and-control (C2) communication, which such tools may not be able to identify. The wide array of capabilities Quasar possesses also suggests that attacks using this tool may not necessarily follow a linear kill chain. Despite this, the anomaly-based detection of Darktrace DETECT allowed it to identify IoCs related to Quasar at multiple stages of the kill chain.

Quasar Initial Infection

During the initial infection stage of a Quasar compromise observed on the network of one customer, Darktrace detected a device downloading several suspicious DLL and executable (.exe) files from multiple rare external sources using the Xmlst user agent, including the executable ‘Eppzjtedzmk[.]exe’. Analysis of this file using open-source intelligence (OSINT) suggests that it is a Quasar payload, potentially indicating that this activity represented the initial infection through DLL sideloading [3].

Interestingly, the Xmlst user agent used to download the Quasar payload has also been associated with Raccoon Stealer, an information-stealing malware that also acts as a dropper for other malware strains [4][5]. The co-occurrence of different malware components is increasingly common across the threat landscape as Malware-as-a-Service (MaaS) operating models increase in popularity, allowing attackers to employ cross-functional components from different strains.

Quasar Establishing C2 Communication

During this phase, devices on multiple customer networks were identified making unusual external connections to the IP 193.142.146[.]212, which was not commonly seen in their networks. Darktrace analyzed the meta-properties of these SSL connections, without needing to decrypt the content, and alerted on the use of a port not typically associated with the SSL protocol, 4782, and on the use of self-signed certificates. Self-signed certificates do not provide any trust value and are commonly used in malware communications and by ill-reputed web servers.

Further analysis into these alerts using OSINT indicated that 193.142.146[.]212 is a Quasar C2 server and 4782 is the default port used by Quasar [6][7]. Expanding on the self-signed certificate within the Darktrace UI (see Figure 3) reveals a certificate subject and issuer of “CN=Quasar Server CA”, which is also the default self-signed certificate compiled by Quasar [6].
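The meta-properties described above can be evaluated without decrypting any traffic. The following is a minimal sketch, assuming connection metadata is available as simple records; the `TlsConnection` class, its field names, and the list of "common" SSL ports are hypothetical and for illustration only, and do not represent how Darktrace DETECT is implemented. Only port 4782 and the “CN=Quasar Server CA” subject are taken from the observations above. Treating a matching subject and issuer as "self-signed" is a simplifying heuristic.

```python
from dataclasses import dataclass
from typing import List

# Ports commonly associated with SSL/TLS services (illustrative assumption).
COMMON_TLS_PORTS = {443, 465, 636, 853, 993, 995, 8443}

# Quasar defaults reported in this post.
QUASAR_DEFAULT_PORT = 4782
QUASAR_DEFAULT_CN = "CN=Quasar Server CA"


@dataclass
class TlsConnection:
    """Hypothetical record of SSL connection metadata (no payload content)."""
    dest_ip: str
    dest_port: int
    cert_subject: str
    cert_issuer: str


def quasar_indicators(conn: TlsConnection) -> List[str]:
    """Return the metadata-based findings that apply to one connection."""
    findings = []
    if conn.dest_port not in COMMON_TLS_PORTS:
        findings.append("ssl_on_uncommon_port")
    # Heuristic: subject equal to issuer is treated as self-signed.
    if conn.cert_subject == conn.cert_issuer:
        findings.append("self_signed_certificate")
    if conn.dest_port == QUASAR_DEFAULT_PORT:
        findings.append("quasar_default_port")
    if QUASAR_DEFAULT_CN in (conn.cert_subject, conn.cert_issuer):
        findings.append("quasar_default_certificate")
    return findings
```

A connection to port 4782 presenting the default certificate would return all four findings, while a connection to port 443 with a certificate issued by a separate authority would return none. Because the first two checks do not depend on Quasar-specific values, they would still fire if an attacker changed the default port or certificate name.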

A number of insights can be drawn from analysis of the Quasar C2 endpoints detected by Darktrace across multiple affected networks, suggesting a level of interoperability in the tooling used by different threat actors. In one instance, Darktrace detected a device beaconing to the endpoint ‘bittorrents[.]duckdns[.]org’ using the aforementioned “CN=Quasar Server CA” certificate. DuckDNS is a dynamic DNS service that could be abused by attackers to redirect users from their intended endpoint to malicious infrastructure, and may be shared or reused in multiple different attacks.

The sharing of malicious infrastructure among threat actors is also evident, as several OSINT sources have associated the Quasar IP 193.142.146[.]212, detected in this campaign, with different threat types.

While 193.142.146[.]212:4782 is known to be associated with Quasar, 193.142.146[.]212:8808 and 193.142.146[.]212:6606 have been associated with AsyncRAT [11], and the same IP on port 8848 has been associated with RedLineStealer [12]. Aside from the relative ease of using already developed tooling, threat actors may prefer to use open-source malware in order to avoid attribution, making the true identity of the threat actor unclear to incident responders [1][13].
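The overlap described above can be made explicit by grouping OSINT observations by IP address. This is a small sketch using only the IP, port, and family associations cited in this post; the function names and data layout are assumptions for illustration.

```python
from collections import defaultdict

# (defanged IP, port, malware family) associations cited in this post.
OBSERVATIONS = [
    ("193.142.146[.]212", 4782, "Quasar"),
    ("193.142.146[.]212", 8808, "AsyncRAT"),
    ("193.142.146[.]212", 6606, "AsyncRAT"),
    ("193.142.146[.]212", 8848, "RedLineStealer"),
]


def families_by_ip(observations):
    """Group the malware families reported for each IP address."""
    grouped = defaultdict(set)
    for ip, _port, family in observations:
        grouped[ip].add(family)
    return {ip: sorted(families) for ip, families in grouped.items()}


def shared_infrastructure(observations):
    """Keep only IPs associated with more than one malware family."""
    return {
        ip: families
        for ip, families in families_by_ip(observations).items()
        if len(families) > 1
    }
```

Applied to the observations above, `shared_infrastructure(OBSERVATIONS)` flags 193.142.146[.]212 as associated with AsyncRAT, Quasar, and RedLineStealer, which illustrates why a single IoC may not be enough to attribute activity to one strain.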

Quasar Executing Objectives

On multiple customer deployments affected by Quasar, Darktrace detected devices using BitTorrent and performing cryptocurrency mining. While these non-compliant, and potentially malicious, activities are not necessarily specific IoCs for Quasar, they do suggest that affected devices may have had greater attack surfaces than others.

For instance, one affected device was observed initiating connections to 162.19.139[.]184, a known Minergate cryptomining endpoint, and ‘zayprostofyrim[.]zapto[.]org’, a dynamic DNS endpoint linked to the Quasar Botnet by multiple OSINT vendors [9].
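Endpoints hosted on dynamic DNS services can be flagged with a simple suffix check. The sketch below is an illustration only: the suffix list contains just the two dynamic DNS domains that appear in this post and is not exhaustive, and the function name is hypothetical. A match indicates that a hostname sits under a dynamic DNS domain, not that it is malicious.

```python
# Dynamic DNS parent domains seen in this post; deliberately not exhaustive.
DYNAMIC_DNS_SUFFIXES = ("duckdns.org", "zapto.org")


def is_dynamic_dns(hostname: str) -> bool:
    """Return True if the (possibly defanged) hostname is under a listed suffix."""
    # Undo '[.]' defanging, normalize case, and drop any trailing root dot.
    host = hostname.replace("[.]", ".").lower().rstrip(".")
    return any(
        host == suffix or host.endswith("." + suffix)
        for suffix in DYNAMIC_DNS_SUFFIXES
    )
```

Matching on `"." + suffix` rather than on the bare suffix avoids false positives for unrelated domains that merely end in the same characters.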

Not only does cryptocurrency mining use a significant amount of processing power, potentially disrupting an organization’s business operations and racking up high energy bills, but the software used for this mining is often written to a poor standard, thus increasing the attack surface of devices using it. In this instance, Quasar may have been introduced as a secondary payload from a user or attacker-initiated download of cryptocurrency mining malware.

Similarly, it is not uncommon for malicious actors to attach malware to torrented files, and there were a number of examples of Darktrace DETECT identifying non-compliant activity, like BitTorrent connections, overlapping with connections to external locations associated with Quasar. It is therefore important for organizations to establish and enforce technical and policy controls for acceptable use on corporate devices, particularly when remote working introduces new risks.

In some cases observed by Darktrace, devices affected by Quasar were also being used to perform data exfiltration. Analysis of a period of unusual external connections to the aforementioned Quasar C2 botnet server, ‘zayprostofyrim[.]zapto[.]org’, revealed a small data upload, which may have represented the exfiltration of some data to attacker infrastructure.

Darktrace’s Autonomous Response to Quasar Attacks

On customer networks that had Darktrace RESPOND™ enabled in autonomous response mode, the threat of Quasar was mitigated and contained as soon as it was identified by DETECT. If RESPOND is not configured to respond autonomously, these actions would instead be advisory, pending manual application by the customer’s security team.

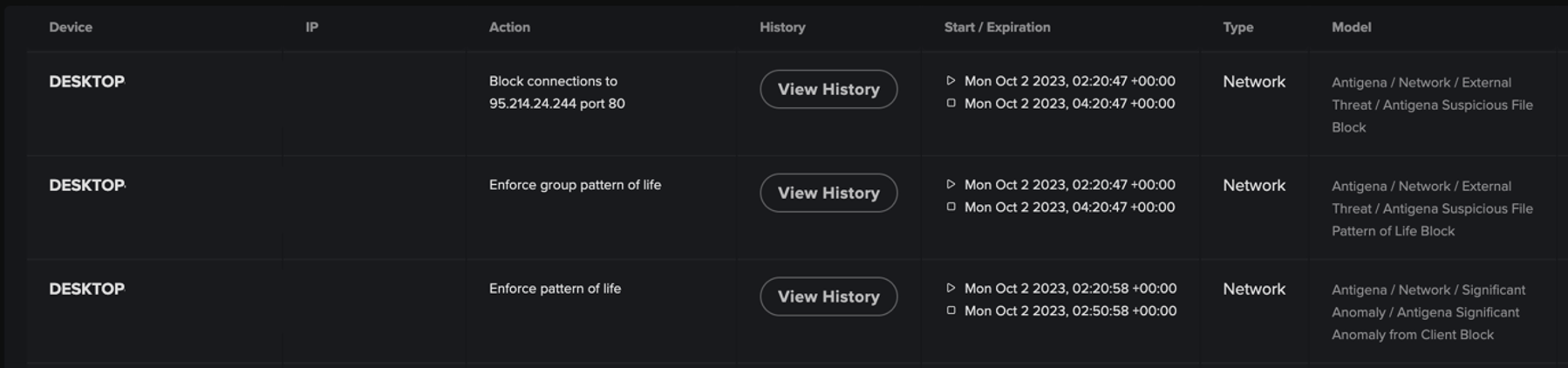

For example, following the detection of devices downloading malicious DLL and executable files, Darktrace RESPOND advised the customer to block specific connections to the relevant IP addresses and ports. However, as the device was seen attempting to download further files from other locations, RESPOND also suggested enforcing a ‘pattern of life’ on the device, meaning it would only be permitted to make connections that were part of its normal behavior. By imposing a pattern of life, Darktrace RESPOND ensures that a device cannot perform suspicious behavior, while not disrupting any legitimate business activity.
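As a rough illustration of the idea behind a ‘pattern of life’, the sketch below treats a device's previously observed destinations as a baseline and permits only connections that match it. This is a deliberately simplified assumption for explanatory purposes; the function names are hypothetical, and Darktrace's actual behavioral modelling is not described in this post and is not a static allowlist like this.

```python
from typing import Iterable, Set, Tuple

# A destination is modelled here as (host, port); an illustrative assumption.
Endpoint = Tuple[str, int]


def learn_baseline(history: Iterable[Endpoint]) -> Set[Endpoint]:
    """Build a baseline from destinations the device has contacted before."""
    return set(history)


def is_permitted(baseline: Set[Endpoint], connection: Endpoint) -> bool:
    """Allow a connection only if it matches the learned baseline."""
    return connection in baseline
```

Under this simplification, a device that normally contacts an internal mail server and a file share would keep that access, while a new connection to a rare external endpoint on port 4782 would be refused.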

Had RESPOND been configured to act autonomously, these mitigative actions would have been applied without any input from the customer’s security team and the Quasar compromise would have been contained in the first instance.

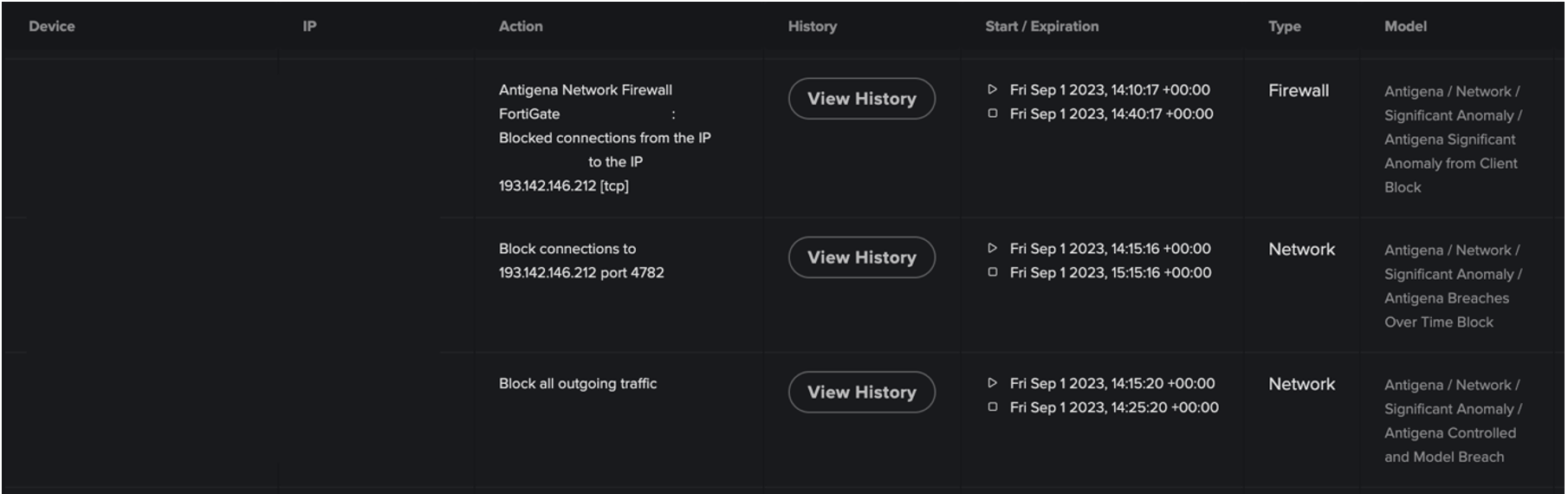

In another case, one customer affected by Quasar had enabled RESPOND to take autonomous action, whilst also integrating it with a firewall. Here, following the detection of a device connecting to a known Quasar IP address, RESPOND initially blocked it from making connections to the IP via the customer’s firewall. However, as the device continued to perform suspicious activity after this, RESPOND escalated its response by blocking all outgoing connections from the device, effectively preventing any C2 activity or downloads.
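The escalation seen in this case, from a targeted block to a block on all outgoing connections, can be sketched as a small state machine. The `Action` levels and the `escalate` function are hypothetical names used for illustration; they mirror the two steps described above and are not Darktrace RESPOND's actual logic or API.

```python
from enum import IntEnum


class Action(IntEnum):
    """Response levels, ordered from least to most restrictive."""
    NONE = 0
    BLOCK_ENDPOINT = 1      # block connections to the specific IP
    BLOCK_ALL_OUTGOING = 2  # block every outgoing connection from the device


def escalate(current: Action, suspicious_activity_seen: bool) -> Action:
    """Move one level up when suspicious activity is seen, capped at the maximum."""
    if not suspicious_activity_seen:
        return current
    return Action(min(current + 1, Action.BLOCK_ALL_OUTGOING))
```

Starting from `Action.NONE`, a first detection yields `BLOCK_ENDPOINT`, and continued suspicious activity yields `BLOCK_ALL_OUTGOING`. Stepping one level at a time keeps the initial response proportionate while still guaranteeing containment if the activity persists.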

Conclusion

When faced with a threat like Quasar that utilizes the infrastructure and tools of both legitimate services and other malware variants, it is essential for security teams to move beyond relying on existing knowledge of attack techniques when safeguarding their network. It is no longer enough for organizations to rely on past attacks to defend against the attacks of tomorrow.

Crucially, Darktrace’s unique approach to threat detection focuses on the anomaly, rather than relying on a static list of IoCs or “known bads” based on outdated threat intelligence. In the case of Quasar, alternative or future strains of the malware that utilize different IoCs and TTPs would still be identified by Darktrace as anomalous and immediately alerted on.

By learning the ‘normal’ for devices on a customer’s network, Darktrace DETECT can recognize the subtle deviations in a device’s behavior that could indicate an ongoing compromise. Darktrace RESPOND is subsequently able to follow this up with swift and targeted actions to contain the attack and prevent it from escalating further.

Credit to Nicole Wong, Cyber Analyst, and Vivek Rajan, Cyber Analyst

Appendices

Darktrace DETECT Model Breaches

- Anomalous Connection / Multiple Failed Connections to Rare Endpoint

- Anomalous Connection / Anomalous SSL without SNI to New External

- Anomalous Connection / Application Protocol on Uncommon Port

- Anomalous Connection / Rare External SSL Self-Signed

- Compromise / New or Repeated to Unusual SSL Port

- Compromise / Beaconing Activity To External Rare

- Compromise / High Volume of Connections with Beacon Score

- Compromise / Large Number of Suspicious Failed Connections

- Unusual Activity / Unusual External Activity

List of IoCs

IP:Port

193.142.146[.]212:4782 - Quasar C2 IP and default port

77.34.128[.]25:8080 - Quasar C2 IP

Domain

zayprostofyrim[.]zapto[.]org - Quasar C2 Botnet Endpoint

bittorrents[.]duckdns[.]org - Possible Quasar C2 endpoint

Certificate

CN=Quasar Server CA - Default certificate used by Quasar

Executable

Eppzjtedzmk[.]exe - Quasar executable

IP Address

95.214.24[.]244 - Quasar C2 IP

162.19.139[.]184 - Cryptocurrency Miner IP

41.233.139[.]145 - Possible Quasar C2 IP
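The IP-based indicators above are defanged with ‘[.]’ so they cannot be clicked or resolved accidentally. Before loading them into a blocklist or search tool, they need to be converted back and validated. The following is a minimal sketch using Python's standard `ipaddress` module; the `parse_ip_port` helper is a hypothetical name, and it assumes IPv4 entries in the `IP` or `IP:Port` form used in this list.

```python
import ipaddress
from typing import Optional, Tuple


def parse_ip_port(entry: str) -> Tuple[str, Optional[int]]:
    """Refang an 'IP' or 'IP:Port' indicator and validate the address.

    Assumes IPv4, since IPv6 addresses contain colons themselves.
    Raises ValueError if the address or port is not valid.
    """
    value = entry.replace("[.]", ".")
    host, _, port = value.partition(":")
    ip = ipaddress.ip_address(host.strip())  # raises ValueError if malformed
    return str(ip), (int(port) if port.strip() else None)
```

The helper takes only the indicator itself, so any trailing description (the text after ‘ - ’) should be removed first. It tolerates stray whitespace around the port and returns `None` for the port when an entry lists only an IP address.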

MITRE ATT&CK Mapping

Command and Control

T1090.002: External Proxy

T1071.001: Web Protocols

T1571: Non-Standard Port

T1001: Data Obfuscation

T1573: Encrypted Channel

T1071: Application Layer Protocol

Resource Development

T1584: Compromise Infrastructure

References

[1] https://thehackernews.com/2023/10/quasar-rat-leverages-dll-side-loading.html

[4] https://twitter.com/g0njxa/status/1691826188581298389

[7] https://www.cisa.gov/news-events/analysis-reports/ar18-352a

[9] https://threatfox.abuse.ch/ioc/891454/

[10] https://www.virustotal.com/gui/ip-address/41.233.139.145/relations

[11] https://raw.githubusercontent.com/stamparm/maltrail/master/trails/static/malware/asyncrat.txt

[12] https://sslbl.abuse.ch/ssl-certificates/signature/RedLineStealer/