What is the Marine Transportation System (MTS)?

The Marine Transportation System (MTS) plays a substantial role in U.S. commerce, military readiness, and economic security. Defined as critical national infrastructure, the MTS encompasses all aspects of maritime transportation, from ships and ports to the inland waterways and the rail and roadways that connect them.

MTS interconnected systems include:

- Waterways: Coastal and inland rivers, shipping channels, and harbors

- Ports: Terminals, piers, and facilities where cargo and passengers are transferred

- Vessels: Commercial ships, barges, ferries, and support craft

- Intermodal Connections: Railroads, highways, and logistics hubs that tie maritime transport into national and global supply chains

The Coast Guard plays a central role in ensuring the safety, security, and efficiency of the MTS, which supports over $5.4 trillion in annual economic activity. As digital systems increasingly support operations across the MTS, from crane control to cargo tracking, cybersecurity has become essential to protecting this lifeline of U.S. trade and infrastructure.

The MTS also enables international trade, making it a prime target for cyber threats ranging from ransomware gangs to nation-state actors.

To defend against growing threats, the United States Coast Guard (USCG) has moved from encouraging cybersecurity best practices to enforcing them, culminating in a new mandate that goes into effect on July 16, 2025. These regulations aim to secure the digital backbone of the maritime industry.

Why maritime ports are at risk

Modern ports blend legacy and modern OT, IoT, and IT systems, all digitally connected, to enable crane operations, container tracking, terminal storage, logistics, and remote maintenance.

Many of these systems were never designed with cybersecurity in mind, leaving them vulnerable to lateral movement and to spillover from disruptive ransomware attacks.

The convergence of business IT networks and operational infrastructure further expands the attack surface, especially with the rise of cloud adoption and unmanaged IoT and IIoT devices.

Cyber incidents in recent years have demonstrated how ransomware or other malicious activity can halt crane operations, disrupt logistics, and compromise safety at scale, threatening not only port operations but also national security and economic stability.

Relevant cyber-attacks on maritime ports

Maersk & Port of Los Angeles (2017 – NotPetya):

A ransomware attack crippled A.P. Moller-Maersk, the world’s largest shipping company. Operations at 17 ports, including the Port of Los Angeles, were halted due to system outages, causing weeks of logistical chaos.

Port of San Diego (2018 – Ransomware Attack):

A ransomware attack targeted the Port of San Diego, disrupting internal IT systems including public records, business services, and dockside cargo operations. While marine traffic was unaffected, commercial activity slowed significantly during recovery.

Port of Houston (2021 – Nation-State Intrusion):

A suspected nation-state actor exploited a known vulnerability in a Port of Houston web application to gain access to its network. While the attack was reportedly thwarted, it triggered a federal investigation and highlighted the vulnerability of maritime systems.

Jawaharlal Nehru Port Trust, India (2022 – Ransomware Incident):

India’s largest container port experienced disruptions due to a ransomware attack affecting operations and logistics systems. Container handling and cargo movement slowed as IT systems were taken offline during recovery efforts.

A regulatory shift: From guidance to enforcement

Since the Maritime Transportation Security Act (MTSA) of 2002, ports have been required to develop and maintain security plans. Cybersecurity formally entered the regulatory fold in 2020 with revisions to 33 CFR Parts 105 and 106, requiring port authorities to assess and address computer system vulnerabilities.

In January 2025, the USCG finalized new rules to enforce cybersecurity practices across the MTS. Key elements include (but are not limited to):

- A dedicated cyber incident response plan (PR.IP-9)

- Routine cybersecurity risk assessments and exercises (ID.RA)

- Designation of a cybersecurity officer and regular workforce training (section 3.1)

- Controls for access management, segmentation, logging, and encryption (PR.AC-1:7)

- Supply chain risk management (ID.SC)

- Incident reporting to the National Response Center

Port operators are encouraged to align their programs with the NIST Cybersecurity Framework (CSF 2.0) and NIST SP 800-82r3, which provide comprehensive guidance for IT and OT security in industrial environments.

How Darktrace can support maritime & ports

Unified IT + OT + Cloud coverage

Maritime ports operate in hybrid environments spanning business IT systems (finance, HR, ERP), industrial OT (cranes, gates, pumps, sensors), and an increasing array of cloud and SaaS platforms.

Darktrace is the only vendor that provides native visibility and threat detection across OT/IoT, IT, cloud, and SaaS environments — all in a single platform. This means:

- Cranes and other physical process control networks are monitored in the same dashboard as Active Directory and Office 365.

- Threats that start in the cloud (e.g., phishing, SaaS token theft) and pivot or attempt to pivot into OT are caught early — eliminating blind spots that siloed tools miss.

This unification is critical to meeting USCG requirements for network-wide monitoring, risk identification, and incident response.

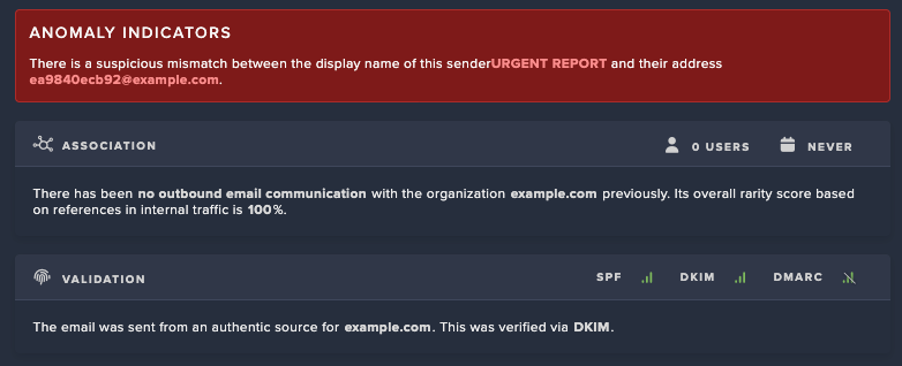

AI that understands your environment. Not just known threats

Darktrace’s AI doesn’t rely on rules or signatures. Instead, it uses Self-Learning AI™ that builds a unique “pattern of life” for every device, protocol, user, and network segment, whether it’s a crane router, PLC, SCADA server, workstation, or Linux file server.

- No predefined baselines or manual training

- Real-time anomaly detection for zero-days, ransomware, and supply chain compromise

- Continuous adaptation to new devices, configurations, and operations

This approach is critical in diverse, distributed OT environments, where change and anomalous activity on the network are more frequent. It also dramatically reduces the time and expertise needed to classify and inventory assets, even for unknown or custom-built systems.
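To make the idea of a per-device baseline concrete, here is a minimal sketch of behavioral anomaly detection in general. It is not Darktrace's algorithm, which the article does not describe. It assumes a single numeric metric per device (for example, bytes sent per minute) and uses a running mean and standard deviation (Welford's method) with a z-score threshold. The class names, the threshold of 3.0, and the minimum of 10 samples are all illustrative assumptions.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class RunningStats:
    """Online mean and variance for one device's metric (Welford's method)."""
    count: int = 0
    mean: float = 0.0
    m2: float = 0.0  # running sum of squared deviations from the mean

    def update(self, value: float) -> None:
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (value - self.mean)

    def stddev(self) -> float:
        if self.count < 2:
            return 0.0
        return math.sqrt(self.m2 / (self.count - 1))


class DeviceBaselines:
    """Hypothetical per-device baseline tracker; all names are illustrative."""

    def __init__(self, threshold: float = 3.0, min_samples: int = 10):
        self.threshold = threshold      # assumed z-score cutoff
        self.min_samples = min_samples  # learn quietly before judging
        self.stats = defaultdict(RunningStats)

    def observe(self, device: str, value: float) -> bool:
        """Return True if value deviates from this device's own history.

        The value is added to the baseline afterwards, so the baseline
        keeps adapting as the device's behavior changes.
        """
        stats = self.stats[device]
        anomalous = False
        if stats.count >= self.min_samples:
            sd = stats.stddev()
            if sd > 0 and abs(value - stats.mean) / sd > self.threshold:
                anomalous = True
        stats.update(value)
        return anomalous


baselines = DeviceBaselines()
# A hypothetical crane router normally sends roughly 100 KB per minute.
for sample in [98.0, 102.0] * 10:
    baselines.observe("crane-router", sample)
# A sudden burst stands out against that device's own history.
burst_flagged = baselines.observe("crane-router", 5000.0)
```

Each device is compared only with its own history, so the same traffic volume can be normal for a file server and anomalous for a PLC. A real system would track many features per device rather than a single number.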

Supporting incident response requirements

A key USCG requirement is that cybersecurity plans must support effective incident response.

Key expectations include:

- Defined response roles and procedures: Personnel must know what to do and when (RS.CO-1).

- Timely reporting: Incidents must be reported and categorized according to established criteria (RS.CO-2, RS.AN-4).

- Effective communication: Information must be shared internally and externally, including voluntary collaboration with law enforcement and industry peers (RS.CO-3 through RS.CO-5).

- Thorough analysis: Alerts must be investigated, impacts understood, and forensic evidence gathered to support decision-making and recovery (RS.AN-1 through RS.AN-5).

- Swift mitigation: Incidents must be contained and resolved efficiently, with newly discovered vulnerabilities addressed or documented (RS.MI-1 through RS.MI-3).

- Ongoing improvement: Organizations must refine their response plans using lessons learned from past incidents (RS.IM-1 and RS.IM-2).

That means detections need to be clear, accurate, and actionable.

Darktrace cuts through the noise using AI that prioritizes only high-confidence incidents and provides natural-language narratives and investigative reports that explain:

- What’s happening, where it’s happening, when it’s happening

- Why it’s unusual

- How to respond

Result: Port security teams, often lean and multitasking, can meet USCG response-time expectations and reporting needs without scaling headcount or triaging hundreds of alerts.

Built-for-edge deployment

Maritime environments are constrained. Traditional SaaS deployment models are often unsuitable for tugboats, cranes, or air-gapped terminal systems.

Darktrace builds and maintains its own ruggedized, purpose-built appliances and unique virtual deployment options that:

- Deploy directly into crane networks or terminal enclosures

- Are drop-in ready, requiring no configuration or tuning

- Support secure over-the-air updates and fleet management

- Operate without cloud dependency, supporting isolated and air-gapped systems

Use case: Multiple ports have been able to deploy Darktrace directly into the crane’s switch enclosure, securing lateral movement paths without interfering with the crane control software itself.

Segmentation enforcement & real-time threat containment

Darktrace visualizes real-time connectivity and attack pathways across IT, OT, and IoT, and it integrates with firewalls (e.g., Fortinet, Cisco, Palo Alto) to enforce segmentation using AI insights, alongside Darktrace’s own native autonomous and human-confirmed response capabilities.

Benefits of autonomous and human-confirmed response:

- Auto-isolate rogue devices before the threat can escalate

- Quarantine suspicious connectivity with confidence that operations won’t be halted

- Autonomously buy time for human responders during off-hours or holidays

This ensures segmentation isn't just documented: if it fails or is exploited, the response acts as a compensating control.
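As a rough illustration of checking documented segmentation against what is actually observed, the sketch below compares network flows with an allow-list of zone-to-zone paths. It is a generic example, not a Darktrace or firewall vendor API. The zone names, device names, and the `find_violations` helper are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical segmentation policy: permitted (source zone, destination zone) pairs.
ALLOWED_PATHS = {
    ("business_it", "dmz"),
    ("dmz", "terminal_ot"),
    ("terminal_ot", "crane_ot"),
}

# Hypothetical asset inventory mapping each device to its zone.
ZONE_BY_DEVICE = {
    "hr-laptop": "business_it",
    "erp-server": "business_it",
    "jump-host": "dmz",
    "terminal-scada": "terminal_ot",
    "crane-plc-1": "crane_ot",
}


@dataclass(frozen=True)
class Flow:
    """One observed connection between two devices."""
    src: str
    dst: str


def find_violations(flows, zone_by_device, allowed_paths):
    """Return (src, dst, src_zone, dst_zone) for flows the policy does not allow."""
    violations = []
    for flow in flows:
        src_zone = zone_by_device.get(flow.src, "unknown")
        dst_zone = zone_by_device.get(flow.dst, "unknown")
        # Traffic inside a single known zone is outside the scope of this check.
        if src_zone == dst_zone and src_zone != "unknown":
            continue
        if (src_zone, dst_zone) not in allowed_paths:
            violations.append((flow.src, flow.dst, src_zone, dst_zone))
    return violations


observed = [
    Flow("hr-laptop", "erp-server"),      # same zone, ignored
    Flow("jump-host", "terminal-scada"),  # allowed path
    Flow("hr-laptop", "crane-plc-1"),     # business IT straight into crane OT
    Flow("rogue-device", "crane-plc-1"),  # device missing from the inventory
]
violations = find_violations(observed, ZONE_BY_DEVICE, ALLOWED_PATHS)
```

Here the last two flows are reported: one crosses zones the policy does not connect, and the other comes from a device that is not in the inventory. Each violation is a candidate for a containment action or for a correction to the policy itself.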

No reliance on third parties or external connectivity

Darktrace’s supply chain integrity is a core part of its value to critical infrastructure customers. Unlike solutions that rely on indirect data collection or third-party appliances, Darktrace:

- Uses in-house engineered sensors and appliances

- Does not require transmission of data to or from the cloud

This ensures confidence in both your cyber visibility and the security of the tools you deploy.

See examples of how Darktrace stopped supply chain attacks:

- Cyberhaven supply chain attack

- 3CX supply chain attack

- Applying zero-trust in critical infrastructure supply chains

Readiness for USCG and beyond

With a self-learning system that adapts to each unique port environment, Darktrace helps maritime operators not just comply but build lasting cyber resilience in a high-threat landscape.

Cybersecurity is no longer optional for U.S. ports; it is operationally and nationally critical. Darktrace delivers the intelligence, automation, and precision needed to meet USCG requirements and protect the digital lifeblood of the modern port.
