When is the best time to be hit with a cyber-attack?

The answer that springs to mind for most is ‘Never’; however, in today’s threat landscape, this is often wishful thinking. The next best answer is ‘When we’re ready for it’. Yet this does not take into account the intentions of those committing attacks. The reality is that, from an attacker’s point of view, the best time for a cyber-attack is when no one is around to stop it.

When do cyber-attacks happen?

Previous analysis from Mandiant reveals that over half of ransomware compromises occur outside of working hours, a trend Darktrace has also witnessed in the past two years [1]. This is deliberate: the fewer people that are online, the harder it is to get hold of security teams and the higher the likelihood of an attacker achieving their goals. Given this landscape, it is clear that autonomous response is more important than ever. In the absence of human resources, autonomous security can fill the gap long enough for IT teams to begin remediation.

This blog will detail an incident where autonomous response provided by Darktrace RESPOND would have entirely prevented an infection attempt, despite it occurring in the early hours of the morning. Because the customer had RESPOND in human confirmation mode (AI response must first be approved by a human), the attempt by XorDDoS was ultimately successful. Given the timing of the attack, there was likely no one around to confirm Darktrace RESPOND actions and prevent it.

XorDDoS Primer

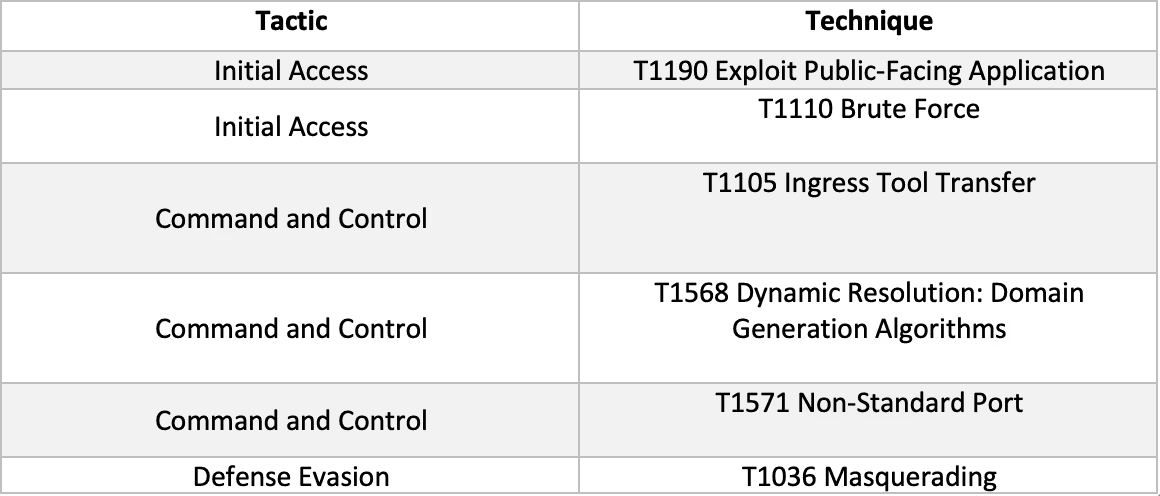

XorDDoS is botnet malware: it infects devices so that they can be controlled as a collective to carry out specific actions. In the case of XorDDoS, the infected devices are used to carry out denial of service attacks. This year, Microsoft has reported a substantial increase in activity from this malware strain, with an increased focus on Linux-based operating systems [2]. XorDDoS most commonly finds its way onto systems via SSH brute-forcing and, once deployed, encrypts its traffic with an XOR cipher. XorDDoS has also been known to download additional payloads such as backdoors and cryptominers. Needless to say, this is not something you want on a corporate network.
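To make the XOR cipher mentioned above concrete, here is a minimal Python sketch of a repeating-key XOR. The key and message are hypothetical values chosen purely for illustration; they are not taken from any XorDDoS sample, and the sketch does not claim to reproduce the malware's actual routine.

```python
from itertools import cycle


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR every byte of `data` with a repeating `key`."""
    if not key:
        raise ValueError("key must not be empty")
    return bytes(b ^ k for b, k in zip(data, cycle(key)))


# Hypothetical key and message, for illustration only.
KEY = b"example-key"
plaintext = b"hello c2 server"

ciphertext = xor_bytes(plaintext, KEY)
# XOR is its own inverse: applying the same key again restores the input.
recovered = xor_bytes(ciphertext, KEY)
```

Because the same operation both encrypts and decrypts, this kind of cipher is cheap to implement, which is one plausible reason it is popular for obscuring malware traffic. It offers little protection once the key is known.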

Initial Intrusion of XorDDoS

The incident began with a device first coming online on 10th August. The device appeared to be internet-facing, and Darktrace saw hundreds of incoming SSH connections to the device from a variety of endpoints. Over the course of the next five days, the device received thousands of failed SSH connections from several IP addresses that, according to OSINT, may be associated with web scanners [3]. Successful SSH connections were seen from internal IP addresses as well as IP addresses associated with IT solutions relevant to Asia-Pacific (the customer’s geographic location). At midnight on 15th August, the first successful SSH connection occurred from an IP address that has been associated with web scanning. This connection lasted around an hour and a half, and the external IP uploaded around 3.3 MB of data to the client device. Given all of this, and what the industry knows about XorDDoS, it is likely that the client device had SSH exposed to the Internet, which was then brute-forced for initial access.
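The pattern described above, many failed SSH attempts followed by a success from the same external source, can be sketched as a simple counting heuristic. This is not how Darktrace's models work; the `SshEvent` record, the threshold, and the sample IP addresses (taken from documentation ranges) are all assumptions made for illustration.

```python
from collections import Counter
from typing import Iterable, List, NamedTuple


class SshEvent(NamedTuple):
    """Hypothetical, simplified SSH log record."""
    source_ip: str
    success: bool


def success_after_failures(events: Iterable[SshEvent], threshold: int = 50) -> List[str]:
    """Return source IPs that logged in after at least `threshold` failed attempts."""
    failures: Counter = Counter()
    flagged: List[str] = []
    for event in events:
        if not event.success:
            failures[event.source_ip] += 1
        elif failures[event.source_ip] >= threshold and event.source_ip not in flagged:
            flagged.append(event.source_ip)
    return flagged


# Sample data: one noisy source that eventually succeeds, one clean login.
events = [SshEvent("203.0.113.10", False)] * 60
events.append(SshEvent("203.0.113.10", True))
events.append(SshEvent("198.51.100.7", True))

suspicious = success_after_failures(events)
```

A real deployment would also need a time window and would have to account for attempts spread across several source addresses, as happened in this incident.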

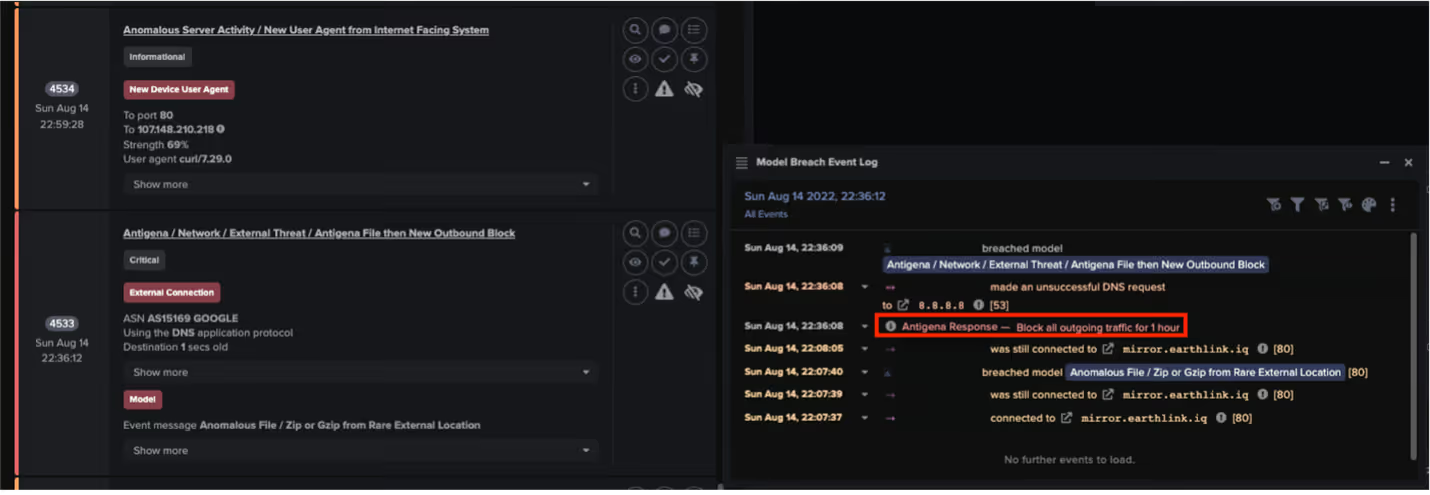

After a few hours of dwell time, the device downloaded a ZIP file from an Iraqi mirror site, mirror[.]earthlink[.]iq, at around 6 AM in the customer’s time zone. The endpoint had only been seen once before and was 100% rare for the network. Since no OSINT was available for this particular endpoint or for the ZIP files downloaded from the mirror site, the detection was based on the unusualness of the download.

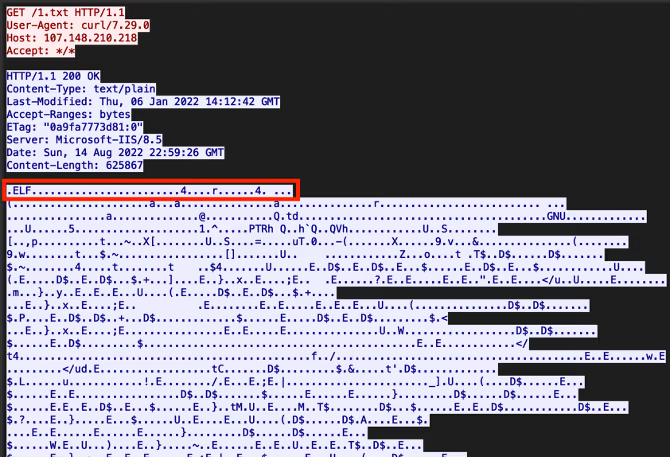

Following this, Darktrace saw the device make a curl request to the external IP address 107.148.210[.]218. This was highlighted as the user agent associated with curl had not been seen on the device before, and the connection was made directly to an IP address without a hostname (suggesting that the connection was scripted). The URIs of these requests were ‘1.txt’ and ‘2.txt’.

The ‘.txt’ extensions on the URIs were deceptive: both turned out to be executable files masquerading as text files. OSINT on both of the hashes revealed that the files were likely associated with XorDDoS. Additionally, judging from packet captures of the connection, the true file type appeared to be ELF (‘.ELF’), the standard executable format on Linux. As XorDDoS primarily affects Linux devices, this would make sense as the true format of the payload.
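A mismatch between a file's claimed extension and its real type can often be spotted from the first few bytes of the content. ELF files begin with the four magic bytes `0x7f 'E' 'L' 'F'`. The following is a minimal sketch of that check; the function name and the sample payloads are hypothetical and are not taken from the incident.

```python
ELF_MAGIC = b"\x7fELF"  # first four bytes of every ELF file


def is_masqueraded_elf(uri: str, body: bytes) -> bool:
    """True if the URI claims a text file but the content starts like an ELF binary."""
    claims_text = uri.lower().endswith(".txt")
    return claims_text and body.startswith(ELF_MAGIC)


# Hypothetical payloads for illustration.
fake_text = ELF_MAGIC + b"\x02\x01\x01" + b"\x00" * 9
real_text = b"just some plain text\n"

masqueraded = is_masqueraded_elf("/1.txt", fake_text)
benign = is_masqueraded_elf("/notes.txt", real_text)
```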

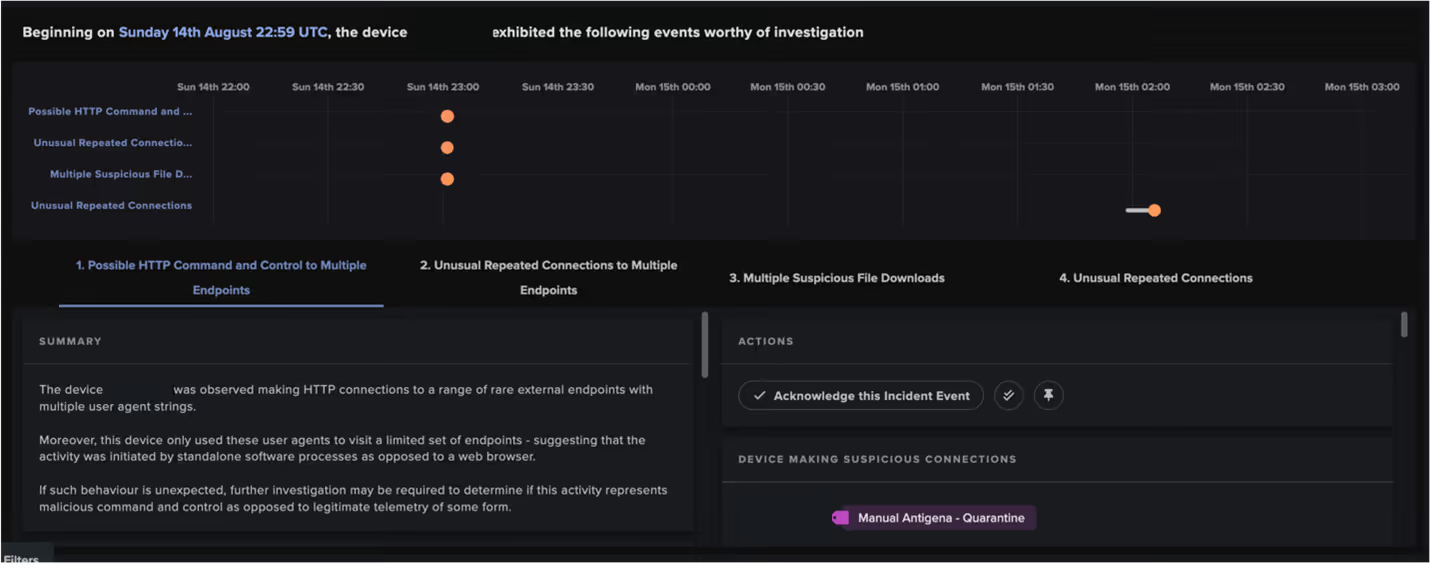

C2 Connections

Immediately after the ‘.ELF’ download, Darktrace saw the device attempting C2 connections. This included connections to DGA-like domains on unusual ports such as 1525 and 8993. Luckily, the client’s firewall seems to have blocked these connections, but that didn’t stop XorDDoS. XorDDoS continued to attempt connections to C2 domains, which triggered several Proactive Threat Notifications (PTNs) that were alerted to the customer by Darktrace’s SOC. Following the PTNs, the client manually quarantined the device a few hours after the initial breach. This delay in action was likely due to the early-morning timing, with the customer’s employees not yet online. After the device was quarantined, Darktrace still saw XorDDoS attempting C2 connections. In all, hundreds of thousands of C2 connections were detected before the device was removed from the network sometime on 7th September.
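One common, rough way to spot DGA-like domains is to measure how random the leftmost label looks, for example with Shannon entropy. The sketch below is only an illustration of that idea and is not the method Darktrace uses; the length and entropy thresholds and the example domains are assumptions.

```python
import math
from collections import Counter


def shannon_entropy(text: str) -> float:
    """Shannon entropy of `text` in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    counts = Counter(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_dga_like(domain: str, min_length: int = 10, threshold: float = 3.5) -> bool:
    """Flag domains whose leftmost label is long and has high character entropy."""
    label = domain.lower().split(".")[0]
    return len(label) >= min_length and shannon_entropy(label) >= threshold


# Hypothetical domains for illustration.
random_looking = looks_dga_like("xkq7z2vb9w4hd3tn.example")
ordinary = looks_dga_like("mail.example")
```

Entropy alone produces false positives (for example, CDN hostnames), so in practice it would be combined with other signals such as domain rarity, unusual destination ports, and beaconing behaviour.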

An Alternate Timeline

Although the device was ultimately removed, this attack would have been entirely prevented had RESPOND/Network not been in human confirmation mode. Autonomous response would have kicked in once the device downloaded the ZIP file from the Iraqi mirror site and blocked all outgoing connections from the breached device for an hour.

The model breach in Figure 3 would have prevented the download of the XorDDoS executables, and then prevented the subsequent C2 connections. This hour would have been crucial, as it would have given members of the customer’s security team enough time to get back online should the compromised device have attempted anything else. With everyone attentive, it is unlikely that this activity would have lasted as long as it did. Had the attack been allowed to progress further, the infected device would at the very least have been an unwilling participant in a future DDoS attack. Additionally, a backdoor could have been placed on the device, and additional malware such as cryptojackers might have been deployed.

Conclusions

Unfortunately, we do not exist in the alternate timeline in which autonomous response prevented this whole series of events. Luckily, although it was not in place, the PTN alerts provided by Darktrace’s SOC team still sped up the process of remediation in an event that was never intended to be discovered, given the time at which it occurred. Unusual times of attack are not limited to ransomware, so organizations need to have measures in place for the times that are most inconvenient to them but most convenient to attackers. With Darktrace/RESPOND, however, this is just one click away.

Thanks to Brianna Leddy for their contribution.

Appendices

Darktrace Model Detections

Below is a list of model breaches in order of trigger. The Proactive Threat Notification models are in bold and only the first Antigena [RESPOND] breach that would have prevented the initial compromise has been included. A manual quarantine breach has also been added to show when the customer began remediation.

- Compliance / Incoming SSH, August 12th 23:39 GMT +8

- Anomalous File / Zip or Gzip from Rare External Location, August 15th 6:07 GMT +8

- Antigena / Network / External Threat / Antigena File then New Outbound Block, August 15th 6:36 GMT +8 [part of the RESPOND functionality]

- Anomalous Connection / New User Agent to IP Without Hostname, August 15th 6:59 GMT +8

- Anomalous File / Numeric Exe Download, August 15th 6:59 GMT +8

- Anomalous File / Masqueraded File Transfer, August 15th 6:59 GMT +8

- Anomalous File / EXE from Rare External Location, August 15th 6:59 GMT +8

- Device / Internet Facing Device with High Priority Alert, August 15th 6:59 GMT +8

- Compromise / Rare Domain Pointing to Internal IP, August 15th 6:59 GMT +8

- Device / Initial Breach Chain Compromise, August 15th 6:59 GMT +8

- Compromise / Large Number of Suspicious Failed Connections, August 15th 7:01 GMT +8

- Compromise / High Volume of Connections with Beacon Score, August 15th 7:04 GMT +8

- Compromise / Fast Beaconing to DGA, August 15th 7:04 GMT +8

- Compromise / Suspicious File and C2, August 15th 7:04 GMT +8

- Antigena / Network / Manual / Quarantine Device, August 15th 8:54 GMT +8 [part of the RESPOND functionality]
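The timestamps in the list above can be used to estimate how much time separated the point where RESPOND would have acted from what actually happened. The following sketch does that arithmetic in Python. The year is not given in the list, so the value used here is an assumption; it does not affect the result because only same-day differences are computed. The dictionary keys are shorthand labels, not Darktrace model names.

```python
from datetime import datetime, timedelta, timezone

TZ = timezone(timedelta(hours=8))  # GMT +8, as stated in the list
YEAR = 2022  # assumption: the list does not state the year


def on_aug_15(hour: int, minute: int) -> datetime:
    return datetime(YEAR, 8, 15, hour, minute, tzinfo=TZ)


# Times copied from the model breach list above.
events = {
    "zip_download": on_aug_15(6, 7),
    "respond_block_pending": on_aug_15(6, 36),
    "payload_download": on_aug_15(6, 59),
    "manual_quarantine": on_aug_15(8, 54),
}


def minutes_between(start: str, end: str) -> int:
    """Whole minutes elapsed between two labelled events."""
    return int((events[end] - events[start]).total_seconds() // 60)


block_to_payload = minutes_between("respond_block_pending", "payload_download")
block_to_quarantine = minutes_between("respond_block_pending", "manual_quarantine")
```

Here `block_to_payload` comes to 23 minutes and `block_to_quarantine` to 138 minutes. On these figures, the payload download falls within the hour that an autonomous block would have covered, while the manual quarantine came more than two hours after the RESPOND model breach.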

List of IOCs

MITRE ATT&CK Mapping

Reference List

[1] They Come in the Night: Ransomware Deployment Trends

[2] Rise in XorDdos: A deeper look at the stealthy DDoS malware targeting Linux devices

[3] Alien Vault: Domain Navicatadvvr & https://www.virustotal.com/gui/domain/navicatadvvr.com & https://maltiverse.com/hostname/navicatadvvr.com
