Late on a Saturday evening, a physical security company in the US was attacked after cyber-criminals exploited an exposed RDP server. By Sunday, all of the organization’s internal services had become unusable. This blog unpacks the attack and the dangers of open RDP ports.

What is RDP?

With the shift to remote working, IT teams have relied on remote access tools to manage corporate devices and keep the show running. Remote Desktop Protocol (RDP) is a Microsoft protocol which enables administrators to access and control desktop computers remotely. Since it gives the user complete control over the device, it is a valuable entry point for threat actors.

‘RDP shops’ selling credentials on the Dark Web have been around for years. xDedic, one of the most notorious crime forums, which once boasted over 80,000 hacked servers for sale, was finally shut down by the FBI and Europol in 2019, five years after it was founded. Selling RDP access is a booming industry because it provides immediate entry into an organization, removing the need to design a phishing email, develop malware, or manually search for zero-days and open ports. For less than $5, an attacker can purchase direct access to their target organization.

In the months following the COVID-19 outbreak, the number of exposed RDP endpoints increased by 127%. RDP usage surged as companies adapted to teleworking conditions, and it became almost impossible for traditional security tools to distinguish between everyday legitimate use of RDP and its exploitation. This led to a dramatic spike in successful server-side attacks. According to the UK’s National Cyber Security Centre, RDP is now the single most common attack vector used by cyber-criminals – particularly ransomware gangs.

Breakdown of an RDP compromise

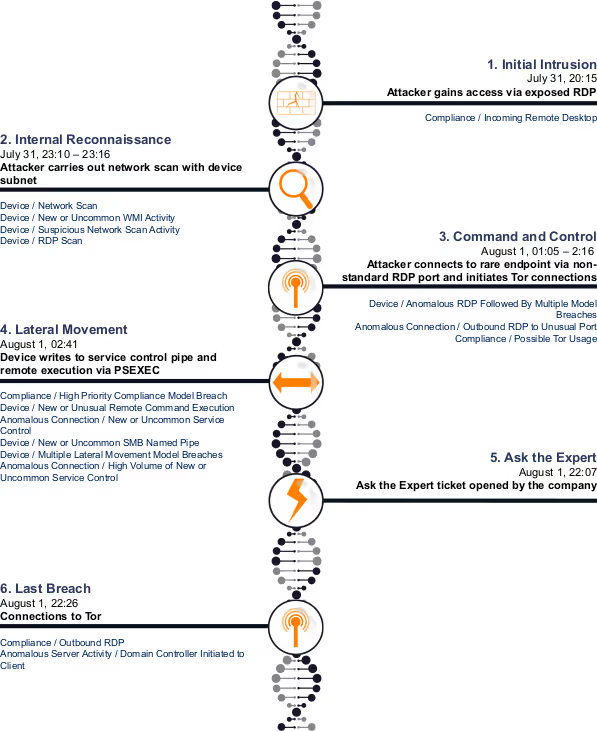

Initial intrusion

In this real-world attack, the target organization had around 7,500 devices active, one of which was an Internet-facing server with TCP port 3389 – the default port for RDP – open. In other words, the server was configured to accept inbound connections from the Internet on that port.
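To make "open" concrete, here is a minimal Python sketch of how a defender might check whether a TCP port on one of their own hosts completes a handshake. The function name `is_tcp_port_open` and its defaults are illustrative assumptions, not part of any tool mentioned in this post, and a completed handshake only shows that something is listening, not that the service is RDP.

```python
import socket

RDP_DEFAULT_PORT = 3389  # default TCP port for RDP


def is_tcp_port_open(host: str, port: int = RDP_DEFAULT_PORT,
                     timeout: float = 2.0) -> bool:
    """Return True if a TCP handshake to host:port completes within the timeout."""
    try:
        # create_connection performs the full TCP handshake, then we close it.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

Run from outside the corporate network against an address you own, a `True` result for port 3389 suggests the server is reachable in the same way the compromised server was here.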

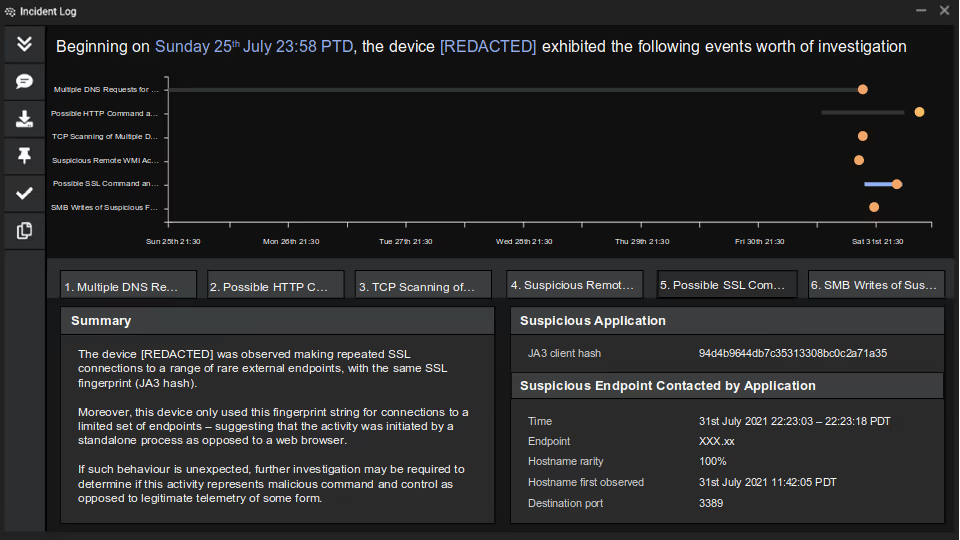

Darktrace detected a successful incoming RDP connection from a rare external endpoint, which utilized a suspicious authentication cookie. Given that the device was subject to a large volume of external RDP connections, it is likely the attacker brute-forced their way in, though they could have used an exploit or bought credentials off the Dark Web.

As incoming connections on port 3389 to this service were commonplace and expected as part of normal business, the connection was not flagged by any other security tool.
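A large volume of inbound RDP connections from a single source is one rough indicator of brute-forcing. The sketch below is a fixed-threshold heuristic for illustration only; it is not how Darktrace's models work. The event format (timestamp in seconds, source IP), the function name `sources_exceeding_rate`, and the default threshold and window are all assumptions.

```python
from collections import defaultdict


def sources_exceeding_rate(events, threshold=20, window_seconds=60.0):
    """Return the source IPs with at least `threshold` connection
    attempts inside any sliding window of `window_seconds`.

    `events` is an iterable of (timestamp_seconds, source_ip) pairs.
    """
    by_source = defaultdict(list)
    for timestamp, source in events:
        by_source[source].append(timestamp)

    flagged = set()
    for source, stamps in by_source.items():
        stamps.sort()
        start = 0  # index of the oldest attempt still inside the window
        for end, current in enumerate(stamps):
            while current - stamps[start] > window_seconds:
                start += 1
            if end - start + 1 >= threshold:
                flagged.add(source)
                break
    return flagged
```

A static threshold like this illustrates the difficulty described above: on a server where external RDP connections are routine, any fixed limit is either noisy or easy to stay under.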

Internal reconnaissance

Following the initial compromise, the device was seen engaging in network scanning activity within its own subnet to escalate access. After the scan, the device made Windows Management Instrumentation (WMI) connections to multiple devices over DCE-RPC, which triggered multiple Darktrace alerts.
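Scanning within a subnet typically shows up as one device contacting many distinct neighbours. The following is a simplified sketch of that idea, assuming flow records are available as (source IP, destination IP) pairs; the function name `find_subnet_scanners` and the threshold are hypothetical and chosen for illustration.

```python
import ipaddress
from collections import defaultdict


def find_subnet_scanners(flows, subnet, min_distinct_targets=10):
    """Map each source inside `subnet` to the number of distinct hosts
    in the same subnet it contacted, keeping only sources that reached
    at least `min_distinct_targets` hosts.

    `flows` is an iterable of (source_ip, destination_ip) string pairs.
    """
    network = ipaddress.ip_network(subnet)
    targets = defaultdict(set)
    for src, dst in flows:
        src_ip = ipaddress.ip_address(src)
        dst_ip = ipaddress.ip_address(dst)
        # Only count traffic that stays inside the device's own subnet.
        if src_ip in network and dst_ip in network and src_ip != dst_ip:
            targets[src].add(dst)
    return {
        src: len(dsts)
        for src, dsts in targets.items()
        if len(dsts) >= min_distinct_targets
    }
```

For example, `find_subnet_scanners(flows, "10.0.0.0/24")` would report any device in that range that touched ten or more of its neighbours in the supplied flows.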

Command and control (C2)

The device then made a new RDP connection on a non-standard port, using an administrative authentication cookie to an endpoint which had never been seen on the network. Tor connections were observed after this point, indicating potential C2 communication.
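The pattern described here (outbound RDP, non-standard port, previously unseen endpoint) can be expressed as a simple filter. This sketch assumes a sensor has already labelled each flow's protocol, and that the internal address range is `10.0.0.0/8`; the `Flow` type, the `protocol` label, and the function name are all hypothetical.

```python
import ipaddress
from dataclasses import dataclass

RDP_DEFAULT_PORT = 3389
# Assumed internal address range; adjust to the real network layout.
INTERNAL_NET = ipaddress.ip_network("10.0.0.0/8")


@dataclass(frozen=True)
class Flow:
    src: str
    dst: str
    dst_port: int
    protocol: str  # label assigned by a hypothetical network sensor


def unusual_outbound_rdp(flows, known_destinations):
    """Return flows that are RDP, leave the internal network, use a
    non-default port, and target a destination not seen before."""
    hits = []
    for flow in flows:
        outbound = (
            ipaddress.ip_address(flow.src) in INTERNAL_NET
            and ipaddress.ip_address(flow.dst) not in INTERNAL_NET
        )
        if (
            flow.protocol == "rdp"
            and outbound
            and flow.dst_port != RDP_DEFAULT_PORT
            and flow.dst not in known_destinations
        ):
            hits.append(flow)
    return hits
```

Each condition alone is weak evidence; it is the combination, on a device that has just received an anomalous inbound RDP connection, that makes the activity stand out.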

Lateral movement

The attacker then attempted lateral movement via SMB service control pipes and PsExec to five devices within the breached device’s subnet, which were likely identified during the network scan.

By using native Windows admin tools (PsExec, WMI, and svcctl) for lateral movement, the attacker managed to ‘live off the land’, evading detection from the rest of the security stack.

Ask the Expert

The organization’s own internal services were unavailable, so they reached out to Darktrace’s 24/7 Ask the Expert service. Darktrace’s cyber experts quickly determined the scope and nature of the compromise using the AI and began the remediation process. As a result, the threat was neutralized before the attacker could achieve their objectives, which may have included crypto-mining, deploying ransomware, or exfiltrating sensitive data.

RDP vulnerability: Dangers of exposed servers

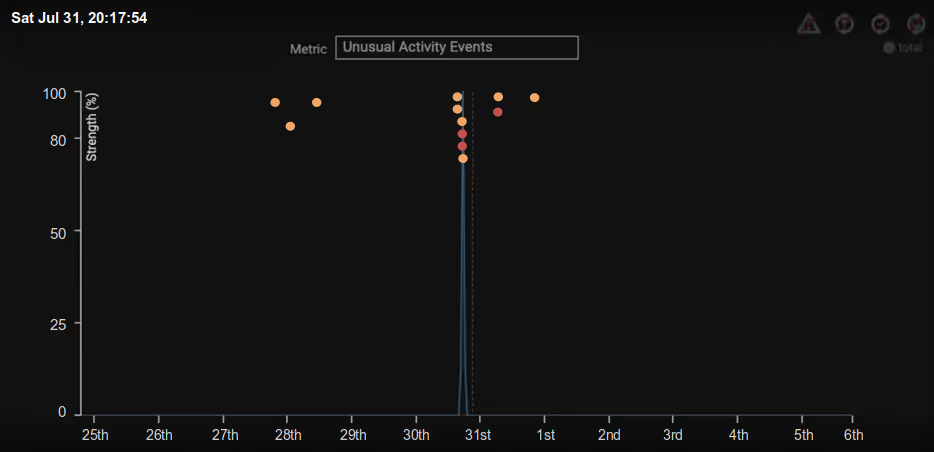

Prior to the events described above, Darktrace had observed incoming connections on RDP and SQL from a large variety of rare external endpoints, suggesting that the server had been probed many times before. When unnecessary services are left open to the Internet, compromise is inevitable – it is simply a matter of time.

This is especially true of RDP. In this case, the attacker managed to successfully carry out reconnaissance and open external communication all through their initial access to the RDP port. Threat actors are always looking for a way in, so what could be considered a compliance issue can easily, and quickly, devolve into compromise.

Out of control remote control

The attack happened out of hours – at a time when the security team were off work enjoying their Saturday evenings – and it progressed at remarkable speed, escalating from initial intrusion to lateral movement in less than seven hours. It is very common for attackers to exploit these human vulnerabilities, moving fast and remaining undetected until the IT team are back at their desks on Monday morning.

It is for this reason that a security solution which does not sleep – and which can detect and autonomously respond to threats around the clock – is critical. Self-Learning AI can keep up with threats which escalate at machine speed, stopping them at every turn.

Thanks to Darktrace analyst Steven Sosa for his insights on the above threat find.


Darktrace model detections:

- Compliance / Incoming Remote Desktop
- Device / Network Scan
- Device / New or Uncommon WMI Activity
- Device / Suspicious Network Scan Activity
- Device / RDP Scan
- Device / Anomalous RDP Followed By Multiple Model Breaches
- Anomalous Connection / Outbound RDP to Unusual Port
- Compliance / Possible Tor Usage
- Compliance / High Priority Compliance Model Breach
- Device / New or Unusual Remote Command Execution
- Anomalous Connection / New or Uncommon Service Control
- Device / New or Uncommon SMB Named Pipe
- Device / Multiple Lateral Movement Model Breaches
- Anomalous Connection / High Volume of New or Uncommon Service Control
- Compliance / Outbound RDP
- Anomalous Server Activity / Domain Controller Initiated to Client